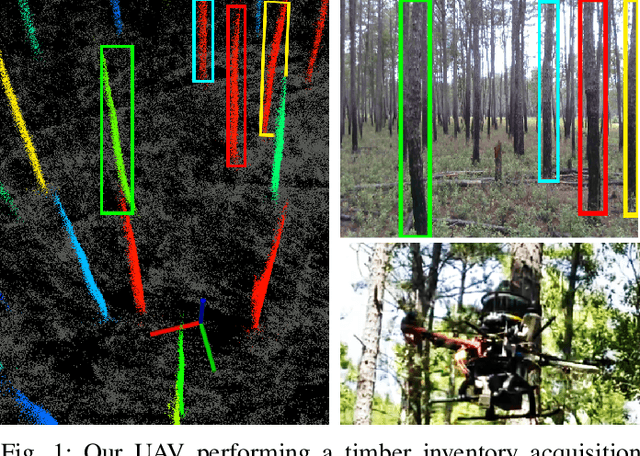

SLOAM: Semantic Lidar Odometry and Mapping for Forest Inventory

Paper and Code

Dec 29, 2019

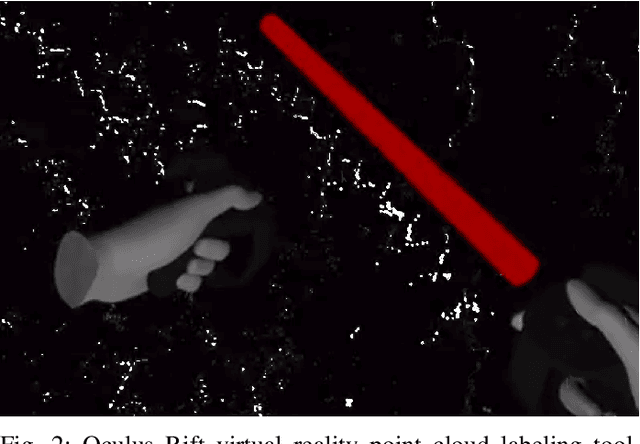

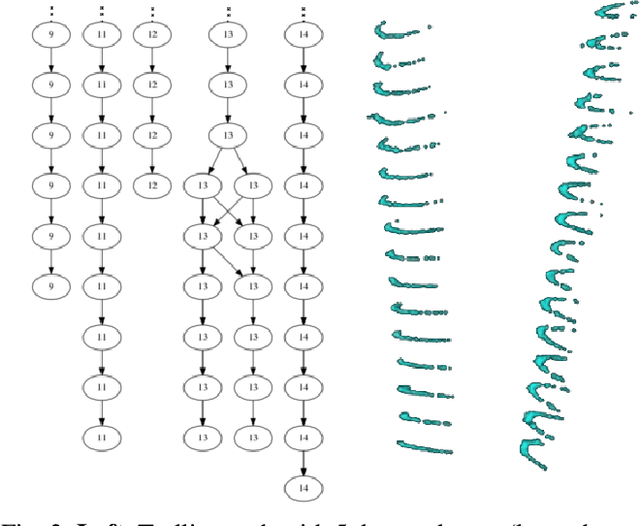

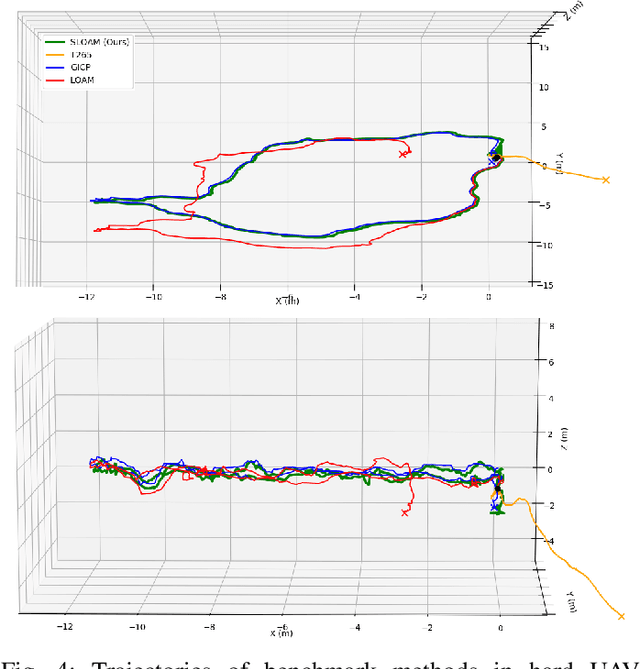

This paper describes an end-to-end pipeline for tree diameter estimation based on semantic segmentation and lidar odometry and mapping. Accurate mapping of this type of environment is challenging since the ground and the trees are surrounded by leaves, thorns and vines, and the sensor typically experiences extreme motion. We propose a semantic feature based pose optimization that simultaneously refines the tree models while estimating the robot pose. The pipeline utilizes a custom virtual reality tool for labeling 3D scans that is used to train a semantic segmentation network. The masked point cloud is used to compute a trellis graph that identifies individual instances and extracts relevant features that are used by the SLAM module. We show that traditional lidar and image based methods fail in the forest environment on both Unmanned Aerial Vehicle (UAV) and hand-carry systems, while our method is more robust, scalable, and automatically generates tree diameter estimations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge