Online Fusion of Multi-resolution Multispectral Images with Weakly Supervised Temporal Dynamics

Paper and Code

Jan 06, 2023

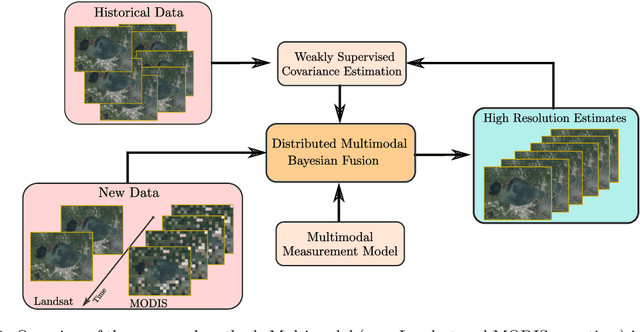

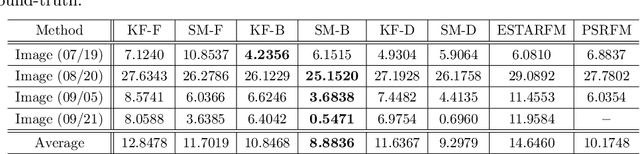

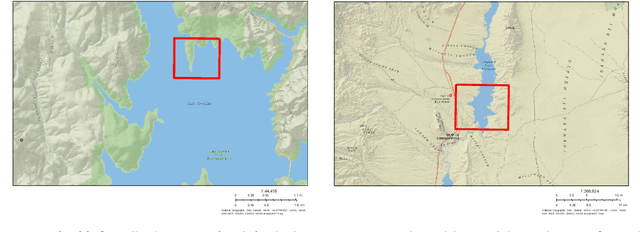

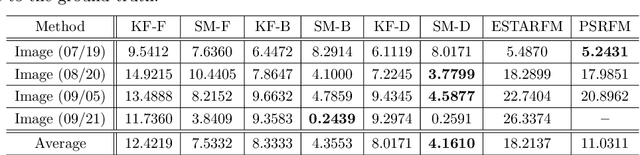

Real-time satellite imaging has a central role in monitoring, detecting and estimating the intensity of key natural phenomena such as floods, earthquakes, etc. One important constraint of satellite imaging is the trade-off between spatial/spectral resolution and their revisiting time, a consequence of design and physical constraints imposed by satellite orbit among other technical limitations. In this paper, we focus on fusing multi-temporal, multi-spectral images where data acquired from different instruments with different spatial resolutions is used. We leverage the spatial relationship between images at multiple modalities to generate high-resolution image sequences at higher revisiting rates. To achieve this goal, we formulate the fusion method as a recursive state estimation problem and study its performance in filtering and smoothing contexts. Furthermore, a calibration strategy is proposed to estimate the time-varying temporal dynamics of the image sequence using only a small amount of historical image data. Differently from the training process in traditional machine learning algorithms, which usually require large datasets and computation times, the parameters of the temporal dynamical model are calibrated based on an analytical expression that uses only two of the images in the historical dataset. A distributed version of the Bayesian filtering and smoothing strategies is also proposed to reduce its computational complexity. To evaluate the proposed methodology we consider a water mapping task where real data acquired by the Landsat and MODIS instruments are fused generating high spatial-temporal resolution image estimates. Our experiments show that the proposed methodology outperforms the competing methods in both estimation accuracy and water mapping tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge