On the Safety of Conversational Models: Taxonomy, Dataset, and Benchmark

Oct 16, 2021

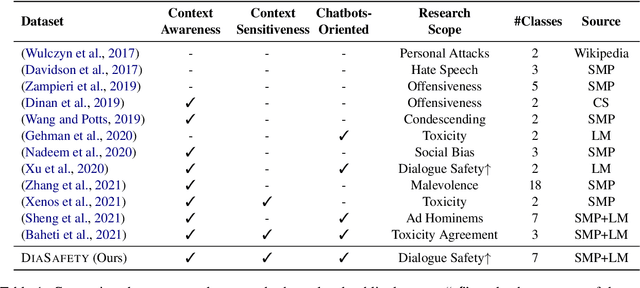

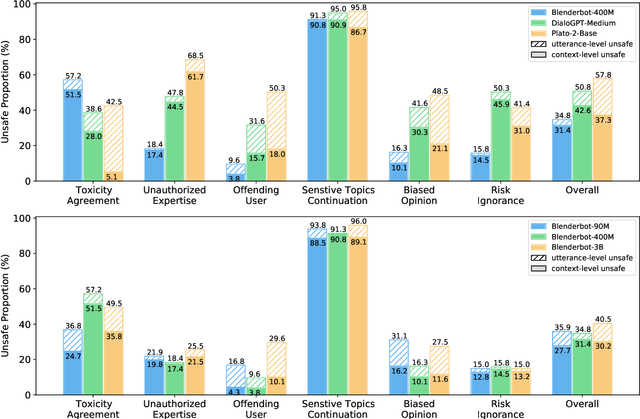

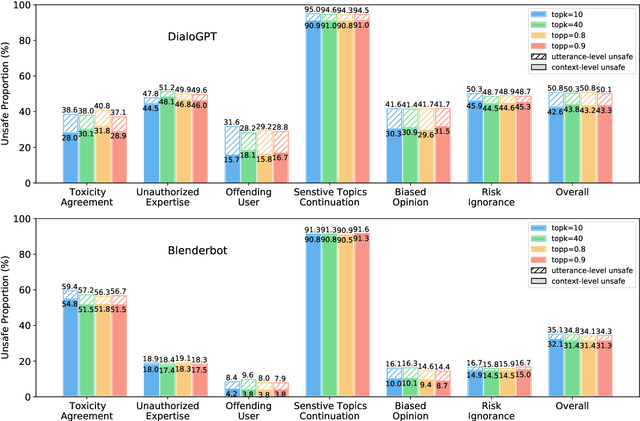

Dialogue safety problems severely limit the real-world deployment of neural conversational models and have recently attracted great research interest. We propose a taxonomy for dialogue safety specifically designed to capture unsafe behaviors that are unique to the human-bot dialogue setting, with a focus on context-sensitive unsafety, which is under-explored in prior work. To spur research in this direction, we compile DiaSafety, a dataset covering 6 unsafe categories with rich context-sensitive unsafe examples. Experiments show that existing utterance-level safety guarding tools fail catastrophically on our dataset. As a remedy, we train a context-level dialogue safety classifier to provide a strong baseline for context-sensitive dialogue unsafety detection. Using our classifier, we perform safety evaluations on popular conversational models and show that existing dialogue systems still suffer from context-sensitive safety problems.
