MAT: A Multi-strength Adversarial Training Method to Mitigate Adversarial Attacks

Paper and Code

May 11, 2018

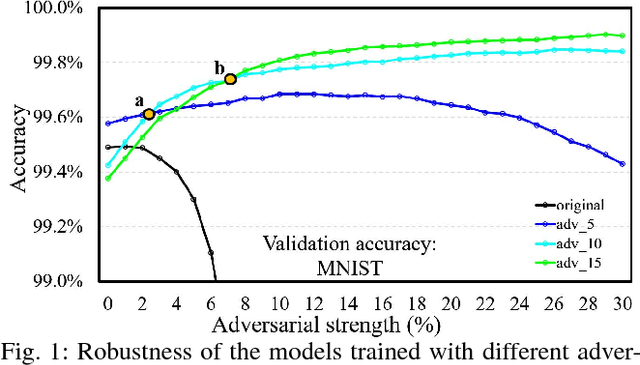

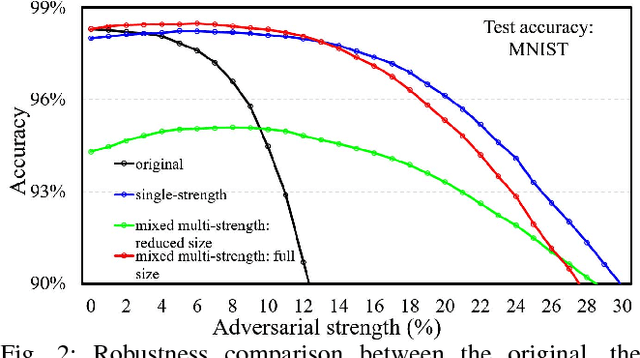

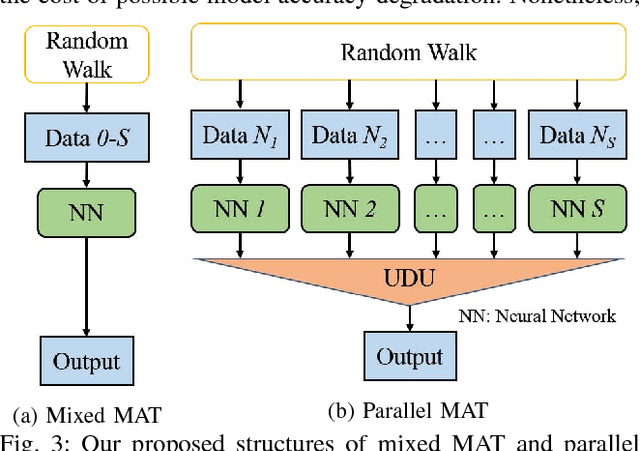

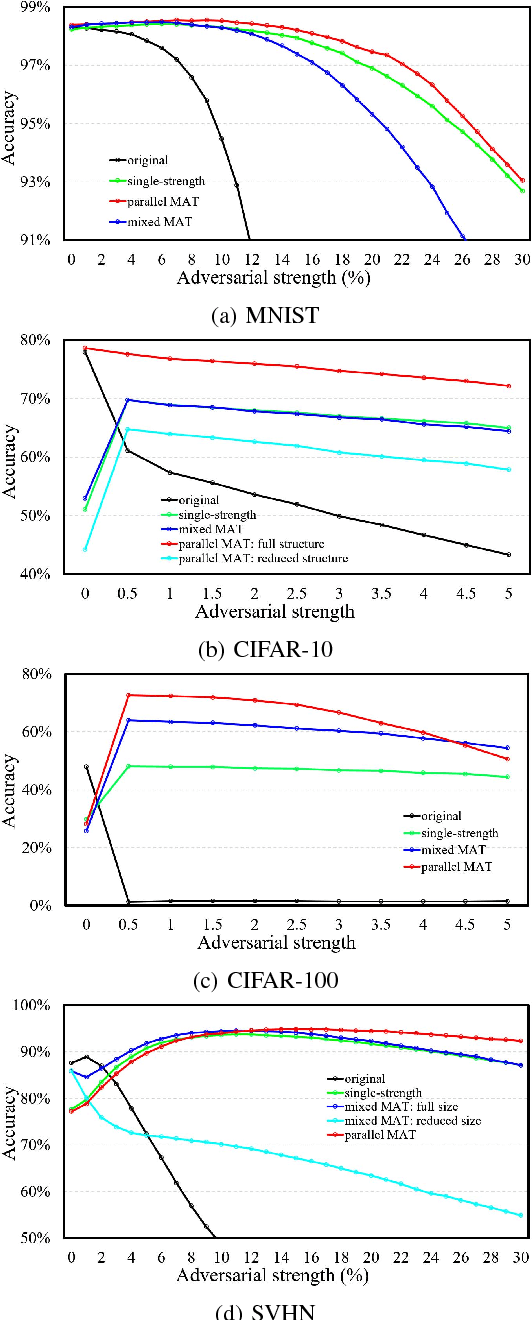

Some recent works revealed that deep neural networks (DNNs) are vulnerable to so-called adversarial attacks where input examples are intentionally perturbed to fool DNNs. In this work, we revisit the DNN training process that includes adversarial examples into the training dataset so as to improve DNN's resilience to adversarial attacks, namely, adversarial training. Our experiments show that different adversarial strengths, i.e., perturbation levels of adversarial examples, have different working zones to resist the attack. Based on the observation, we propose a multi-strength adversarial training method (MAT) that combines the adversarial training examples with different adversarial strengths to defend adversarial attacks. Two training structures - mixed MAT and parallel MAT - are developed to facilitate the tradeoffs between training time and memory occupation. Our results show that MAT can substantially minimize the accuracy degradation of deep learning systems to adversarial attacks on MNIST, CIFAR-10, CIFAR-100, and SVHN.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge