Filling Knowledge Gaps in a Broad-Coverage Machine Translation System

Jun 10, 1995

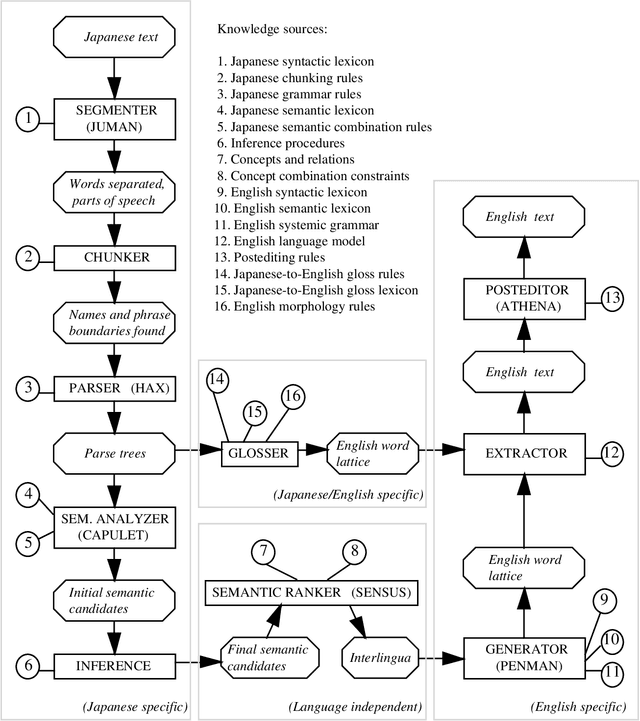

Knowledge-based machine translation (KBMT) techniques yield high quality in domains with detailed semantic models, limited vocabulary, and controlled input grammar. Scaling up along these dimensions means acquiring large knowledge resources. It also means behaving reasonably when definitive knowledge is not yet available. This paper describes how we can fill various KBMT knowledge gaps, often using robust statistical techniques. We describe quantitative and qualitative results from JAPANGLOSS, a broad-coverage Japanese-English MT system.

* 7 pages, compressed and uuencoded PostScript. To appear in IJCAI-95.