FedMix: Mixed Supervised Federated Learning for Medical Image Segmentation

May 04, 2022

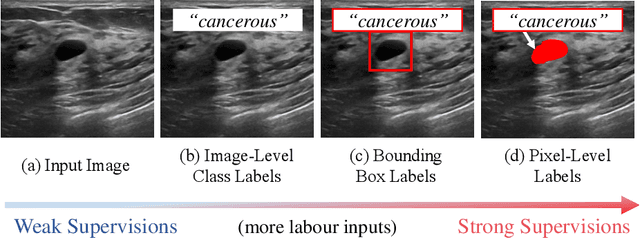

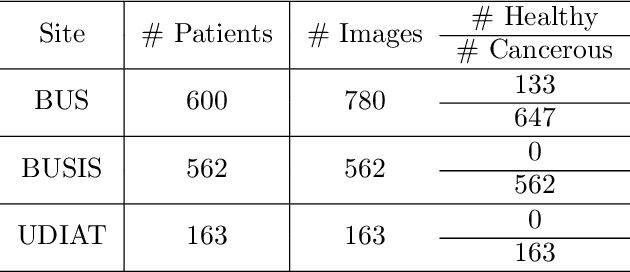

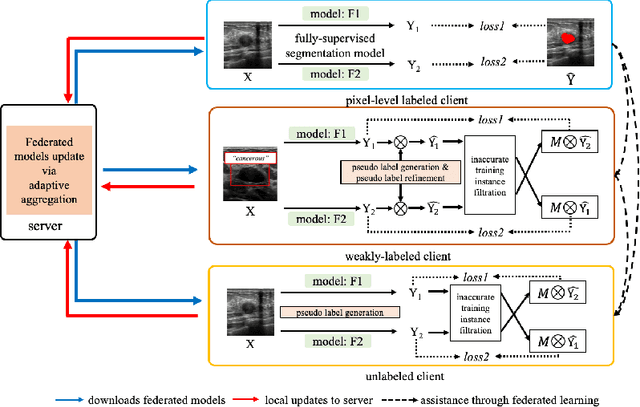

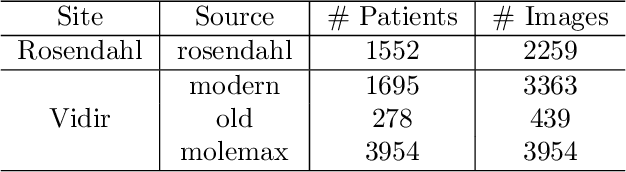

Federated learning enables multiple clients to jointly train a machine learning model without sharing their data. However, existing methods for training an image segmentation model rest on the unrealistic assumption that every local client annotates its training set in the same way, so that all clients share the same level of image supervision. To relax this assumption, we propose FedMix, a label-agnostic unified federated learning framework for medical image segmentation based on mixed image labels. In FedMix, each client updates the federated model by integrating all of its available labeled data, ranging from strong pixel-level labels and weak bounding-box labels to the weakest image-level class labels. Based on these local models, we further propose an adaptive weight assignment procedure across local clients, in which each client learns an aggregation weight during the global model update. Compared to existing methods, FedMix not only removes the constraint of a single level of image supervision but also dynamically adjusts the aggregation weight of each local client, achieving rich yet discriminative feature representations. To evaluate its effectiveness, we carry out experiments on two challenging medical image segmentation tasks: breast tumor segmentation and skin lesion segmentation. The results show that FedMix outperforms the state-of-the-art method by a large margin.
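The abstract does not say how the per-client aggregation weights are learned or normalized, so the following is only a minimal sketch of the general idea of weighted aggregation, not the authors' procedure. It assumes each client contributes one scalar score (the hypothetical `client_scores`), that scores are turned into weights with a softmax, and that model parameters are plain lists of floats keyed by name. All function and variable names here are illustrative.

```python
import math
from typing import Dict, List

# Hypothetical representation: parameter name -> flat list of values.
Params = Dict[str, List[float]]


def softmax(scores: List[float]) -> List[float]:
    """Turn arbitrary real scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def aggregate(client_params: List[Params], client_scores: List[float]) -> Params:
    """Weighted average of client parameters.

    Assumption: the softmax over per-client scores stands in for whatever
    weight-learning rule the paper actually uses.
    """
    if not client_params or len(client_params) != len(client_scores):
        raise ValueError("need one score per client and at least one client")

    weights = softmax(client_scores)
    global_params: Params = {}
    for name, reference in client_params[0].items():
        global_params[name] = [
            sum(w * params[name][i] for w, params in zip(weights, client_params))
            for i in range(len(reference))
        ]
    return global_params


if __name__ == "__main__":
    clients = [{"w": [1.0, 2.0]}, {"w": [3.0, 4.0]}]
    # Equal scores reduce to plain, unweighted federated averaging.
    print(aggregate(clients, [0.0, 0.0]))
```

With equal scores the sketch reduces to a plain average of the client models; raising one client's score shifts the global parameters toward that client's model.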
