Federated Split GANs

Paper and Code

Jul 04, 2022

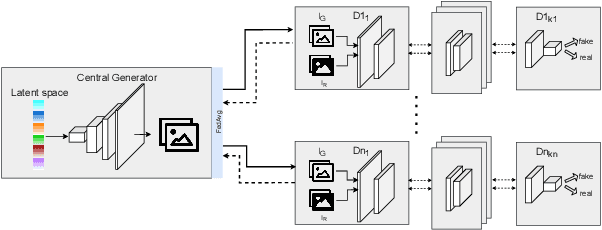

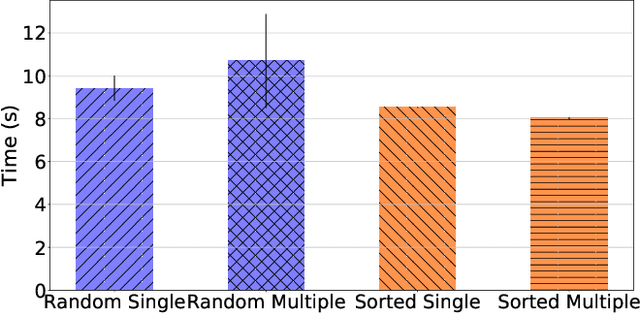

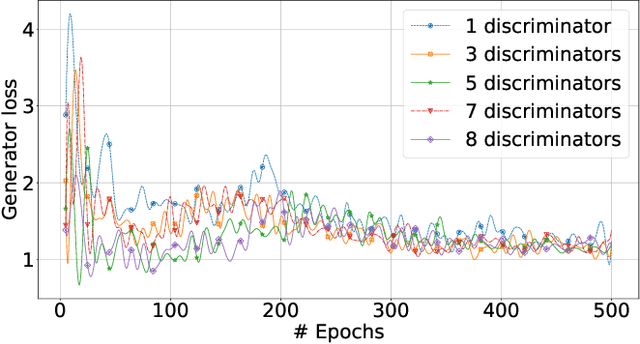

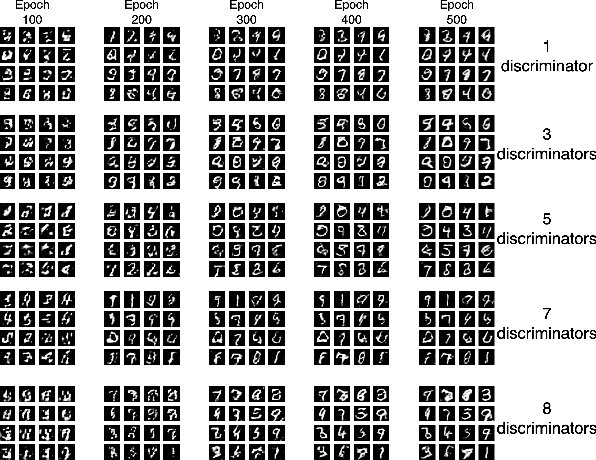

Mobile devices and the immense amount and variety of data they generate are key enablers of machine learning (ML)-based applications. Traditional ML techniques have shifted toward new paradigms such as federated learning (FL) and split learning (SL) to better protect the privacy of users' data. However, these paradigms often rely on servers located at the edge or in the cloud to train the computationally heavy parts of an ML model so as not to drain the limited resources of client devices, which exposes device data to such third parties. This work proposes an alternative approach that trains computationally heavy ML models on the users' devices themselves, where the corresponding data resides. Specifically, we focus on generative adversarial networks (GANs) and leverage their inherent privacy-preserving attribute. We train the discriminative part of a GAN with raw data on users' devices, whereas the generative model is trained remotely (e.g., on a server), which does not need access to the true sensor data. Moreover, our approach ensures that the computational load of training the discriminative model is shared among users' devices, in proportion to their computational capabilities, by means of SL. We implement our proposed collaborative training scheme for a computationally heavy GAN model on real resource-constrained devices. The results show that our system preserves data privacy, keeps training time short, and yields the same accuracy as training the model on unconstrained devices (e.g., the cloud). Our code can be found at https://github.com/YukariSonz/FSL-GAN
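The abstract says the discriminator's training load is shared among devices in proportion to their computational capabilities, but it does not give the allocation rule. As a rough illustration only, the sketch below assumes the discriminator is a stack of layers cut into contiguous blocks, one per device, with block sizes set by a largest-remainder rounding of each device's share. The function name `split_layers` and the capacity scores are hypothetical and are not taken from the paper or its repository.

```python
from typing import List


def split_layers(num_layers: int, capacities: List[float]) -> List[range]:
    """Assign contiguous layer ranges to devices in proportion to capacity.

    Hypothetical helper: `capacities` are relative compute scores, one per
    device, in the order the devices appear in the split-learning chain.
    """
    if num_layers < 0 or not capacities or any(c <= 0 for c in capacities):
        raise ValueError("need num_layers >= 0 and positive capacities")

    total = sum(capacities)
    # Ideal (fractional) number of layers per device.
    exact = [num_layers * c / total for c in capacities]
    # Start from the floor of each share.
    counts = [int(x) for x in exact]
    leftover = num_layers - sum(counts)

    # Hand the remaining layers to the devices with the largest remainders.
    by_remainder = sorted(
        range(len(capacities)),
        key=lambda i: exact[i] - counts[i],
        reverse=True,
    )
    for i in by_remainder[:leftover]:
        counts[i] += 1

    # Turn per-device counts into contiguous index ranges.
    ranges = []
    start = 0
    for n in counts:
        ranges.append(range(start, start + n))
        start += n
    return ranges


if __name__ == "__main__":
    # Three devices; the third is assumed to be twice as capable.
    for device, layers in enumerate(split_layers(8, [1.0, 1.0, 2.0])):
        print(device, list(layers))
```

With eight layers and capacities `[1.0, 1.0, 2.0]`, the devices receive 2, 2, and 4 layers respectively. A real deployment would also have to weigh per-layer cost and communication overhead, which this sketch ignores.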
