A Human-Centered Safe Robot Reinforcement Learning Framework with Interactive Behaviors

Mar 02, 2023

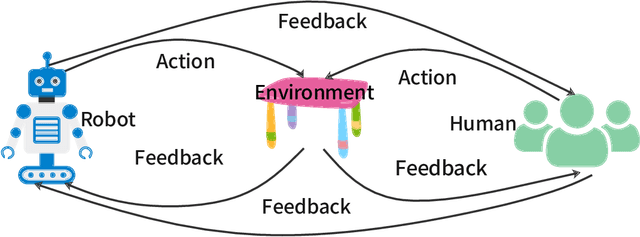

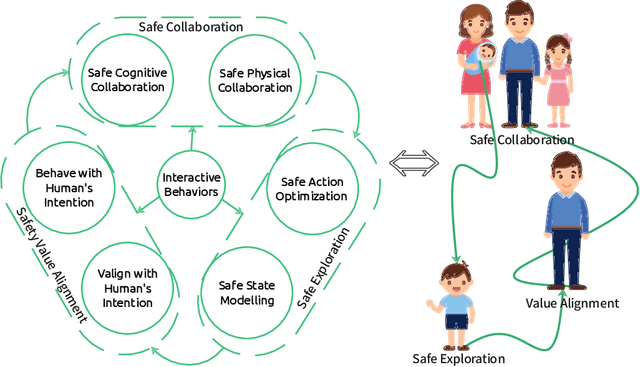

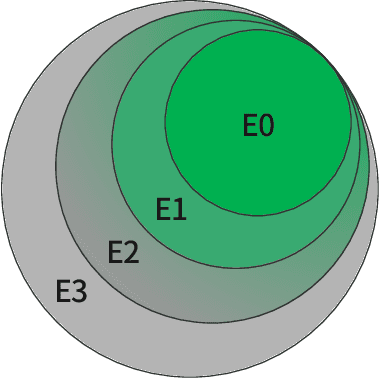

Deploying reinforcement learning algorithms for real-world robotics applications requires ensuring the safety of the robot and its environment. Safe robot reinforcement learning (SRRL) is a crucial step towards achieving human-robot coexistence. In this paper, we envision a human-centered SRRL framework consisting of three stages: safe exploration, safety value alignment, and safe collaboration. We examine the research gaps in these areas and propose to leverage interactive behaviors for SRRL. Interactive behaviors enable bi-directional information transfer between humans and robots, as exemplified by the conversational robot ChatGPT. We argue that interactive behaviors need further attention from the SRRL community. We discuss four open challenges related to the robustness, efficiency, transparency, and adaptability of SRRL with interactive behaviors.
