Zikun Ye

Document Valuation in LLM Summaries: A Cluster Shapley Approach

May 28, 2025Abstract:Large Language Models (LLMs) are increasingly used in systems that retrieve and summarize content from multiple sources, such as search engines and AI assistants. While these models enhance user experience by generating coherent summaries, they obscure the contributions of original content creators, raising concerns about credit attribution and compensation. We address the challenge of valuing individual documents used in LLM-generated summaries. We propose using Shapley values, a game-theoretic method that allocates credit based on each document's marginal contribution. Although theoretically appealing, Shapley values are expensive to compute at scale. We therefore propose Cluster Shapley, an efficient approximation algorithm that leverages semantic similarity between documents. By clustering documents using LLM-based embeddings and computing Shapley values at the cluster level, our method significantly reduces computation while maintaining attribution quality. We demonstrate our approach to a summarization task using Amazon product reviews. Cluster Shapley significantly reduces computational complexity while maintaining high accuracy, outperforming baseline methods such as Monte Carlo sampling and Kernel SHAP with a better efficient frontier. Our approach is agnostic to the exact LLM used, the summarization process used, and the evaluation procedure, which makes it broadly applicable to a variety of summarization settings.

LOLA: LLM-Assisted Online Learning Algorithm for Content Experiments

Jun 03, 2024

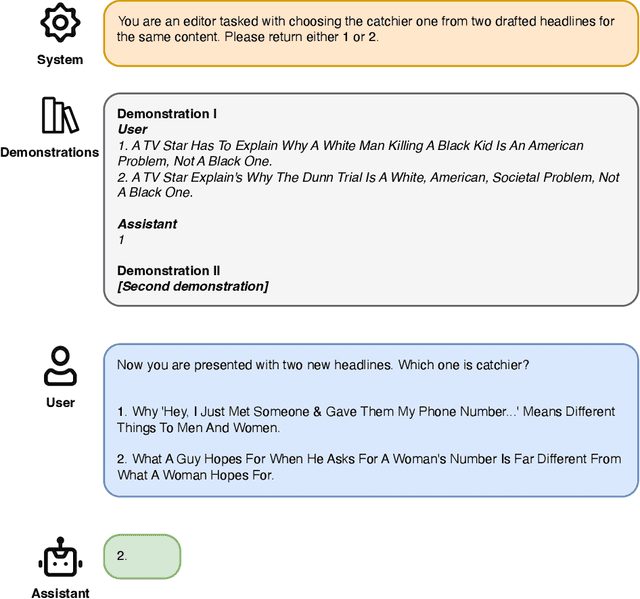

Abstract:In the rapidly evolving digital content landscape, media firms and news publishers require automated and efficient methods to enhance user engagement. This paper introduces the LLM-Assisted Online Learning Algorithm (LOLA), a novel framework that integrates Large Language Models (LLMs) with adaptive experimentation to optimize content delivery. Leveraging a large-scale dataset from Upworthy, which includes 17,681 headline A/B tests aimed at evaluating the performance of various headlines associated with the same article content, we first investigate three broad pure-LLM approaches: prompt-based methods, embedding-based classification models, and fine-tuned open-source LLMs. Our findings indicate that prompt-based approaches perform poorly, achieving no more than 65% accuracy in identifying the catchier headline among two options. In contrast, OpenAI-embedding-based classification models and fine-tuned Llama-3-8b models achieve comparable accuracy, around 82-84%, though still falling short of the performance of experimentation with sufficient traffic. We then introduce LOLA, which combines the best pure-LLM approach with the Upper Confidence Bound algorithm to adaptively allocate traffic and maximize clicks. Our numerical experiments on Upworthy data show that LOLA outperforms the standard A/B testing method (the current status quo at Upworthy), pure bandit algorithms, and pure-LLM approaches, particularly in scenarios with limited experimental traffic or numerous arms. Our approach is both scalable and broadly applicable to content experiments across a variety of digital settings where firms seek to optimize user engagement, including digital advertising and social media recommendations.

Seller-side Outcome Fairness in Online Marketplaces

Dec 06, 2023

Abstract:This paper aims to investigate and achieve seller-side fairness within online marketplaces, where many sellers and their items are not sufficiently exposed to customers in an e-commerce platform. This phenomenon raises concerns regarding the potential loss of revenue associated with less exposed items as well as less marketplace diversity. We introduce the notion of seller-side outcome fairness and build an optimization model to balance collected recommendation rewards and the fairness metric. We then propose a gradient-based data-driven algorithm based on the duality and bandit theory. Our numerical experiments on real e-commerce data sets show that our algorithm can lift seller fairness measures while not hurting metrics like collected Gross Merchandise Value (GMV) and total purchases.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge