Zhiwei Yao

Lightweight and Post-Training Structured Pruning for On-Device Large Lanaguage Models

Jan 25, 2025

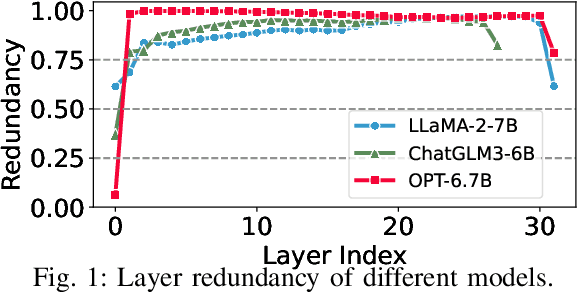

Abstract:Considering the hardware-friendly characteristics and broad applicability, structured pruning has emerged as an efficient solution to reduce the resource demands of large language models (LLMs) on resource-constrained devices. Traditional structured pruning methods often need fine-tuning to recover performance loss, which incurs high memory overhead and substantial data requirements, rendering them unsuitable for on-device applications. Additionally, post-training structured pruning techniques typically necessitate specific activation functions or architectural modifications, thereby limiting their scope of applications. Herein, we introduce COMP, a lightweight post-training structured pruning method that employs a hybrid-granularity pruning strategy. COMP initially prunes selected model layers based on their importance at a coarse granularity, followed by fine-grained neuron pruning within the dense layers of each remaining model layer. To more accurately evaluate neuron importance, COMP introduces a new matrix condition-based metric. Subsequently, COMP utilizes mask tuning to recover accuracy without the need for fine-tuning, significantly reducing memory consumption. Experimental results demonstrate that COMP improves performance by 6.13\% on the LLaMA-2-7B model with a 20\% pruning ratio compared to LLM-Pruner, while simultaneously reducing memory overhead by 80\%.

Efficient Deployment of Large Language Models on Resource-constrained Devices

Jan 05, 2025Abstract:Deploying Large Language Models (LLMs) on resource-constrained (or weak) devices presents significant challenges due to limited resources and heterogeneous data distribution. To address the data concern, it is necessary to fine-tune LLMs using on-device private data for various downstream tasks. While Federated Learning (FL) offers a promising privacy-preserving solution, existing fine-tuning methods retain the original LLM size, leaving issues of high inference latency and excessive memory demands unresolved. Hence, we design FedSpine, an FL framework that combines Parameter- Efficient Fine-Tuning (PEFT) with structured pruning for efficient deployment of LLMs on resource-constrained devices. Specifically, FedSpine introduces an iterative process to prune and tune the parameters of LLMs. To mitigate the impact of device heterogeneity, an online Multi-Armed Bandit (MAB) algorithm is employed to adaptively determine different pruning ratios and LoRA ranks for heterogeneous devices without any prior knowledge of their computing and communication capabilities. As a result, FedSpine maintains higher inference accuracy while improving fine-tuning efficiency. Experimental results conducted on a physical platform with 80 devices demonstrate that FedSpine can speed up fine-tuning by 1.4$\times$-6.9$\times$ and improve final accuracy by 0.4%-4.5% under the same sparsity level compared to other baselines.

An Efficient NAS-based Approach for Handling Imbalanced Datasets

Jun 22, 2024Abstract:Class imbalance is a common issue in real-world data distributions, negatively impacting the training of accurate classifiers. Traditional approaches to mitigate this problem fall into three main categories: class re-balancing, information transfer, and representation learning. This paper introduces a novel approach to enhance performance on long-tailed datasets by optimizing the backbone architecture through neural architecture search (NAS). Our research shows that an architecture's accuracy on a balanced dataset does not reliably predict its performance on imbalanced datasets. This necessitates a complete NAS run on long-tailed datasets, which can be computationally expensive. To address this computational challenge, we focus on existing work, called IMB-NAS, which proposes efficiently adapting a NAS super-network trained on a balanced source dataset to an imbalanced target dataset. A detailed description of the fundamental techniques for IMB-NAS is provided in this paper, including NAS and architecture transfer. Among various adaptation strategies, we find that the most effective approach is to retrain the linear classification head with reweighted loss while keeping the backbone NAS super-network trained on the balanced source dataset frozen. Finally, we conducted a series of experiments on the imbalanced CIFAR dataset for performance evaluation. Our conclusions are the same as those proposed in the IMB-NAS paper.

MergeSFL: Split Federated Learning with Feature Merging and Batch Size Regulation

Nov 22, 2023Abstract:Recently, federated learning (FL) has emerged as a popular technique for edge AI to mine valuable knowledge in edge computing (EC) systems. To mitigate the computing/communication burden on resource-constrained workers and protect model privacy, split federated learning (SFL) has been released by integrating both data and model parallelism. Despite resource limitations, SFL still faces two other critical challenges in EC, i.e., statistical heterogeneity and system heterogeneity. To address these challenges, we propose a novel SFL framework, termed MergeSFL, by incorporating feature merging and batch size regulation in SFL. Concretely, feature merging aims to merge the features from workers into a mixed feature sequence, which is approximately equivalent to the features derived from IID data and is employed to promote model accuracy. While batch size regulation aims to assign diverse and suitable batch sizes for heterogeneous workers to improve training efficiency. Moreover, MergeSFL explores to jointly optimize these two strategies upon their coupled relationship to better enhance the performance of SFL. Extensive experiments are conducted on a physical platform with 80 NVIDIA Jetson edge devices, and the experimental results show that MergeSFL can improve the final model accuracy by 5.82% to 26.22%, with a speedup by about 1.74x to 4.14x, compared to the baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge