Zhichen Wang

DepthMamba with Adaptive Fusion

Dec 28, 2024

Abstract: Multi-view depth estimation has achieved impressive performance on various benchmarks. However, almost all current multi-view systems rely on ideal, given camera poses, which are unavailable in many real-world scenarios such as autonomous driving. In this work, we propose a new robustness benchmark to evaluate depth estimation systems under various noisy pose settings. Surprisingly, we find that current multi-view depth estimation methods, as well as methods that fuse single-view and multi-view estimates, fail under noisy poses. To tackle this challenge, we propose a two-branch network architecture that fuses the depth estimates of a single-view branch and a multi-view branch. Specifically, we introduce Mamba as the feature extraction backbone and propose an attention-based fusion method that adaptively selects the most robust estimate from the two branches. As a result, the proposed method performs well in challenging scenes, including those with dynamic objects and texture-less regions. Ablation studies demonstrate the effectiveness of the backbone and the fusion method, while evaluation on challenging benchmarks (KITTI and DDAD) shows that the proposed method achieves competitive performance compared to state-of-the-art methods.
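
To illustrate what "adaptively selecting" between two depth branches can mean at the pixel level, here is a minimal sketch of a per-pixel two-way softmax fusion. It is not the paper's network: the function name `softmax_fuse` and the per-pixel confidence logits are hypothetical stand-ins for whatever an attention head would predict, and they are simply passed in as plain nested lists.

```python
import math
from typing import List

Grid = List[List[float]]


def softmax_fuse(depth_single: Grid, depth_multi: Grid,
                 logit_single: Grid, logit_multi: Grid) -> Grid:
    """Fuse two depth maps with a per-pixel two-way softmax.

    Hypothetical sketch: logit_single / logit_multi stand in for per-pixel
    confidence scores that an attention head might predict.
    """
    fused: Grid = []
    for ds_row, dm_row, ls_row, lm_row in zip(depth_single, depth_multi,
                                              logit_single, logit_multi):
        row = []
        for ds, dm, ls, lm in zip(ds_row, dm_row, ls_row, lm_row):
            # Numerically stable softmax over the two branches:
            # subtract the larger logit before exponentiating.
            m = max(ls, lm)
            e_single, e_multi = math.exp(ls - m), math.exp(lm - m)
            w_single = e_single / (e_single + e_multi)
            # Convex combination: weights sum to 1 for every pixel.
            row.append(w_single * ds + (1.0 - w_single) * dm)
        fused.append(row)
    return fused


if __name__ == "__main__":
    single = [[10.0, 4.0]]
    multi = [[12.0, 8.0]]
    # Equal logits on the first pixel, strong preference for the
    # single-view branch on the second (e.g. a dynamic object).
    fused = softmax_fuse(single, multi, [[0.0, 5.0]], [[0.0, -5.0]])
    print(fused)
```

With equal logits a pixel receives the plain average of the two branches (11.0 for the first pixel here), while a strongly positive single-view logit makes the output follow the single-view depth almost exactly. A soft weighting like this stays differentiable, which is one reason attention-style fusion is often preferred over a hard per-pixel switch.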

Interpretable Motion Planner for Urban Driving via Hierarchical Imitation Learning

Mar 24, 2023

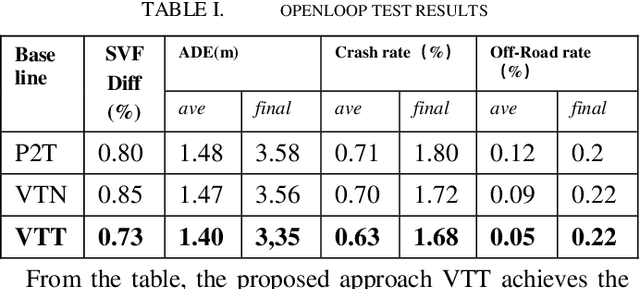

Abstract: Learning-based approaches have achieved impressive performance in autonomous driving, and a growing number of data-driven methods are being studied for the decision-making and planning module. However, the reliability and stability of neural networks remain challenging. In this paper, we introduce a hierarchical imitation method consisting of a high-level grid-based behavior planner and a low-level trajectory planner, which can serve as a standalone data-driven driving policy and can also be easily embedded into a rule-based architecture. We evaluate our method in both closed-loop simulation and real-world driving, and demonstrate that the neural network planner achieves strong performance in complex urban autonomous driving scenarios.
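
The split between a grid-based behavior planner and a trajectory planner can be pictured with a toy two-stage sketch. This is only an illustration under stated assumptions, not the paper's learned planners: the score grid is given rather than predicted, the grid-to-metres convention in `cell_to_xy` is hypothetical, and the low-level stage is swapped for a hand-written cubic path instead of an imitation-learned trajectory planner.

```python
from typing import List, Tuple


def select_goal_cell(scores: List[List[float]]) -> Tuple[int, int]:
    """High-level stage: pick the highest-scoring grid cell (row, col)."""
    best = (0, 0)
    for i, row in enumerate(scores):
        for j, s in enumerate(row):
            if s > scores[best[0]][best[1]]:
                best = (i, j)
    return best


def cell_to_xy(cell: Tuple[int, int], n_cols: int,
               cell_size: float) -> Tuple[float, float]:
    """Hypothetical convention: row i is (i + 1) cells ahead of the ego
    vehicle; column offsets are measured from the centre column."""
    i, j = cell
    x = (i + 1) * cell_size
    y = (j - (n_cols - 1) / 2) * cell_size
    return x, y


def cubic_path(goal_x: float, goal_y: float,
               n_points: int) -> List[Tuple[float, float]]:
    """Low-level stage (stand-in): cubic y(x) with y(0)=0, y'(0)=0,
    y(goal_x)=goal_y, y'(goal_x)=0, sampled at n_points x-positions."""
    a2 = 3.0 * goal_y / goal_x ** 2
    a3 = -2.0 * goal_y / goal_x ** 3
    points = []
    for k in range(n_points):
        x = goal_x * k / (n_points - 1)
        points.append((x, a2 * x ** 2 + a3 * x ** 3))
    return points


if __name__ == "__main__":
    # Toy behaviour-planner output: 3 rows ahead x 3 lateral columns.
    scores = [[0.1, 0.2, 0.1],
              [0.3, 0.5, 0.2],
              [0.1, 0.4, 0.9]]
    goal = select_goal_cell(scores)          # (2, 2)
    gx, gy = cell_to_xy(goal, n_cols=3, cell_size=5.0)
    for p in cubic_path(gx, gy, n_points=4):
        print(p)
```

Keeping the discrete grid decision separate from continuous trajectory generation is what makes such a planner easy to embed in a rule-based stack: the high-level output can be checked or overridden by rules before the low-level stage runs.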