Zemin Eitan Liu

Multi-Agent Reinforcement Learning for Connected and Automated Vehicles Control: Recent Advancements and Future Prospects

Dec 18, 2023

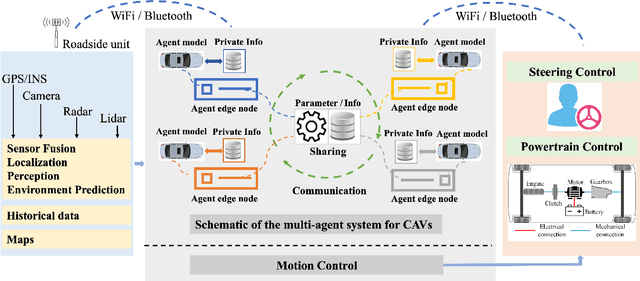

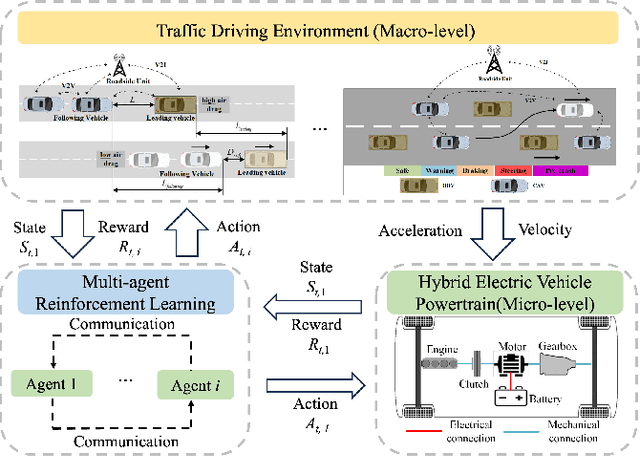

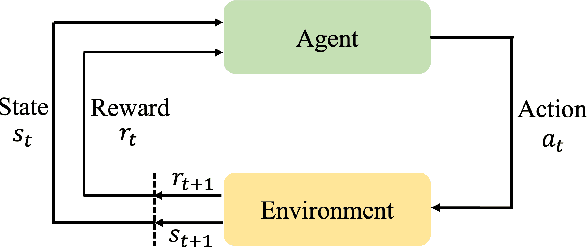

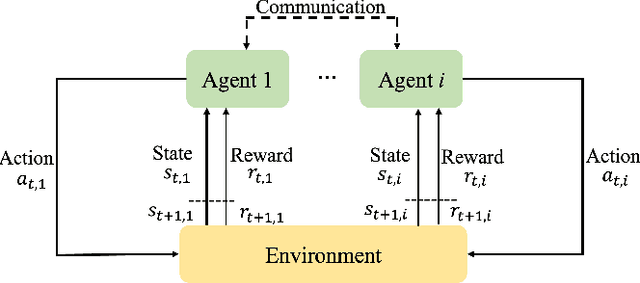

Abstract:Connected and automated vehicles (CAVs) have emerged as a potential solution to the future challenges of developing safe, efficient, and eco-friendly transportation systems. However, CAV control presents significant challenges, given the complexity of interconnectivity and coordination required among the vehicles. To address this, multi-agent reinforcement learning (MARL), with its notable advancements in addressing complex problems in autonomous driving, robotics, and human-vehicle interaction, has emerged as a promising tool for enhancing the capabilities of CAVs. However, there is a notable absence of current reviews on the state-of-the-art MARL algorithms in the context of CAVs. Therefore, this paper delivers a comprehensive review of the application of MARL techniques within the field of CAV control. The paper begins by introducing MARL, followed by a detailed explanation of its unique advantages in addressing complex mobility and traffic scenarios that involve multiple agents. It then presents a comprehensive survey of MARL applications on the extent of control dimensions for CAVs, covering critical and typical scenarios such as platooning control, lane-changing, and unsignalized intersections. In addition, the paper provides a comprehensive review of the prominent simulation platforms used to create reliable environments for training in MARL. Lastly, the paper examines the current challenges associated with deploying MARL within CAV control and outlines potential solutions that can effectively overcome these issues. Through this review, the study highlights the tremendous potential of MARL to enhance the performance and collaboration of CAV control in terms of safety, travel efficiency, and economy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge