Zahra Tabatabaei

THIR: Topological Histopathological Image Retrieval

Nov 17, 2025

Abstract: According to the World Health Organization, breast cancer claimed the lives of approximately 685,000 women in 2020. Early diagnosis and accurate clinical decision-making are critical in reducing this global burden. In this study, we propose THIR, a novel Content-Based Medical Image Retrieval (CBMIR) framework that leverages topological data analysis, specifically Betti numbers derived from persistent homology, to characterize and retrieve histopathological images based on their intrinsic structural patterns. Unlike conventional deep learning approaches that rely on extensive training, annotated datasets, and powerful GPU resources, THIR operates entirely without supervision. It extracts topological fingerprints directly from RGB histopathological images using cubical persistence, encoding the evolution of loops as compact, interpretable feature vectors. Similarity retrieval is then performed by computing the distances between these topological descriptors, efficiently returning the top-K most relevant matches. Extensive experiments on the BreaKHis dataset demonstrate that THIR outperforms state-of-the-art supervised and unsupervised methods. It processes the entire dataset in under 20 minutes on a standard CPU, offering a fast, scalable, and training-free solution for clinical image retrieval.
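The retrieval idea in the THIR abstract can be illustrated with a minimal, standard-library Python sketch. It is not the authors' implementation. It assumes a single-channel image given as a list of rows, treats pixels as closed squares, and counts the loops (Betti-1) of each sublevel set directly by counting enclosed background regions, instead of calling a persistent homology library. The function names, the thresholds, and the Euclidean distance are illustrative assumptions; for RGB input, one option would be to compute one curve per channel and concatenate them.

```python
from collections import deque

N4 = [(-1, 0), (1, 0), (0, -1), (0, 1)]
N8 = N4 + [(-1, -1), (-1, 1), (1, -1), (1, 1)]


def count_components(mask, neighbors):
    """Count connected components of True cells in a 2D boolean grid."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            count += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill of one component
                y, x = queue.popleft()
                for dy, dx in neighbors:
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return count


def betti_numbers(image, threshold):
    """Return (Betti-0, Betti-1) of the sublevel set {pixel <= threshold}."""
    fg = [[value <= threshold for value in row] for row in image]
    cols = len(fg[0])
    # Pad the background with a one-pixel border so the outside region
    # forms a single component that can be subtracted afterwards.
    bg = [[True] * (cols + 2)]
    bg += [[True] + [not v for v in row] + [True] for row in fg]
    bg += [[True] * (cols + 2)]
    # Closed pixels: foreground is 8-connected, background is 4-connected.
    b0 = count_components(fg, N8)
    b1 = count_components(bg, N4) - 1  # enclosed background regions = loops
    return b0, b1


def betti1_curve(image, thresholds):
    """Descriptor (assumed form): number of loops at each threshold."""
    return [betti_numbers(image, t)[1] for t in thresholds]


def top_k(query_vec, database, k):
    """Return the k database keys closest to query_vec (Euclidean distance)."""
    def dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5
    return sorted(database, key=lambda name: dist(query_vec, database[name]))[:k]


# Toy example: a dark ring on a bright background encloses one loop.
ring = [
    [9, 9, 9, 9, 9],
    [9, 1, 1, 1, 9],
    [9, 1, 9, 1, 9],
    [9, 1, 1, 1, 9],
    [9, 9, 9, 9, 9],
]
ring_descriptor = betti1_curve(ring, thresholds=[0, 5, 9])
```

Because the descriptor is a short integer vector, ranking a query against a database reduces to a distance sort, which is consistent with the abstract's claim that no training is required.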

Siamese Content-based Search Engine for a More Transparent Skin and Breast Cancer Diagnosis through Histological Imaging

Jan 16, 2024

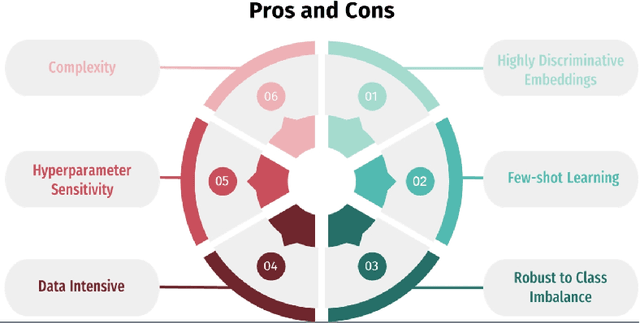

Abstract: Computer-Aided Diagnosis (CAD) has advanced digital pathology with Deep Learning (DL)-based tools that assist pathologists in decision-making. Content-Based Histopathological Image Retrieval (CBHIR) is a novel tool for finding patches that are highly similar in their histopathological features. In this work, we propose two CBHIR approaches, on breast (Breast-twins) and skin cancer (Skin-twins) data sets, for robust and accurate patch-level retrieval, integrating a custom-built Siamese network as a feature extractor. The proposed Siamese network is able to generalize to unseen images by focusing on the similar histopathological features of the input pairs. The proposed CBHIR approaches are evaluated on the Breast (public) and Skin (private) data sets with top-K accuracy. Finding the optimal value of K is challenging; moreover, as K increases, the dissimilarity between the query and the returned images increases, which might mislead pathologists. To the best of the authors' knowledge, this paper tackles this issue for the first time on histopathological images by evaluating the top-1 retrieved images. The Breast-twins model achieves an F1-score of 70% at top 1, which exceeds other state-of-the-art methods evaluated at higher values of K, such as 5 and 400. Skin-twins surpasses the recently proposed Convolutional Auto Encoder (CAE), increasing precision by 67%. In addition, the Skin-twins model tackles the challenges of Spitzoid Tumors of Uncertain Malignant Potential (STUMP), assisting pathologists by retrieving the top-K images and their corresponding labels. This approach can therefore offer pathologists a more explainable CAD tool in terms of transparency, trustworthiness, and reliability, among other characteristics.

Towards More Transparent and Accurate Cancer Diagnosis with an Unsupervised CAE Approach

May 19, 2023

Abstract: Digital pathology has revolutionized cancer diagnosis by leveraging Content-Based Medical Image Retrieval (CBMIR) for analyzing histopathological Whole Slide Images (WSIs). CBMIR enables searching for similar content, enhancing diagnostic reliability and accuracy. In 2020, breast and prostate cancer constituted 11.7% and 14.1% of cases, respectively, as reported by the Global Cancer Observatory (GCO). The proposed Unsupervised CBMIR (UCBMIR) replicates the traditional cancer diagnosis workflow, offering a dependable method to support pathologists in WSI-based diagnostic conclusions. This approach alleviates pathologists' workload, potentially enhancing diagnostic efficiency. To address the lack of labeled histopathological images in CBMIR, a customized unsupervised Convolutional Auto Encoder (CAE) was developed, extracting 200 features per image for the search engine component. UCBMIR was evaluated using numerical techniques widely used in CBMIR, alongside visual evaluation and comparison with a classifier. The validation involved three distinct datasets, with an external evaluation demonstrating its effectiveness. UCBMIR outperformed previous studies, achieving a top-5 recall of 99% and 80% on BreaKHis and SICAPv2, respectively, using the first evaluation technique. Precision rates of 91% and 70% were achieved for BreaKHis and SICAPv2, respectively, using the second evaluation technique. Furthermore, UCBMIR demonstrated the capability to identify various patterns in patches, achieving 81% accuracy in the top 5 when tested on an external image from Arvaniti.
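The abstract does not define its two evaluation techniques, so the following Python sketch only illustrates two metrics commonly used for top-K retrieval and should not be read as the authors' protocol. It assumes that the first technique counts a query as successful when at least one of the top K retrieved images shares its label, and that the second measures the fraction of the top K that share it. All function names and the toy labels are hypothetical.

```python
def hit_at_k(query_label, retrieved_labels, k):
    """1.0 if any of the first k retrieved labels matches the query label."""
    return 1.0 if query_label in retrieved_labels[:k] else 0.0


def precision_at_k(query_label, retrieved_labels, k):
    """Fraction of the first k retrieved labels that match the query label."""
    top = retrieved_labels[:k]
    if not top:
        return 0.0
    return sum(1 for label in top if label == query_label) / len(top)


def mean_over_queries(metric, results, k):
    """Average a per-query metric over (query_label, ranked_labels) pairs."""
    return sum(metric(q, ranked, k) for q, ranked in results) / len(results)


# Toy ranked results for two queries (labels are made up for illustration).
results = [
    ("malignant", ["benign", "malignant", "malignant", "benign", "malignant"]),
    ("benign", ["malignant", "malignant", "malignant", "malignant", "malignant"]),
]
hit_rate_5 = mean_over_queries(hit_at_k, results, k=5)
precision_5 = mean_over_queries(precision_at_k, results, k=5)
```

The hit-based score is always at least as high as the precision-based one, which is one plausible reason a system could report figures such as 99% under one technique and 91% under another on the same dataset.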

WWFedCBMIR: World-Wide Federated Content-Based Medical Image Retrieval

May 05, 2023

Abstract: The paper proposes a Federated Content-Based Medical Image Retrieval (FedCBMIR) platform that utilizes Federated Learning (FL) to address the challenges of acquiring a diverse medical data set for training CBMIR models. CBMIR assists pathologists in diagnosing breast cancer more rapidly than traditional cancer detection methods by identifying similar medical images and relevant patches in prior cases. However, CBMIR in histopathology requires a pool of Whole Slide Images (WSIs) for training in order to extract an optimal embedding vector that improves search engine performance, and such a pool may not be available in all centers. The strict regulations surrounding data sharing in medical data sets also hinder research and model development, making it difficult to collect a rich data set. The proposed FedCBMIR distributes the model to collaborating centers for training without sharing the data set, resulting in shorter training times than local training. FedCBMIR was evaluated in two experiments with three scenarios on BreaKHis and Camelyon17 (CAM17). The study shows that the FedCBMIR method increases the F1-Score (F1S) of each client to 98%, 96%, 94%, and 97% in the BreaKHis experiment with a generalized model of four magnifications, and does so in 6.30 hours less time than total local training. FedCBMIR also achieves 98% accuracy with CAM17 in 2.49 hours less training time than local training, demonstrating that FedCBMIR is both fast and accurate for pathologists and engineers alike. In addition, FedCBMIR provides similar images at higher magnification to developing countries that participate in the worldwide FedCBMIR alongside developed countries, facilitating mitosis measurement in breast cancer diagnosis. We evaluate this scenario by distributing BreaKHis across four centers with different magnifications.
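The abstract states that the model is distributed to centers and trained without sharing data, but it does not name the aggregation rule. As a rough illustration only, the sketch below assumes the common federated averaging scheme, in which a server combines client parameters weighted by each client's number of local samples. The function name, the flat parameter lists, and the sample counts are hypothetical.

```python
def federated_average(client_weights, client_sizes):
    """Combine per-client parameter vectors, weighted by local sample counts.

    client_weights: list of equally long lists of floats, one per client.
    client_sizes:   number of local training samples held by each client.
    """
    if len(client_weights) != len(client_sizes):
        raise ValueError("one sample count is required per client")
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two hypothetical centers: only parameters leave each center, never images.
center_a = [1.0, 2.0]   # parameters after local training at center A
center_b = [3.0, 4.0]   # parameters after local training at center B
global_model = federated_average([center_a, center_b], client_sizes=[1, 3])
```

In a full training loop, the server would send `global_model` back to every center, each center would continue training on its own patches, and the averaging step would repeat for several rounds.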

Self-supervised learning of a tailored Convolutional Auto Encoder for histopathological prostate grading

Mar 21, 2023

Abstract: According to GLOBOCAN 2020, prostate cancer is the second most common cancer in men worldwide and the fourth most prevalent cancer overall. For pathologists, grading prostate cancer is challenging, especially when discriminating between Grade 3 (G3) and Grade 4 (G4). This paper proposes a Self-Supervised Learning (SSL) framework to classify prostate histopathological images when labeled images are scarce. In particular, a tailored Convolutional Auto Encoder (CAE) is trained to reconstruct 128x128x3 patches of prostate cancer Whole Slide Images (WSIs) as a pretext task. The downstream task of the proposed SSL paradigm is the automatic grading of histopathological patches of prostate cancer. The presented framework reports promising results, obtaining an overall accuracy of 83% on the validation set and an overall accuracy of 76%, with an F1-score of 77% in G4, on the test set.
