Zahra Pooranian

SETTI: A Self-supervised Adversarial Malware Detection Architecture in an IoT Environment

Apr 16, 2022

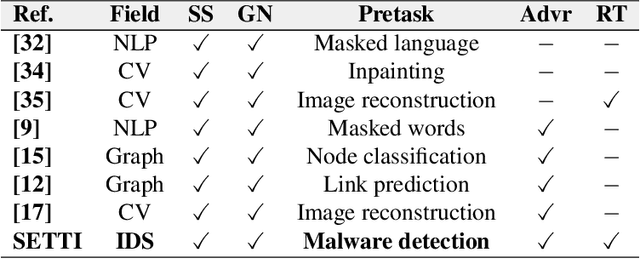

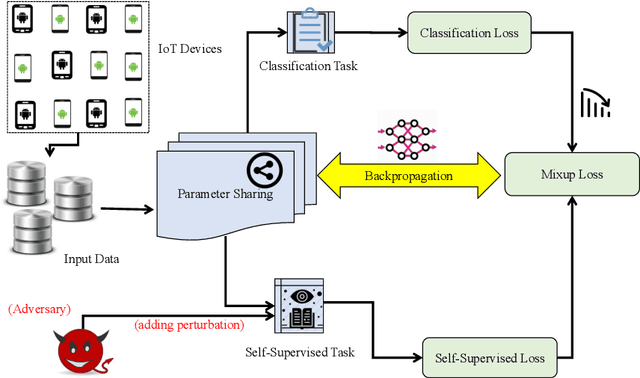

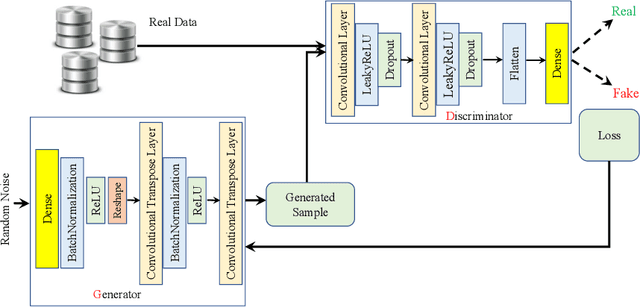

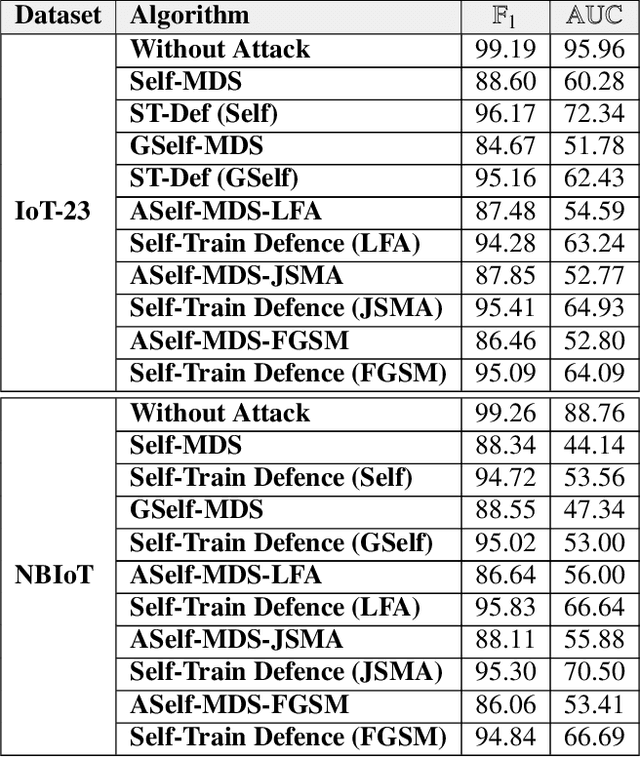

Abstract:In recent years, malware detection has become an active research topic in the area of Internet of Things (IoT) security. The principle is to exploit knowledge from large quantities of continuously generated malware. Existing algorithms practice available malware features for IoT devices and lack real-time prediction behaviors. More research is thus required on malware detection to cope with real-time misclassification of the input IoT data. Motivated by this, in this paper we propose an adversarial self-supervised architecture for detecting malware in IoT networks, SETTI, considering samples of IoT network traffic that may not be labeled. In the SETTI architecture, we design three self-supervised attack techniques, namely Self-MDS, GSelf-MDS and ASelf-MDS. The Self-MDS method considers the IoT input data and the adversarial sample generation in real-time. The GSelf-MDS builds a generative adversarial network model to generate adversarial samples in the self-supervised structure. Finally, ASelf-MDS utilizes three well-known perturbation sample techniques to develop adversarial malware and inject it over the self-supervised architecture. Also, we apply a defence method to mitigate these attacks, namely adversarial self-supervised training to protect the malware detection architecture against injecting the malicious samples. To validate the attack and defence algorithms, we conduct experiments on two recent IoT datasets: IoT23 and NBIoT. Comparison of the results shows that in the IoT23 dataset, the Self-MDS method has the most damaging consequences from the attacker's point of view by reducing the accuracy rate from 98% to 74%. In the NBIoT dataset, the ASelf-MDS method is the most devastating algorithm that can plunge the accuracy rate from 98% to 77%.

Similarity-based Android Malware Detection Using Hamming Distance of Static Binary Features

Aug 13, 2019

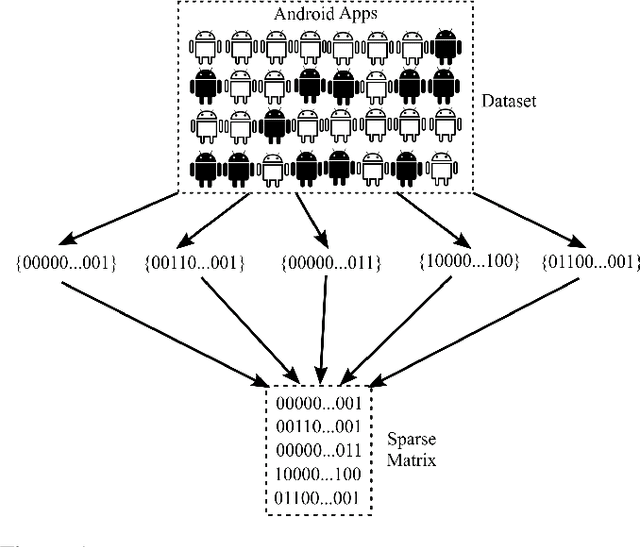

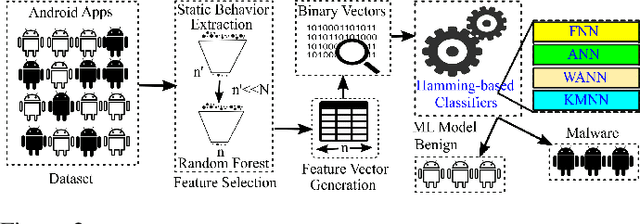

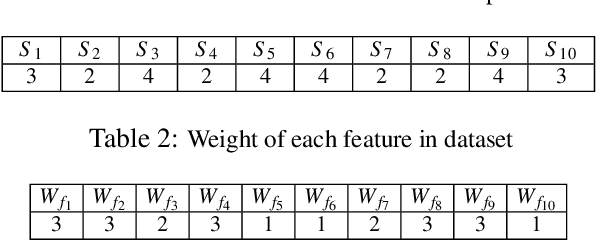

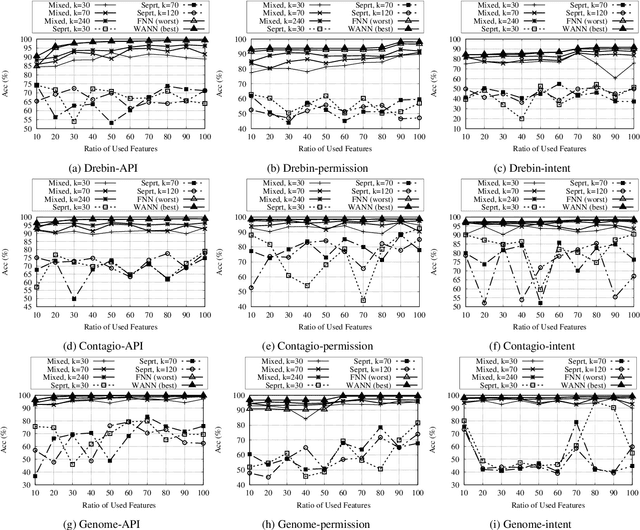

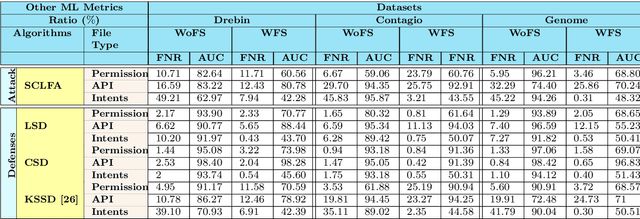

Abstract:In this paper, we develop four malware detection methods using Hamming distance to find similarity between samples which are first nearest neighbors (FNN), all nearest neighbors (ANN), weighted all nearest neighbors (WANN), and k-medoid based nearest neighbors (KMNN). In our proposed methods, we can trigger the alarm if we detect an Android app is malicious. Hence, our solutions help us to avoid the spread of detected malware on a broader scale. We provide a detailed description of the proposed detection methods and related algorithms. We include an extensive analysis to asses the suitability of our proposed similarity-based detection methods. In this way, we perform our experiments on three datasets, including benign and malware Android apps like Drebin, Contagio, and Genome. Thus, to corroborate the actual effectiveness of our classifier, we carry out performance comparisons with some state-of-the-art classification and malware detection algorithms, namely Mixed and Separated solutions, the program dissimilarity measure based on entropy (PDME) and the FalDroid algorithms. We test our experiments in a different type of features: API, intent, and permission features on these three datasets. The results confirm that accuracy rates of proposed algorithms are more than 90% and in some cases (i.e., considering API features) are more than 99%, and are comparable with existing state-of-the-art solutions.

On Defending Against Label Flipping Attacks on Malware Detection Systems

Aug 13, 2019

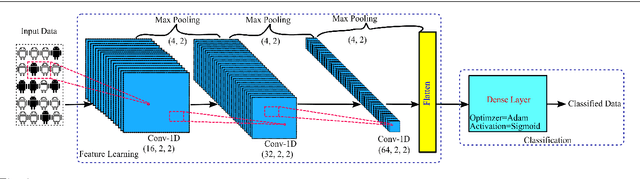

Abstract:Label manipulation attacks are a subclass of data poisoning attacks in adversarial machine learning used against different applications, such as malware detection. These types of attacks represent a serious threat to detection systems in environments having high noise rate or uncertainty, such as complex networks and Internet of Thing (IoT). Recent work in the literature has suggested using the $K$-Nearest Neighboring (KNN) algorithm to defend against such attacks. However, such an approach can suffer from low to wrong detection accuracy. In this paper, we design an architecture to tackle the Android malware detection problem in IoT systems. We develop an attack mechanism based on Silhouette clustering method, modified for mobile Android platforms. We proposed two Convolutional Neural Network (CNN)-type deep learning algorithms against this \emph{Silhouette Clustering-based Label Flipping Attack (SCLFA)}. We show the effectiveness of these two defense algorithms - \emph{Label-based Semi-supervised Defense (LSD)} and \emph{clustering-based Semi-supervised Defense (CSD)} - in correcting labels being attacked. We evaluate the performance of the proposed algorithms by varying the various machine learning parameters on three Android datasets: Drebin, Contagio, and Genome and three types of features: API, intent, and permission. Our evaluation shows that using random forest feature selection and varying ratios of features can result in an improvement of up to 19\% accuracy when compared with the state-of-the-art method in the literature.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge