Yuqi He

A Cyber Manufacturing IoT System for Adaptive Machine Learning Model Deployment by Interactive Causality Enabled Self-Labeling

Apr 09, 2024Abstract:Machine Learning (ML) has been demonstrated to improve productivity in many manufacturing applications. To host these ML applications, several software and Industrial Internet of Things (IIoT) systems have been proposed for manufacturing applications to deploy ML applications and provide real-time intelligence. Recently, an interactive causality enabled self-labeling method has been proposed to advance adaptive ML applications in cyber-physical systems, especially manufacturing, by automatically adapting and personalizing ML models after deployment to counter data distribution shifts. The unique features of the self-labeling method require a novel software system to support dynamism at various levels. This paper proposes the AdaptIoT system, comprised of an end-to-end data streaming pipeline, ML service integration, and an automated self-labeling service. The self-labeling service consists of causal knowledge bases and automated full-cycle self-labeling workflows to adapt multiple ML models simultaneously. AdaptIoT employs a containerized microservice architecture to deliver a scalable and portable solution for small and medium-sized manufacturers. A field demonstration of a self-labeling adaptive ML application is conducted with a makerspace and shows reliable performance.

FlowGNN: A Dataflow Architecture for Universal Graph Neural Network Inference via Multi-Queue Streaming

Apr 27, 2022

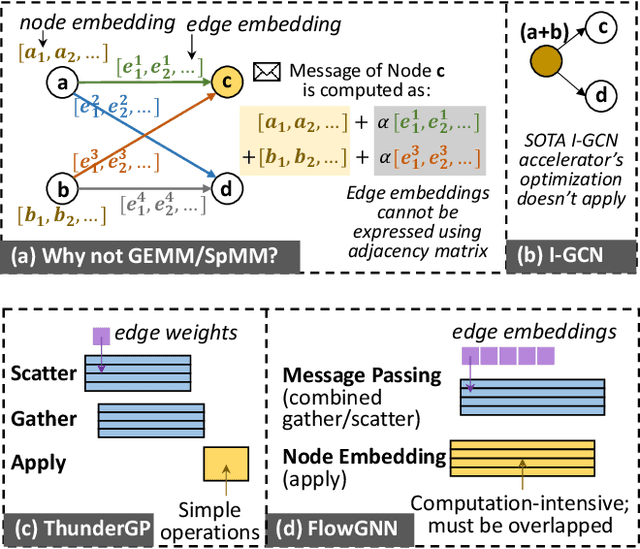

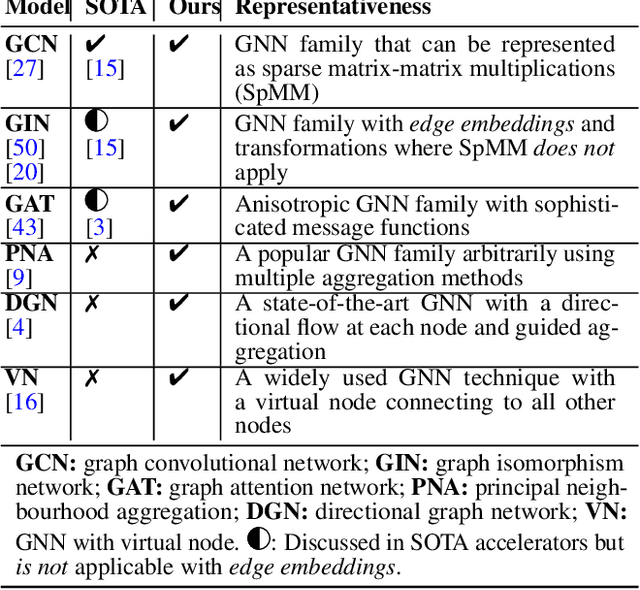

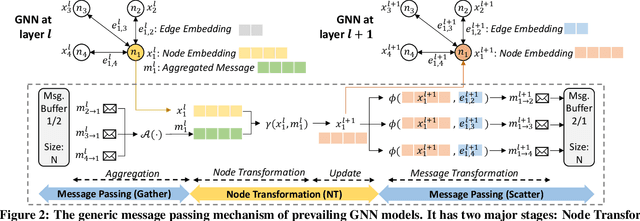

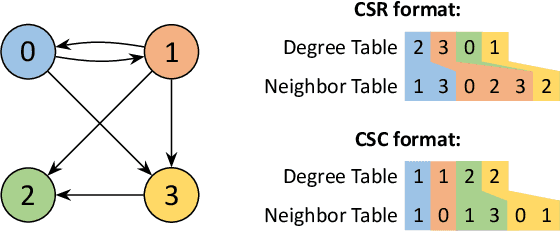

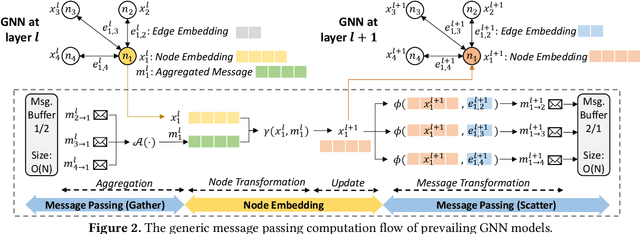

Abstract:Graph neural networks (GNNs) have recently exploded in popularity thanks to their broad applicability to graph-related problems such as quantum chemistry, drug discovery, and high energy physics. However, meeting demand for novel GNN models and fast inference simultaneously is challenging because of the gap between developing efficient accelerators and the rapid creation of new GNN models. Prior art focuses on the acceleration of specific classes of GNNs, such as Graph Convolutional Network (GCN), but lacks the generality to support a wide range of existing or new GNN models. Meanwhile, most work rely on graph pre-processing to exploit data locality, making them unsuitable for real-time applications. To address these limitations, in this work, we propose a generic dataflow architecture for GNN acceleration, named FlowGNN, which can flexibly support the majority of message-passing GNNs. The contributions are three-fold. First, we propose a novel and scalable dataflow architecture, which flexibly supports a wide range of GNN models with message-passing mechanism. The architecture features a configurable dataflow optimized for simultaneous computation of node embedding, edge embedding, and message passing, which is generally applicable to all models. We also propose a rich library of model-specific components. Second, we deliver ultra-fast real-time GNN inference without any graph pre-processing, making it agnostic to dynamically changing graph structures. Third, we verify our architecture on the Xilinx Alveo U50 FPGA board and measure the on-board end-to-end performance. We achieve a speed-up of up to 51-254x against CPU (6226R) and 1.3-477x against GPU (A6000) (with batch sizes 1 through 1024); we also outperform the SOTA GNN accelerator I-GCN by 1.03x and 1.25x across two datasets. Our implementation code and on-board measurement are publicly available on GitHub.

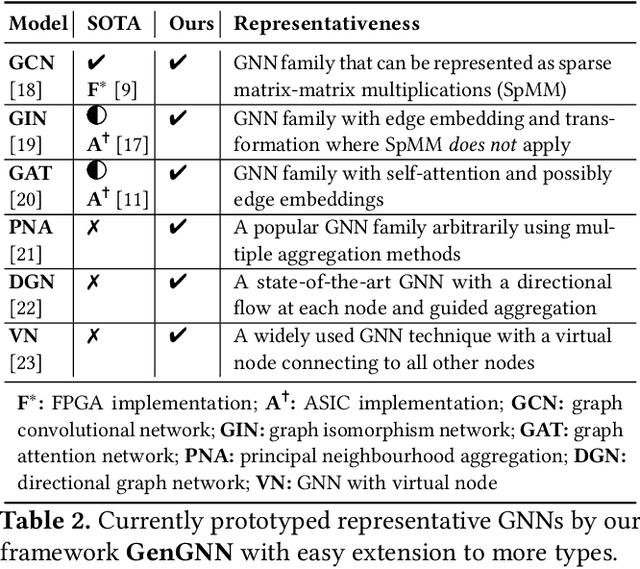

GenGNN: A Generic FPGA Framework for Graph Neural Network Acceleration

Jan 20, 2022

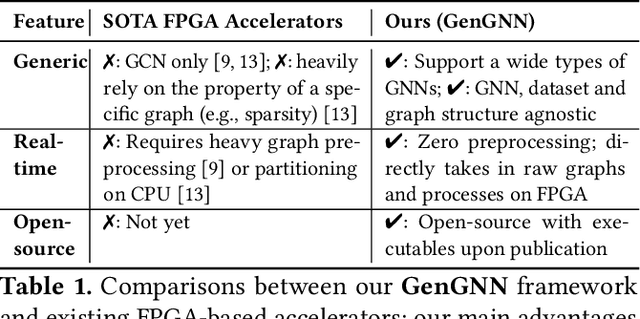

Abstract:Graph neural networks (GNNs) have recently exploded in popularity thanks to their broad applicability to ubiquitous graph-related problems such as quantum chemistry, drug discovery, and high energy physics. However, meeting demand for novel GNN models and fast inference simultaneously is challenging because of the gap between the difficulty in developing efficient FPGA accelerators and the rapid pace of creation of new GNN models. Prior art focuses on the acceleration of specific classes of GNNs but lacks the generality to work across existing models or to extend to new and emerging GNN models. In this work, we propose a generic GNN acceleration framework using High-Level Synthesis (HLS), named GenGNN, with two-fold goals. First, we aim to deliver ultra-fast GNN inference without any graph pre-processing for real-time requirements. Second, we aim to support a diverse set of GNN models with the extensibility to flexibly adapt to new models. The framework features an optimized message-passing structure applicable to all models, combined with a rich library of model-specific components. We verify our implementation on-board on the Xilinx Alveo U50 FPGA and observe a speed-up of up to 25x against CPU (6226R) baseline and 13x against GPU (A6000) baseline. Our HLS code will be open-source on GitHub upon acceptance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge