Yunfei Ge

Humanoid Whole-Body Locomotion on Narrow Terrain via Dynamic Balance and Reinforcement Learning

Feb 24, 2025

Abstract:Humans possess delicate dynamic balance mechanisms that enable them to maintain stability across diverse terrains and under extreme conditions. However, despite significant recent advances, existing locomotion algorithms for humanoid robots still struggle to traverse extreme environments, especially in cases that lack external perception (e.g., vision or LiDAR). This is because current methods often rely on gait-based or perception-condition rewards, lacking effective mechanisms to handle unobservable obstacles and sudden balance loss. To address this challenge, we propose a novel whole-body locomotion algorithm based on dynamic balance and Reinforcement Learning (RL) that enables humanoid robots to traverse extreme terrains, particularly narrow pathways and unexpected obstacles, using only proprioception. Specifically, we introduce a dynamic balance mechanism by leveraging an extended measure of Zero-Moment Point (ZMP)-driven rewards and task-driven rewards in a whole-body actor-critic framework, aiming to achieve coordinated actions of the upper and lower limbs for robust locomotion. Experiments conducted on a full-sized Unitree H1-2 robot verify the ability of our method to maintain balance on extremely narrow terrains and under external disturbances, demonstrating its effectiveness in enhancing the robot's adaptability to complex environments. The videos are given at https://whole-body-loco.github.io.

The Game-Theoretic Symbiosis of Trust and AI in Networked Systems

Nov 19, 2024

Abstract:This chapter explores the symbiotic relationship between Artificial Intelligence (AI) and trust in networked systems, focusing on how these two elements reinforce each other in strategic cybersecurity contexts. AI's capabilities in data processing, learning, and real-time response offer unprecedented support for managing trust in dynamic, complex networks. However, the successful integration of AI also hinges on the trustworthiness of AI systems themselves. Using a game-theoretic framework, this chapter presents approaches to trust evaluation, the strategic role of AI in cybersecurity, and governance frameworks that ensure responsible AI deployment. We investigate how trust, when dynamically managed through AI, can form a resilient security ecosystem. By examining trust as both an AI output and an AI requirement, this chapter sets the foundation for a positive feedback loop where AI enhances network security and the trust placed in AI systems fosters their adoption.

ADAPT: A Game-Theoretic and Neuro-Symbolic Framework for Automated Distributed Adaptive Penetration Testing

Oct 31, 2024

Abstract:The integration of AI into modern critical infrastructure systems, such as healthcare, has introduced new vulnerabilities that can significantly impact workflow, efficiency, and safety. Additionally, the increased connectivity has made traditional human-driven penetration testing insufficient for assessing risks and developing remediation strategies. Consequently, there is a pressing need for a distributed, adaptive, and efficient automated penetration testing framework that not only identifies vulnerabilities but also provides countermeasures to enhance security posture. This work presents ADAPT, a game-theoretic and neuro-symbolic framework for automated distributed adaptive penetration testing, specifically designed to address the unique cybersecurity challenges of AI-enabled healthcare infrastructure networks. We use a healthcare system case study to illustrate the methodologies within ADAPT. The proposed solution enables a learning-based risk assessment. Numerical experiments are used to demonstrate effective countermeasures against various tactical techniques employed by adversarial AI.

MEGA-PT: A Meta-Game Framework for Agile Penetration Testing

Sep 21, 2024

Abstract:Penetration testing is an essential means of proactive defense in the face of escalating cybersecurity incidents. Traditional manual penetration testing methods are time-consuming, resource-intensive, and prone to human errors. Current trends in automated penetration testing are also impractical, facing significant challenges such as the curse of dimensionality, scalability issues, and lack of adaptability to network changes. To address these issues, we propose MEGA-PT, a meta-game penetration testing framework, featuring micro tactic games for node-level local interactions and a macro strategy process for network-wide attack chains. The micro- and macro-level modeling enables distributed, adaptive, collaborative, and fast penetration testing. MEGA-PT offers agile solutions for various security schemes, including optimal local penetration plans, purple teaming solutions, and risk assessment, providing fundamental principles to guide future automated penetration testing. Our experiments demonstrate the effectiveness and agility of our model by providing improved defense strategies and adaptability to changes at both local and network levels.

Attributing Responsibility in AI-Induced Incidents: A Computational Reflective Equilibrium Framework for Accountability

Apr 25, 2024

Abstract:The pervasive integration of Artificial Intelligence (AI) has introduced complex challenges in the responsibility and accountability in the event of incidents involving AI-enabled systems. The interconnectivity of these systems, ethical concerns of AI-induced incidents, coupled with uncertainties in AI technology and the absence of corresponding regulations, have made traditional responsibility attribution challenging. To this end, this work proposes a Computational Reflective Equilibrium (CRE) approach to establish a coherent and ethically acceptable responsibility attribution framework for all stakeholders. The computational approach provides a structured analysis that overcomes the limitations of conceptual approaches in dealing with dynamic and multifaceted scenarios, showcasing the framework's explainability, coherence, and adaptivity properties in the responsibility attribution process. We examine the pivotal role of the initial activation level associated with claims in equilibrium computation. Using an AI-assisted medical decision-support system as a case study, we illustrate how different initializations lead to diverse responsibility distributions. The framework offers valuable insights into accountability in AI-induced incidents, facilitating the development of a sustainable and resilient system through continuous monitoring, revision, and reflection.

AI Liability Insurance With an Example in AI-Powered E-diagnosis System

Jun 01, 2023

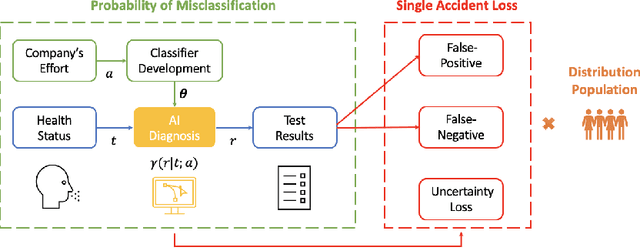

Abstract:Artificial Intelligence (AI) has received an increasing amount of attention in multiple areas. The uncertainties and risks in AI-powered systems have created reluctance in their wide adoption. As an economic solution to compensate for potential damages, AI liability insurance is a promising market to enhance the integration of AI into daily life. In this work, we use an AI-powered E-diagnosis system as an example to study AI liability insurance. We provide a quantitative risk assessment model with evidence-based numerical analysis. We discuss the insurability criteria for AI technologies and suggest necessary adjustments to accommodate the features of AI products. We show that AI liability insurance can act as a regulatory mechanism to incentivize compliant behaviors and serve as a certificate of high-quality AI systems. Furthermore, we suggest premium adjustment to reflect the dynamic evolution of the inherent uncertainty in AI. Moral hazard problems are discussed and suggestions for AI liability insurance are provided.

Scenario-Agnostic Zero-Trust Defense with Explainable Threshold Policy: A Meta-Learning Approach

Mar 06, 2023

Abstract:The increasing connectivity and intricate remote access environment have made traditional perimeter-based network defense vulnerable. Zero trust becomes a promising approach to provide defense policies based on agent-centric trust evaluation. However, the limited observations of the agent's trace bring information asymmetry in the decision-making. To facilitate the human understanding of the policy and the technology adoption, one needs to create a zero-trust defense that is explainable to humans and adaptable to different attack scenarios. To this end, we propose a scenario-agnostic zero-trust defense based on Partially Observable Markov Decision Processes (POMDP) and first-order Meta-Learning using only a handful of sample scenarios. The framework leads to an explainable and generalizable trust-threshold defense policy. To address the distribution shift between empirical security datasets and reality, we extend the model to a robust zero-trust defense minimizing the worst-case loss. We use case studies and real-world attacks to corroborate the results.
