Yufan Liao

Invariant Random Forest: Tree-Based Model Solution for OOD Generalization

Dec 20, 2023

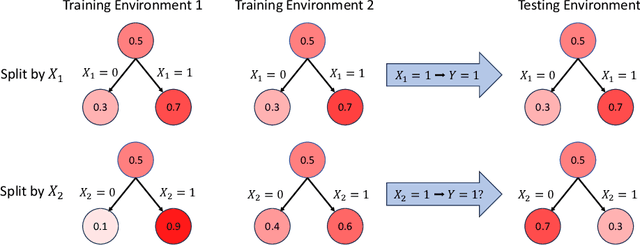

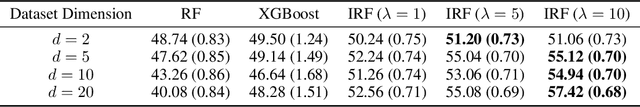

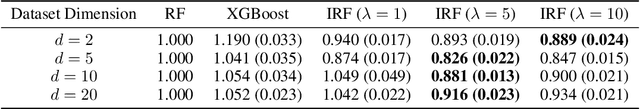

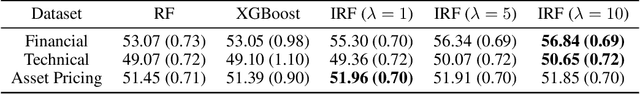

Abstract: Out-of-distribution (OOD) generalization is an essential topic in machine learning. However, recent research has focused almost exclusively on methods for neural networks. This paper introduces a novel and effective solution for OOD generalization of decision tree models, named Invariant Decision Tree (IDT). During tree growth, IDT applies a penalty term to splits whose behavior is unstable or varies across environments. Its ensemble version, the Invariant Random Forest (IRF), is also constructed. The proposed method is motivated by a theoretical result that holds under mild conditions, and it is validated by numerical tests on both synthetic and real datasets. Its superior performance compared to non-OOD tree models implies that OOD generalization for tree models is necessary and deserves more attention.
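The idea of penalizing splits that behave differently across environments can be illustrated with a minimal sketch. This is not the paper's formulation: the abstract does not state the exact penalty, so the function `split_score`, the weight `lam`, and the choice of penalty (squared gap between each environment's child-node label rate and the pooled rate) are all assumptions made for illustration.

```python
from statistics import mean


def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2.0 * p * (1.0 - p)


def split_score(xs, ys, envs, threshold, lam=1.0):
    """Penalized impurity of splitting one feature at `threshold`.

    Lower is better. The penalty is a hypothetical stand-in for an
    invariance penalty, not the one defined in the paper.
    """
    sides = (lambda x: x <= threshold, lambda x: x > threshold)

    # Standard weighted Gini impurity of the two children (pooled data).
    impurity = 0.0
    for side in sides:
        child = [y for x, y in zip(xs, ys) if side(x)]
        impurity += len(child) * gini(child) / len(ys)

    # Assumed penalty: how far each environment's label rate in a child
    # deviates from the pooled label rate in that same child.
    penalty = 0.0
    for side in sides:
        pooled = [y for x, y in zip(xs, ys) if side(x)]
        if not pooled:
            continue
        pooled_rate = mean(pooled)
        for env in set(envs):
            in_env = [y for x, y, e in zip(xs, ys, envs)
                      if e == env and side(x)]
            if in_env:
                penalty += (mean(in_env) - pooled_rate) ** 2

    return impurity + lam * penalty
```

Under this sketch, a feature whose relationship to the label is the same in every environment incurs no penalty, while a feature whose relationship flips between environments is scored worse as `lam` grows.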

Decorr: Environment Partitioning for Invariant Learning and OOD Generalization

Nov 18, 2022

Abstract: Invariant learning methods try to find an invariant predictor across several environments and have become popular in OOD generalization. However, when environments do not naturally exist in the data, practitioners have to define them manually. Environment partitioning, which algorithmically splits the whole training dataset into environments, significantly influences the performance of invariant learning but has been left undiscussed. A good environment partitioning method can bring invariant learning to applications with more general settings and improve its performance. We propose to split the dataset into several environments by finding low-correlated data subsets. Both theoretical interpretations and algorithm details are presented in the paper. Through experiments on both synthetic and real data, we show that our Decorr method can achieve outstanding performance, while some other partitioning methods may lead to poor results, even below ERM, under the same IRM training scheme.
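To make "low-correlated data subsets" concrete, the following minimal sketch scores a candidate partition by how strongly the features are correlated inside each subset. It is an illustration only: the abstract does not give the Decorr objective or its optimization procedure, so the names `decorrelation_cost` and `partition_cost` and the choice of summed squared Pearson correlations are assumptions.

```python
from itertools import combinations
from math import sqrt


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if constant)."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def decorrelation_cost(rows):
    """Sum of squared pairwise feature correlations within one subset."""
    columns = list(zip(*rows))
    return sum(pearson(columns[i], columns[j]) ** 2
               for i, j in combinations(range(len(columns)), 2))


def partition_cost(rows, assignment):
    """Total cost of a partition; assignment[i] is the subset id of rows[i]."""
    groups = {}
    for row, group_id in zip(rows, assignment):
        groups.setdefault(group_id, []).append(row)
    return sum(decorrelation_cost(group) for group in groups.values())
```

A search procedure (not shown, and not specified by the abstract) would then prefer assignments with a lower `partition_cost`, that is, environments in which features are closer to uncorrelated.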

The Brain-Inspired Decoder for Natural Visual Image Reconstruction

Jul 18, 2022

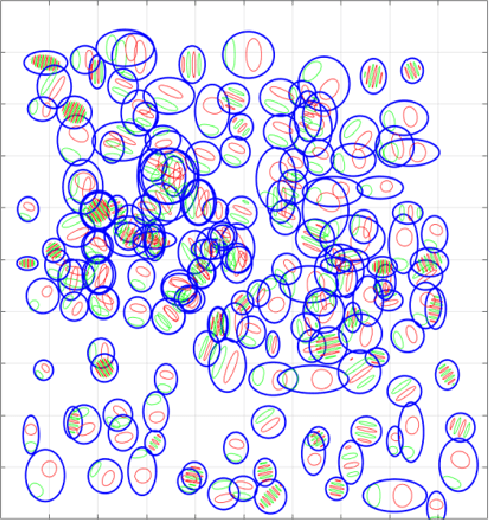

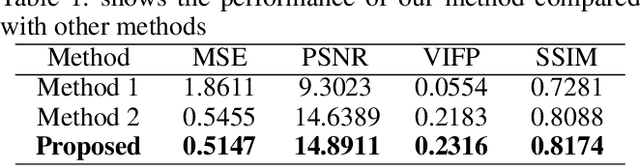

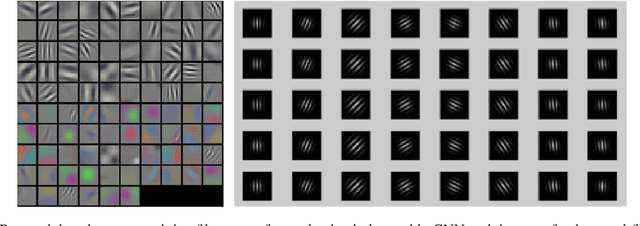

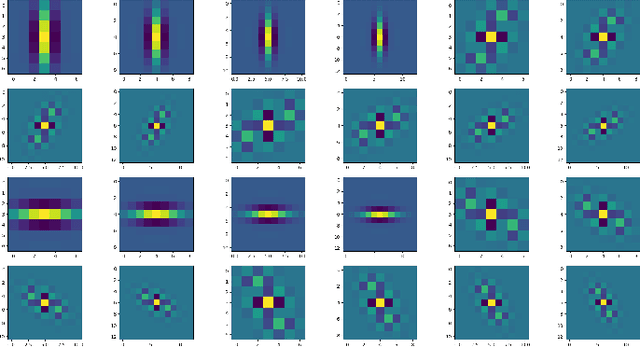

Abstract: Decoding images from brain activity has long been a challenge. Owing to the development of deep learning, tools are now available to address this problem. Image decoding aims to map neural spike trains to a low-level visual feature space and a high-level semantic information space. A few recent studies decode from spike trains; however, these studies pay less attention to the foundations of neuroscience, and few incorporate receptive fields into visual image reconstruction. In this paper, we propose a deep learning neural network architecture with biological properties to reconstruct visual images from spike trains. To the best of our knowledge, this is the first method to integrate a receptive field property matrix into the loss function. Our model is an end-to-end decoder from neural spike trains to images. We not only merge a Gabor filter into the auto-encoder used to generate images but also propose a loss function with receptive field properties. We evaluate our decoder on two datasets, which contain macaque primary visual cortex neural spikes and salamander retinal ganglion cell (RGC) spikes. Our results show that our method can effectively combine receptive field features to reconstruct images, providing a new approach to visual reconstruction based on neural information.
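For readers unfamiliar with the Gabor filter mentioned above, here is a minimal sketch of the standard real-valued 2D Gabor kernel: a Gaussian envelope multiplied by a cosine carrier. It shows the filter only. How the paper wires it into the auto-encoder or the loss function is not described in the abstract, and the function name `gabor_kernel` and its parameter names are illustrative choices.

```python
from math import cos, exp, pi, sin


def gabor_kernel(size, sigma, theta, wavelength, gamma=1.0, psi=0.0):
    """Return a size x size Gabor kernel as a list of rows.

    size       -- odd kernel width and height in pixels
    sigma      -- standard deviation of the Gaussian envelope
    theta      -- orientation of the carrier, in radians
    wavelength -- wavelength of the cosine carrier, in pixels
    gamma      -- spatial aspect ratio of the envelope
    psi        -- phase offset of the carrier, in radians
    """
    if size % 2 == 0:
        raise ValueError("size must be odd so the kernel has a center pixel")
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Rotate coordinates so the carrier runs along direction theta.
            x_rot = x * cos(theta) + y * sin(theta)
            y_rot = -x * sin(theta) + y * cos(theta)
            envelope = exp(-(x_rot ** 2 + (gamma * y_rot) ** 2)
                           / (2.0 * sigma ** 2))
            carrier = cos(2.0 * pi * x_rot / wavelength + psi)
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel
```

Kernels of this form are commonly used as models of orientation-selective receptive fields in primary visual cortex, which is presumably why they are a natural fit for a decoder with receptive field properties.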
