Yuanxi Ma

Hair Segmentation on Time-of-Flight RGBD Images

Mar 11, 2019

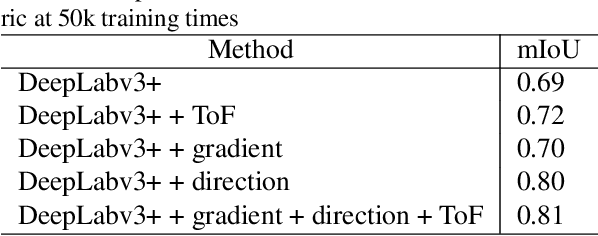

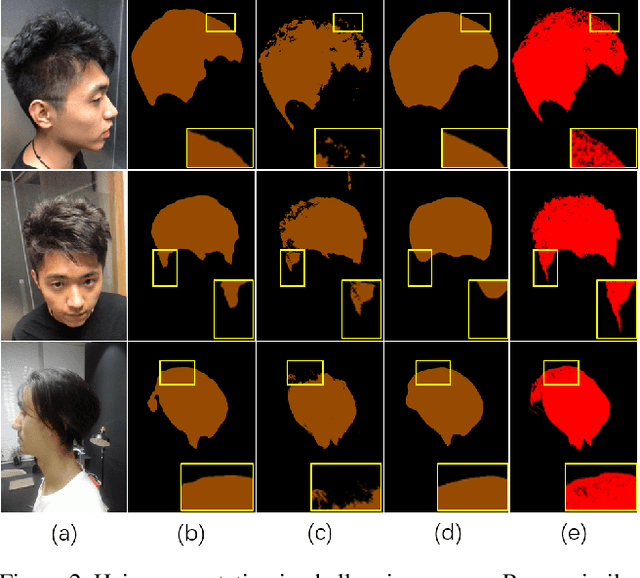

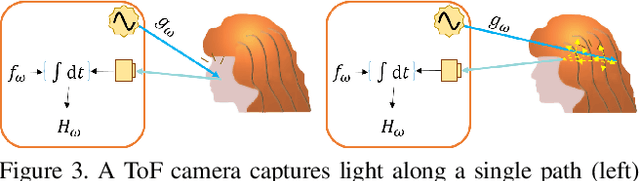

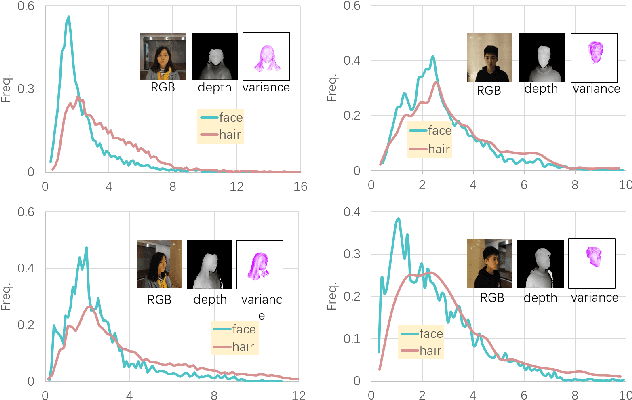

Abstract: Robust segmentation of hair from portrait images remains challenging: hair does not conform to a uniform shape, style, or even color, and dark hair in particular lacks distinctive features. We present a novel computational imaging solution that tackles the problem on both the input and processing fronts. We explore using Time-of-Flight (ToF) RGBD sensors on recent mobile devices. We first conduct a comprehensive analysis to show that scattering and inter-reflection produce different noise patterns in hair and non-hair regions of ToF images, by changing the light path and/or combining multiple paths. We then develop a deep-network-based approach that uses both the ToF depth map and the RGB gradient maps to produce an initial hair segmentation with labeled hair components. We refine the result by imposing a ToF noise prior within a conditional random field. We collect the first ToF RGBD hair dataset, with 20k+ head images captured from 30 human subjects with a variety of hairstyles at different view angles. Comprehensive experiments show that our approach outperforms RGB-based techniques in accuracy and robustness, and that it handles traditionally challenging cases such as dark hair, hair similar to the background, and hair similar to the foreground.
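As a rough illustration of the input side described in the abstract, the sketch below builds a per-pixel feature stack from a ToF depth map and RGB gradient maps. The function names (`rgb_to_gray`, `gradient_maps`, `build_input`), the luma weights, the finite-difference gradients, and the channel order are all assumptions made for this example; the abstract does not specify how the gradient maps are computed or how they are fed to the network. It is written without dependencies for readability, whereas a real pipeline would use an array library.

```python
def rgb_to_gray(rgb):
    """rgb: H x W nested list of (r, g, b) tuples with values in [0, 1]."""
    # Standard luma weights; an assumption, not taken from the paper.
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
            for row in rgb]


def gradient_maps(gray):
    """Return (gx, gy): central differences inside, one-sided at borders."""
    h, w = len(gray), len(gray[0])
    gx = [[0.0] * w for _ in range(h)]
    gy = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            x0, x1 = max(x - 1, 0), min(x + 1, w - 1)
            y0, y1 = max(y - 1, 0), min(y + 1, h - 1)
            # Divide by the actual pixel span (guarded for 1-pixel-wide images).
            gx[y][x] = (gray[y][x1] - gray[y][x0]) / max(x1 - x0, 1)
            gy[y][x] = (gray[y1][x] - gray[y0][x]) / max(y1 - y0, 1)
    return gx, gy


def build_input(rgb, depth):
    """Stack (depth, gx, gy) per pixel as a hypothetical network input."""
    gx, gy = gradient_maps(rgb_to_gray(rgb))
    h, w = len(depth), len(depth[0])
    return [[(depth[y][x], gx[y][x], gy[y][x]) for x in range(w)]
            for y in range(h)]


# Tiny example: a 2 x 4 horizontal intensity ramp with constant depth.
rgb = [[(0.25 * x, 0.25 * x, 0.25 * x) for x in range(4)] for _ in range(2)]
depth = [[1.5] * 4 for _ in range(2)]
features = build_input(rgb, depth)
```

In this toy input the horizontal gradient is constant and the vertical gradient is zero, so any structure a model sees in the depth channel would come from the sensor itself, which is where the abstract locates the hair-specific noise patterns.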

Semantic See-Through Rendering on Light Fields

Mar 26, 2018

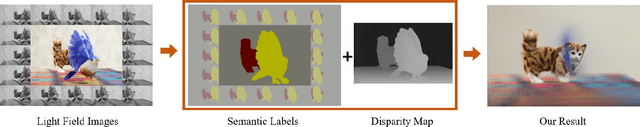

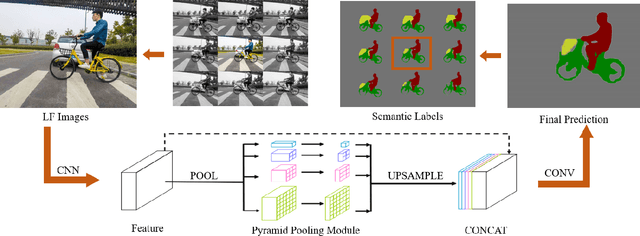

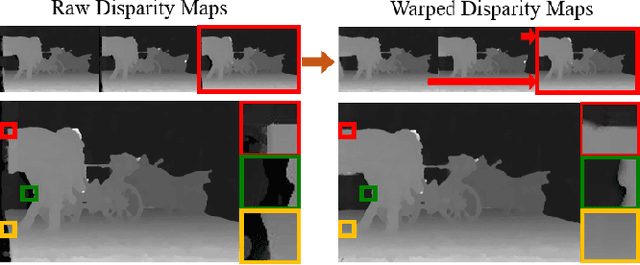

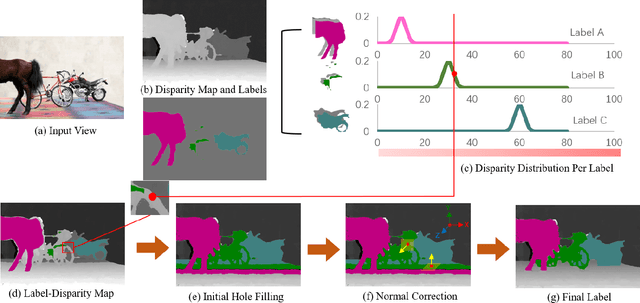

Abstract: We present a novel semantic light field (LF) refocusing technique that achieves unprecedented see-through quality. Unlike prior art, our semantic see-through (SST) technique differentiates rays by both their semantic meaning and their depth. Specifically, we combine deep learning and stereo matching to assign each ray a semantic label. We then design tailored weighting schemes for blending the rays. Although simple, our solution effectively removes foreground residues when focusing on the background. At the same time, SST maintains smooth transitions across varying focal depths. Comprehensive experiments on synthetic data and on new real indoor and outdoor datasets demonstrate the effectiveness and usefulness of our technique.
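The idea of blending rays with weights that depend on both semantic label and depth can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's weighting scheme: the Gaussian depth falloff, the constant `off_label_weight`, the parameter `sigma`, and the function name `blend_rays` are all hypothetical choices made for this example.

```python
import math


def blend_rays(rays, focal_depth, target_label, sigma=0.5, off_label_weight=0.05):
    """Blend the rays that hit one output pixel.

    rays: list of (color, depth, label), where color is an (r, g, b) tuple.
    Each ray's weight is the product of a depth term (Gaussian around the
    focal depth) and a semantic term (1 for the target label, a small
    constant otherwise). Both terms are illustrative assumptions.
    """
    acc = [0.0, 0.0, 0.0]
    total = 0.0
    for color, depth, label in rays:
        w_depth = math.exp(-((depth - focal_depth) ** 2) / (2.0 * sigma ** 2))
        w_sem = 1.0 if label == target_label else off_label_weight
        w = w_depth * w_sem
        total += w
        for c in range(3):
            acc[c] += w * color[c]
    if total <= 0.0:
        # Every weight vanished: fall back to a plain average of the rays.
        n = len(rays)
        return tuple(sum(color[c] for color, _, _ in rays) / n for c in range(3))
    return tuple(a / total for a in acc)


# Two background rays (red, depth 5) and one foreground occluder (green, depth 1).
rays = [
    ((1.0, 0.0, 0.0), 5.0, "background"),
    ((1.0, 0.0, 0.0), 5.0, "background"),
    ((0.0, 1.0, 0.0), 1.0, "foreground"),
]
focused = blend_rays(rays, focal_depth=5.0, target_label="background")
strict = blend_rays(rays, focal_depth=5.0, target_label="background",
                    off_label_weight=0.0)
```

With a uniform average the occluder would contribute a third of the pixel's color. Here it is suppressed twice, once by its distance from the focal depth and once by its label, which is the mechanism the abstract credits for removing foreground residue.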
