Yosra Marnissi

Learnable Adaptive Time-Frequency Representation via Differentiable Short-Time Fourier Transform

Jun 26, 2025Abstract:The short-time Fourier transform (STFT) is widely used for analyzing non-stationary signals. However, its performance is highly sensitive to its parameters, and manual or heuristic tuning often yields suboptimal results. To overcome this limitation, we propose a unified differentiable formulation of the STFT that enables gradient-based optimization of its parameters. This approach addresses the limitations of traditional STFT parameter tuning methods, which often rely on computationally intensive discrete searches. It enables fine-tuning of the time-frequency representation (TFR) based on any desired criterion. Moreover, our approach integrates seamlessly with neural networks, allowing joint optimization of the STFT parameters and network weights. The efficacy of the proposed differentiable STFT in enhancing TFRs and improving performance in downstream tasks is demonstrated through experiments on both simulated and real-world data.

Differentiable adaptive short-time Fourier transform with respect to the window length

Jul 26, 2023

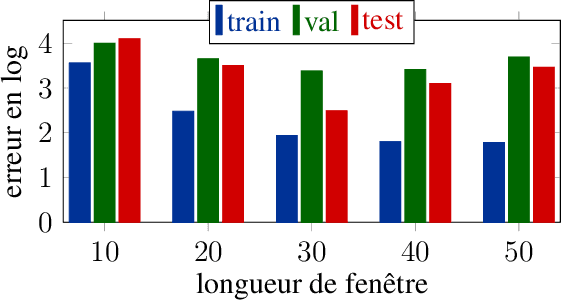

Abstract:This paper presents a gradient-based method for on-the-fly optimization for both per-frame and per-frequency window length of the short-time Fourier transform (STFT), related to previous work in which we developed a differentiable version of STFT by making the window length a continuous parameter. The resulting differentiable adaptive STFT possesses commendable properties, such as the ability to adapt in the same time-frequency representation to both transient and stationary components, while being easily optimized by gradient descent. We validate the performance of our method in vibration analysis.

Differentiable short-time Fourier transform with respect to the hop length

Jul 26, 2023

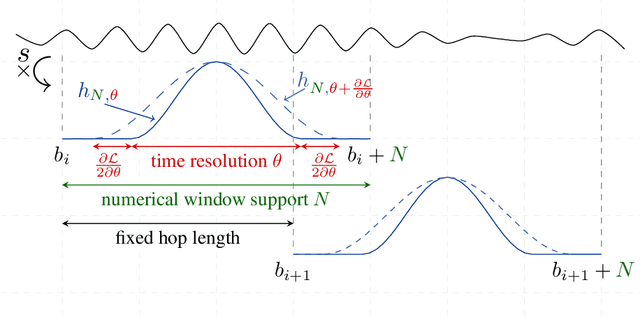

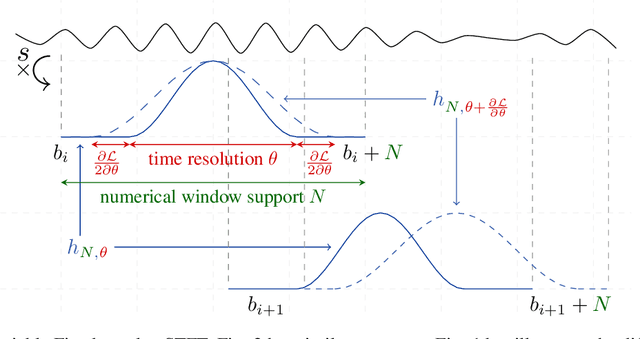

Abstract:In this paper, we propose a differentiable version of the short-time Fourier transform (STFT) that allows for gradient-based optimization of the hop length or the frame temporal position by making these parameters continuous. Our approach provides improved control over the temporal positioning of frames, as the continuous nature of the hop length allows for a more finely-tuned optimization. Furthermore, our contribution enables the use of optimization methods such as gradient descent, which are more computationally efficient than conventional discrete optimization methods. Our differentiable STFT can also be easily integrated into existing algorithms and neural networks. We present a simulated illustration to demonstrate the efficacy of our approach and to garner interest from the research community.

A differentiable short-time Fourier transform with respect to the window length

Aug 25, 2022

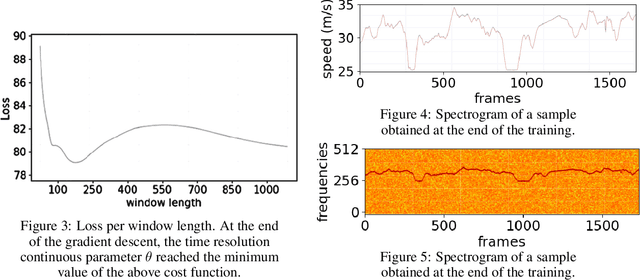

Abstract:In this paper, we revisit the use of spectrograms in neural networks, by making the window length a continuous parameter optimizable by gradient descent instead of an empirically tuned integer-valued hyperparameter. The contribution is mostly theoretical at this point, but plugging the modified STFT into any existing neural network is straightforward. We first define a differentiable version of the STFT in the case where local bins centers are fixed and independent of the window length parameter. We then discuss the more difficult case where the window length affects the position and number of bins. We illustrate the benefits of this new tool on an estimation and a classification problems, showing it can be of interest not only to neural networks but to any STFT-based signal processing algorithm.

A Variational Bayesian Approach for Image Restoration. Application to Image Deblurring with Poisson-Gaussian Noise

Jan 20, 2017

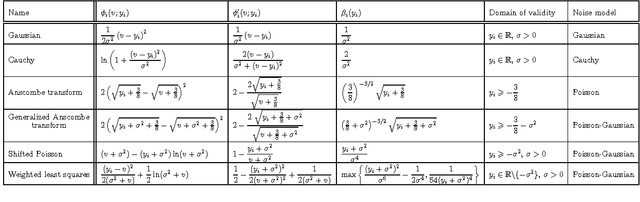

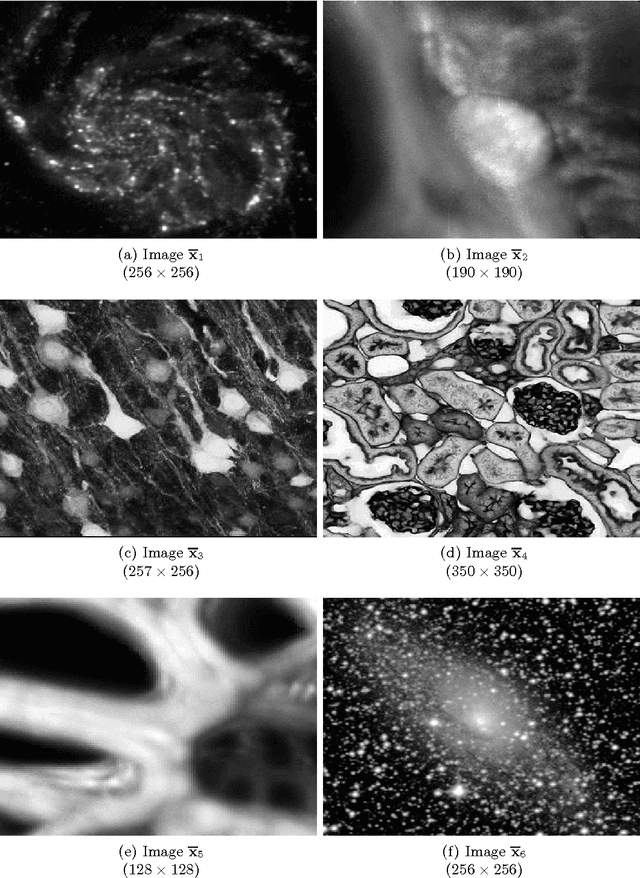

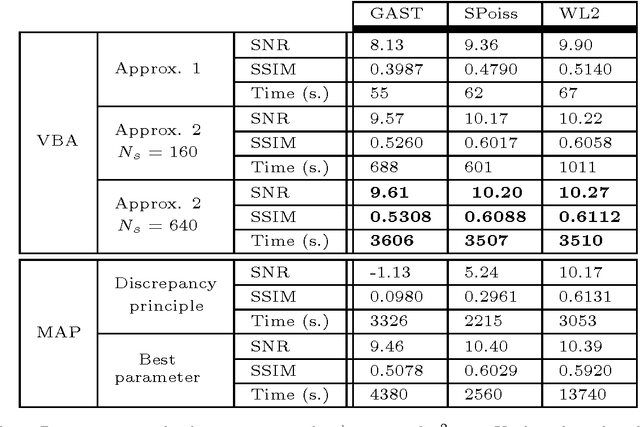

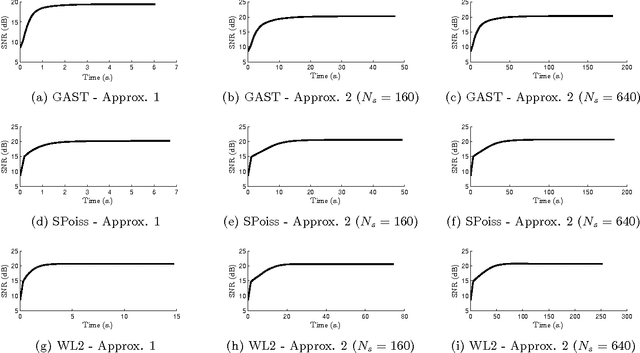

Abstract:In this paper, a methodology is investigated for signal recovery in the presence of non-Gaussian noise. In contrast with regularized minimization approaches often adopted in the literature, in our algorithm the regularization parameter is reliably estimated from the observations. As the posterior density of the unknown parameters is analytically intractable, the estimation problem is derived in a variational Bayesian framework where the goal is to provide a good approximation to the posterior distribution in order to compute posterior mean estimates. Moreover, a majorization technique is employed to circumvent the difficulties raised by the intricate forms of the non-Gaussian likelihood and of the prior density. We demonstrate the potential of the proposed approach through comparisons with state-of-the-art techniques that are specifically tailored to signal recovery in the presence of mixed Poisson-Gaussian noise. Results show that the proposed approach is efficient and achieves performance comparable with other methods where the regularization parameter is manually tuned from the ground truth.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge