Yifeng Guo

ERNIE 5.0 Technical Report

Feb 04, 2026

Abstract: In this report, we introduce ERNIE 5.0, a natively autoregressive foundation model designed for unified multimodal understanding and generation across text, image, video, and audio. All modalities are trained from scratch under a unified next-group-of-tokens prediction objective, based on an ultra-sparse mixture-of-experts (MoE) architecture with modality-agnostic expert routing. To address practical challenges in large-scale deployment under diverse resource constraints, ERNIE 5.0 adopts a novel elastic training paradigm. Within a single pre-training run, the model learns a family of sub-models with varying depths, expert capacities, and routing sparsity, enabling flexible trade-offs among performance, model size, and inference latency in memory- or time-constrained scenarios. Moreover, we systematically address the challenges of scaling reinforcement learning to unified foundation models, thereby guaranteeing efficient and stable post-training under ultra-sparse MoE architectures and diverse multimodal settings. Extensive experiments demonstrate that ERNIE 5.0 achieves strong and balanced performance across multiple modalities. To the best of our knowledge, among publicly disclosed models, ERNIE 5.0 represents the first production-scale realization of a trillion-parameter unified autoregressive model that supports both multimodal understanding and generation. To facilitate further research, we present detailed visualizations of modality-agnostic expert routing in the unified model, alongside comprehensive empirical analysis of elastic training, aiming to offer profound insights to the community.

AI-Driven Prediction of Cancer Pain Episodes: A Hybrid Decision Support Approach

Dec 18, 2025

Abstract: Lung cancer patients frequently experience breakthrough pain episodes, with up to 91% requiring timely intervention. To enable proactive pain management, we propose a hybrid machine learning and large language model pipeline that predicts pain episodes within 48 and 72 hours of hospitalization using both structured and unstructured electronic health record data. A retrospective cohort of 266 inpatients was analyzed, with features including demographics, tumor stage, vital signs, and WHO-tiered analgesic use. The machine learning module captured temporal medication trends, while the large language model interpreted ambiguous dosing records and free-text clinical notes. Integrating these modalities improved sensitivity and interpretability. Our framework achieved accuracies of 0.874 (48h) and 0.917 (72h), with sensitivity improvements of 8.6% and 10.4%, respectively, attributable to the large language model augmentation. This hybrid approach offers a clinically interpretable and scalable tool for early pain episode forecasting, with potential to enhance treatment precision and optimize resource allocation in oncology care.

Explainable Recommendation Systems by Generalized Additive Models with Manifest and Latent Interactions

Dec 15, 2020

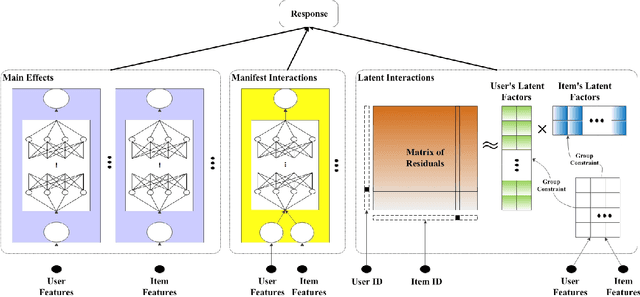

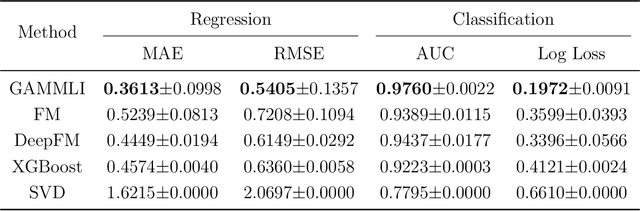

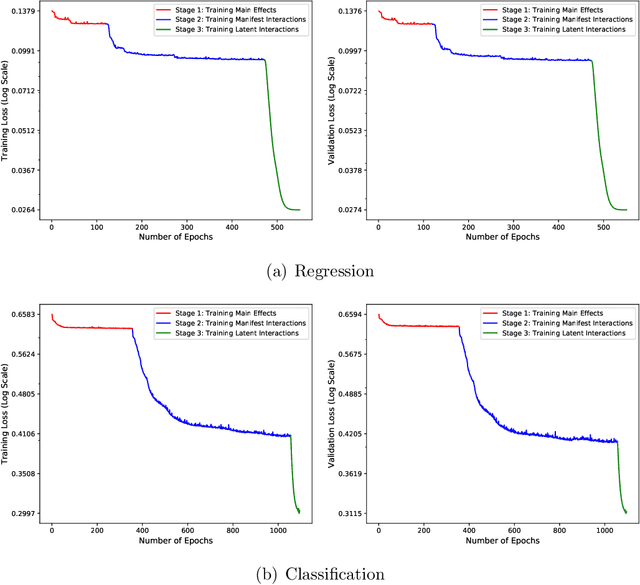

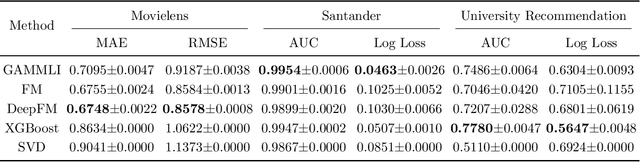

Abstract: In recent years, the field of recommendation systems has paid increasing attention to developing predictive models that explain why an item is recommended to a user. The explanations can be either obtained by post-hoc diagnostics after fitting a relatively complex model or embedded into an intrinsically interpretable model. In this paper, we propose explainable recommendation systems based on a generalized additive model with manifest and latent interactions (GAMMLI). This model architecture is intrinsically interpretable, as it additively consists of the user and item main effects, the manifest user-item interactions based on observed features, and the latent interaction effects from residuals. Unlike conventional collaborative filtering methods, GAMMLI considers the group effects of users and items. This enhances model interpretability and can also help with the cold-start recommendation problem. A new Python package, GAMMLI, is developed for efficient model training and visualized interpretation of the results. In numerical experiments on simulated data and real-world cases, the proposed method is shown to have advantages in both predictive performance and explainable recommendation.

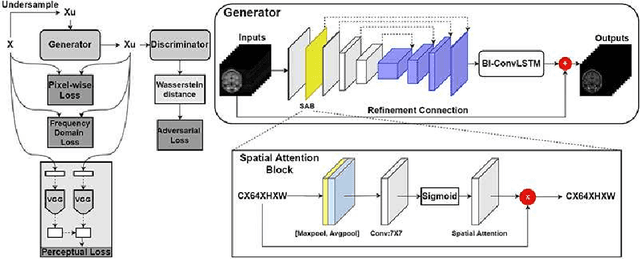

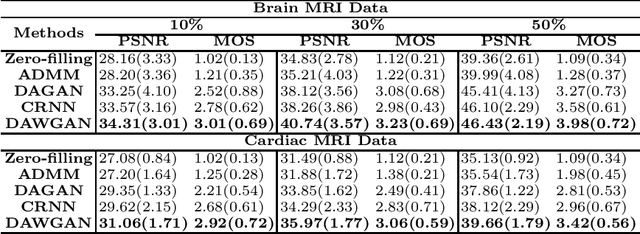

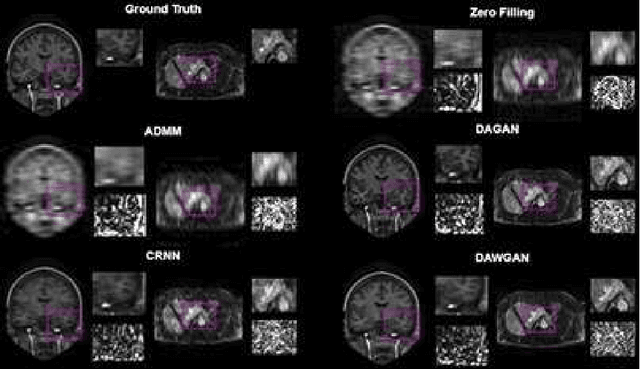

Deep Attentive Wasserstein Generative Adversarial Networks for MRI Reconstruction with Recurrent Context-Awareness

Jun 23, 2020

Abstract: The performance of traditional compressive sensing-based MRI (CS-MRI) reconstruction is affected by its slow iterative procedure and noise-induced artefacts. Although many deep learning-based CS-MRI methods have been proposed to mitigate the problems of traditional methods, they have not been able to achieve more robust results at higher acceleration factors. Most deep learning-based CS-MRI methods still cannot fully mine the information from the k-space, which leads to unsatisfactory results in MRI reconstruction. In this study, we propose a new deep learning-based CS-MRI reconstruction method that fully utilises the relationship among sequential MRI slices by coupling Wasserstein Generative Adversarial Networks (WGAN) with Recurrent Neural Networks. Further development of an attentive unit enables our model to reconstruct more accurate anatomical structures for the MRI data. By experimenting on different MRI datasets, we have demonstrated that our method can not only achieve better results than state-of-the-art methods but can also effectively reduce residual noise generated during the reconstruction process.
