Yidong Zhang

Balanced Anomaly-guided Ego-graph Diffusion Model for Inductive Graph Anomaly Detection

Feb 05, 2026

Abstract:Graph anomaly detection (GAD) is crucial in applications like fraud detection and cybersecurity. Despite recent advancements using graph neural networks (GNNs), two major challenges persist. At the model level, most methods adopt a transductive learning paradigm, which assumes static graph structures, making them unsuitable for dynamic, evolving networks. At the data level, the extreme class imbalance, where anomalous nodes are rare, leads to biased models that fail to generalize to unseen anomalies. These challenges are interdependent: static transductive frameworks limit effective data augmentation, while imbalance exacerbates model distortion in inductive learning settings. To address these challenges, we propose a novel data-centric framework that integrates dynamic graph modeling with balanced anomaly synthesis. Our framework features: (1) a discrete ego-graph diffusion model, which captures the local topology of anomalies to generate ego-graphs aligned with the anomalous structural distribution, and (2) a curriculum anomaly augmentation mechanism, which dynamically adjusts synthetic data generation during training, focusing on underrepresented anomaly patterns to improve detection and generalization. Experiments on five datasets demonstrate the effectiveness of our framework.
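
For readers unfamiliar with the term, an ego-graph is the subgraph induced by a center node and its neighbors within a fixed number of hops. The sketch below only illustrates that extraction step on a plain adjacency dictionary; it is not the diffusion model from the paper, and the function name `ego_graph`, the `radius` parameter, and the toy graph are assumptions made for illustration.

```python
from collections import deque


def ego_graph(adj, center, radius=1):
    """Return (nodes, edges) of the subgraph within `radius` hops of `center`.

    `adj` maps each node to an iterable of its neighbors (undirected graph).
    Hypothetical helper for illustration; not taken from the paper.
    """
    # Breadth-first search that stops expanding once the hop limit is reached.
    dist = {center: 0}
    queue = deque([center])
    while queue:
        u = queue.popleft()
        if dist[u] == radius:
            continue
        for v in adj.get(u, ()):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)

    nodes = set(dist)
    # Keep every edge whose two endpoints both lie inside the ego-graph.
    edges = {
        tuple(sorted((u, v)))
        for u in nodes
        for v in adj.get(u, ())
        if v in nodes
    }
    return nodes, edges


# Toy graph: a triangle 0-1-2 with a tail 2-3-4.
toy_adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2, 4], 4: [3]}
one_hop_nodes, one_hop_edges = ego_graph(toy_adj, center=0, radius=1)
```

Under these assumptions, a generative model would be trained on such local subgraphs around known anomalous nodes rather than on the whole graph, which is what makes an ego-graph view compatible with an inductive setting.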

A Deep Q-Network Based on Radial Basis Functions for Multi-Echelon Inventory Management

Jan 29, 2024

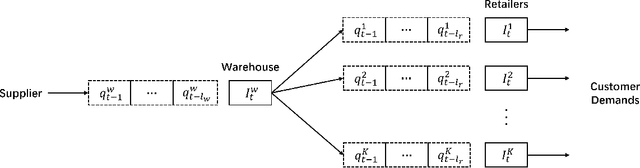

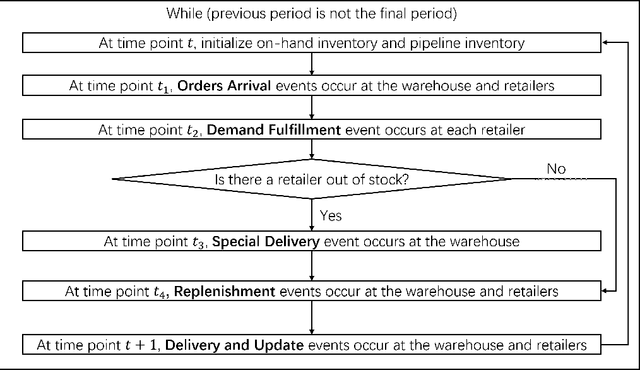

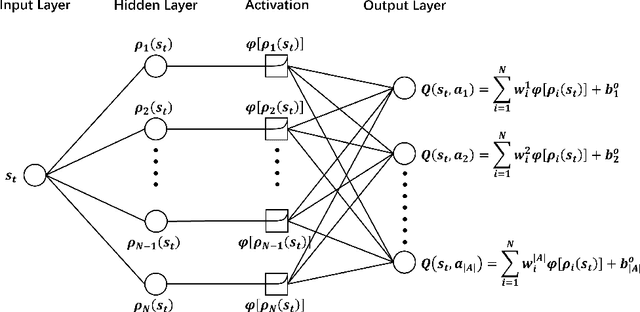

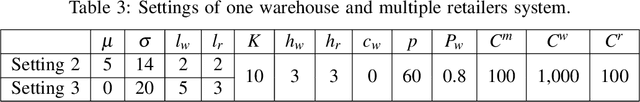

Abstract:This paper addresses a multi-echelon inventory management problem with a complex network topology where deriving optimal ordering decisions is difficult. Deep reinforcement learning (DRL) has recently shown potential in solving such problems, but designing the neural networks in DRL remains a challenge. To address this, a DRL model is developed whose Q-network is based on radial basis functions. The approach can be constructed more easily than classic DRL models based on neural networks, thus alleviating the computational burden of hyperparameter tuning. A series of simulation experiments shows that the approach outperforms the simple base-stock policy, producing a better policy in the multi-echelon system and competitive performance in the serial system, where the base-stock policy is optimal. In addition, the approach outperforms current DRL approaches.
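
As a rough illustration of what a Q-function built on radial basis functions can look like, the sketch below represents each action's value as a weighted sum of Gaussian features and updates the weights with a one-step temporal-difference rule. It is a minimal sketch under stated assumptions, not the architecture from the paper: the class name `RBFQ`, the fixed centers, the shared width, and the learning rate and discount values are all hypothetical.

```python
import math


class RBFQ:
    """Q(s, a) as a linear combination of Gaussian RBF features (illustrative)."""

    def __init__(self, centers, width, n_actions, lr=0.1, gamma=0.95):
        self.centers = centers      # assumed fixed RBF centers in state space
        self.width = width          # assumed shared Gaussian width
        self.lr = lr                # learning rate (hypothetical value)
        self.gamma = gamma          # discount factor (hypothetical value)
        # One weight vector per action, initialized to zero.
        self.weights = [[0.0] * len(centers) for _ in range(n_actions)]

    def features(self, state):
        """Gaussian activation of each center for the given state."""
        feats = []
        for center in self.centers:
            sq_dist = sum((s - c) ** 2 for s, c in zip(state, center))
            feats.append(math.exp(-sq_dist / (2 * self.width ** 2)))
        return feats

    def q_values(self, state):
        """Q-value of every action in the given state."""
        phi = self.features(state)
        return [sum(w * p for w, p in zip(row, phi)) for row in self.weights]

    def update(self, state, action, reward, next_state):
        """One-step Q-learning update; returns the TD error."""
        phi = self.features(state)
        target = reward + self.gamma * max(self.q_values(next_state))
        td_error = target - self.q_values(state)[action]
        for i, p in enumerate(phi):
            self.weights[action][i] += self.lr * td_error * p
        return td_error


# Toy usage: a one-dimensional state (e.g. an inventory level) and two actions.
agent = RBFQ(centers=[(0.0,), (1.0,)], width=1.0, n_actions=2)
first_td_error = agent.update(state=(0.0,), action=1, reward=1.0, next_state=(1.0,))
```

In this simplified form the only structural choices are the centers and the width, which is one way to read the abstract's point about a lighter hyperparameter-tuning burden than a multi-layer network.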

Dynamic Pricing on E-commerce Platform with Deep Reinforcement Learning

Dec 05, 2019

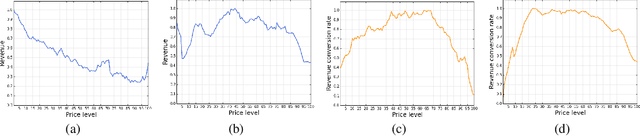

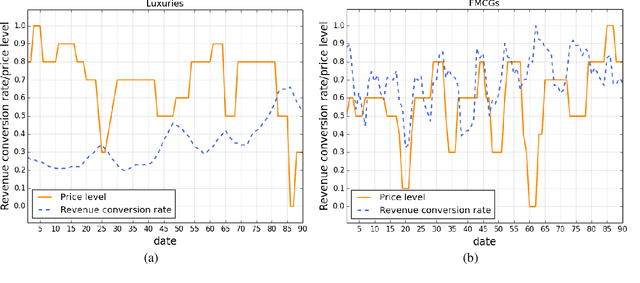

Abstract:In this paper we present an end-to-end framework for addressing the problem of dynamic pricing on an e-commerce platform using methods based on deep reinforcement learning (DRL). By using four groups of different business data to represent the states of each time period, we model the dynamic pricing problem as a Markov Decision Process (MDP). Compared with the state-of-the-art DRL-based dynamic pricing algorithms, our approaches make the following three contributions. First, we extend the discrete price set problem to the continuous price set. Second, instead of using revenue as the reward function directly, we define a new function named difference of revenue conversion rates (DRCR). Third, the cold-start problem of the MDP is tackled by pre-training and evaluation using carefully chosen historical sales data. Our approaches are evaluated both by an offline evaluation method using a real dataset from Alibaba Inc. and by online field experiments on Tmall.com, a major online shopping website owned by Alibaba Inc. In particular, experiment results suggest that DRCR is a more appropriate reward function than revenue, which is widely used in the current literature. Finally, field experiments lasting for months on 1000 stock keeping units (SKUs) of products demonstrate that continuous price sets perform better than discrete sets and show that our approaches significantly outperform manual pricing by operation experts.

Context-Based Dynamic Pricing with Online Clustering

Feb 17, 2019

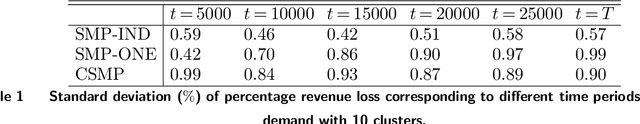

Abstract:We consider a context-based dynamic pricing problem for online products that have low sales. Sales data from Alibaba, a major global online retailer, illustrate the prevalence of low-sale products. For these products, existing single-product dynamic pricing algorithms do not work well due to insufficient data samples. To address this challenge, we propose pricing policies that concurrently perform clustering over products and set individual pricing decisions on the fly. By clustering data and identifying products that have similar demand patterns, we utilize sales data from products within the same cluster to improve demand estimation and allow for better pricing decisions. We evaluate the algorithms in terms of regret, and the results show that when product demand functions come from multiple clusters, our algorithms significantly outperform traditional single-product pricing policies. Numerical experiments using a real dataset from Alibaba demonstrate that the proposed policies increase revenue compared with several benchmark policies. The results show that online clustering is an effective approach to tackling dynamic pricing problems associated with low-sale products. Our algorithms were further implemented in a field study at Alibaba with 40 products for 30 consecutive days and compared with products that used Alibaba's business-as-usual pricing policy. The results from the field experiment show that the overall revenue increased by 10.14%.
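
The idea of borrowing sales data across a cluster can be sketched with a toy demand fit. The example below assumes a linear demand model, demand = intercept + slope × price, and a cluster membership that is already known; the paper's policies learn clusters online, which is not reproduced here. The function names `fit_linear_demand` and `pooled_estimate` and all the numbers are hypothetical.

```python
def fit_linear_demand(observations):
    """Ordinary least squares fit of demand = intercept + slope * price.

    `observations` is a list of (price, demand) pairs with at least two
    distinct prices. Linear demand is an assumption made for illustration.
    """
    n = len(observations)
    mean_price = sum(p for p, _ in observations) / n
    mean_demand = sum(d for _, d in observations) / n
    var_price = sum((p - mean_price) ** 2 for p, _ in observations)
    cov = sum((p - mean_price) * (d - mean_demand) for p, d in observations)
    slope = cov / var_price
    intercept = mean_demand - slope * mean_price
    return intercept, slope


def pooled_estimate(sales_by_product, cluster):
    """Fit one demand curve on the pooled observations of a given cluster."""
    pooled = [obs for product in cluster for obs in sales_by_product[product]]
    return fit_linear_demand(pooled)


# Toy data: product "B" has a single observation, too few to fit on its own,
# but it can share an estimate with product "A" if both sit in one cluster.
toy_sales = {
    "A": [(1.0, 9.0), (2.0, 8.0)],
    "B": [(3.0, 7.0)],
}
intercept, slope = pooled_estimate(toy_sales, cluster=["A", "B"])
```

Pooling helps only if the clustered products really do share a demand pattern, which is why the abstract distinguishes the case where demand functions come from multiple clusters.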
