Yan Kyaw Tun

Resource-Efficient Beam Prediction in mmWave Communications with Multimodal Realistic Simulation Framework

Apr 07, 2025

Abstract:Beamforming is a key technology in millimeter-wave (mmWave) communications that improves signal transmission by optimizing directionality and intensity. However, conventional channel estimation methods, such as pilot signals or beam sweeping, often fail to adapt to rapidly changing communication environments. To address this limitation, multimodal sensing-aided beam prediction has gained significant attention, using various sensing data from devices such as LiDAR, radar, GPS, and RGB images to predict user locations or network conditions. Despite its promising potential, the adoption of multimodal sensing-aided beam prediction is hindered by high computational complexity, high costs, and limited datasets. Thus, in this paper, a resource-efficient learning approach is proposed to transfer knowledge from a multimodal network to a monomodal (radar-only) network based on cross-modal relational knowledge distillation (CRKD), reducing computational overhead while preserving predictive accuracy. To enable multimodal learning with realistic data, a novel multimodal simulation framework is developed that integrates sensor data generated by the autonomous driving simulator CARLA with MATLAB-based mmWave channel modeling to reflect real-world conditions. The proposed CRKD achieves its objective by distilling relational information across different feature spaces, which enhances beam prediction performance without relying on expensive sensor data. Simulation results demonstrate that CRKD efficiently distills multimodal knowledge, allowing a radar-only model to achieve $94.62\%$ of the teacher performance. In particular, this is achieved with just $10\%$ of the teacher network's parameters, thereby significantly reducing computational complexity and dependence on multimodal sensor data.
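To make the idea of distilling relational information across feature spaces concrete, the following is a minimal sketch of a generic distance-wise relational distillation loss. It is not the paper's CRKD objective, which the abstract does not specify; the function names, the mean-distance normalization, and the Huber penalty are assumptions borrowed from the general relational knowledge distillation literature. The loss compares pairwise distance structure within a batch, so teacher and student features may have different dimensions.

```python
import numpy as np

def normalized_distances(feats):
    """Pairwise Euclidean distances within a batch, divided by their mean."""
    diff = feats[:, None, :] - feats[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    off_diag = dist[~np.eye(len(feats), dtype=bool)]
    mean = off_diag.mean()
    return dist / mean if mean > 0 else dist

def huber(x, delta=1.0):
    """Elementwise Huber penalty."""
    absx = np.abs(x)
    return np.where(absx <= delta, 0.5 * x ** 2, delta * (absx - 0.5 * delta))

def relational_distillation_loss(teacher_feats, student_feats):
    """Penalize mismatch between teacher and student pairwise-distance structure.

    teacher_feats: (batch, d_teacher), e.g. fused multimodal features (assumed).
    student_feats: (batch, d_student), e.g. radar-only features (assumed).
    """
    t = normalized_distances(teacher_feats)
    s = normalized_distances(student_feats)
    mask = ~np.eye(len(t), dtype=bool)  # ignore the zero diagonal
    return float(huber(t - s)[mask].mean())
```

Because only the relative geometry of the batch is compared, a student whose embedding is a scaled copy of the teacher's incurs zero loss even when its feature dimension differs.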

Towards Satellite Non-IID Imagery: A Spectral Clustering-Assisted Federated Learning Approach

Oct 17, 2024

Abstract:Low Earth orbit (LEO) satellites are capable of gathering abundant Earth observation data (EOD) to enable different Internet of Things (IoT) applications. However, to accomplish an effective EOD processing mechanism, it is imperative to investigate: 1) the challenge of processing the observed data without transmitting those large-size data to the ground because the connection between the satellites and the ground stations is intermittent, and 2) the challenge of processing the non-independent and identically distributed (non-IID) satellite data. In this paper, to cope with those challenges, we propose an orbit-based spectral clustering-assisted clustered federated self-knowledge distillation (OSC-FSKD) approach for each orbit of an LEO satellite constellation, which retains the advantage of federated learning (FL) that the observed data does not need to be sent to the ground. Specifically, we introduce normalized Laplacian-based spectral clustering (NLSC) into FL to create clustered FL in each round to address the challenge resulting from non-IID data. In particular, NLSC is adopted to dynamically group clients into several clusters based on cosine similarities calculated from model updates. In addition, self-knowledge distillation is utilized to construct each local client, where the most recently updated local model is used to guide current local model training. Experiments demonstrate that the observation accuracy obtained by the proposed method is 1.01x, 2.15x, 1.10x, and 1.03x higher than that of the pFedSD, FedProx, FedAU, and FedALA approaches, respectively, on the SAT4 dataset. The proposed method also shows superiority when using other datasets.
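The grouping step described above, clustering clients by the cosine similarity of their model updates with a normalized Laplacian, can be sketched as follows. This is an illustrative reconstruction, not the authors' implementation: clipping negative similarities to zero, the row normalization of the embedding, and the small deterministic k-means are all assumptions, and every function name is hypothetical.

```python
import numpy as np

def cosine_affinity(updates):
    """Cosine similarity between flattened client updates, clipped at zero."""
    unit = updates / np.linalg.norm(updates, axis=1, keepdims=True)
    return np.clip(unit @ unit.T, 0.0, None)  # assumption: negative similarity means no edge

def spectral_embedding(affinity, k):
    """Eigenvectors of L = I - D^{-1/2} W D^{-1/2} for the k smallest eigenvalues."""
    d_inv_sqrt = 1.0 / np.sqrt(affinity.sum(axis=1))
    lap = np.eye(len(affinity)) - d_inv_sqrt[:, None] * affinity * d_inv_sqrt[None, :]
    _, vecs = np.linalg.eigh(lap)  # eigenvalues are returned in ascending order
    emb = vecs[:, :k]
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    return emb / np.maximum(norms, 1e-12)

def kmeans(points, k, iters=50):
    """Small k-means with farthest-point initialization (deterministic)."""
    centers = [points[0]]
    for _ in range(1, k):
        dist = np.min([np.linalg.norm(points - c, axis=1) for c in centers], axis=0)
        centers.append(points[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(iters):
        labels = np.argmin(np.linalg.norm(points[:, None] - centers[None], axis=2), axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = points[labels == j].mean(axis=0)
    return labels

def cluster_clients(updates, k):
    """Group clients whose model updates point in similar directions."""
    return kmeans(spectral_embedding(cosine_affinity(updates), k), k)
```

In a clustered FL round, the returned labels would decide which clients are aggregated together; the number of clusters `k` is taken as given here.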

Enhancing Spectrum Efficiency in 6G Satellite Networks: A GAIL-Powered Policy Learning via Asynchronous Federated Inverse Reinforcement Learning

Sep 27, 2024

Abstract:In this paper, a novel generative adversarial imitation learning (GAIL)-powered policy learning approach is proposed for optimizing beamforming, spectrum allocation, and remote user equipment (RUE) association in non-terrestrial networks (NTNs). Traditional reinforcement learning (RL) methods for wireless network optimization often rely on manually designed reward functions, which can require extensive parameter tuning. To overcome these limitations, we employ inverse RL (IRL), specifically leveraging the GAIL framework, to automatically learn reward functions without manual design. We augment this framework with an asynchronous federated learning approach, enabling decentralized multi-satellite systems to collaboratively derive optimal policies. The proposed method aims to maximize spectrum efficiency (SE) while meeting minimum information rate requirements for RUEs. To address the non-convex, NP-hard nature of this problem, we combine many-to-one matching theory with a multi-agent asynchronous federated IRL (MA-AFIRL) framework. This allows agents to learn through asynchronous environmental interactions, improving training efficiency and scalability. The expert policy is generated using the whale optimization algorithm (WOA), providing data to train the automatic reward function within GAIL. Simulation results show that the proposed MA-AFIRL method outperforms traditional RL approaches, achieving a $14.6\%$ improvement in convergence and reward value. The GAIL-driven policy learning establishes a new benchmark for 6G NTN optimization.

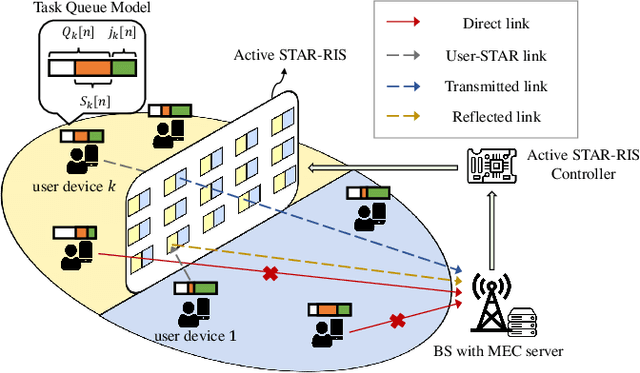

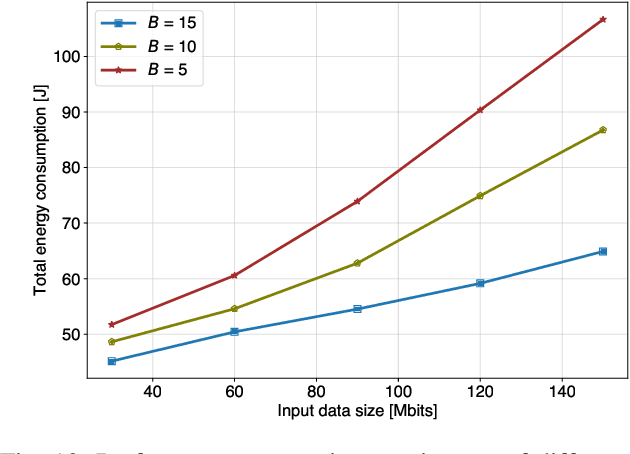

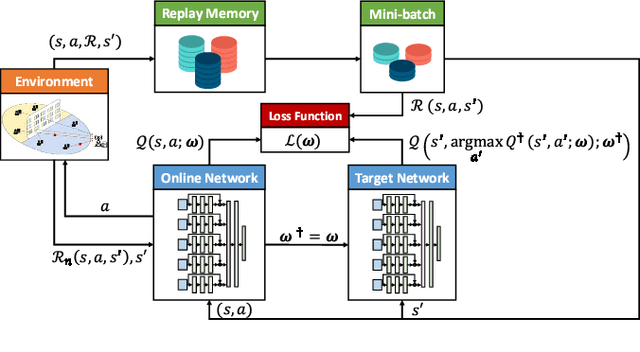

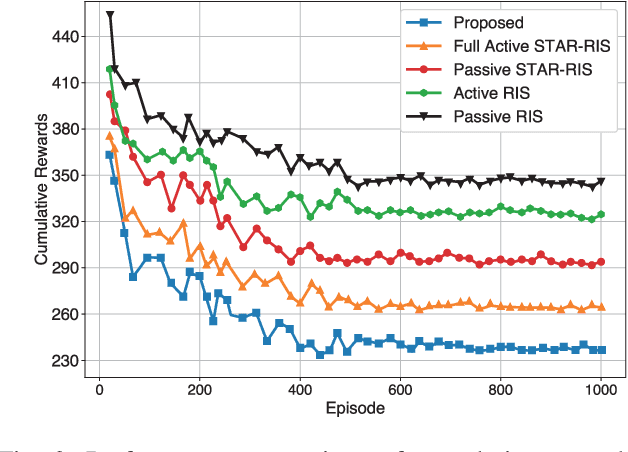

Active STAR-RIS Empowered Edge System for Enhanced Energy Efficiency and Task Management

Aug 23, 2024

Abstract:The proliferation of data-intensive and low-latency applications has driven the development of multi-access edge computing (MEC) as a viable solution to meet the increasing demands for high-performance computing and storage capabilities at the network edge. Despite the benefits of MEC, challenges persist, such as obstructions that force non-line-of-sight (NLoS) communication. Reconfigurable intelligent surfaces (RISs) and the more advanced simultaneously transmitting and reflecting (STAR)-RISs have emerged to address these challenges; however, practical limitations and multiplicative fading effects hinder their efficacy. We propose an active STAR-RIS-assisted MEC system to overcome these obstacles, leveraging the advantages of active STAR-RIS. The main contributions consist of formulating an optimization problem to minimize energy consumption with task queue stability by jointly optimizing the partial task offloading, amplitude, phase shift coefficients, amplification coefficients, transmit power of the base station (BS), and admitted tasks. Furthermore, we decompose the non-convex problem into manageable sub-problems, employing sequential fractional programming for transmit power control, a convex optimization technique for task offloading, and Lyapunov optimization with a double deep Q-network (DDQN) for joint amplitude, phase shift, amplification, and task admission. Extensive performance evaluations demonstrate the superiority of the proposed system over benchmark schemes, highlighting its potential for enhancing MEC system performance. Numerical results indicate that our proposed system outperforms the conventional STAR-RIS-assisted system by $18.64\%$ and the conventional RIS-assisted system by $30.43\%$.
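The combination of energy minimization with task queue stability mentioned above is commonly handled with Lyapunov drift-plus-penalty. The sketch below shows the textbook form only, under assumed names: `backlog` is the queue length, `served` and `admitted` are the per-slot service and admission, and `v` is the trade-off weight. The paper selects actions with a DDQN; a brute-force search over a small hypothetical action set stands in for that here.

```python
def queue_update(backlog, served, admitted):
    """Standard queue dynamics: Q(t+1) = max(Q(t) - b(t), 0) + a(t)."""
    return max(backlog - served, 0.0) + admitted

def drift_plus_penalty(backlog, served, admitted, energy, v):
    """Per-slot score V * E(t) + Q(t) * (a(t) - b(t)); lower is better."""
    return v * energy + backlog * (admitted - served)

def best_action(backlog, actions, v):
    """Pick the (served, admitted, energy) tuple with the lowest score.

    `actions` is a hypothetical finite action set used only for illustration.
    """
    return min(actions, key=lambda a: drift_plus_penalty(backlog, a[0], a[1], a[2], v))
```

With an empty queue the low-energy action wins; as the backlog grows, the score increasingly favors actions that serve more tasks, which is how the weight `v` trades energy against queue stability.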

Semantic Enabled 6G LEO Satellite Communication for Earth Observation: A Resource-Constrained Network Optimization

Jul 31, 2024

Abstract:Earth observation satellites generate large amounts of real-time data for monitoring and managing time-critical events such as disaster relief missions. This presents a major challenge for satellite-to-ground communications operating under limited bandwidth capacities. This paper explores semantic communication (SC) as a potential alternative to traditional communication methods. The rationale for adopting SC is its inherent ability to reduce communication costs and improve spectrum efficiency for 6G non-terrestrial networks (6G-NTNs). We focus on latency optimization of the critical satellite imagery downlink for Earth observation through SC techniques. We formulate the latency minimization problem with SC quality-of-service (SC-QoS) constraints and address this problem with a meta-heuristic discrete whale optimization algorithm (DWOA) and a one-to-one matching game. The proposed approach for captured image processing and transmission integrates joint semantic and channel encoding to ensure downlink sum-rate optimization and latency minimization. Experimental results demonstrate the efficiency of the proposed framework for latency optimization while preserving high-quality data transmission when compared to baselines.

Design Optimization of NOMA Aided Multi-STAR-RIS for Indoor Environments: A Convex Approximation Imitated Reinforcement Learning Approach

Jun 19, 2024

Abstract:Sixth-generation (6G) networks leverage simultaneously transmitting and reflecting reconfigurable intelligent surfaces (STAR-RISs) to overcome the limitations of traditional RISs. STAR-RISs offer 360-degree full-space coverage and optimized transmission and reflection for enhanced network performance and dynamic control of the indoor propagation environment. However, deploying STAR-RISs indoors presents challenges in interference mitigation, power consumption, and real-time configuration. In this work, a novel network architecture utilizing multiple access points (APs) and STAR-RISs is proposed for indoor communication. An optimization problem encompassing user assignment, access point beamforming, and STAR-RIS phase control for reflection and transmission is formulated. The inherent complexity of the formulated problem necessitates a decomposition approach for an efficient solution. This involves tackling different sub-problems with specialized techniques: a many-to-one matching algorithm is employed to assign users to appropriate access points, optimizing resource allocation. To facilitate efficient resource management, access points are grouped using a correlation-based K-means clustering algorithm. Multi-agent deep reinforcement learning (MADRL) is leveraged to optimize the control of the STAR-RIS. Within the proposed MADRL framework, a novel approach is introduced where each decision variable acts as an independent agent, enabling collaborative learning and decision-making. Additionally, the proposed MADRL approach incorporates convex approximation (CA). This technique utilizes suboptimal solutions from successive convex approximation (SCA) to accelerate policy learning for the agents, thereby leading to faster environment adaptation and convergence. Simulations demonstrate significant network utility improvements compared to baseline approaches.
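The many-to-one matching step that assigns users to access points can be illustrated with a deferred-acceptance procedure in which users propose and each AP keeps its best proposers up to a quota. This is a generic sketch rather than the paper's algorithm; the preference lists, the `ap_scores` utilities, and the quotas are hypothetical inputs.

```python
def many_to_one_matching(user_prefs, ap_scores, quota):
    """User-proposing deferred acceptance with per-AP quotas.

    user_prefs[u]: list of APs in decreasing order of preference for user u.
    ap_scores[a][u]: utility AP a assigns to user u (higher is better).
    quota[a]: maximum number of users AP a can serve.
    Returns a dict mapping each AP to the list of users it accepted.
    """
    next_choice = {u: 0 for u in user_prefs}
    accepted = {a: [] for a in ap_scores}
    free = list(user_prefs)
    while free:
        u = free.pop()
        if next_choice[u] >= len(user_prefs[u]):
            continue  # preference list exhausted; user stays unmatched
        a = user_prefs[u][next_choice[u]]
        next_choice[u] += 1
        accepted[a].append(u)
        if len(accepted[a]) > quota[a]:
            # Over quota: the AP rejects its least-preferred current user.
            worst = min(accepted[a], key=lambda v: ap_scores[a][v])
            accepted[a].remove(worst)
            free.append(worst)
    return accepted
```

For example, if three users all prefer an AP with quota 1, that AP keeps the user it scores highest and the other two fall through to their second choice.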

Data Service Maximization in Integrated Terrestrial-Non-Terrestrial 6G Networks: A Deep Reinforcement Learning Approach

May 30, 2024

Abstract:Integrating terrestrial and non-terrestrial networks has emerged as a promising paradigm to fulfill the constantly growing demand for connectivity, low transmission delay, and quality of service (QoS). This integration brings together the strengths of both: the reliability of terrestrial networks, and the broad coverage and service continuity of non-terrestrial networks such as low Earth orbit (LEO) satellites. In this work, we study a data service maximization problem in an integrated terrestrial-non-terrestrial network (I-TNT) where the ground base stations (GBSs) and LEO satellites cooperatively serve the coexisting aerial users (AUs) and ground users (GUs). Then, by considering the spectrum scarcity, interference, and QoS requirements of the users, we jointly optimize the user association, the AUs' trajectories, and power allocation. To tackle the formulated mixed-integer non-convex problem, we decompose it into two subproblems: 1) user association problem and 2) trajectory and power allocation problem. Since the user association problem is a binary integer programming problem, we use the standard convex optimization method to solve it. Meanwhile, the trajectory and power allocation problem is solved by the deep deterministic policy gradient (DDPG) method to cope with the problem's non-convexity and dynamic network environments. Then, the two subproblems are alternately solved by the proposed iterative algorithm. Extensive simulations are conducted to evaluate the performance of the proposed framework against baselines from the existing literature.
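DDPG, used above for the trajectory and power allocation subproblem, stabilizes training by letting target networks track the online networks slowly. A minimal sketch of that soft (Polyak) update follows; representing parameters as a list of NumPy arrays and the default `tau` are assumptions for illustration, not details from the paper.

```python
import numpy as np

def soft_update(target_params, online_params, tau=0.005):
    """Return new target parameters: (1 - tau) * target + tau * online."""
    return [(1.0 - tau) * t + tau * o for t, o in zip(target_params, online_params)]
```

A small `tau` makes the target actor and critic change gradually, which keeps the bootstrapped critic targets from shifting too quickly between updates.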

Joint UAV Deployment and Resource Allocation in THz-Assisted MEC-Enabled Integrated Space-Air-Ground Networks

Jan 21, 2024

Abstract:Multi-access edge computing (MEC)-enabled integrated space-air-ground (SAG) networks have drawn much attention recently, as they can provide communication and computing services to wireless devices in areas that lack terrestrial base stations (TBSs). Leveraging the ample bandwidth in the terahertz (THz) spectrum, in this paper, we propose MEC-enabled integrated SAG networks with collaboration among unmanned aerial vehicles (UAVs). We then formulate the problem of minimizing the energy consumption of devices and UAVs in the proposed MEC-enabled integrated SAG networks by optimizing task offloading decisions, THz sub-band assignment, transmit power control, and UAV deployment. The formulated problem is a mixed-integer nonlinear programming (MINLP) problem with a non-convex structure, which is challenging to solve. We thus propose a block coordinate descent (BCD) approach to decompose the problem into four sub-problems: 1) device task offloading decision problem, 2) THz sub-band assignment and power control problem, 3) UAV deployment problem, and 4) UAV task offloading decision problem. We then propose to use a matching game, concave-convex procedure (CCP) method, successive convex approximation (SCA), and block successive upper-bound minimization (BSUM) approaches for solving the individual subproblems. Finally, extensive simulations are performed to demonstrate the effectiveness of our proposed algorithm.
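Block coordinate descent, the decomposition strategy named above, optimizes one block of variables at a time while holding the others fixed. The toy example below applies it to a two-variable convex quadratic chosen purely for illustration; it has nothing to do with the paper's energy model, and the objective and function names are assumptions.

```python
def objective(x, y):
    """Toy convex objective: (x - 1)^2 + (y + 2)^2 + x * y."""
    return (x - 1.0) ** 2 + (y + 2.0) ** 2 + x * y

def block_coordinate_descent(x=0.0, y=0.0, iters=50):
    """Alternate exact minimization over x (y fixed) and y (x fixed)."""
    for _ in range(iters):
        x = 1.0 - y / 2.0   # solves d/dx = 2(x - 1) + y = 0
        y = -2.0 - x / 2.0  # solves d/dy = 2(y + 2) + x = 0
    return x, y
```

Each block update has a closed form here; in a problem like the one in the abstract, each block would instead be handed to its own solver (matching, CCP, SCA, or BSUM).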

Aerial STAR-RIS Empowered MEC: A DRL Approach for Energy Minimization

Dec 14, 2023

Abstract:Multi-access Edge Computing (MEC) addresses computational and battery limitations in devices by allowing them to offload computation tasks. To overcome the difficulties in establishing line-of-sight connections, integrating unmanned aerial vehicles (UAVs) has proven beneficial, offering enhanced data exchange, rapid deployment, and mobility. The utilization of reconfigurable intelligent surfaces (RIS), specifically simultaneously transmitting and reflecting RIS (STAR-RIS) technology, further extends coverage capabilities and introduces flexibility in MEC. This study explores the integration of UAV and STAR-RIS to facilitate communication between IoT devices and an MEC server. The formulated problem aims to minimize energy consumption for IoT devices and aerial STAR-RIS by jointly optimizing task offloading, aerial STAR-RIS trajectory, amplitude and phase shift coefficients, and transmit power. Given the non-convexity of the problem and the dynamic environment, solving it directly within a polynomial time frame is challenging. Therefore, deep reinforcement learning (DRL), particularly proximal policy optimization (PPO), is introduced for its sample efficiency and stability. Simulation results illustrate the effectiveness of the proposed system compared to benchmark schemes in the literature.
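The stability attributed to PPO above comes from its clipped surrogate objective, which limits how far a policy update can move from the policy that collected the data. A minimal NumPy sketch of that objective is given below; the argument names and the default `clip_eps` are illustrative choices, not values from the paper.

```python
import numpy as np

def ppo_clipped_objective(ratio, advantage, clip_eps=0.2):
    """Mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A) over a batch.

    ratio: new-policy / old-policy action probabilities, shape (batch,).
    advantage: advantage estimates, shape (batch,).
    """
    ratio = np.asarray(ratio, dtype=float)
    advantage = np.asarray(advantage, dtype=float)
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps)
    return float(np.minimum(ratio * advantage, clipped * advantage).mean())
```

The objective is maximized during training; taking the minimum means the policy gains nothing from pushing the probability ratio beyond the clipping range.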

Joint User Pairing and Beamforming Design of Multi-STAR-RISs-Aided NOMA in the Indoor Environment via Multi-Agent Reinforcement Learning

Nov 17, 2023

Abstract:The development of 6G/B5G wireless networks, which have requirements that go beyond current 5G networks, is gaining interest from academia and industry. However, to increase 6G/B5G network quality, conventional cellular networks that rely on terrestrial base stations are constrained geographically and economically. Meanwhile, non-orthogonal multiple access (NOMA) allows multiple users to share the same resources, which improves the spectral efficiency of the system and has the advantage of supporting a larger number of users. Additionally, by intelligently manipulating the phase and amplitude of both the reflected and transmitted signals, STAR-RISs can achieve improved coverage, increased spectral efficiency, and enhanced communication reliability. However, STAR-RISs must simultaneously optimize the amplitude and phase shift corresponding to reflection and transmission, which makes the existing terrestrial networks more complicated and is considered a major challenge. Motivated by the above, we study the joint user pairing for NOMA and beamforming design of Multi-STAR-RISs in an indoor environment. Then, we formulate the optimization problem with the objective of maximizing the total throughput of MUs by jointly optimizing the decoding order, user pairing, active beamforming, and passive beamforming. However, the formulated problem is a mixed-integer nonlinear programming (MINLP) problem. To address this challenge, we first introduce the decoding order for NOMA networks. Next, we decompose the original problem into two subproblems, namely: 1) MU pairing and 2) beamforming optimization under the optimal decoding order. For the first subproblem, we employ correlation-based K-means clustering to solve the user pairing problem. Then, to jointly deal with beamforming vector optimizations, we propose multi-agent proximal policy optimization (MAPPO), which can make quick decisions in the given environment owing to its low complexity.
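To show why decoding order and user pairing matter for NOMA throughput, here is a sketch of the standard two-user downlink rate expressions with successive interference cancellation (SIC). It assumes a single-antenna link with scalar channel gains, which is far simpler than the multi-STAR-RIS beamforming setting in the abstract; all parameter names are hypothetical.

```python
import math

def noma_pair_rates(p_weak, p_strong, g_weak, g_strong, noise):
    """Achievable rates (bits/s/Hz) for a two-user downlink NOMA pair.

    The weak user decodes its own signal, treating the strong user's signal
    as interference. The strong user first removes the weak user's signal
    via SIC, then decodes its own signal interference-free.
    """
    rate_weak = math.log2(1.0 + p_weak * g_weak / (p_strong * g_weak + noise))
    rate_strong = math.log2(1.0 + p_strong * g_strong / noise)
    return rate_weak, rate_strong
```

Pairing users with well-separated channel gains and giving more power to the weak user is the usual heuristic; the sum of the two rates is the quantity a throughput-maximizing pairing would try to increase.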
