Xuefeng Xian

Sequential Recommendation with Probabilistic Logical Reasoning

Apr 22, 2023

Abstract: Deep learning and symbolic learning are two frequently employed methods in Sequential Recommendation (SR). Recent neural-symbolic SR models demonstrate their potential to equip SR with concurrent perception and cognition capacities. However, neural-symbolic SR remains a challenging problem due to open issues such as how to represent users and items in logical reasoning. In this paper, we combine Deep Neural Network (DNN) SR models with logical reasoning and propose a general framework named Sequential Recommendation with Probabilistic Logical Reasoning (SR-PLR for short). By disentangling feature embedding and logic embedding in the DNN and the probabilistic logic network, SR-PLR benefits from both similarity matching and logical reasoning. To better capture the uncertainty and evolution of user tastes, SR-PLR embeds users and items with a probabilistic method and conducts probabilistic logical reasoning on users' interaction patterns. The feature and logic representations learned from the DNN and the logic network are then concatenated to make the prediction. Finally, experiments on various sequential recommendation models demonstrate the effectiveness of SR-PLR.
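The abstract does not spell out which probabilistic embedding or which logical operators SR-PLR uses. As a minimal sketch, assuming BetaE-style operators (each logic embedding is a vector of independent Beta distributions, NOT takes reciprocals of the parameters, AND is a normalized weighted product of densities), the Python below shows how a logic representation could be built from an interaction pattern and concatenated with a DNN feature representation before scoring. The names `BetaEmbedding`, `negate`, `conjoin`, and `predict`, the dot-product scorer, and the use of Beta means as the logic summary are hypothetical illustrations, not the paper's implementation.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class BetaEmbedding:
    """Hypothetical logic embedding: one Beta(alpha_k, beta_k) per dimension."""
    alpha: List[float]
    beta: List[float]

    def mean(self) -> List[float]:
        # The mean of Beta(a, b) is a / (a + b), computed per dimension.
        return [a / (a + b) for a, b in zip(self.alpha, self.beta)]


def negate(x: BetaEmbedding) -> BetaEmbedding:
    # BetaE-style NOT (assumption): replace (alpha, beta) with (1/alpha, 1/beta),
    # which roughly reverses where each distribution concentrates its density.
    return BetaEmbedding([1.0 / a for a in x.alpha], [1.0 / b for b in x.beta])


def conjoin(xs: Sequence[BetaEmbedding], weights: Sequence[float]) -> BetaEmbedding:
    # BetaE-style AND (assumption): with weights normalized to sum to 1, the
    # weighted product of Beta densities is again a Beta whose parameters are
    # the weighted sums of the input parameters.
    total = sum(weights)
    w = [wi / total for wi in weights]
    dim = len(xs[0].alpha)
    alpha = [sum(wi * x.alpha[k] for wi, x in zip(w, xs)) for k in range(dim)]
    beta = [sum(wi * x.beta[k] for wi, x in zip(w, xs)) for k in range(dim)]
    return BetaEmbedding(alpha, beta)


def predict(feature_repr: Sequence[float], logic: BetaEmbedding,
            item_feature: Sequence[float], item_logic: BetaEmbedding) -> float:
    # Concatenate the DNN feature representation with the logic representation
    # (summarized here by its Beta means) and score the candidate by dot product.
    user_vec = list(feature_repr) + logic.mean()
    item_vec = list(item_feature) + item_logic.mean()
    return sum(u * v for u, v in zip(user_vec, item_vec))


if __name__ == "__main__":
    # Hypothetical interaction pattern: the user chose v1 and v2 but not v3.
    v1 = BetaEmbedding([2.0, 1.5], [1.0, 3.0])
    v2 = BetaEmbedding([3.0, 1.0], [2.0, 2.0])
    v3 = BetaEmbedding([1.0, 4.0], [5.0, 1.0])
    user_logic = conjoin([v1, v2, negate(v3)], weights=[1.0, 1.0, 1.0])
    score = predict([0.2, -0.1], user_logic, [0.4, 0.3], v2)
    print(round(score, 4))
```

In a trained model the Beta parameters and conjunction weights would be learned rather than fixed; the constants here only illustrate the arithmetic. Summarizing each distribution by its mean keeps the concatenation simple; comparing distributions directly (for example with a KL divergence) would be another option.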

Learnable Model Augmentation Self-Supervised Learning for Sequential Recommendation

Apr 21, 2022

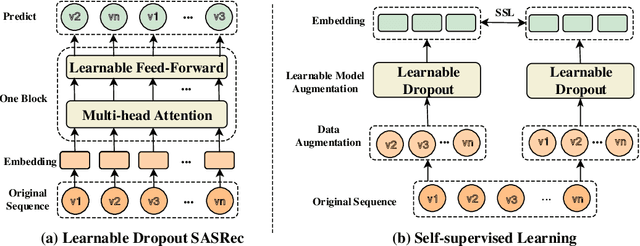

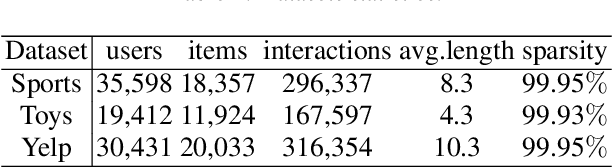

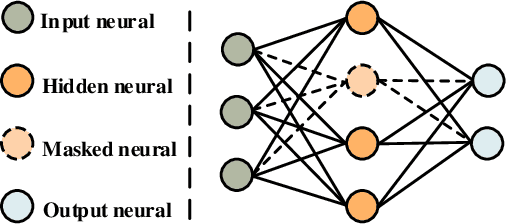

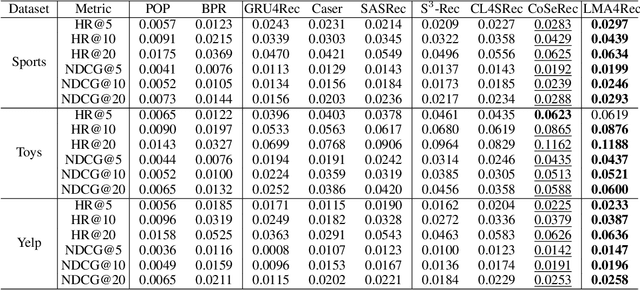

Abstract: Sequential Recommendation aims to predict the next item based on user behaviour. Recently, Self-Supervised Learning (SSL) has been proposed to improve recommendation performance. However, most existing SSL methods use a uniform data augmentation scheme, which loses the sequence correlation of the original sequence. To this end, in this paper, we propose Learnable Model Augmentation self-supervised learning for sequential Recommendation (LMA4Rec). Specifically, LMA4Rec first uses model augmentation as a supplement to data augmentation to generate views. It then uses learnable Bernoulli dropout to make the model augmentation operations learnable. Next, self-supervised learning is applied between the contrastive views to extract self-supervised signals from the original sequence. Finally, experiments on three public datasets show that LMA4Rec effectively improves sequential recommendation performance compared with baseline methods.
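The abstract names learnable Bernoulli dropout and contrastive self-supervision but not their exact form. The sketch below assumes two common choices that are swapped in here and not confirmed as LMA4Rec's: the Concrete (relaxed Bernoulli) relaxation, which makes the keep probability trainable, and an InfoNCE loss with in-batch negatives between two augmented views. Dropout is applied to a hidden representation as a stand-in for a dropout layer inside the encoder. All function names and parameters (`theta`, `temperature`, `tau`) are hypothetical. Pure Python keeps the arithmetic visible; in practice `theta` would be trained with an autodiff framework.

```python
import math
import random
from typing import List, Sequence


def sigmoid(x: float) -> float:
    # Numerically stable logistic function (avoids overflow for large |x|).
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def relaxed_bernoulli_dropout(x: Sequence[float], theta: float, temperature: float,
                              rng: random.Random) -> List[float]:
    """Dropout whose keep probability q = sigmoid(theta) is a trainable parameter.

    A hard Bernoulli mask has no gradient with respect to q, so each mask entry
    is instead a smooth function of theta and a uniform noise sample.
    """
    q = sigmoid(theta)
    eps = 1e-7
    out = []
    for value in x:
        u = min(max(rng.random(), eps), 1.0 - eps)
        # log(q / (1 - q)) equals theta, so the logistic-noise logit is:
        logit = theta + math.log(u) - math.log(1.0 - u)
        mask = sigmoid(logit / temperature)  # in (0, 1); near-binary for small temperature
        out.append(value * mask / q)  # rescale to roughly preserve the expected activation
    return out


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return 0.0 if na == 0.0 or nb == 0.0 else dot / (na * nb)


def info_nce(view1: Sequence[Sequence[float]], view2: Sequence[Sequence[float]],
             tau: float = 0.1) -> float:
    # Row i of view1 and row i of view2 are two views of the same sequence
    # (the positive pair); the other rows of view2 act as in-batch negatives.
    n = len(view1)
    total = 0.0
    for i in range(n):
        logits = [cosine(view1[i], view2[j]) / tau for j in range(n)]
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denom - logits[i]  # -log softmax probability of the positive
    return total / n


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical encoder outputs for a batch of two sequences.
    batch = [[0.5, -1.2, 0.8, 0.3], [-0.7, 0.4, 0.1, 1.1]]
    view_a = [relaxed_bernoulli_dropout(s, theta=1.5, temperature=0.1, rng=rng) for s in batch]
    view_b = [relaxed_bernoulli_dropout(s, theta=1.5, temperature=0.1, rng=rng) for s in batch]
    print(info_nce(view_a, view_b, tau=0.1))
```

The loss is small when each view is most similar to its own counterpart and large when it is closer to another sequence's view, which is the self-supervised signal the contrastive step relies on.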
