Xuan Su

Dual Diffusion Implicit Bridges for Image-to-Image Translation

Mar 16, 2022

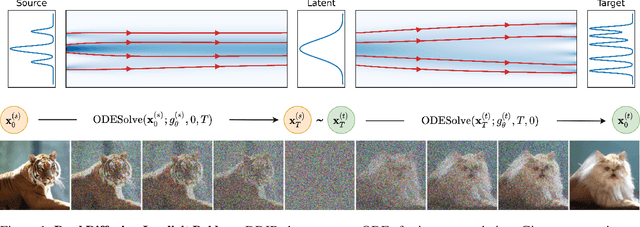

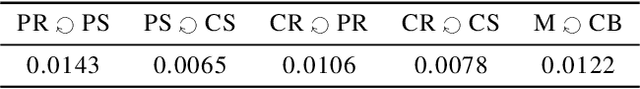

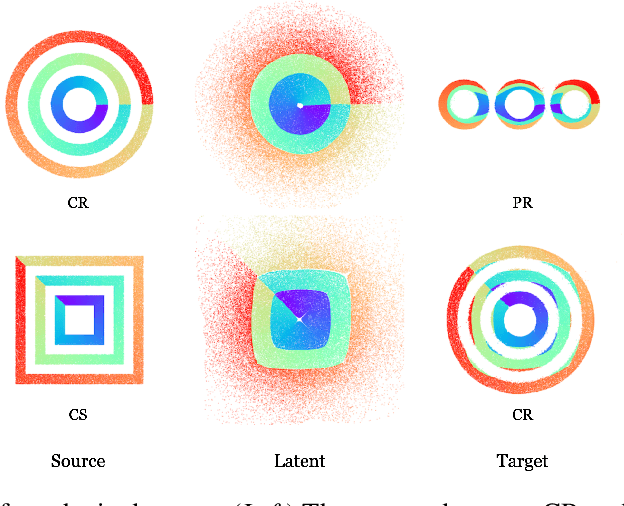

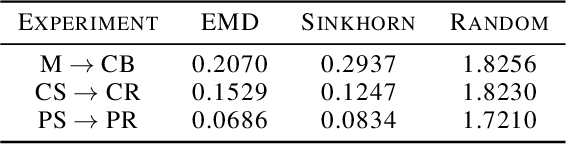

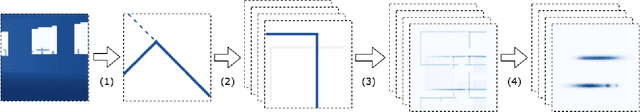

Abstract: Common image-to-image translation methods rely on joint training over data from both source and target domains. This excludes cases where domain data is private (e.g., in a federated setting), and often means that a new model has to be trained for each new pair of domains. We present Dual Diffusion Implicit Bridges (DDIBs), an image translation method based on diffusion models that circumvents training on domain pairs. DDIBs allow translation between arbitrary pairs of source and target domains, given diffusion models trained independently on the respective domains. Image translation with DDIBs is a two-step process: DDIBs first obtain latent encodings of source images with the source diffusion model, and then decode those encodings with the target model to construct target images. Moreover, DDIBs are cycle-consistent by default and are theoretically connected to optimal transport. Experimentally, we apply DDIBs to a variety of synthetic and high-resolution image datasets, demonstrating their utility in example-guided color transfer and image-to-image translation, as well as their connections to optimal transport methods.
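The two-step procedure (encode with the source model, decode with the target model) can be illustrated with a minimal Python sketch. It assumes toy one-dimensional Gaussian "domains", for which the ideal noise-prediction network has a closed form that stands in for the independently trained diffusion models. The cosine noise schedule, the step count, and all function names (`alpha_bar_schedule`, `gaussian_eps_model`, `ddim_step`, `ddib_translate`) are illustrative assumptions, not the authors' code. Both directions use the deterministic DDIM update, so the latent is a deterministic function of the input.

```python
import math

# Hypothetical toy setup: each "domain" is a 1-D Gaussian N(mean, std^2), so the
# ideal noise predictor of a diffusion model trained on it is known in closed form.
# In DDIBs proper, these would be two independently trained networks.

def alpha_bar_schedule(num_steps=1000, phi_max=math.pi / 2 - 1e-3):
    """Assumed cosine schedule: sqrt(alpha_bar) = cos(phi), phi from 0 up to phi_max."""
    return [math.cos(phi_max * i / num_steps) ** 2 for i in range(num_steps + 1)]

def gaussian_eps_model(mean, std):
    """Optimal noise predictor E[eps | x_t] for data drawn from N(mean, std^2)."""
    def eps(x_t, a_bar):
        var_t = a_bar * std ** 2 + (1.0 - a_bar)  # marginal variance of x_t
        return math.sqrt(1.0 - a_bar) * (x_t - math.sqrt(a_bar) * mean) / var_t
    return eps

def ddim_step(x, eps_model, a_from, a_to):
    """One deterministic DDIM update between noise levels a_from and a_to."""
    e = eps_model(x, a_from)
    x0_pred = (x - math.sqrt(1.0 - a_from) * e) / math.sqrt(a_from)
    return math.sqrt(a_to) * x0_pred + math.sqrt(1.0 - a_to) * e

def encode(x, eps_model, a_bars):
    """Source model: integrate from data (alpha_bar = 1) to the latent (alpha_bar ~ 0)."""
    for a_from, a_to in zip(a_bars[:-1], a_bars[1:]):
        x = ddim_step(x, eps_model, a_from, a_to)
    return x

def decode(z, eps_model, a_bars):
    """Target model: integrate back from the latent to data."""
    rev = a_bars[::-1]
    for a_from, a_to in zip(rev[:-1], rev[1:]):
        z = ddim_step(z, eps_model, a_from, a_to)
    return z

def ddib_translate(x, src_model, tgt_model, a_bars):
    """Two-step translation: source encoding followed by target decoding."""
    return decode(encode(x, src_model, a_bars), tgt_model, a_bars)
```

For example, translating `-1.5` from N(-2, 0.5²) to N(3, 1.5²) with `ddib_translate(-1.5, gaussian_eps_model(-2.0, 0.5), gaussian_eps_model(3.0, 1.5), alpha_bar_schedule())` should land close to 4.5, the value given by the monotone (quantile-matching) map, which in 1-D is also the optimal transport map for squared cost. The small remaining deviation comes from discretizing the ODE. Swapping the two models in a second call approximately recovers the input, mirroring the cycle-consistency property.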

Multiplicative Gaussian Particle Filter

Feb 29, 2020

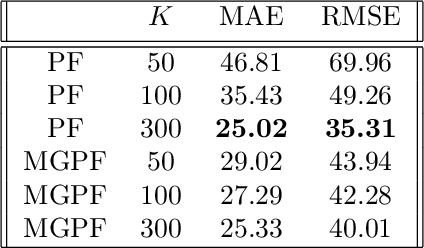

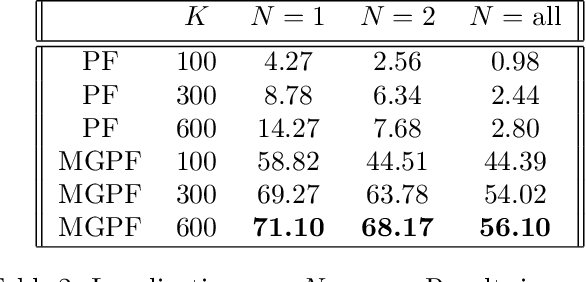

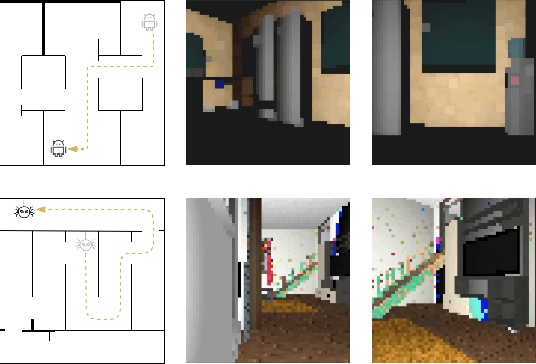

Abstract: We propose a new sampling-based approach for approximate inference in filtering problems. Instead of approximating conditional distributions with a finite set of states, as particle filters do, our approach approximates the distribution with a weighted sum of functions drawn from a set of continuous functions. Central to the approach is the use of sampling to approximate the multiplications in the Bayes filter. We provide a theoretical analysis giving conditions under which sampling yields a good approximation. We then specialize to weighted sums of Gaussians and show how properties of Gaussians enable closed-form transitions and efficient multiplication. Lastly, we conduct preliminary experiments on a robot localization problem and compare performance with the particle filter to demonstrate the potential of the proposed method.
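The closed-form Gaussian properties mentioned above can be illustrated with the classical Gaussian-sum identities. The sketch below assumes a 1-D linear-Gaussian transition x' = a·x + w with w ~ N(0, q) and a direct observation y = x + v with v ~ N(0, r). It shows only the exact transition of each mixture component and the exact product of two Gaussians. It is not the paper's sampling-based approximation of multiplication, and the names (`Component`, `predict`, `multiply_gaussians`, `update`) are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class Component:
    """One term of a weighted sum of Gaussians."""
    weight: float
    mean: float
    var: float

def normal_pdf(x, mean, var):
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def predict(mixture, a, q):
    """Closed-form transition for x' = a*x + w, w ~ N(0, q): each component stays Gaussian."""
    return [Component(c.weight, a * c.mean, a * a * c.var + q) for c in mixture]

def multiply_gaussians(m1, v1, m2, v2):
    """N(x; m1, v1) * N(x; m2, v2) = s * N(x; m, v); returns (s, m, v)."""
    v = 1.0 / (1.0 / v1 + 1.0 / v2)
    m = v * (m1 / v1 + m2 / v2)
    s = normal_pdf(m1, m2, v1 + v2)  # scale factor of the product
    return s, m, v

def update(mixture, y, r):
    """Multiply each component by the likelihood N(y; x, r) = N(x; y, r), then renormalize."""
    out = []
    for c in mixture:
        s, m, v = multiply_gaussians(c.mean, c.var, y, r)
        out.append(Component(c.weight * s, m, v))
    total = sum(c.weight for c in out)
    return [Component(c.weight / total, c.mean, c.var) for c in out]
```

With a Gaussian likelihood, the posterior stays a weighted sum of Gaussians. When the product has no such closed form, an approximation is needed, which is where the abstract's sampling-based multiplication comes in.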

Neural Multi-Task Learning for Citation Function and Provenance

Nov 18, 2018

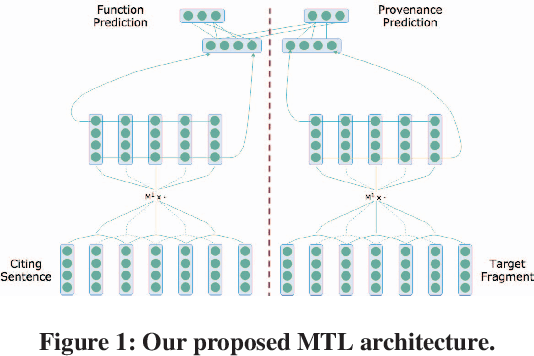

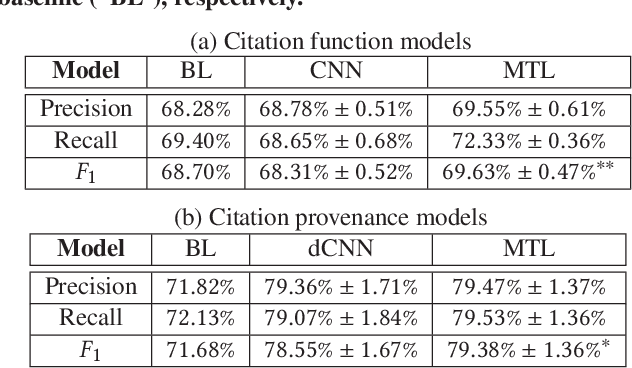

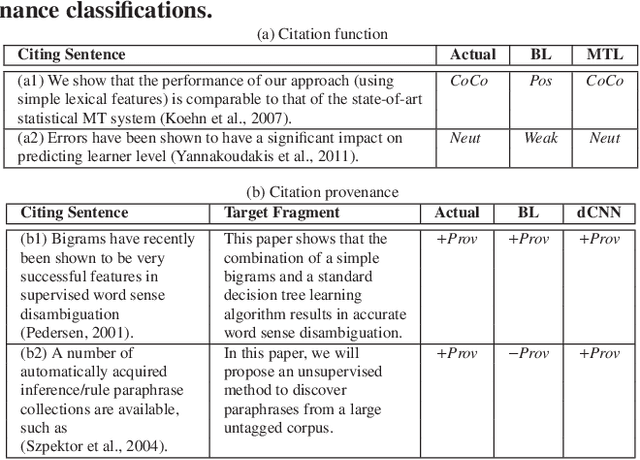

Abstract: Citation function and provenance are two cornerstone tasks in citation analysis. Given a citation, the former determines its rhetorical role, while the latter locates the text in the cited paper that contains the relevant cited information. We hypothesize that these two tasks are synergistically related, and build a model that validates this claim. For both tasks, we show that a single-layer convolutional neural network (CNN) surpasses existing state-of-the-art baselines. More importantly, we show that the two tasks are indeed synergistic: by training both tasks jointly with multi-task learning, we obtain additional performance gains on both. Altogether, our contributions outperform the current state of the art by ~2% on citation function prediction and ~7% on citation provenance prediction, with statistical significance.
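Joint training of the two tasks can be pictured as hard parameter sharing: one single-layer CNN encoder feeds two task-specific output heads, and the two cross-entropy losses are summed. The NumPy sketch below shows only a forward pass and the joint loss. The vocabulary and filter sizes, the label counts, the loss weight `lam`, and the encoding of a provenance example as a single token sequence are all assumptions rather than details from the abstract.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes; the abstract does not specify them.
VOCAB, EMB, FILTERS, WIDTH = 1000, 50, 64, 3
N_FUNCTION, N_PROVENANCE = 4, 2  # assumed label-set sizes for the two tasks

params = {
    "emb": rng.normal(0.0, 0.1, (VOCAB, EMB)),
    "conv_w": rng.normal(0.0, 0.1, (WIDTH * EMB, FILTERS)),
    "conv_b": np.zeros(FILTERS),
    "head_function": rng.normal(0.0, 0.1, (FILTERS, N_FUNCTION)),
    "head_provenance": rng.normal(0.0, 0.1, (FILTERS, N_PROVENANCE)),
}

def shared_encoder(token_ids, p):
    """Single-layer CNN: embed, convolve width-WIDTH windows, ReLU, max-pool over time."""
    x = p["emb"][token_ids]  # (seq_len, EMB)
    windows = np.stack([x[i:i + WIDTH].ravel() for i in range(len(token_ids) - WIDTH + 1)])
    h = np.maximum(windows @ p["conv_w"] + p["conv_b"], 0.0)  # (num_windows, FILTERS)
    return h.max(axis=0)  # (FILTERS,)

def log_softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    return z - np.log(np.exp(z).sum())

def multitask_loss(token_ids, y_function, y_provenance, p, lam=1.0):
    """Both heads read the same shared features; gradients of both losses reach the encoder."""
    feats = shared_encoder(token_ids, p)
    loss_f = -log_softmax(feats @ p["head_function"])[y_function]
    loss_p = -log_softmax(feats @ p["head_provenance"])[y_provenance]
    return loss_f + lam * loss_p, loss_f, loss_p
```

Because the encoder parameters appear in both loss terms, training on either task also updates the representation used by the other, which is the mechanism by which synergy between the tasks can show up.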
