Xingang Li

Image2CADSeq: Computer-Aided Design Sequence and Knowledge Inference from Product Images

Jan 09, 2025

Abstract: Computer-aided design (CAD) tools empower designers to design and modify 3D models through a series of CAD operations, commonly referred to as a CAD sequence. In scenarios where digital CAD files are not accessible, reverse engineering (RE) has been used to reconstruct 3D CAD models. Recent advances have seen the rise of data-driven approaches for RE, with a primary focus on converting 3D data, such as point clouds, into 3D models in boundary representation (B-rep) format. However, obtaining 3D data poses significant challenges, and B-rep models do not reveal knowledge about the 3D modeling process of designs. To this end, our research introduces a novel data-driven approach with an Image2CADSeq neural network model. This model aims to reverse engineer CAD models by processing images as input and generating CAD sequences. These sequences can then be translated into B-rep models using a solid modeling kernel. Unlike B-rep models, CAD sequences offer enhanced flexibility to modify individual steps of model creation, providing a deeper understanding of the construction process of CAD models. To quantitatively and rigorously evaluate the predictive performance of the Image2CADSeq model, we have developed a multi-level evaluation framework for model assessment. The model was trained on a specially synthesized dataset, and various network architectures were explored to optimize the performance. The experimental and validation results show great potential for the model in generating CAD sequences from 2D image data.

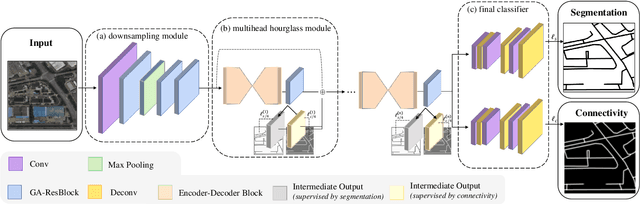

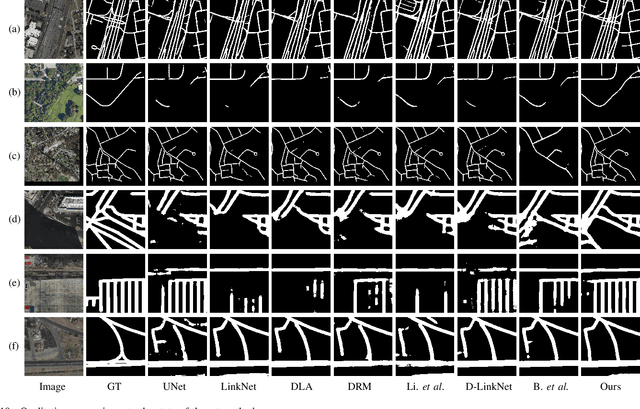

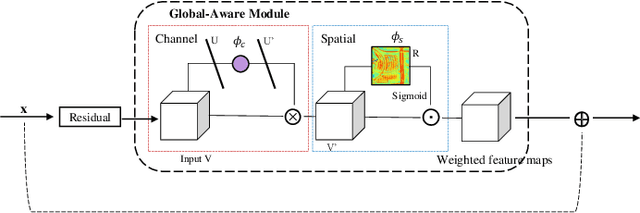

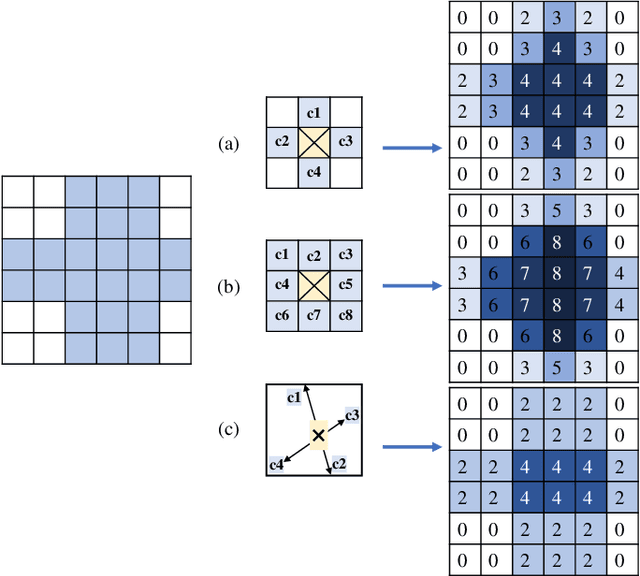

Fine-Grained Extraction of Road Networks via Joint Learning of Connectivity and Segmentation

Dec 07, 2023

Abstract: Road network extraction from satellite images is widely applied in intelligent traffic management and autonomous driving. High-resolution remote sensing images contain complex road areas and distracting backgrounds, which make road extraction challenging. In this study, we present a stacked multitask network for end-to-end road segmentation that preserves connectivity correctness. In the network, a global-aware module is introduced to enhance pixel-level road feature representation and eliminate background distraction from overhead images; a road-direction-related connectivity task is added to ensure that the network preserves the graph-level relationships of the road segments. We also develop a stacked multihead structure to jointly learn and effectively utilize the mutual information between connectivity learning and segmentation learning. We evaluate the performance of the proposed network on three public remote sensing datasets. The experimental results demonstrate that the network outperforms state-of-the-art methods in terms of road segmentation accuracy and connectivity maintenance.
