Xiaoxiao Cheng

Restoring Heterogeneity in LLM-based Social Simulation: An Audience Segmentation Approach

Apr 08, 2026

Abstract:Large Language Models (LLMs) are increasingly used to simulate social attitudes and behaviors, offering scalable "silicon samples" that can approximate human data. However, current simulation practice often collapses diversity into an "average persona," masking subgroup variation that is central to social reality. This study introduces audience segmentation as a systematic approach for restoring heterogeneity in LLM-based social simulation. Using U.S. climate-opinion survey data, we compare six segmentation configurations across two open-weight LLMs (Llama 3.1-70B and Mixtral 8x22B), varying segmentation identifier granularity, parsimony, and selection logic (theory-driven, data-driven, and instrument-based). We evaluate simulation performance with a three-dimensional evaluation framework covering distributional, structural, and predictive fidelity. Results show that increasing identifier granularity does not produce consistent improvement: moderate enrichment can improve performance, but further expansion does not reliably help and can worsen structural and predictive fidelity. Across parsimony comparisons, compact configurations often match or outperform more comprehensive alternatives, especially in structural and predictive fidelity, while distributional fidelity remains metric dependent. Identifier selection logic determines which fidelity dimension benefits most: instrument-based selection best preserves distributional shape, whereas data-driven selection best recovers between-group structure and identifier-outcome associations. Overall, no single configuration dominates all dimensions, and performance gains in one dimension can coincide with losses in another. These findings position audience segmentation as a core methodological approach for valid LLM-based social simulation and highlight the need for heterogeneity-aware evaluation and variance-preserving modeling strategies.
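To make the idea of distributional fidelity concrete, the sketch below compares a toy human response distribution with two simulated ones: an "average persona" that always gives the midpoint answer, and a segmented simulation. It is only an illustration under stated assumptions. The abstract does not say which metrics the study uses, so the Jensen-Shannon divergence is a stand-in, and the segment names, the 5-point scale, and all response values are hypothetical.

```python
import math
from collections import Counter

SCALE = [1, 2, 3, 4, 5]  # hypothetical 5-point agreement scale


def distribution(responses, scale=SCALE):
    """Relative frequency of each scale level in a list of responses."""
    counts = Counter(responses)
    n = len(responses)
    return [counts.get(level, 0) / n for level in scale]


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits; 0 means identical distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Terms with x == 0 contribute nothing; y > 0 wherever x > 0.
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def pooled(by_segment):
    """Flatten per-segment responses into one sample."""
    return [r for responses in by_segment.values() for r in responses]


# Hypothetical toy data: two polarized segments (not from the study).
human = {"segment_a": [5, 5, 4, 5, 4], "segment_b": [1, 1, 2, 1, 2]}
sim_average = {"segment_a": [3] * 5, "segment_b": [3] * 5}
sim_segmented = {"segment_a": [5, 4, 4, 5, 5], "segment_b": [1, 2, 1, 1, 1]}

human_dist = distribution(pooled(human))
js_avg = js_divergence(human_dist, distribution(pooled(sim_average)))
js_seg = js_divergence(human_dist, distribution(pooled(sim_segmented)))

print(f"average persona vs human: JSD = {js_avg:.3f}")
print(f"segmented sim vs human:   JSD = {js_seg:.3f}")
```

In this toy case the average persona places all of its mass on a response that no human gave, so its divergence from the human distribution is larger than that of the segmented simulation. Structural and predictive fidelity would need separate checks, for example on between-group differences and identifier-outcome associations.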

Interacting humans and robots can improve sensory prediction by adapting their viscoelasticity

Oct 17, 2024

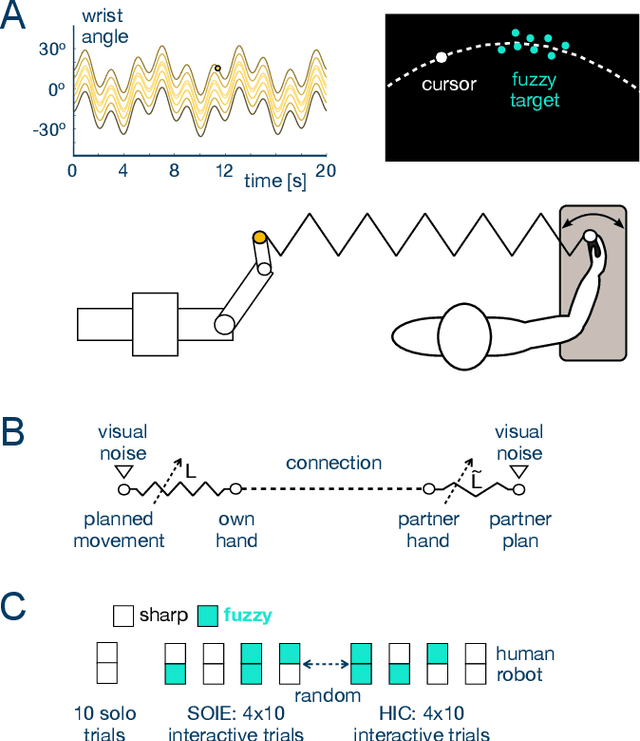

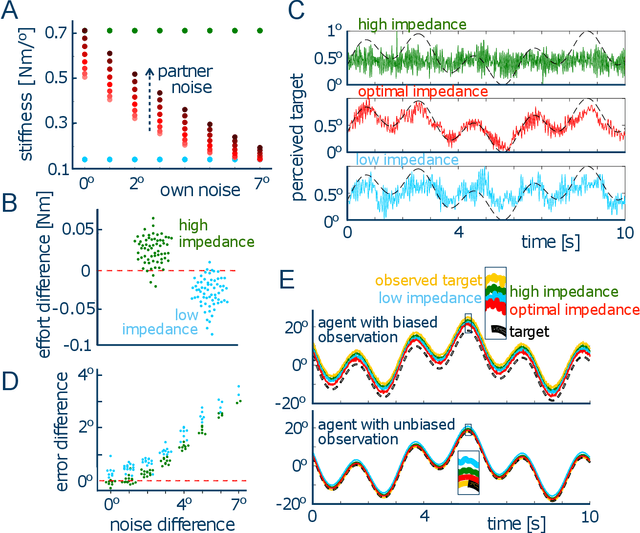

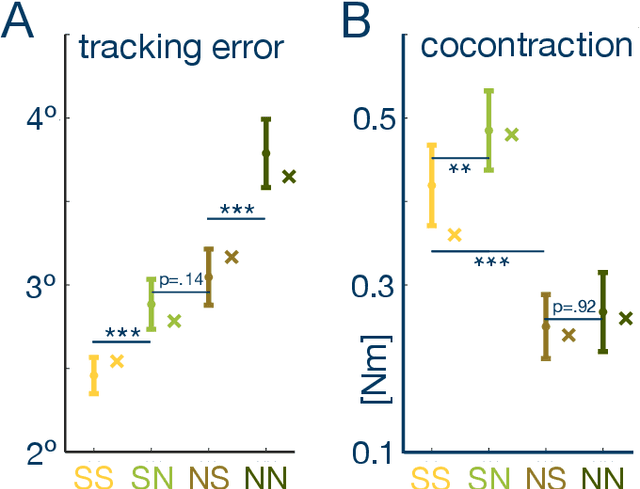

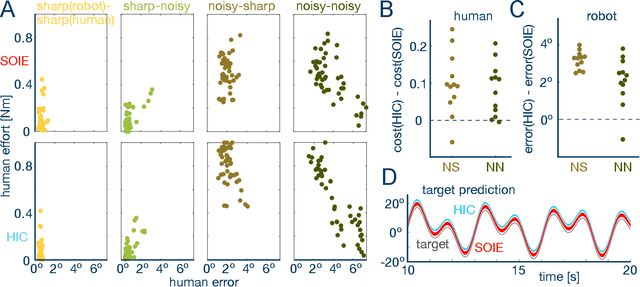

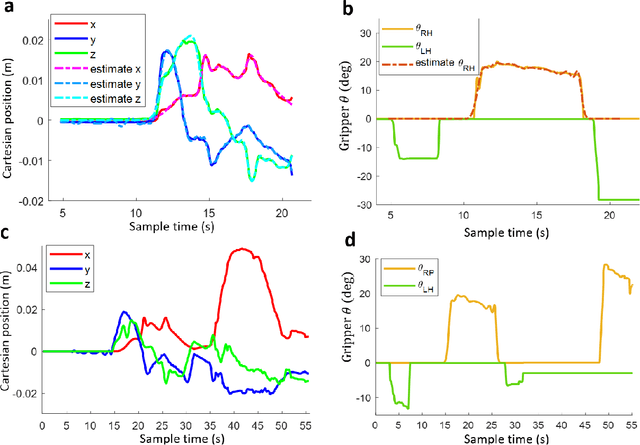

Abstract:To manipulate objects or dance together, humans and robots exchange energy and haptic information. While the exchange of energy in human-robot interaction has been extensively investigated, the underlying exchange of haptic information is not well understood. Here, we develop a computational model of the mechanical and sensory interactions between agents that can tune their viscoelasticity while considering their sensory and motor noise. The resulting stochastic-optimal-information-and-effort (SOIE) controller predicts how the exchange of haptic information and the performance can be improved by adjusting viscoelasticity. This controller was first implemented in a robot-robot experiment with a tracking task, which showed its superior performance compared to either stiff or compliant control. Importantly, the optimal controller also predicts how connected humans alter their muscle activation to improve haptic communication, with differentiated viscoelasticity adjustment to their own sensing noise and haptic perturbations. A human-robot experiment then illustrated the applicability of this optimal control strategy for robots, yielding improved tracking performance and effective haptic communication as the robot adjusted its viscoelasticity according to its own and the user's noise characteristics. The proposed SOIE controller may thus be used to improve haptic communication and collaboration of humans and robots.

Haptic feedback of front car motion can improve driving control

Jul 29, 2024

Abstract:This study investigates the role of haptic feedback in a car-following scenario, where information about the motion of the front vehicle is provided through a virtual elastic connection with it. Using a robotic interface in a simulated driving environment, we examined the impact of varying levels of such haptic feedback on the driver's ability to follow the road while avoiding obstacles. The results of an experiment with 15 subjects indicate that haptic feedback from the front car's motion can significantly improve driving control (i.e., reduce motion jerk and deviation from the road) and reduce mental load (evaluated via questionnaire). This suggests that haptic communication, as observed between physically interacting humans, can be leveraged to improve safety and efficiency in automated driving systems, warranting further testing in real driving scenarios.

Open-Set Object Recognition Using Mechanical Properties During Interaction

Nov 02, 2023

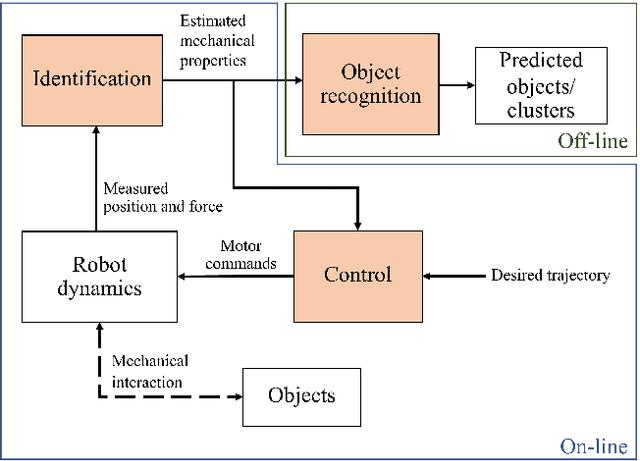

Abstract:While most tactile robots are operated in closed-set conditions, it is challenging for them to operate in open-set conditions where test objects are beyond the robots' knowledge. We propose an open-set recognition framework using mechanical properties to recognise known objects and incrementally label novel objects. The main contribution is a clustering algorithm that exploits knowledge of known objects to estimate cluster centres and sizes, unlike a typical algorithm that randomly selects them. The framework is validated with the mechanical properties estimated from real objects during interaction. The results show that the framework recognises objects better than alternative methods, an improvement attributed to the novelty detector. Importantly, our clustering algorithm yields better clustering performance than other methods. Furthermore, the hyperparameter studies show that cluster size is important to clustering results and needs to be tuned properly.

Mechanical features based object recognition

Oct 14, 2022

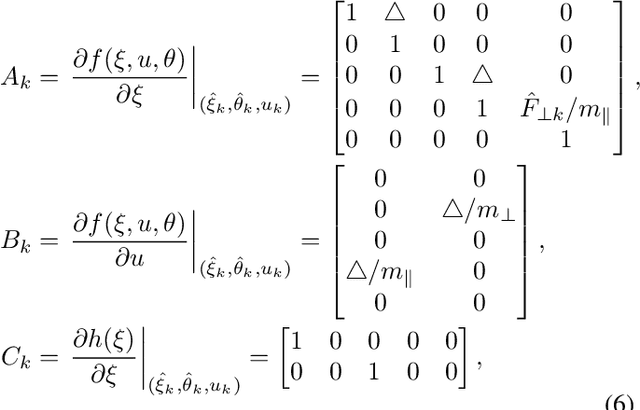

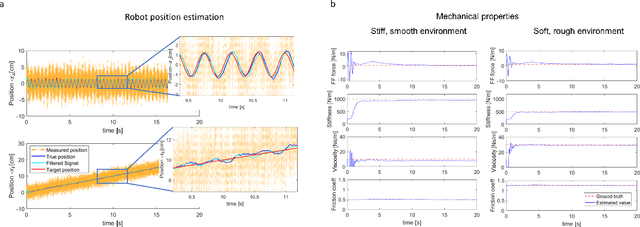

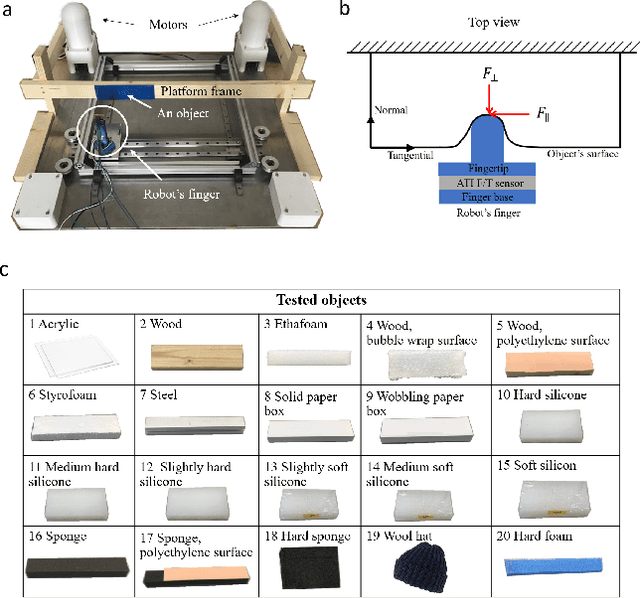

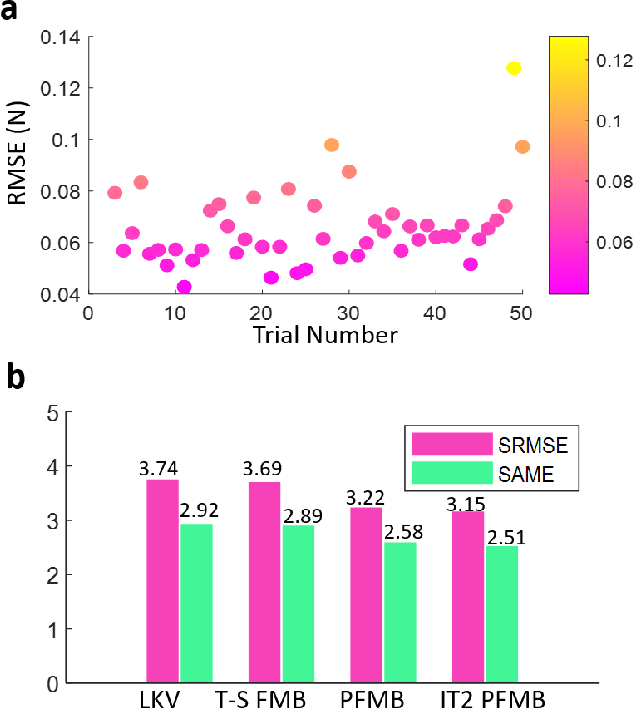

Abstract:Current robotic haptic object recognition relies on statistical measures derived from movement-dependent interaction signals such as force, vibration or position. Mechanical properties that can be identified from these signals are intrinsic object properties that may yield a more robust object representation. Therefore, this paper proposes an object recognition framework using multiple representative mechanical properties: the coefficient of restitution, stiffness, viscosity and friction coefficient. These mechanical properties are identified in real time using a dual Kalman filter, then used to classify objects. The proposed framework was tested with a robot identifying 20 objects through haptic exploration. The results demonstrate the technique's effectiveness and efficiency, and that all four mechanical properties are required for the best recognition, yielding a rate of 98.18 $\pm$ 0.424 %. Clustering with Gaussian mixture models further shows that using these mechanical properties results in superior recognition as compared to using statistical parameters of the interaction signals.
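As a rough illustration of identifying mechanical properties online, the sketch below estimates stiffness and viscosity from position, velocity and force samples with a plain linear Kalman filter over the parameter vector. It is a simplification and not the paper's dual Kalman filter: it assumes position and velocity are known exactly, it assumes the contact model F = k·x + b·v, and it ignores the coefficient of restitution and friction. The function name, noise variances and synthetic data are all hypothetical.

```python
import math
import random


def estimate_stiffness_viscosity(positions, velocities, forces,
                                 meas_var=1e-2, process_var=1e-8):
    """Recursively estimate [k, b] in the assumed model F = k*x + b*v."""
    theta = [0.0, 0.0]                # parameter estimate [k, b]
    P = [[1e3, 0.0], [0.0, 1e3]]      # parameter covariance (vague prior)
    for x, v, f in zip(positions, velocities, forces):
        h = (x, v)                    # measurement row: F = h . theta
        # P h^T, innovation variance, and Kalman gain
        Ph = [P[0][0] * h[0] + P[0][1] * h[1],
              P[1][0] * h[0] + P[1][1] * h[1]]
        s = h[0] * Ph[0] + h[1] * Ph[1] + meas_var
        gain = [Ph[0] / s, Ph[1] / s]
        # Correct the estimate with the force prediction error
        innovation = f - (theta[0] * h[0] + theta[1] * h[1])
        theta = [theta[0] + gain[0] * innovation,
                 theta[1] + gain[1] * innovation]
        # Covariance update (I - K h) P, valid because P is symmetric,
        # plus a small random-walk term so slow parameter drift is tracked
        P = [[P[i][j] - gain[i] * Ph[j] + (process_var if i == j else 0.0)
              for j in range(2)] for i in range(2)]
    return theta


# Synthetic probing motion (hypothetical values): 1 cm sinusoid for 20 s.
rng = random.Random(0)
ts = [0.01 * i for i in range(2000)]
xs = [0.01 * math.sin(t) for t in ts]
vs = [0.01 * math.cos(t) for t in ts]
true_k, true_b = 500.0, 5.0           # N/m and N*s/m
fs = [true_k * x + true_b * v + rng.gauss(0.0, 0.1) for x, v in zip(xs, vs)]

k_hat, b_hat = estimate_stiffness_viscosity(xs, vs, fs)
print(f"estimated stiffness {k_hat:.1f} N/m, viscosity {b_hat:.2f} N*s/m")
```

The estimated parameters could then serve as features for a classifier or for Gaussian mixture clustering, as the abstract describes. A dual filter would additionally estimate the motion state from noisy measurements rather than treating it as known.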

Dynamic neuronal networks efficiently achieve classification in robotic interactions with real-world objects

Oct 12, 2022

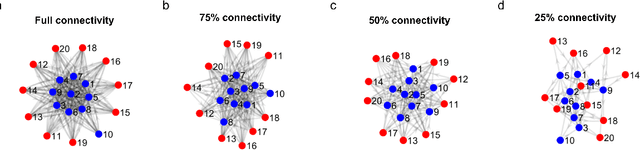

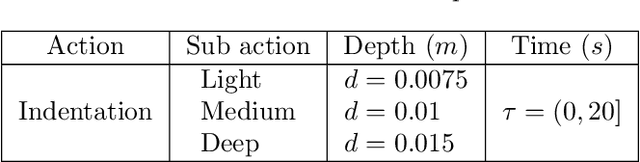

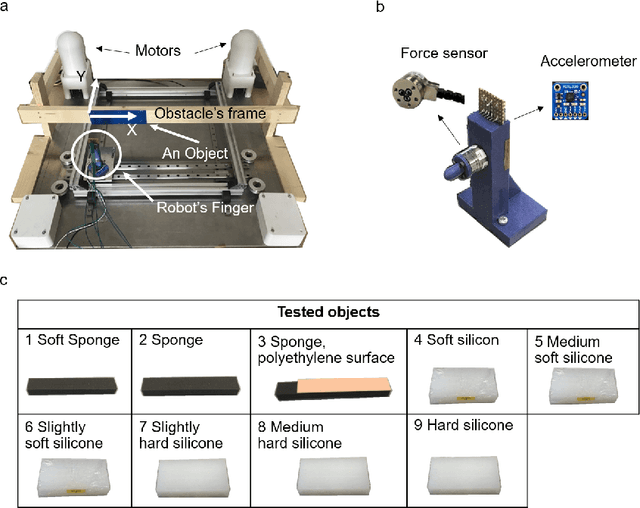

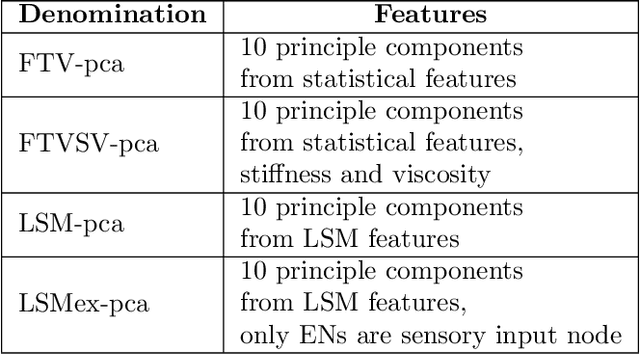

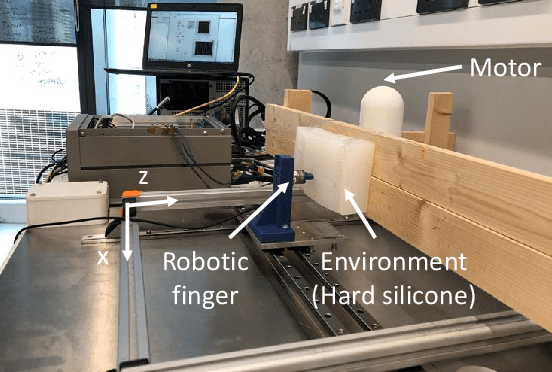

Abstract:Biological cortical networks are potentially fully recurrent networks without any distinct output layer, where recognition may instead rely on the distribution of activity across their neurons. Because such biological networks can have rich dynamics, they are well-designed to cope with dynamical interactions of the types that occur in nature, while traditional machine learning networks may struggle to make sense of such data. Here we connected a simple model neuronal network (LSM), based on the 'linear summation neuron model' featuring biologically realistic dynamics and consisting of 10 excitatory and 10 inhibitory neurons, randomly connected, to a robot finger with multiple types of force sensors while it interacted with materials of different levels of compliance. Our aim was to explore the classification accuracy of the network. To this end, we compared the performance of the network output with principal component analysis of statistical features of the sensory data as well as its mechanical properties. Remarkably, even though the LSM was a very small and untrained network, merely designed to provide rich internal network dynamics while the neuron model itself was highly simplified, we found that the LSM outperformed these other statistical approaches in terms of accuracy.

Shared Control for Bimanual Telesurgery with Optimized Robotic Partner

Jul 12, 2021

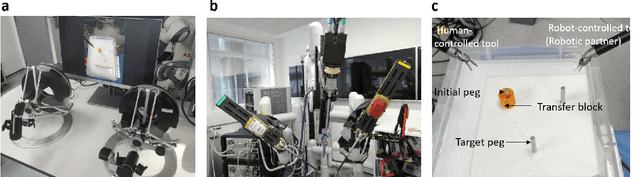

Abstract:Traditional telesurgery relies on the surgeon's full control of the robot on the patient's side, which tends to increase surgeon fatigue and may reduce the efficiency of the operation. This paper introduces a Robotic Partner (RP) to facilitate intuitive bimanual telesurgery, aiming at reducing the surgeon workload and enhancing surgeon-assisted capability. An interval type-2 polynomial fuzzy-model-based learning algorithm is employed to extract expert domain knowledge from surgeons and reflect environmental interaction information. Based on this, a bimanual shared control is developed to interact with the other robot teleoperated by the surgeon, understanding their control and providing assistance. As prior information of the environment model is not required, it reduces reliance on force sensors in control design. Experimental results on the DaVinci Surgical System show that the RP could assist peg-transfer tasks and reduce the surgeon's workload by 51% in force-sensor-free scenarios.
