Xian Yao Ng

Real2Sim or Sim2Real: Robotics Visual Insertion using Deep Reinforcement Learning and Real2Sim Policy Adaptation

Jun 06, 2022

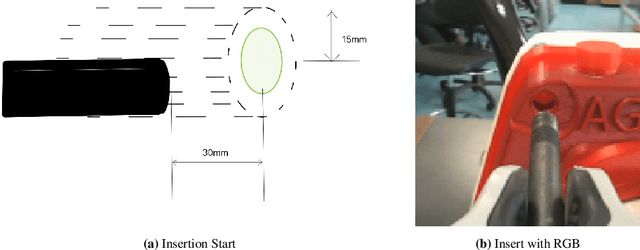

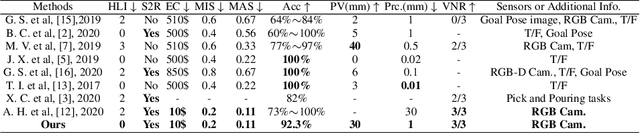

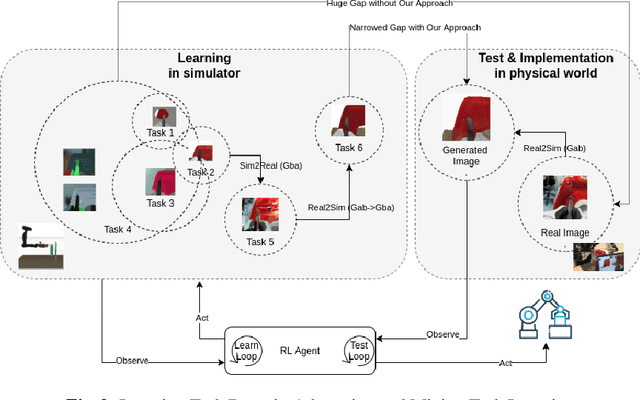

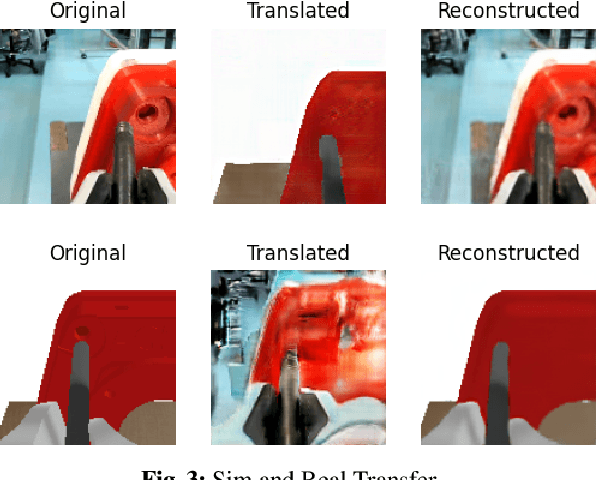

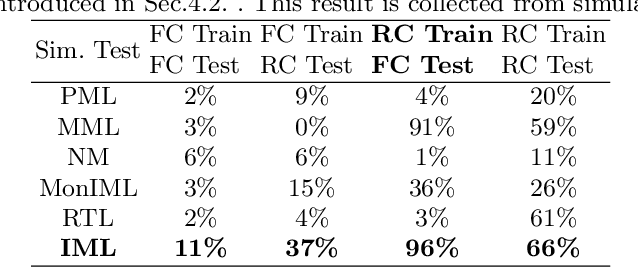

Abstract:Reinforcement learning has shown a wide usage in robotics tasks, such as insertion and grasping. However, without a practical sim2real strategy, the policy trained in simulation could fail on the real task. There are also wide researches in the sim2real strategies, but most of those methods rely on heavy image rendering, domain randomization training, or tuning. In this work, we solve the insertion task using a pure visual reinforcement learning solution with minimum infrastructure requirement. We also propose a novel sim2real strategy, Real2Sim, which provides a novel and easier solution in policy adaptation. We discuss the advantage of Real2Sim compared with Sim2Real.

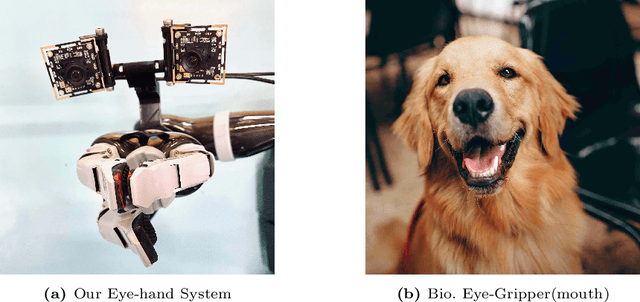

Economical Precise Manipulation and Auto Eye-Hand Coordination with Binocular Visual Reinforcement Learning

May 12, 2022

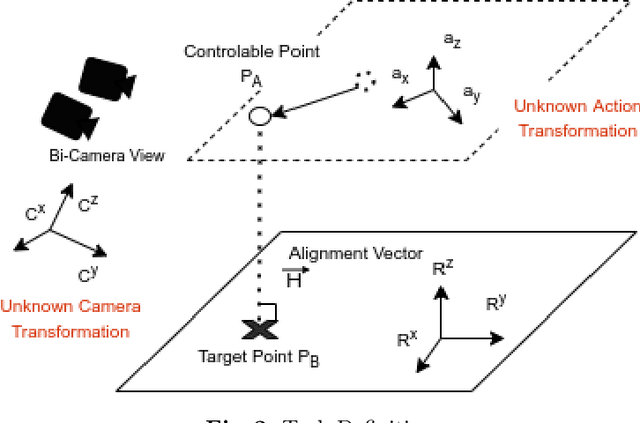

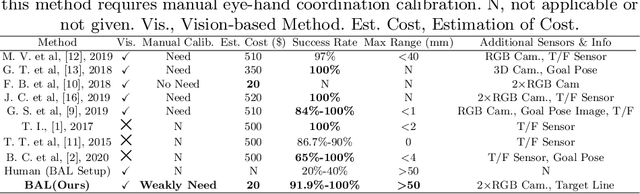

Abstract:Precision robotic manipulation tasks (insertion, screwing, precisely pick, precisely place) are required in many scenarios. Previous methods achieved good performance on such manipulation tasks. However, such methods typically require tedious calibration or expensive sensors. 3D/RGB-D cameras and torque/force sensors add to the cost of the robotic application and may not always be economical. In this work, we aim to solve these but using only weak-calibrated and low-cost webcams. We propose Binocular Alignment Learning (BAL), which could automatically learn the eye-hand coordination and points alignment capabilities to solve the four tasks. Our work focuses on working with unknown eye-hand coordination and proposes different ways of performing eye-in-hand camera calibration automatically. The algorithm was trained in simulation and used a practical pipeline to achieve sim2real and test it on the real robot. Our method achieves a competitively good result with minimal cost on the four tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge