Wubing Chen

BET: Explaining Deep Reinforcement Learning through The Error-Prone Decisions

Jan 14, 2024Abstract:Despite the impressive capabilities of Deep Reinforcement Learning (DRL) agents in many challenging scenarios, their black-box decision-making process significantly limits their deployment in safety-sensitive domains. Several previous self-interpretable works focus on revealing the critical states of the agent's decision. However, they cannot pinpoint the error-prone states. To address this issue, we propose a novel self-interpretable structure, named Backbone Extract Tree (BET), to better explain the agent's behavior by identify the error-prone states. At a high level, BET hypothesizes that states in which the agent consistently executes uniform decisions exhibit a reduced propensity for errors. To effectively model this phenomenon, BET expresses these states within neighborhoods, each defined by a curated set of representative states. Therefore, states positioned at a greater distance from these representative benchmarks are more prone to error. We evaluate BET in various popular RL environments and show its superiority over existing self-interpretable models in terms of explanation fidelity. Furthermore, we demonstrate a use case for providing explanations for the agents in StarCraft II, a sophisticated multi-agent cooperative game. To the best of our knowledge, we are the first to explain such a complex scenarios using a fully transparent structure.

Fidelity-Induced Interpretable Policy Extraction for Reinforcement Learning

Sep 12, 2023Abstract:Deep Reinforcement Learning (DRL) has achieved remarkable success in sequential decision-making problems. However, existing DRL agents make decisions in an opaque fashion, hindering the user from establishing trust and scrutinizing weaknesses of the agents. While recent research has developed Interpretable Policy Extraction (IPE) methods for explaining how an agent takes actions, their explanations are often inconsistent with the agent's behavior and thus, frequently fail to explain. To tackle this issue, we propose a novel method, Fidelity-Induced Policy Extraction (FIPE). Specifically, we start by analyzing the optimization mechanism of existing IPE methods, elaborating on the issue of ignoring consistency while increasing cumulative rewards. We then design a fidelity-induced mechanism by integrate a fidelity measurement into the reinforcement learning feedback. We conduct experiments in the complex control environment of StarCraft II, an arena typically avoided by current IPE methods. The experiment results demonstrate that FIPE outperforms the baselines in terms of interaction performance and consistency, meanwhile easy to understand.

Learning Credit Assignment for Cooperative Reinforcement Learning

Oct 10, 2022

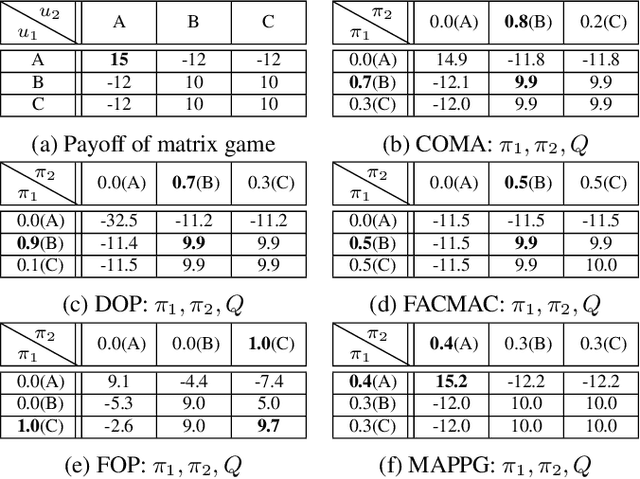

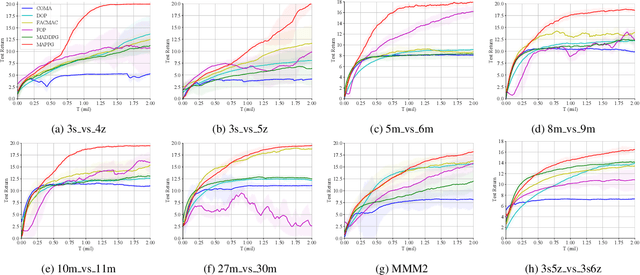

Abstract:Cooperative multi-agent policy gradient (MAPG) algorithms have recently attracted wide attention and are regarded as a general scheme for the multi-agent system. Credit assignment plays an important role in MAPG and can induce cooperation among multiple agents. However, most MAPG algorithms cannot achieve good credit assignment because of the game-theoretic pathology known as \textit{centralized-decentralized mismatch}. To address this issue, this paper presents a novel method, \textit{\underline{M}ulti-\underline{A}gent \underline{P}olarization \underline{P}olicy \underline{G}radient} (MAPPG). MAPPG takes a simple but efficient polarization function to transform the optimal consistency of joint and individual actions into easily realized constraints, thus enabling efficient credit assignment in MAPG. Theoretically, we prove that individual policies of MAPPG can converge to the global optimum. Empirically, we evaluate MAPPG on the well-known matrix game and differential game, and verify that MAPPG can converge to the global optimum for both discrete and continuous action spaces. We also evaluate MAPPG on a set of StarCraft II micromanagement tasks and demonstrate that MAPPG outperforms the state-of-the-art MAPG algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge