Won Kyung Do

TensorTouch: Calibration of Tactile Sensors for High Resolution Stress Tensor and Deformation for Dexterous Manipulation

Jun 09, 2025

Abstract: Advanced dexterous manipulation involving multiple simultaneous contacts across different surfaces, like pinching coins from the ground or manipulating intertwined objects, remains challenging for robotic systems. Such tasks exceed the capabilities of vision and proprioception alone, requiring high-resolution tactile sensing with calibrated physical metrics. Raw optical tactile sensor images, while information-rich, lack interpretability and cross-sensor transferability, limiting their real-world utility. TensorTouch addresses this challenge by integrating finite element analysis with deep learning to extract comprehensive contact information from optical tactile sensors, including stress tensors, deformation fields, and force distributions at pixel-level resolution. The TensorTouch framework achieves sub-millimeter position accuracy and precise force estimation while supporting large sensor deformations crucial for manipulating soft objects. Experimental validation demonstrates 90% success in selectively grasping one of two strings based on detected motion, enabling new contact-rich manipulation capabilities previously inaccessible to robotic systems.

Touch-GS: Visual-Tactile Supervised 3D Gaussian Splatting

Mar 18, 2024

Abstract: In this work, we propose a novel method to supervise 3D Gaussian Splatting (3DGS) scenes using optical tactile sensors. Optical tactile sensors have become widespread in robotics for manipulation and object representation; however, raw optical tactile sensor data is unsuitable for directly supervising a 3DGS scene. Our representation leverages a Gaussian Process Implicit Surface to implicitly represent the object, combining many touches into a unified representation with uncertainty. We merge this model with a monocular depth estimation network, which is aligned in a two-stage process, coarsely aligning with a depth camera and then finely adjusting to match our touch data. For every training image, our method produces a corresponding fused depth and uncertainty map. Utilizing this additional information, we propose a new loss function, variance weighted depth supervised loss, for training the 3DGS scene model. We leverage the DenseTact optical tactile sensor and RealSense RGB-D camera to show that combining touch and vision in this manner leads to quantitatively and qualitatively better results than vision or touch alone in few-view scene synthesis on opaque as well as on reflective and transparent objects. Please see our project page at http://armlabstanford.github.io/touch-gs
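The abstract names a variance weighted depth supervised loss but does not give its formula. As an illustration only, the sketch below shows one plausible form, assuming a squared depth error divided by the per-pixel variance so that confident pixels count more. The function name, the `eps` parameter, and the inverse-variance weighting are assumptions made here and are not taken from the paper.

```python
def variance_weighted_depth_loss(pred, target, variance, eps=1e-6):
    """Mean of squared depth errors, each weighted by 1 / (variance + eps).

    Hypothetical form: the exact loss used by Touch-GS is not stated in
    the abstract. Inputs are flat lists of per-pixel values.
    """
    if not (len(pred) == len(target) == len(variance)):
        raise ValueError("inputs must have the same length")
    if not pred:
        raise ValueError("inputs must be non-empty")
    total = 0.0
    for p, t, v in zip(pred, target, variance):
        # Low-variance (confident) pixels contribute more to the loss.
        total += (p - t) ** 2 / (v + eps)
    return total / len(pred)


# Same depth error, different confidence in the fused depth map.
confident = variance_weighted_depth_loss([1.0], [0.0], [0.01])
uncertain = variance_weighted_depth_loss([1.0], [0.0], [1.0])
```

With this weighting, an error at a pixel with small variance is penalised more heavily than the same error at an uncertain pixel, which is the general idea behind supervising with a fused depth and uncertainty map.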

DenseTact-Mini: An Optical Tactile Sensor for Grasping Multi-Scale Objects From Flat Surfaces

Sep 16, 2023

Abstract: Dexterous manipulation, especially of small daily objects, continues to pose complex challenges in robotics. This paper introduces the DenseTact-Mini, an optical tactile sensor with a soft, rounded, smooth gel surface and compact design equipped with a synthetic fingernail. We propose three distinct grasping strategies: tap grasping using adhesion forces such as electrostatic and van der Waals, fingernail grasping leveraging rolling/sliding contact between the object and fingernail, and fingertip grasping with two soft fingertips. Through comprehensive evaluations, the DenseTact-Mini demonstrates a lifting success rate exceeding 90.2% when grasping various objects, spanning items from 1 mm basil seeds and small paperclips to items nearly 15 mm. This work demonstrates the potential of soft optical tactile sensors for dexterous manipulation and grasping.

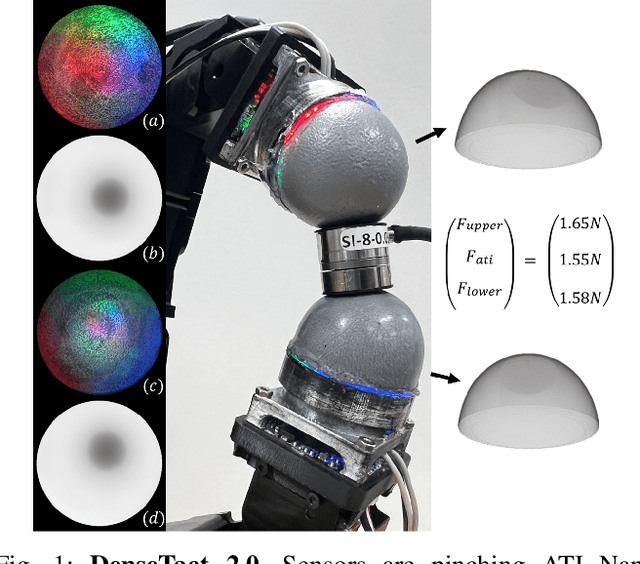

Inter-finger Small Object Manipulation with DenseTact Optical Tactile Sensor

Aug 31, 2023

Abstract: The ability to grasp and manipulate small objects in cluttered environments remains a significant challenge. This paper introduces a novel approach that utilizes a tactile sensor-equipped gripper with eight degrees of freedom to overcome these limitations. We employ DenseTact 2.0 for the gripper, enabling precise control and improved grasp success rates, particularly for small objects ranging from 5 mm to 25 mm. Our integrated strategy incorporates the robot arm, gripper, and sensor to effectively manipulate and orient small objects for subsequent classification. We contribute a specialized dataset designed for classifying these objects based on tactile sensor output and a new control algorithm for in-hand orientation tasks. Our system achieves an 88% grasp success rate and successfully classifies small objects in cluttered scenarios.

Embedded Object Detection and Mapping in Soft Materials Using Optical Tactile Sensing

Aug 21, 2023

Abstract: In this paper, we present a methodology that uses an optical tactile sensor for efficient tactile exploration of embedded objects within soft materials. The methodology consists of an exploration phase, where a probabilistic estimate of the location of the embedded objects is built using a Bayesian approach. The exploration phase is then followed by a mapping phase, which exploits the probabilistic map to reconstruct the underlying topography of the workspace by sampling in more detail the regions where embedded objects are expected. To demonstrate the effectiveness of the method, we tested our approach on an experimental setup consisting of a series of quartz beads located underneath a polyethylene foam that prevents direct observation of the configuration and requires tactile exploration to recover the location of the beads. We show the performance of our methodology using ten different configurations of the beads, where the proposed approach is able to approximate the underlying configuration. We benchmark our results against a random sampling policy.
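The abstract says only that a Bayesian approach builds the probabilistic estimate. The sketch below is a generic illustration of that kind of update, not the authors' model: it assumes a binary "object detected" reading per touched cell, and the sensor-model values `p_hit` and `p_false`, along with all function names, are hypothetical.

```python
def bayes_update(prior, detected, p_hit=0.9, p_false=0.1):
    """Posterior probability that a cell holds an embedded object.

    p_hit   = P(detected | object present)   (assumed value)
    p_false = P(detected | no object)        (assumed value)
    """
    like_obj = p_hit if detected else 1.0 - p_hit
    like_empty = p_false if detected else 1.0 - p_false
    numerator = like_obj * prior
    return numerator / (numerator + like_empty * (1.0 - prior))


def most_likely_cells(belief, k):
    """Indices of the k cells with the highest object probability,
    i.e. candidates for denser sampling in a mapping phase."""
    ranked = sorted(range(len(belief)), key=lambda i: belief[i], reverse=True)
    return ranked[:k]


# Four cells with a uniform prior; touches at cells 1 and 3.
belief = [0.5, 0.5, 0.5, 0.5]
belief[1] = bayes_update(belief[1], detected=True)
belief[3] = bayes_update(belief[3], detected=False)
targets = most_likely_cells(belief, k=1)
```

After these two touches the belief at cell 1 rises and the belief at cell 3 falls, so a mapping phase that follows this ranking would sample around cell 1 first.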

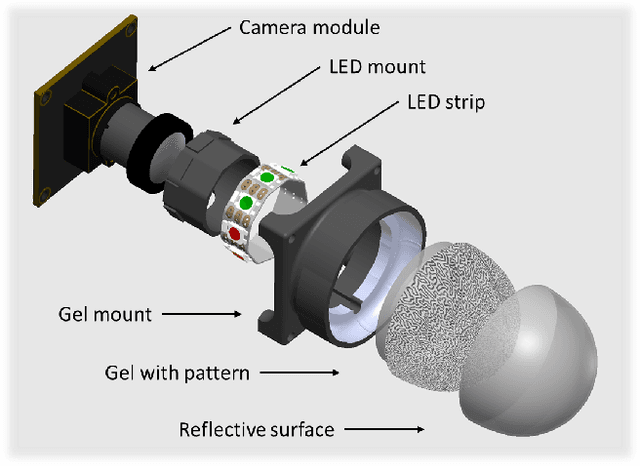

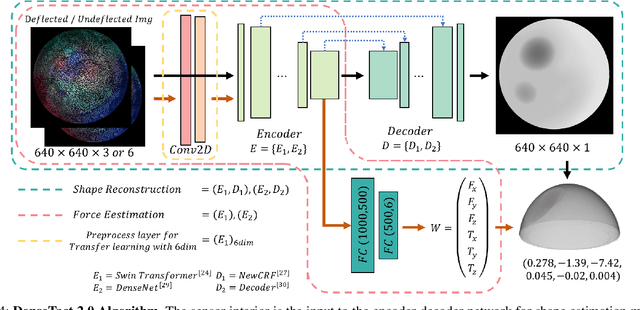

DenseTact 2.0: Optical Tactile Sensor for Shape and Force Reconstruction

Sep 21, 2022

Abstract: Collaborative robots stand to have an immense impact both on human welfare in domestic service applications and on industrial superiority in advanced manufacturing that requires dexterous assembly. The outstanding challenge is providing robotic fingertips with a physical design that makes them adept at performing dexterous tasks that require high-resolution, calibrated shape reconstruction and force sensing. In this work, we present DenseTact 2.0, an optical-tactile sensor capable of visualizing the deformed surface of a soft fingertip and using that image in a neural network to perform both calibrated shape reconstruction and 6-axis wrench estimation. We demonstrate a sensor accuracy of 0.3633 mm per pixel for shape reconstruction, 0.410 N for forces, and 0.387 mmNm for torques, as well as the ability to calibrate new fingers through transfer learning, achieving comparable performance while training more than four times faster with only 12% of the dataset size.

DenseTact: Optical Tactile Sensor for Dense Shape Reconstruction

Jan 04, 2022

Abstract: Increasing the performance of tactile sensing in robots enables versatile, in-hand manipulation. Vision-based tactile sensors have been widely used, as rich tactile feedback has been shown to be correlated with increased performance in manipulation tasks. Existing tactile sensor solutions with high resolution have limitations that include low accuracy, expensive components, or lack of scalability. In this paper, an inexpensive, scalable, and compact tactile sensor with high-resolution surface deformation modeling for surface reconstruction of the 3D sensor surface is proposed. By measuring the image from the fisheye camera, it is shown that the sensor can successfully estimate the surface deformation in real-time (1.8 ms) by using deep convolutional neural networks. This sensor, in its design and sensing abilities, represents a significant step toward better object in-hand localization, classification, and surface estimation, all enabled by high-resolution shape reconstruction.
