Weiyu Lan

COCO-CN for Cross-Lingual Image Tagging, Captioning and Retrieval

May 22, 2018

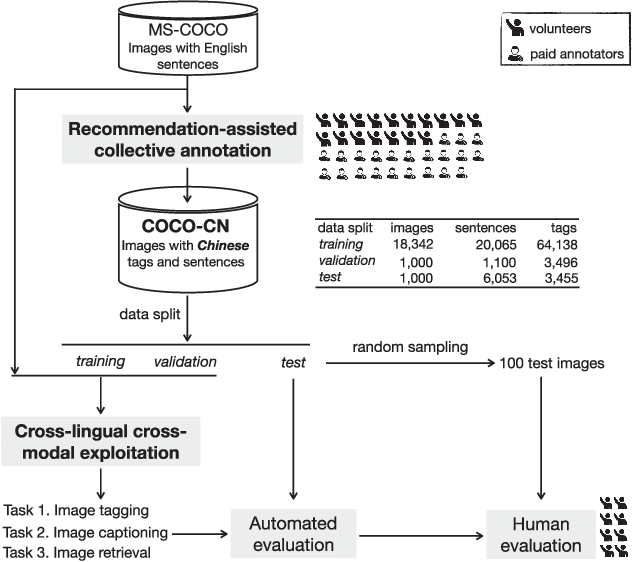

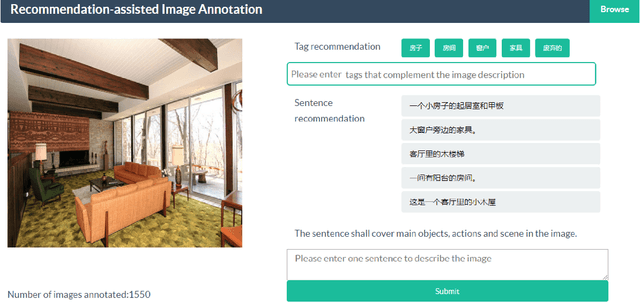

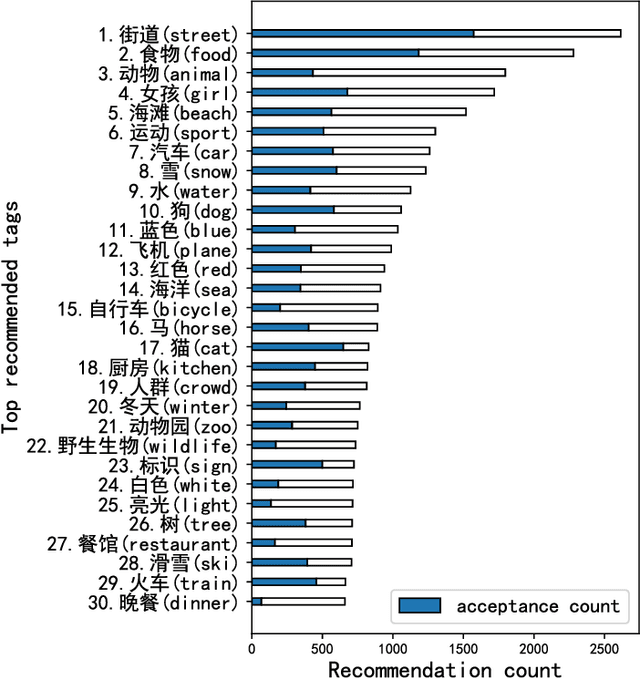

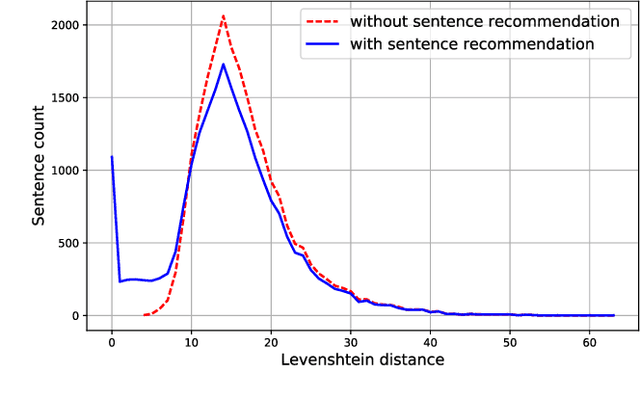

Abstract:This paper contributes to cross-lingual image annotation and retrieval in terms of data and methods. We propose COCO-CN, a novel dataset enriching MS-COCO with manually written Chinese sentences and tags. For more effective annotation acquisition, we develop a recommendation-assisted collective annotation system, automatically providing an annotator with several tags and sentences deemed to be relevant with respect to the pictorial content. Having 20,342 images annotated with 27,218 Chinese sentences and 70,993 tags, COCO-CN is currently the largest Chinese-English dataset applicable for cross-lingual image tagging, captioning and retrieval. We develop methods per task for effectively learning from cross-lingual resources. Extensive experiments on the multiple tasks justify the viability of our dataset and methods.

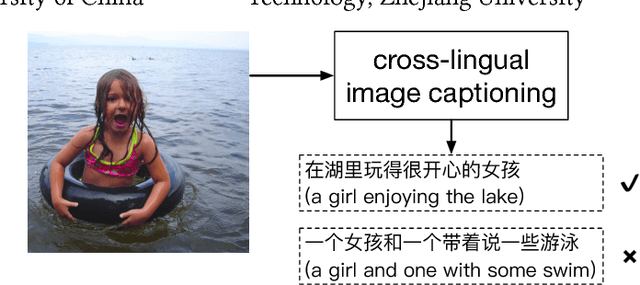

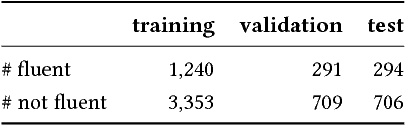

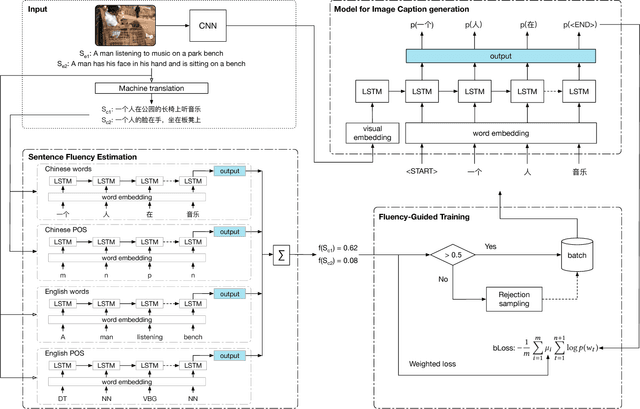

Fluency-Guided Cross-Lingual Image Captioning

Aug 15, 2017

Abstract:Image captioning has so far been explored mostly in English, as most available datasets are in this language. However, the application of image captioning should not be restricted by language. Only few studies have been conducted for image captioning in a cross-lingual setting. Different from these works that manually build a dataset for a target language, we aim to learn a cross-lingual captioning model fully from machine-translated sentences. To conquer the lack of fluency in the translated sentences, we propose in this paper a fluency-guided learning framework. The framework comprises a module to automatically estimate the fluency of the sentences and another module to utilize the estimated fluency scores to effectively train an image captioning model for the target language. As experiments on two bilingual (English-Chinese) datasets show, our approach improves both fluency and relevance of the generated captions in Chinese, but without using any manually written sentences from the target language.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge