Vicente Dominguez

Do Better ImageNet Models Transfer Better for Image Recommendation?

Sep 25, 2018

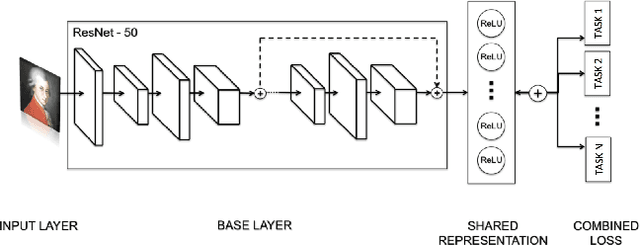

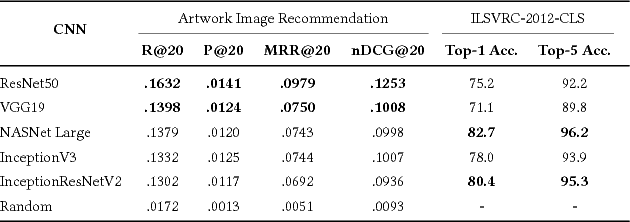

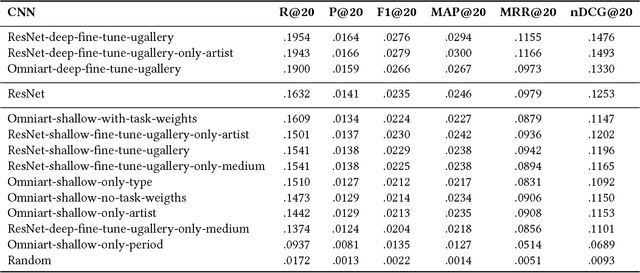

Abstract:Visual embeddings from Convolutional Neural Networks (CNN) trained on the ImageNet dataset for the ILSVRC challenge have shown consistently good performance for transfer learning and are widely used in several tasks, including image recommendation. However, some important questions have not yet been answered in order to use these embeddings for a larger scope of recommendation domains: a) Do CNNs that perform better in ImageNet are also better for transfer learning in content-based image recommendation?, b) Does fine-tuning help to improve performance? and c) Which is the best way to perform the fine-tuning? In this paper we compare several CNN models pre-trained with ImageNet to evaluate their transfer learning performance to an artwork image recommendation task. Our results indicate that models with better performance in the ImageNet challenge do not always imply better transfer learning for recommendation tasks (e.g. NASNet vs. ResNet). Our results also show that fine-tuning can be helpful even with a small dataset, but not every fine-tuning works. Our results can inform other researchers and practitioners on how to train their CNNs for better transfer learning towards image recommendation systems.

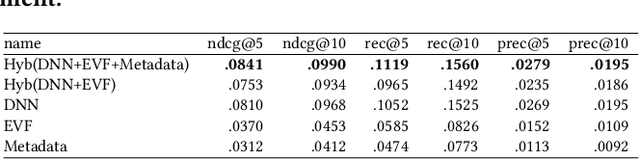

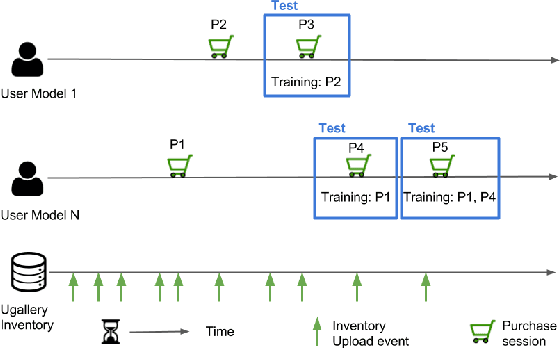

Exploring Content-based Artwork Recommendation with Metadata and Visual Features

Oct 23, 2017

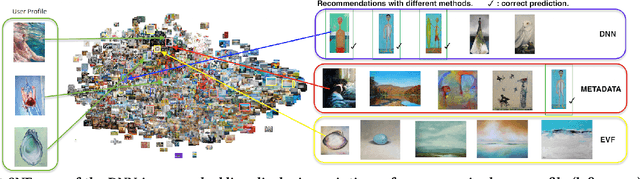

Abstract:Compared to other areas, artwork recommendation has received little attention, despite the continuous growth of the artwork market. Previous research has relied on ratings and metadata to make artwork recommendations, as well as visual features extracted with deep neural networks (DNN). However, these features have no direct interpretation to explicit visual features (e.g. brightness, texture) which might hinder explainability and user-acceptance. In this work, we study the impact of artwork metadata as well as visual features (DNN-based and attractiveness-based) for physical artwork recommendation, using images and transaction data from the UGallery online artwork store. Our results indicate that: (i) visual features perform better than manually curated data, (ii) DNN-based visual features perform better than attractiveness-based ones, and (iii) a hybrid approach improves the performance further. Our research can inform the development of new artwork recommenders relying on diverse content data.

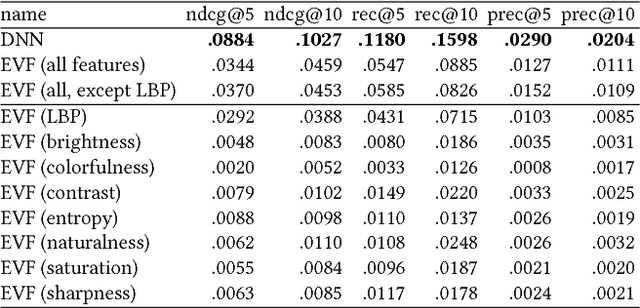

Comparing Neural and Attractiveness-based Visual Features for Artwork Recommendation

Jul 21, 2017

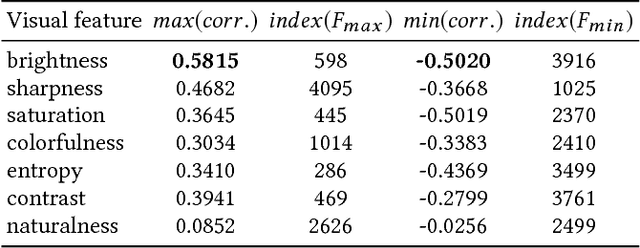

Abstract:Advances in image processing and computer vision in the latest years have brought about the use of visual features in artwork recommendation. Recent works have shown that visual features obtained from pre-trained deep neural networks (DNNs) perform very well for recommending digital art. Other recent works have shown that explicit visual features (EVF) based on attractiveness can perform well in preference prediction tasks, but no previous work has compared DNN features versus specific attractiveness-based visual features (e.g. brightness, texture) in terms of recommendation performance. In this work, we study and compare the performance of DNN and EVF features for the purpose of physical artwork recommendation using transactional data from UGallery, an online store of physical paintings. In addition, we perform an exploratory analysis to understand if DNN embedded features have some relation with certain EVF. Our results show that DNN features outperform EVF, that certain EVF features are more suited for physical artwork recommendation and, finally, we show evidence that certain neurons in the DNN might be partially encoding visual features such as brightness, providing an opportunity for explaining recommendations based on visual neural models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge