V. K. Viekash

Paced-Curriculum Distillation with Prediction and Label Uncertainty for Image Segmentation

Feb 02, 2023Abstract:Purpose: In curriculum learning, the idea is to train on easier samples first and gradually increase the difficulty, while in self-paced learning, a pacing function defines the speed to adapt the training progress. While both methods heavily rely on the ability to score the difficulty of data samples, an optimal scoring function is still under exploration. Methodology: Distillation is a knowledge transfer approach where a teacher network guides a student network by feeding a sequence of random samples. We argue that guiding student networks with an efficient curriculum strategy can improve model generalization and robustness. For this purpose, we design an uncertainty-based paced curriculum learning in self distillation for medical image segmentation. We fuse the prediction uncertainty and annotation boundary uncertainty to develop a novel paced-curriculum distillation (PCD). We utilize the teacher model to obtain prediction uncertainty and spatially varying label smoothing with Gaussian kernel to generate segmentation boundary uncertainty from the annotation. We also investigate the robustness of our method by applying various types and severity of image perturbation and corruption. Results: The proposed technique is validated on two medical datasets of breast ultrasound image segmentation and robotassisted surgical scene segmentation and achieved significantly better performance in terms of segmentation and robustness. Conclusion: P-CD improves the performance and obtains better generalization and robustness over the dataset shift. While curriculum learning requires extensive tuning of hyper-parameters for pacing function, the level of performance improvement suppresses this limitation.

FAZSeg: A New User-Friendly Software for Quantification of the Foveal Avascular Zone

Nov 22, 2021

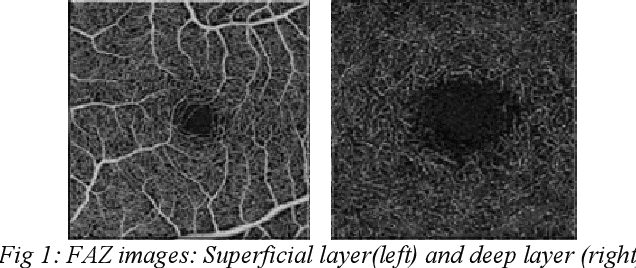

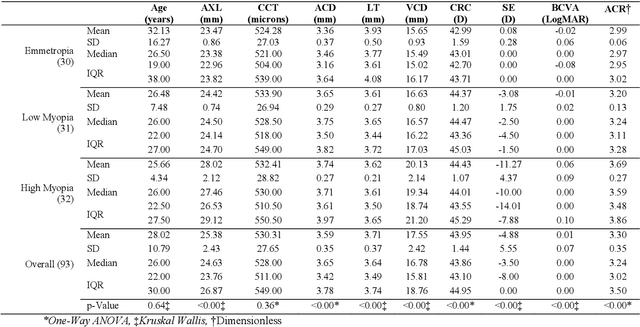

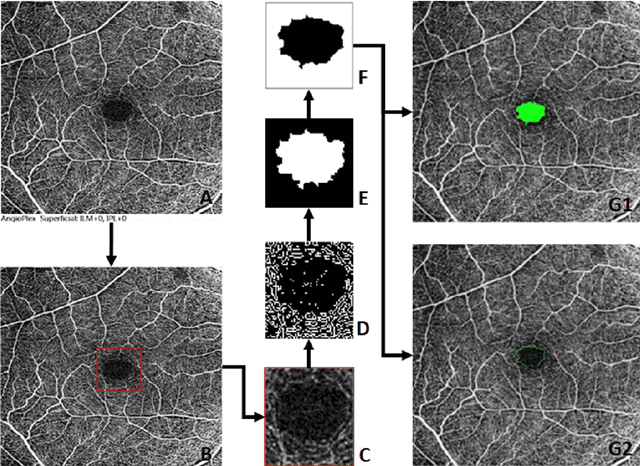

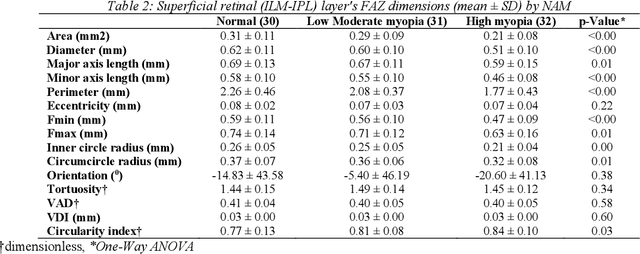

Abstract:Various ocular diseases and high myopia influence the anatomical reference point Foveal Avascular Zone (FAZ) dimensions. Therefore, it is important to segment and quantify the FAZs dimensions accurately. To the best of our knowledge, there is no automated tool or algorithms available to segment the FAZ's deep retinal layer. The paper describes a new open-access software with a user-friendly Graphical User Interface (GUI) and compares the results with the ground truth (manual segmentation).

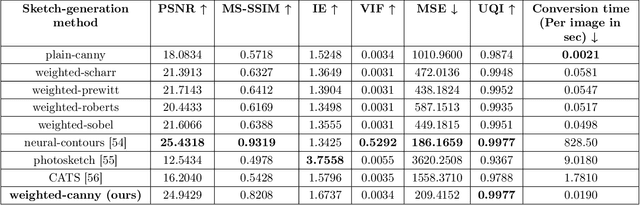

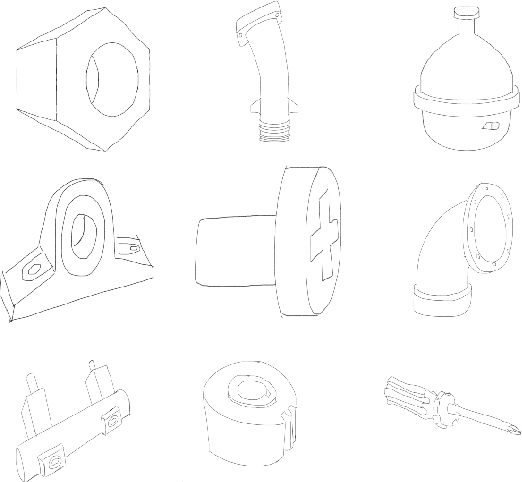

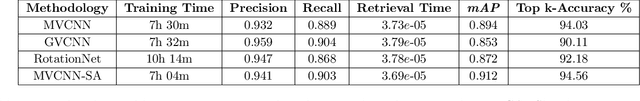

'CADSketchNet' -- An Annotated Sketch dataset for 3D CAD Model Retrieval with Deep Neural Networks

Jul 20, 2021

Abstract:Ongoing advancements in the fields of 3D modelling and digital archiving have led to an outburst in the amount of data stored digitally. Consequently, several retrieval systems have been developed depending on the type of data stored in these databases. However, unlike text data or images, performing a search for 3D models is non-trivial. Among 3D models, retrieving 3D Engineering/CAD models or mechanical components is even more challenging due to the presence of holes, volumetric features, presence of sharp edges etc., which make CAD a domain unto itself. The research work presented in this paper aims at developing a dataset suitable for building a retrieval system for 3D CAD models based on deep learning. 3D CAD models from the available CAD databases are collected, and a dataset of computer-generated sketch data, termed 'CADSketchNet', has been prepared. Additionally, hand-drawn sketches of the components are also added to CADSketchNet. Using the sketch images from this dataset, the paper also aims at evaluating the performance of various retrieval system or a search engine for 3D CAD models that accepts a sketch image as the input query. Many experimental models are constructed and tested on CADSketchNet. These experiments, along with the model architecture, choice of similarity metrics are reported along with the search results.

* Computers & Graphics Journal, Special Section on 3DOR 2021

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge