Tushar M. Athawale

REV-INR: Regularized Evidential Implicit Neural Representation for Uncertainty-Aware Volume Visualization

Jan 25, 2026

Abstract: Implicit Neural Representations (INRs) have emerged as a promising deep learning approach for compactly representing large volumetric datasets. These models can act as surrogates for volume data, enabling efficient storage and on-demand reconstruction via model predictions. However, conventional deterministic INRs provide only value predictions, without insight into the model's prediction uncertainty or the impact of inherent noise in the data. This limitation can lead to unreliable data interpretation and visualization due to prediction inaccuracies in the reconstructed volume. Identifying erroneous results extracted from model-predicted data may be infeasible, as the raw data may be unavailable due to its large size. To address this challenge, we introduce REV-INR, Regularized Evidential Implicit Neural Representation, which learns to predict data values accurately along with the associated coordinate-level data uncertainty and model uncertainty using only a single forward pass of the trained REV-INR during inference. By comprehensively comparing and contrasting REV-INR with existing well-established deep uncertainty estimation methods, we show that REV-INR achieves the best volume reconstruction quality with robust data (aleatoric) and model (epistemic) uncertainty estimates and the fastest inference time. Consequently, we demonstrate that REV-INR facilitates assessment of the reliability and trustworthiness of the extracted isosurfaces and volume visualization results, enabling analyses to be driven solely by model-predicted data.
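The abstract does not say which evidential distribution REV-INR predicts. As a rough sketch only, assuming the Normal-Inverse-Gamma parameterization that is common in deep evidential regression (an assumption, not a detail confirmed by the abstract), the two uncertainties can be read off the predicted parameters in closed form, which is why a single forward pass suffices. The function name and parameter names below are hypothetical.

```python
def nig_uncertainties(nu, alpha, beta):
    """Return (aleatoric, epistemic) variances from Normal-Inverse-Gamma
    parameters, as in standard deep evidential regression.

    ASSUMPTION: this parameterization is illustrative; the abstract does
    not confirm that REV-INR uses it.
    """
    if nu <= 0 or alpha <= 1 or beta <= 0:
        raise ValueError("requires nu > 0, alpha > 1, beta > 0")
    aleatoric = beta / (alpha - 1.0)         # expected data noise, E[sigma^2]
    epistemic = beta / (nu * (alpha - 1.0))  # variance of the predicted mean, Var[mu]
    return aleatoric, epistemic
```

Under this assumption, a larger `nu` (more "virtual evidence") shrinks the epistemic term while leaving the aleatoric term unchanged.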

An Entropy-Based Test and Development Framework for Uncertainty Modeling in Level-Set Visualizations

Sep 13, 2024

Abstract: We present a simple comparative framework for testing and developing uncertainty modeling in uncertain marching cubes implementations. The selection of a model to represent the probability distribution of uncertain values directly influences the memory use, run time, and accuracy of an uncertainty visualization algorithm. We use an entropy calculation directly on ensemble data to establish an expected result and then compare the entropy from various probability models, including uniform, Gaussian, histogram, and quantile models. Our results verify that models matching the distribution of the ensemble indeed match the entropy. We further show that fewer bins in nonparametric histogram models are more effective, whereas large numbers of bins in quantile models approach data accuracy.
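The comparison described above can be illustrated with a much-reduced sketch. Instead of entropy over marching cubes cases, it considers a single grid vertex and the binary event "value is above the isovalue", then compares the entropy obtained directly from the ensemble with the entropy under uniform and Gaussian models fitted to the same samples. All function names are hypothetical, and the single-vertex simplification is an assumption made for brevity.

```python
import math
from statistics import NormalDist, fmean, pstdev

def binary_entropy(p):
    """Shannon entropy (in bits) of a two-outcome event with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def p_above_empirical(samples, isovalue):
    """Fraction of ensemble members above the isovalue (the reference result)."""
    return sum(v > isovalue for v in samples) / len(samples)

def p_above_uniform(samples, isovalue):
    """Probability above the isovalue under a uniform model on [min, max]."""
    lo, hi = min(samples), max(samples)
    if hi == lo:
        return 1.0 if lo > isovalue else 0.0
    return min(1.0, max(0.0, (hi - isovalue) / (hi - lo)))

def p_above_gaussian(samples, isovalue):
    """Probability above the isovalue under a Gaussian fitted to the samples."""
    mu, sigma = fmean(samples), pstdev(samples)
    if sigma == 0.0:
        return 1.0 if mu > isovalue else 0.0
    return 1.0 - NormalDist(mu, sigma).cdf(isovalue)

# A skewed toy ensemble: the uniform model misjudges the crossing probability.
ensemble = [0.0, 0.0, 0.0, 10.0]
reference = binary_entropy(p_above_empirical(ensemble, 5.0))
uniform = binary_entropy(p_above_uniform(ensemble, 5.0))
```

In this toy case the uniform model assigns probability 0.5 where the ensemble gives 0.25, so its entropy departs from the reference, which is the kind of mismatch the framework is meant to expose.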

Uncertainty-Informed Volume Visualization using Implicit Neural Representation

Aug 12, 2024

Abstract: The increasing adoption of Deep Neural Networks (DNNs) has led to their application in many challenging scientific visualization tasks. While advanced DNNs offer impressive generalization capabilities, understanding factors such as model prediction quality, robustness, and uncertainty is crucial. These insights can enable domain scientists to make informed decisions about their data. However, DNNs inherently lack the ability to estimate prediction uncertainty, necessitating new research to construct robust uncertainty-aware visualization techniques tailored for various visualization tasks. In this work, we propose uncertainty-aware implicit neural representations to model scalar field data sets effectively and comprehensively study the efficacy and benefits of estimated uncertainty information for volume visualization tasks. We evaluate the effectiveness of two principled deep uncertainty estimation techniques: (1) Deep Ensemble and (2) Monte Carlo Dropout (MCDropout). These techniques enable uncertainty-informed volume visualization in scalar field data sets. Our extensive exploration across multiple data sets demonstrates that uncertainty-aware models produce informative volume visualization results. Moreover, integrating prediction uncertainty enhances the trustworthiness of our DNN model, making it suitable for robustly analyzing and visualizing real-world scientific volumetric data sets.
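Both techniques named above reduce, at inference time, to the same aggregation step: query several predictors at a coordinate and summarize the spread. The sketch below shows only that step, with plain Python callables standing in for trained networks; the function name and the toy predictors are hypothetical and not taken from the paper.

```python
from statistics import fmean, pvariance

def predictive_mean_and_variance(predictors, coord):
    """Aggregate several predictions at one coordinate.

    For a Deep Ensemble, `predictors` would be independently trained models.
    For MC Dropout, it would be the same model called repeatedly with
    dropout left active. Here they are stand-in callables (an assumption).
    """
    predictions = [predict(coord) for predict in predictors]
    return fmean(predictions), pvariance(predictions)

# Toy stand-ins for three ensemble members that disagree slightly.
members = [lambda c: 1.0, lambda c: 2.0, lambda c: 3.0]
mean, variance = predictive_mean_and_variance(members, (0.5, 0.5, 0.5))
```

The mean serves as the reconstructed scalar value, and the variance can be mapped to color or opacity to flag regions where the members disagree.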

Accelerated Probabilistic Marching Cubes by Deep Learning for Time-Varying Scalar Ensembles

Jul 15, 2022

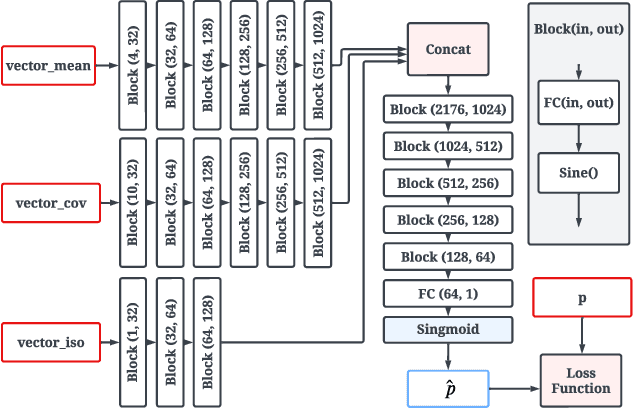

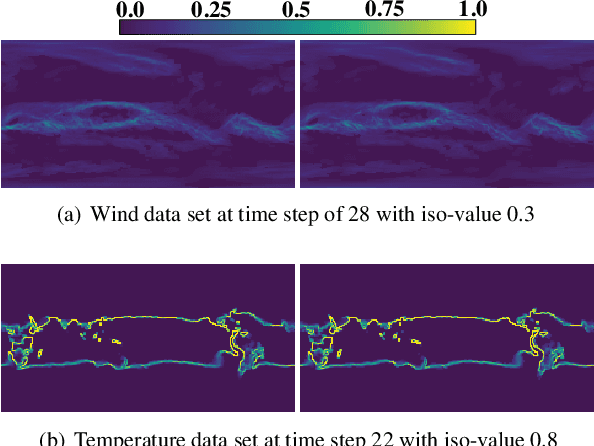

Abstract: Visualizing the uncertainty of ensemble simulations is challenging due to the large size and the multivariate and temporal features of ensemble data sets. One popular approach to studying the uncertainty of ensembles is analyzing the positional uncertainty of the level sets. Probabilistic marching cubes is a technique that performs Monte Carlo sampling of multivariate Gaussian noise distributions for positional uncertainty visualization of level sets. However, the technique suffers from high computational time, making interactive visualization and analysis impossible to achieve. This paper introduces a deep-learning-based approach to learning the level-set uncertainty for two-dimensional ensemble data with a multivariate Gaussian noise assumption. We train the model using the first few time steps from time-varying ensemble data in our workflow. We demonstrate that our trained model accurately infers uncertainty in level sets for new time steps and is up to 170X faster than the original probabilistic model with serial computation and 10X faster than the original parallel computation.
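The Monte Carlo step that the learned model is meant to replace can be sketched for a single 2D grid cell. This simplified version assumes independent Gaussian noise at each of the four corners rather than a full multivariate covariance, and the function name and parameters are hypothetical.

```python
import random

def crossing_probability(means, stds, isovalue, n_samples=1000, seed=0):
    """Estimate the probability that the isocontour crosses one 2D cell.

    ASSUMPTION: corner values are drawn from independent Gaussians; the
    original technique samples a multivariate Gaussian with correlations.
    """
    rng = random.Random(seed)
    crossed = 0
    for _ in range(n_samples):
        corners = [rng.gauss(m, s) for m, s in zip(means, stds)]
        above = [v > isovalue for v in corners]
        # The contour crosses the cell when the corners disagree in sign.
        if any(above) and not all(above):
            crossed += 1
    return crossed / n_samples

# Two corners well below and two well above the isovalue: almost surely crossed.
p = crossing_probability([0.0, 0.0, 10.0, 10.0], [0.01] * 4, isovalue=5.0)
```

Repeating this loop for every cell and time step is what makes the sampling approach expensive, which motivates replacing it with a trained model.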
