Thanapon Noraset

Some Insights of Construction of Feature Graph to Learn Pairwise Feature Interactions with Graph Neural Networks

Feb 19, 2025Abstract:Feature interaction is crucial in predictive machine learning models, as it captures the relationships between features that influence model performance. In this work, we focus on pairwise interactions and investigate their importance in constructing feature graphs for Graph Neural Networks (GNNs). Rather than proposing new methods, we leverage existing GNN models and tools to explore the relationship between feature graph structures and their effectiveness in modeling interactions. Through experiments on synthesized datasets, we uncover that edges between interacting features are important for enabling GNNs to model feature interactions effectively. We also observe that including non-interaction edges can act as noise, degrading model performance. Furthermore, we provide theoretical support for sparse feature graph selection using the Minimum Description Length (MDL) principle. We prove that feature graphs retaining only necessary interaction edges yield a more efficient and interpretable representation than complete graphs, aligning with Occam's Razor. Our findings offer both theoretical insights and practical guidelines for designing feature graphs that improve the performance and interpretability of GNN models.

Multi-sense Definition Modeling using Word Sense Decompositions

Sep 19, 2019

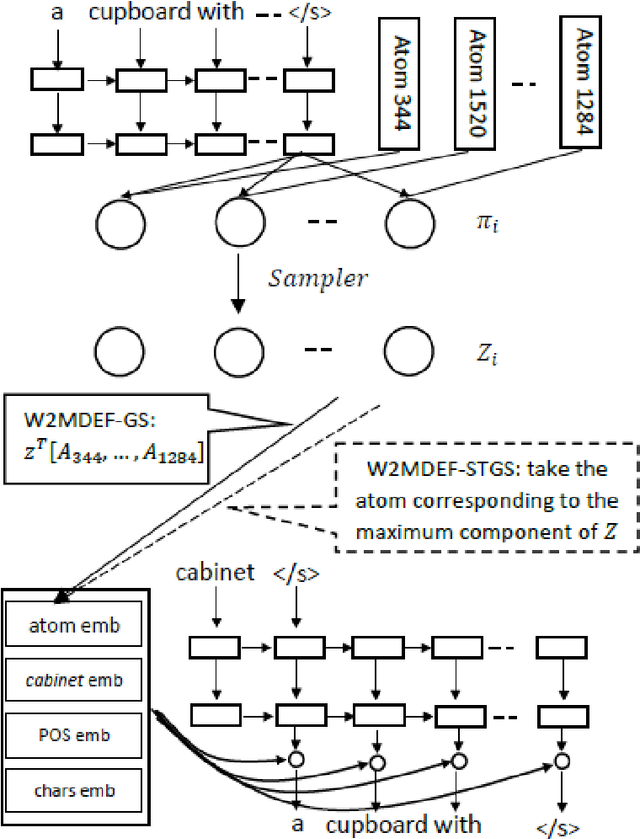

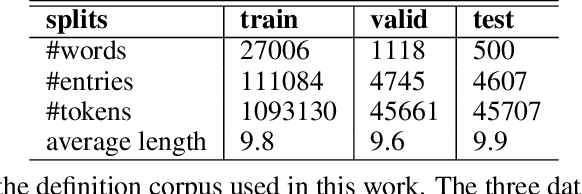

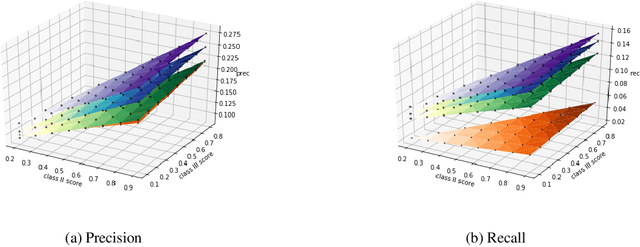

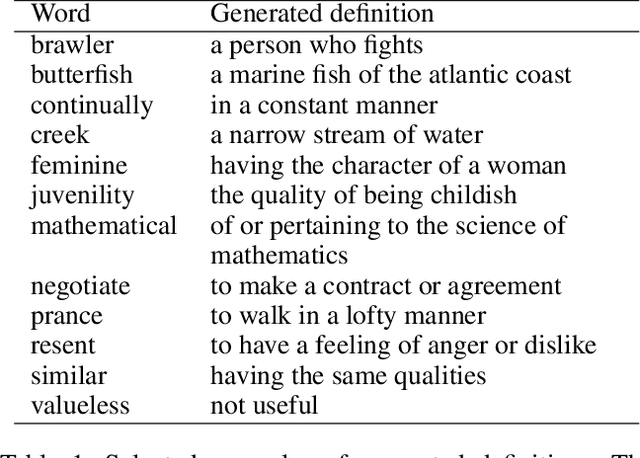

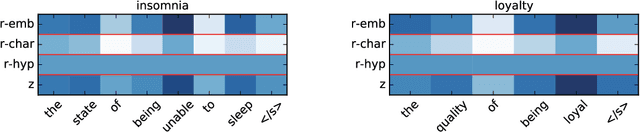

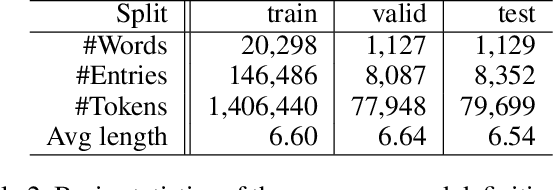

Abstract:Word embeddings capture syntactic and semantic information about words. Definition modeling aims to make the semantic content in each embedding explicit, by outputting a natural language definition based on the embedding. However, existing definition models are limited in their ability to generate accurate definitions for different senses of the same word. In this paper, we introduce a new method that enables definition modeling for multiple senses. We show how a Gumble-Softmax approach outperforms baselines at matching sense-specific embeddings to definitions during training. In experiments, our multi-sense definition model improves recall over a state-of-the-art single-sense definition model by a factor of three, without harming precision.

Definition Modeling: Learning to define word embeddings in natural language

Dec 01, 2016

Abstract:Distributed representations of words have been shown to capture lexical semantics, as demonstrated by their effectiveness in word similarity and analogical relation tasks. But, these tasks only evaluate lexical semantics indirectly. In this paper, we study whether it is possible to utilize distributed representations to generate dictionary definitions of words, as a more direct and transparent representation of the embeddings' semantics. We introduce definition modeling, the task of generating a definition for a given word and its embedding. We present several definition model architectures based on recurrent neural networks, and experiment with the models over multiple data sets. Our results show that a model that controls dependencies between the word being defined and the definition words performs significantly better, and that a character-level convolution layer designed to leverage morphology can complement word-level embeddings. Finally, an error analysis suggests that the errors made by a definition model may provide insight into the shortcomings of word embeddings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge