Taha Rajabzadeh

Controlling the Latent Space of GANs through Reinforcement Learning: A Case Study on Task-based Image-to-Image Translation

Jul 26, 2023

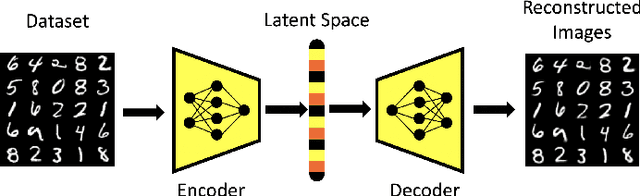

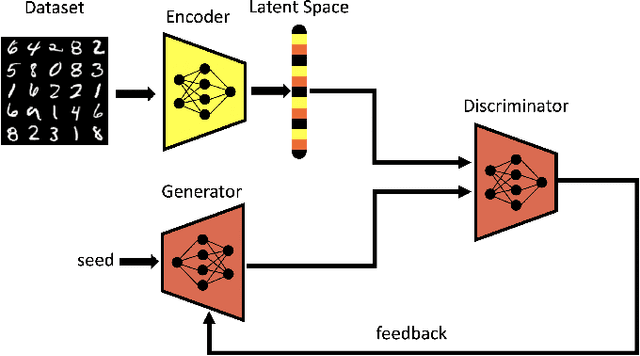

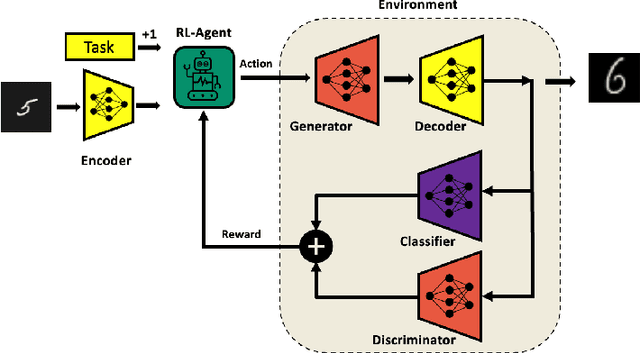

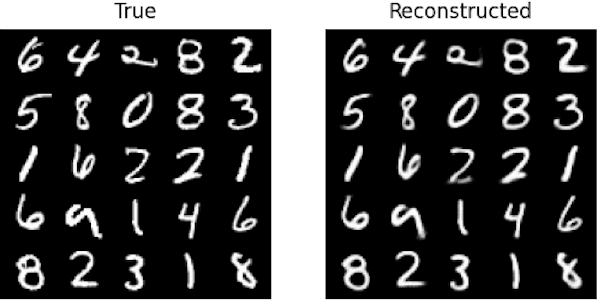

Abstract:Generative Adversarial Networks (GAN) have emerged as a formidable AI tool to generate realistic outputs based on training datasets. However, the challenge of exerting control over the generation process of GANs remains a significant hurdle. In this paper, we propose a novel methodology to address this issue by integrating a reinforcement learning (RL) agent with a latent-space GAN (l-GAN), thereby facilitating the generation of desired outputs. More specifically, we have developed an actor-critic RL agent with a meticulously designed reward policy, enabling it to acquire proficiency in navigating the latent space of the l-GAN and generating outputs based on specified tasks. To substantiate the efficacy of our approach, we have conducted a series of experiments employing the MNIST dataset, including arithmetic addition as an illustrative task. The outcomes of these experiments serve to validate our methodology. Our pioneering integration of an RL agent with a GAN model represents a novel advancement, holding great potential for enhancing generative networks in the future.

Multi-Environment Meta-Learning in Stochastic Linear Bandits

May 12, 2022

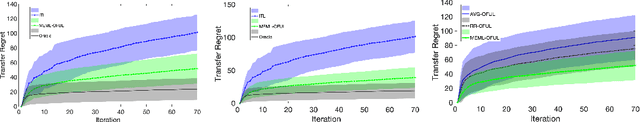

Abstract:In this work we investigate meta-learning (or learning-to-learn) approaches in multi-task linear stochastic bandit problems that can originate from multiple environments. Inspired by the work of [1] on meta-learning in a sequence of linear bandit problems whose parameters are sampled from a single distribution (i.e., a single environment), here we consider the feasibility of meta-learning when task parameters are drawn from a mixture distribution instead. For this problem, we propose a regularized version of the OFUL algorithm that, when trained on tasks with labeled environments, achieves low regret on a new task without requiring knowledge of the environment from which the new task originates. Specifically, our regret bound for the new algorithm captures the effect of environment misclassification and highlights the benefits over learning each task separately or meta-learning without recognition of the distinct mixture components.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge