Stephanie Cairns

Bias Mitigation of Face Recognition Models Through Calibration

Jun 07, 2021

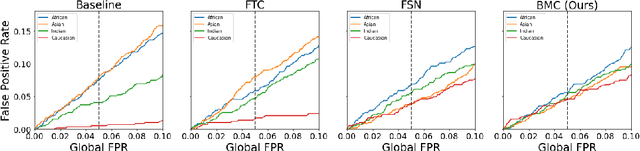

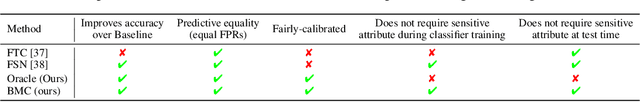

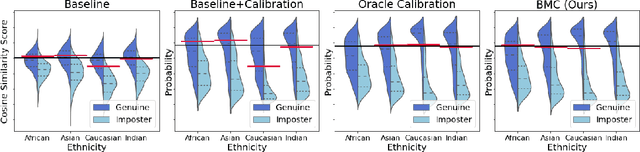

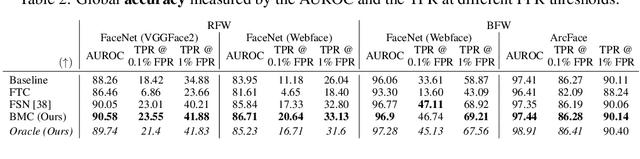

Abstract: Face recognition models suffer from bias: for example, the probability of a false positive (incorrect face match) strongly depends on sensitive attributes like ethnicity. As a result, these models may disproportionately and negatively impact minority groups when used in law enforcement. In this work, we introduce the Bias Mitigation Calibration (BMC) method, which (i) increases model accuracy (improving the state-of-the-art), (ii) produces fairly-calibrated probabilities, (iii) significantly reduces the gap in the false positive rates, and (iv) does not require knowledge of the sensitive attribute.
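To make the "gap in the false positive rates" concrete, the following is a minimal sketch of how such a gap could be measured when a single global threshold is applied to match scores. It is not the BMC method itself, which the abstract does not specify. The function names (`false_positive_rate`, `fpr_by_group`), the group labels, and the toy scores are all hypothetical and chosen only for illustration.

```python
def false_positive_rate(scores, labels, threshold):
    """Fraction of non-matching pairs (label 0) scored at or above the threshold."""
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not negatives:
        return 0.0
    return sum(s >= threshold for s in negatives) / len(negatives)


def fpr_by_group(scores, labels, groups, threshold):
    """False positive rate computed separately for each group label."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, member in enumerate(groups) if member == g]
        rates[g] = false_positive_rate(
            [scores[i] for i in idx], [labels[i] for i in idx], threshold
        )
    return rates


# Toy, made-up data: similarity scores for face pairs, 1 = same person, 0 = different.
scores = [0.9, 0.2, 0.6, 0.1, 0.8, 0.7, 0.65, 0.3]
labels = [1, 0, 0, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]  # hypothetical group labels

rates = fpr_by_group(scores, labels, groups, threshold=0.5)
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

With one shared threshold, the two toy groups end up with different false positive rates. That difference is the kind of disparity the abstract says calibration is meant to reduce.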

Volume-preserving Neural Networks: A Solution to the Vanishing Gradient Problem

Nov 22, 2019

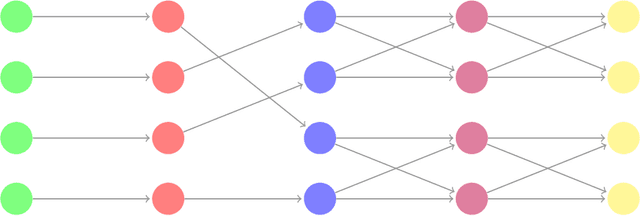

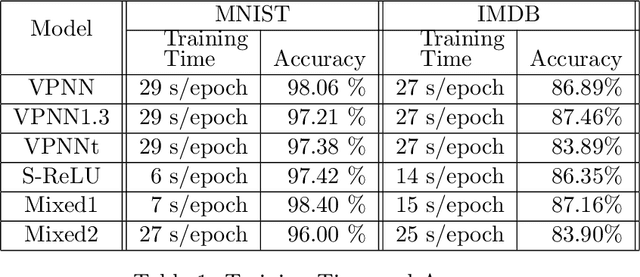

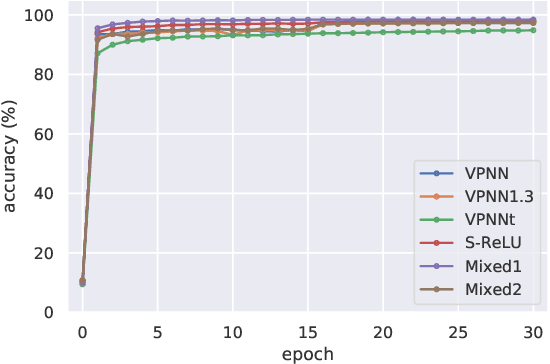

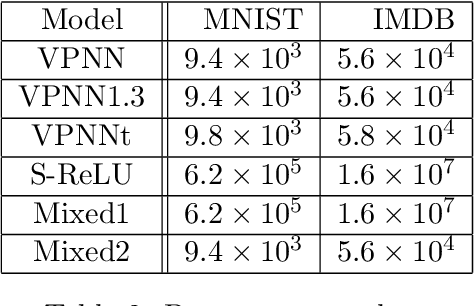

Abstract: We propose a novel approach to addressing the vanishing (or exploding) gradient problem in deep neural networks. We construct a new architecture for deep neural networks where all layers (except the output layer) of the network are a combination of rotation, permutation, diagonal, and activation sublayers, all of which are volume preserving. This control on the volume forces the gradient (on average) to maintain equilibrium and not explode or vanish. Volume-preserving neural networks train reliably, quickly, and accurately, and the learning rate is consistent across layers in deep volume-preserving neural networks. To demonstrate this, we apply our volume-preserving neural network model to two standard datasets.
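As a rough illustration of what "volume preserving" means for the linear sublayers, the sketch below builds a rotation, a permutation, and a diagonal matrix and checks that their product has determinant of absolute value 1. The parametrization is an assumption made for this example (rotations on adjacent coordinate pairs, diagonal entries normalized so their product is 1); the abstract does not give the paper's actual construction, and the activation sublayer is omitted.

```python
import math
import random


def rotation_matrix(thetas, n):
    """Block-diagonal 2x2 rotations on coordinate pairs (0,1), (2,3), ...; determinant 1."""
    R = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for k, theta in enumerate(thetas):
        i, j = 2 * k, 2 * k + 1
        c, s = math.cos(theta), math.sin(theta)
        R[i][i], R[i][j] = c, -s
        R[j][i], R[j][j] = s, c
    return R


def permutation_matrix(perm):
    """Permutation matrix; determinant is +1 or -1."""
    n = len(perm)
    return [[1.0 if perm[i] == j else 0.0 for j in range(n)] for i in range(n)]


def diagonal_matrix(raw):
    """Diagonal with entries exp(raw_i - mean(raw)), so the entries multiply to 1."""
    mean = sum(raw) / len(raw)
    n = len(raw)
    return [[math.exp(raw[i] - mean) if i == j else 0.0 for j in range(n)] for i in range(n)]


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def determinant(M):
    """Determinant via Gaussian elimination with partial pivoting."""
    A = [row[:] for row in M]
    n, det = len(A), 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            return 0.0
        if pivot != col:
            A[col], A[pivot] = A[pivot], A[col]
            det = -det
        det *= A[col][col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            for c in range(col, n):
                A[r][c] -= factor * A[col][c]
    return det


rng = random.Random(0)
n = 6
R = rotation_matrix([rng.uniform(0, 2 * math.pi) for _ in range(n // 2)], n)
P = permutation_matrix(rng.sample(range(n), n))
D = diagonal_matrix([rng.gauss(0, 1) for _ in range(n)])

W = matmul(D, matmul(P, R))  # combined linear map of the three sublayers
abs_det = abs(determinant(W))
print(abs_det)
```

Because each factor has determinant of absolute value 1, the composed map neither expands nor contracts volume, which is the property the abstract credits with keeping gradients from exploding or vanishing on average.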
