Steffen Holter

Deconstructing Human-AI Collaboration: Agency, Interaction, and Adaptation

Apr 18, 2024

Abstract: As full AI-based automation remains out of reach in most real-world applications, the focus has instead shifted to leveraging the strengths of both human and AI agents, creating effective collaborative systems. The rapid advances in this area have yielded increasingly more complex systems and frameworks, while the nuance of their characterization has gotten more vague. Similarly, the existing conceptual models no longer capture the elaborate processes of these systems nor describe the entire scope of their collaboration paradigms. In this paper, we propose a new unified set of dimensions through which to analyze and describe human-AI systems. Our conceptual model is centered around three high-level aspects - agency, interaction, and adaptation - and is developed through a multi-step process. Firstly, an initial design space is proposed by surveying the literature and consolidating existing definitions and conceptual frameworks. Secondly, this model is iteratively refined and validated by conducting semi-structured interviews with nine researchers in this field. Lastly, to illustrate the applicability of our design space, we utilize it to provide a structured description of selected human-AI systems.

AdViCE: Aggregated Visual Counterfactual Explanations for Machine Learning Model Validation

Sep 12, 2021

Abstract: Rapid improvements in the performance of machine learning models have pushed them to the forefront of data-driven decision-making. Meanwhile, the increased integration of these models into various application domains has further highlighted the need for greater interpretability and transparency. To identify problems such as bias, overfitting, and incorrect correlations, data scientists require tools that explain the mechanisms with which these model decisions are made. In this paper we introduce AdViCE, a visual analytics tool that aims to guide users in black-box model debugging and validation. The solution rests on two main visual user interface innovations: (1) an interactive visualization design that enables the comparison of decisions on user-defined data subsets; (2) an algorithm and visual design to compute and visualize counterfactual explanations - explanations that depict model outcomes when data features are perturbed from their original values. We provide a demonstration of the tool through a use case that showcases the capabilities and potential limitations of the proposed approach.

ViCE: Visual Counterfactual Explanations for Machine Learning Models

Mar 05, 2020

Abstract: The continued improvements in the predictive accuracy of machine learning models have allowed for their widespread practical application. Yet, many decisions made with seemingly accurate models still require verification by domain experts. In addition, end-users of a model also want to understand the reasons behind specific decisions. Thus, the need for interpretability is increasingly paramount. In this paper we present an interactive visual analytics tool, ViCE, that generates counterfactual explanations to contextualize and evaluate model decisions. Each sample is assessed to identify the minimal set of changes needed to flip the model's output. These explanations aim to provide end-users with personalized actionable insights with which to understand, and possibly contest or improve, automated decisions. The results are effectively displayed in a visual interface where counterfactual explanations are highlighted and interactive methods are provided for users to explore the data and model. The functionality of the tool is demonstrated by its application to a home equity line of credit dataset.
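The idea of finding a minimal set of changes that flips a model's output can be illustrated with a small sketch. This is not ViCE's algorithm, which the abstract does not specify; it is a brute-force search under stated assumptions. The toy scoring model `predict`, the feature names, and the candidate value grid are all hypothetical and chosen only for illustration.

```python
from itertools import combinations, product


def predict(sample):
    """Hypothetical toy credit model: returns 1 (approve) or 0 (deny)."""
    score = (5 * sample["income"]
             - 80 * sample["delinquencies"]
             - 3 * sample["utilization"])
    return 1 if score >= 0 else 0


def minimal_counterfactual(sample, candidates, model=predict):
    """Return the smallest set of feature changes that flips the prediction.

    Tries every combination of 1 feature, then 2, and so on, so the first
    hit changes as few features as possible. Returns None if nothing flips.
    """
    original = model(sample)
    features = list(candidates)
    for size in range(1, len(features) + 1):
        for subset in combinations(features, size):
            for values in product(*(candidates[f] for f in subset)):
                changes = dict(zip(subset, values))
                # Skip assignments that leave a selected feature unchanged.
                if any(sample[f] == v for f, v in changes.items()):
                    continue
                if model({**sample, **changes}) != original:
                    return changes
    return None


# Assumed example applicant and candidate values (not from the paper's dataset).
applicant = {"income": 40, "delinquencies": 3, "utilization": 60}
grid = {
    "income": [40, 60, 80],
    "delinquencies": [0, 1, 2, 3],
    "utilization": [20, 40, 60],
}
counterfactual = minimal_counterfactual(applicant, grid)
print(counterfactual)  # {'delinquencies': 0}
```

Here the applicant is denied, and the search reports that changing a single feature (reducing delinquencies to 0) is enough to flip the decision. Exhaustive search grows exponentially with the number of features, so a practical tool would likely rely on heuristics or constraints instead.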

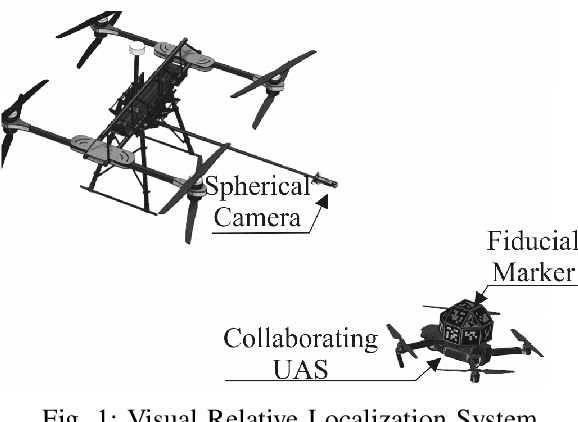

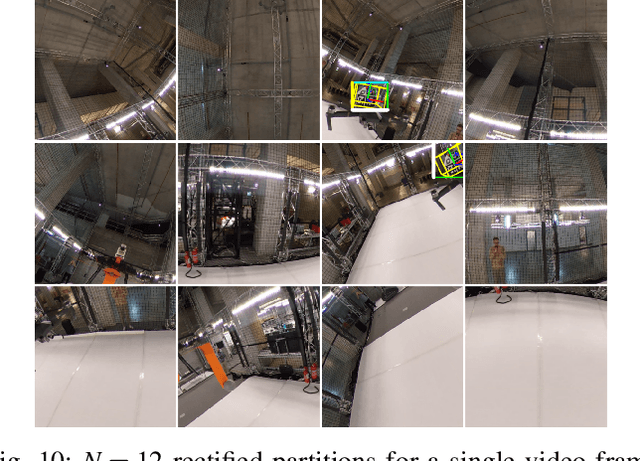

Relative Visual Localization for Unmanned Aerial Systems

Mar 04, 2020

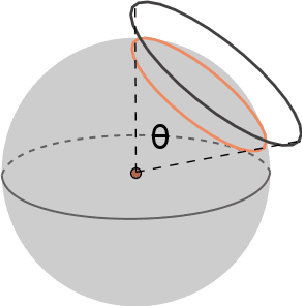

Abstract: Cooperative Unmanned Aerial Systems (UASs) in GPS-denied environments demand an accurate pose-localization system to ensure efficient operation. In this paper we present a novel visual relative localization system capable of monitoring a 360$^\circ$ Field-of-View (FoV) in the immediate surroundings of the UAS using a spherical camera. Collaborating UASs carry a set of fiducial markers which are detected by the camera-system. The spherical image is partitioned and rectified into a set of square images. An algorithm is proposed to select the number of images that balances the computational load while maintaining a minimum tracking-accuracy level. The developed system tracks UASs in the vicinity of the spherical camera and experimental studies using two UASs are offered to validate the performance of the relative visual localization against that of a motion capture system.
