Spyros Lalis

Green or Fast? Learning to Balance Cold Starts and Idle Carbon in Serverless Computing

Feb 27, 2026

Abstract:Serverless computing simplifies cloud deployment but introduces new challenges in managing service latency and carbon emissions. Reducing cold-start latency requires retaining warm function instances, while minimizing carbon emissions favors reclaiming idle resources. This balance is further complicated by time-varying grid carbon intensity and varying workload patterns, under which static keep-alive policies are inefficient. We present LACE-RL, a latency-aware and carbon-efficient management framework that formulates serverless pod retention as a sequential decision problem. LACE-RL uses deep reinforcement learning to dynamically tune keep-alive durations, jointly modeling cold-start probability, function-specific latency costs, and real-time carbon intensity. Using the Huawei Public Cloud Trace, we show that LACE-RL reduces cold starts by 51.69% and idle keep-alive carbon emissions by 77.08% compared to Huawei's static policy, while achieving better latency-carbon trade-offs than state-of-the-art heuristic and single-objective baselines, approaching Oracle performance.

Black-box Adversarial Attacks on CNN-based SLAM Algorithms

May 30, 2025

Abstract:Continuous advancements in deep learning have led to significant progress in feature detection, resulting in enhanced accuracy in tasks like Simultaneous Localization and Mapping (SLAM). Nevertheless, the vulnerability of deep neural networks to adversarial attacks remains a challenge for their reliable deployment in applications such as navigation of autonomous agents. Even though CNN-based SLAM algorithms are a growing area of research, there is a notable absence of a comprehensive presentation and examination of adversarial attacks targeting CNN-based feature detectors as part of a SLAM system. Our work introduces black-box adversarial perturbations applied to the RGB images fed into the GCN-SLAM algorithm. Our findings on the TUM dataset [30] reveal that even attacks of moderate scale can lead to tracking failure in as many as 76% of the frames. Moreover, our experiments highlight the catastrophic impact of attacking depth instead of RGB input images on the SLAM system.

Should I Stay or Should I Go: A Learning Approach for Drone-based Sensing Applications

Sep 07, 2024

Abstract:Multicopter drones are becoming a key platform in several application domains, enabling precise on-the-spot sensing and/or actuation. We focus on the case where the drone must process the sensor data in order to decide, depending on the outcome, whether it needs to perform some additional action, e.g., more accurate sensing or some form of actuation. On the one hand, waiting for the computation to complete may waste time if it turns out that no further action is needed. On the other hand, if the drone starts moving toward the next point of interest before the computation ends, it may need to return to the previous point if some action needs to be taken. In this paper, we propose a learning approach that enables the drone to make informed decisions about whether or not to wait for the result of the computation, based on past experience gathered from previous missions. Through an extensive evaluation, we show that the proposed approach, when properly configured, outperforms several static policies by up to 25.8% over a wide variety of scenarios, both where the probability of some action being required at a given point of interest remains stable and where this probability varies over time.

* 9 pages, 9 figures

MP-SL: Multihop Parallel Split Learning

Jan 31, 2024

Abstract:Federated Learning (FL) stands out as a widely adopted protocol facilitating the training of Machine Learning (ML) models while maintaining decentralized data. However, challenges arise when dealing with a heterogeneous set of participating devices, causing delays in the training process, particularly among devices with limited resources. Moreover, the task of training ML models with a vast number of parameters demands computing and memory resources beyond the capabilities of small devices, such as mobile and Internet of Things (IoT) devices. To address these issues, techniques like Parallel Split Learning (SL) have been introduced, allowing multiple resource-constrained devices to actively participate in collaborative training processes with assistance from resourceful compute nodes. Nonetheless, a drawback of Parallel SL is the substantial memory allocation required at the compute nodes; for instance, training VGG-19 with 100 participants needs 80 GB. In this paper, we introduce Multihop Parallel SL (MP-SL), a modular and extensible ML as a Service (MLaaS) framework designed to facilitate the involvement of resource-constrained devices in collaborative and distributed ML model training. Notably, to alleviate memory demands per compute node, MP-SL supports multihop Parallel SL-based training. This involves splitting the model into multiple parts and utilizing multiple compute nodes in a pipelined manner. Extensive experimentation validates MP-SL's capability to handle system heterogeneity, demonstrating that the multihop configuration proves more efficient than horizontally scaled one-hop Parallel SL setups, especially in scenarios involving more cost-effective compute nodes.

Flexible Computation Offloading at the Edge for Autonomous Drones with Uncertain Flight Times

Oct 18, 2023

Abstract:An ever-increasing number of applications can employ aerial unmanned vehicles, or so-called drones, to perform different sensing and possibly also actuation tasks from the air. In some cases, the data that is captured at a given point has to be processed before moving to the next one. Drones can exploit nearby edge servers to offload the computation instead of performing it locally. However, doing this in a naive way can be suboptimal if servers have limited computing resources and drones have limited energy resources. In this paper, we propose a protocol and resource reservation scheme for each drone and edge server to decide, in a dynamic and fully decentralized way, whether to offload the computation and, respectively, whether to accept such offloading requests, with the objective of evenly reducing the drones' mission times. We evaluate our approach through extensive simulation experiments, showing that it can reduce the mission times by up to 26.2% compared to a no-offloading scenario, while outperforming an offloading schedule that has been computed offline by up to 7.4% as well as a purely opportunistic approach by up to 23.9%.

* 9 pages, 3 figures, preprint accepted in the 19th International Conference on Distributed Computing in Smart Systems and the Internet of Things (DCOSS-IoT)

IPLS : A Framework for Decentralized Federated Learning

Jan 06, 2021

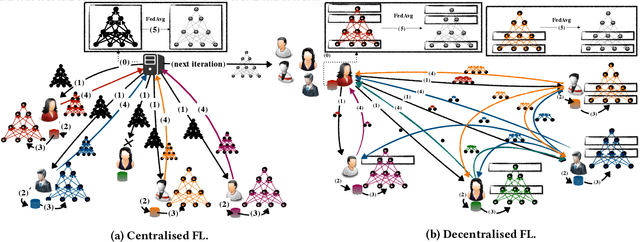

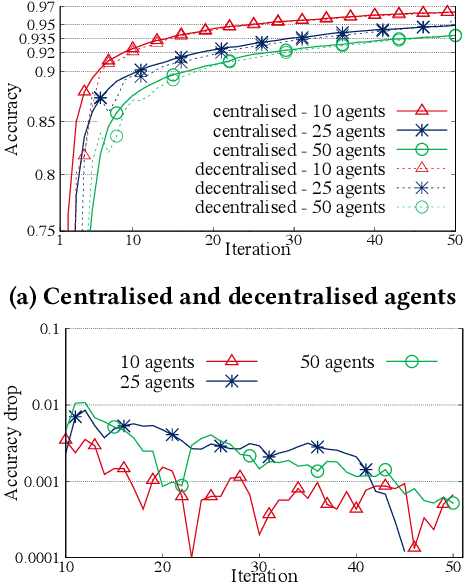

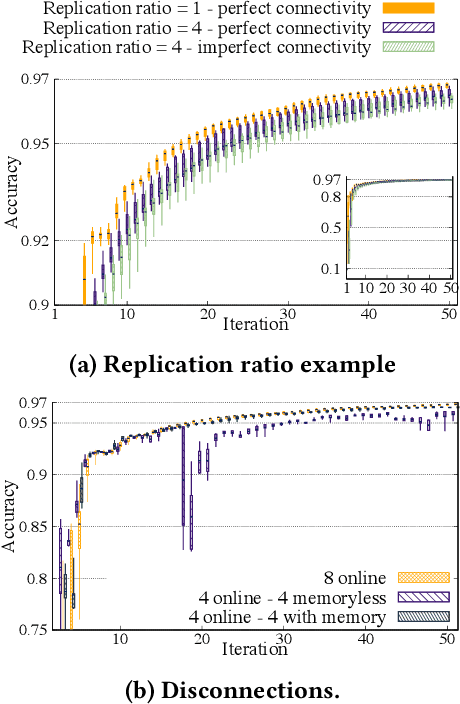

Abstract:The proliferation of resourceful mobile devices that store rich, multidimensional and privacy-sensitive user data motivates the design of federated learning (FL), a machine-learning (ML) paradigm that enables mobile devices to produce an ML model without sharing their data. However, the majority of the existing FL frameworks rely on centralized entities. In this work, we introduce IPLS, a fully decentralized federated learning framework that is partially based on the InterPlanetary File System (IPFS). By using IPLS and connecting to the corresponding private IPFS network, any party can initiate the training process of an ML model or join an ongoing training process that has already been started by another party. IPLS scales with the number of participants, is robust against intermittent connectivity and dynamic participant departures/arrivals, requires minimal resources, and guarantees that the accuracy of the trained model quickly converges to that of a centralized FL framework with an accuracy drop of less than one per thousand.
