Sidharth Pancholi

Advancing Brain-Computer Interface System Performance in Hand Trajectory Estimation with NeuroKinect

Aug 16, 2023

Abstract: Brain-computer interface (BCI) technology enables direct communication between the brain and external devices, allowing individuals to control their environment using brain signals. However, existing BCI approaches face three critical challenges that hinder their practicality and effectiveness: a) time-consuming preprocessing algorithms, b) inappropriate loss function utilization, and c) less intuitive hyperparameter settings. To address these limitations, we present *NeuroKinect*, an innovative deep-learning model for accurate reconstruction of hand kinematics using electroencephalography (EEG) signals. The *NeuroKinect* model is trained on the Grasp and Lift (GAL) task data with a minimal preprocessing pipeline, which improves computational efficiency. A notable improvement introduced by *NeuroKinect* is the use of a novel loss function, denoted as $\mathcal{L}_{\text{Stat}}$. This loss function addresses the discrepancy between correlation and mean squared error in hand kinematics prediction. Furthermore, our study emphasizes the scientific intuition behind parameter selection to enhance accuracy. We analyze the spatial and temporal dynamics of the motor movement task using event-related potential and brain source localization (BSL) results. This approach provides valuable insights into optimal parameter selection, improving the overall performance and accuracy of the *NeuroKinect* model. Our model demonstrates strong correlations between predicted and actual hand movements, with mean Pearson correlation coefficients of 0.92 ($\pm$0.015), 0.93 ($\pm$0.019), and 0.83 ($\pm$0.018) for the X, Y, and Z dimensions, respectively. The precision of *NeuroKinect* is evidenced by low mean squared errors (MSE) of 0.016 ($\pm$0.001), 0.015 ($\pm$0.002), and 0.017 ($\pm$0.005) for the X, Y, and Z dimensions, respectively.
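The abstract reports both Pearson correlation and MSE and mentions a discrepancy between the two. The following is a minimal sketch of that discrepancy only, not the paper's $\mathcal{L}_{\text{Stat}}$ loss, whose definition is not given here. The function names and the toy trajectory are assumptions made for illustration.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def mse(x, y):
    """Mean squared error between two equal-length sequences."""
    return sum((a - b) ** 2 for a, b in zip(x, y)) / len(x)

# Hypothetical one-dimensional hand trajectory (illustrative values only).
actual = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]

# A prediction that follows the shape of the trajectory exactly but has
# the wrong scale and offset.
scaled = [0.5 * v + 0.3 for v in actual]

print("r   =", pearson_r(actual, scaled))
print("MSE =", mse(actual, scaled))
```

Because correlation is invariant to scale and offset, the scaled prediction is perfectly correlated with the target while its MSE stays above zero. This is one way the two metrics can disagree when either is used alone as a training objective.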

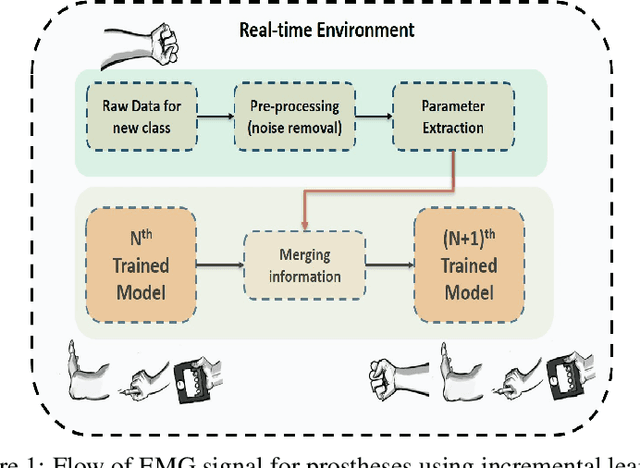

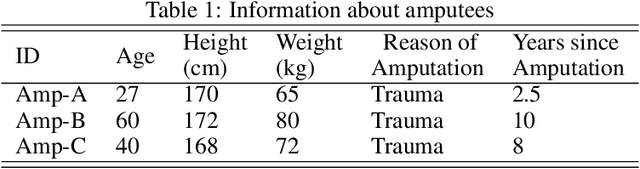

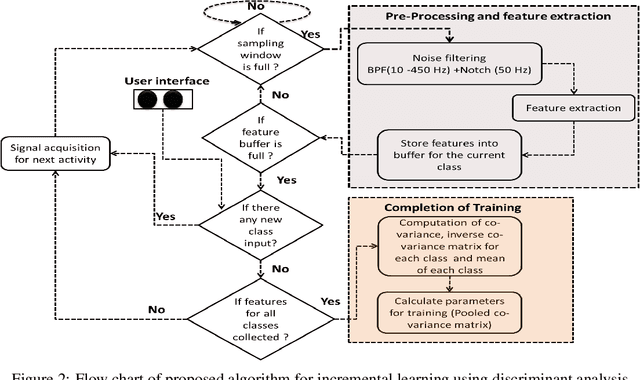

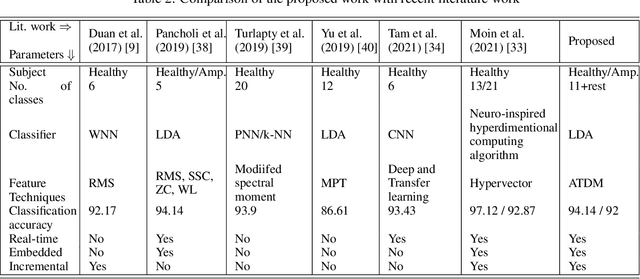

Novel Time Domain Based Upper-Limb Prosthesis Control using Incremental Learning Approach

Aug 25, 2021

Abstract: The upper limb is vital for a wide range of human activities. Complete or partial loss of the upper limb has a significant impact on the daily activities of amputees. EMG signals carry important information about the human body that helps decode the various functions of the human arm. EMG signal-based bionics and prostheses have gained considerable research attention over the past decade. Conventional EMG pattern recognition (EMG-PR) based prostheses struggle to perform accurately because they rely on off-line training and cannot compensate for electrode position shift or changes in arm position. This work proposes an online training and incremental learning based system for upper limb prosthetic applications. The system consists of an ADS1298 as the analog front end (AFE) and a 32-bit ARM Cortex-M4 processor for digital signal processing (DSP). The system has been tested on both intact and amputated subjects. Time derivative moment based features have been implemented and used for effective pattern classification. Initially, the system was trained for four classes using the on-line training process; the number of classes was then incremented on user demand up to eleven, and system performance was evaluated. The system yielded a completion rate of 100% for healthy and amputated subjects when four motions were considered. Completion rates of 94.33% and 92% were achieved for healthy subjects and amputees, respectively, when the number of classes was increased to eleven. A motion efficacy test was also carried out for all subjects. The highest efficacy rates of 91.23% and 88.64% were observed for intact and amputated subjects, respectively.

A Visual Domain Transfer Learning Approach for Heartbeat Sound Classification

Jul 28, 2021

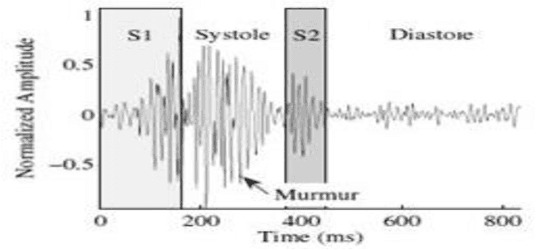

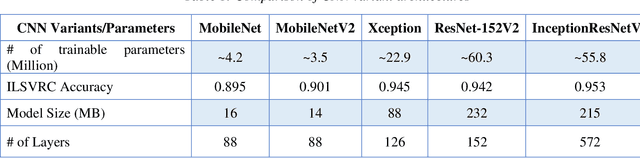

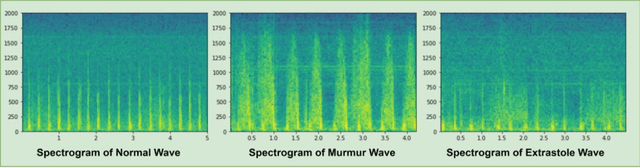

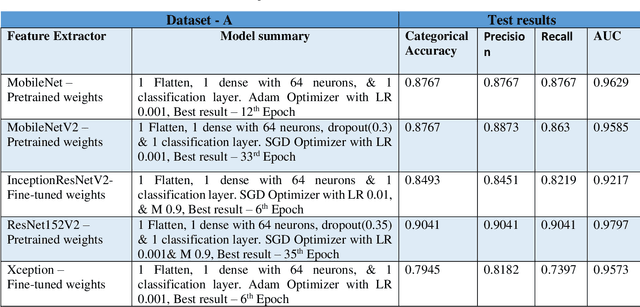

Abstract: Heart disease is the most common cause of human mortality, accounting for almost one-third of deaths worldwide. Detecting the disease early increases the patient's chances of survival, and there are several ways in which signs of heart disease can be detected early. This research proposes converting cleansed and normalized heart sounds into visual mel-scale spectrograms and then using visual domain transfer learning approaches to automatically extract features and categorize heart sounds. Some previous studies found that the spectrograms of various types of heart sounds are visually distinguishable to the human eye, which motivated this study to experiment with visual domain classification approaches for automated heart sound classification. It uses convolutional neural network-based architectures (e.g., ResNet and MobileNetV2) as automated feature extractors from spectrograms. These well-accepted models from the image domain were shown to learn generalized feature representations of cardiac sounds collected from different environments with varying amplitude and noise levels. Because the chosen dataset is unbalanced, the models were evaluated using categorical accuracy, precision, recall, and AUROC. The proposed approach has been implemented on datasets A and B of the PASCAL heart sound collection and resulted in ~90% categorical accuracy and an AUROC of ~0.97 for both sets.
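For readers unfamiliar with the mel scale used in these spectrograms, here is a minimal sketch of the frequency mapping. It assumes the widely used $2595\log_{10}(1 + f/700)$ variant; the abstract does not say which mel formula or filter-bank settings the authors used, and the helper names, the 0 to 4000 Hz range, and the band count below are hypothetical.

```python
import math

def hz_to_mel(freq_hz):
    """Convert frequency in Hz to mels (2595 * log10(1 + f/700) variant)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)

def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_band_edges(f_min, f_max, n_bands):
    """Return n_bands + 2 frequencies (Hz) spaced evenly on the mel scale.

    Consecutive triples of these edges would define the triangular
    filters of a mel filter bank.
    """
    lo, hi = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (hi - lo) / (n_bands + 1)
    return [mel_to_hz(lo + i * step) for i in range(n_bands + 2)]

# Illustrative settings only: 8 bands between 0 Hz and 4000 Hz.
edges = mel_band_edges(0.0, 4000.0, 8)
for edge in edges:
    print(round(edge, 1))
```

The edges are closely spaced at low frequencies and spread out at high frequencies, so a mel spectrogram gives more resolution to the low-frequency range.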

Classification of Upper Arm Movements from EEG signals using Machine Learning with ICA Analysis

Jul 18, 2021

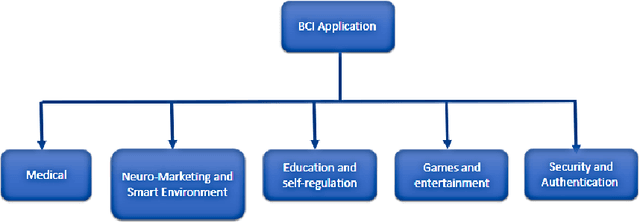

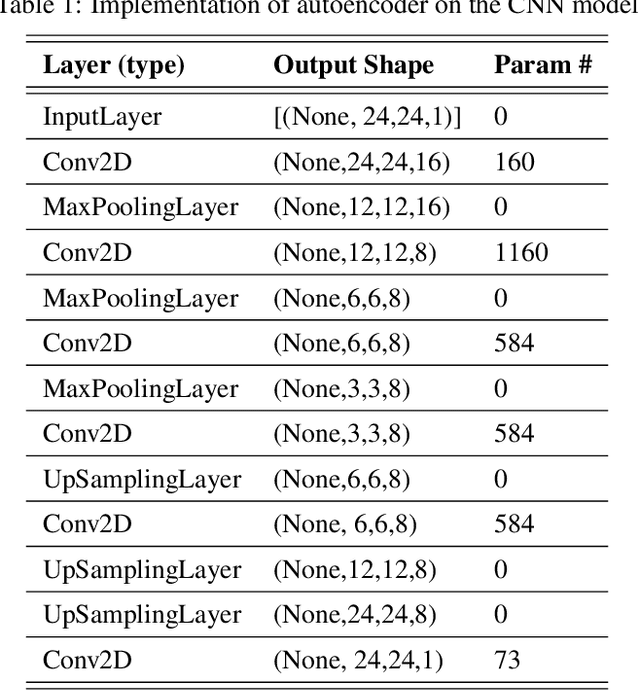

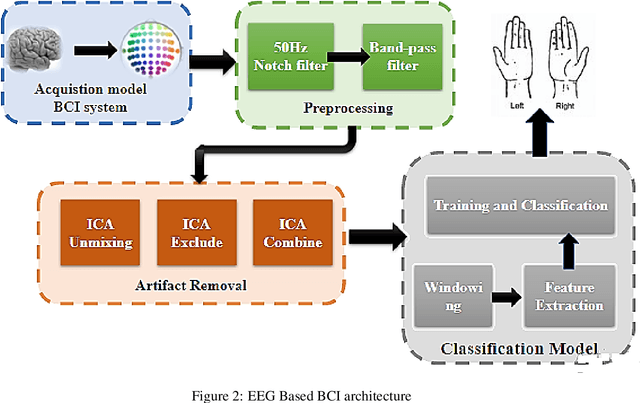

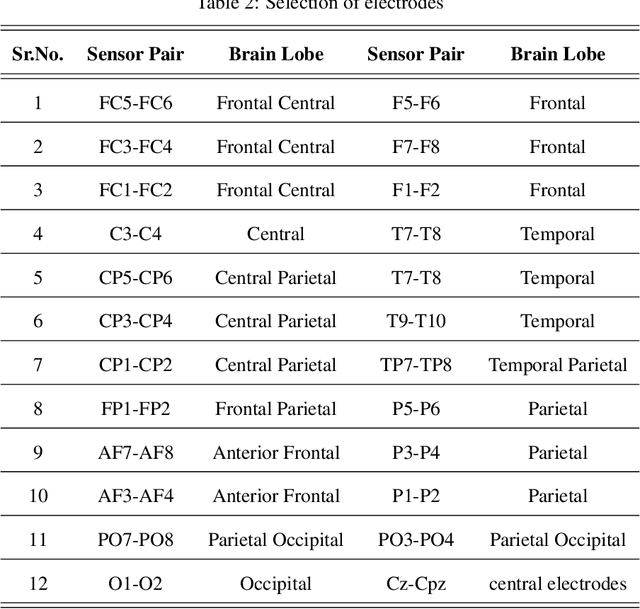

Abstract: The Brain-Computer Interface system is a rapidly developing area of experimentation for motor activities and plays a vital role in decoding cognitive activities. Classification of cognitive motor imagery activities from EEG signals is a critical task. Hence, a unique algorithm is proposed for classifying left/right-hand movements using a Multi-layer Perceptron Neural Network. Handcrafted statistical time-domain features and power spectral density frequency-domain features were extracted, yielding a combined accuracy of 96.02%. Results were compared with a deep learning framework. In addition to accuracy, precision, F1-score, and recall were considered as performance metrics. Unwanted signals contaminate the EEG signals and affect the performance of the algorithm. Therefore, a novel approach based on Independent Component Analysis was applied to remove the artifacts, which boosted performance. Feature vectors that provided acceptable accuracy were then selected, and the same method was applied to all nine subjects. As a result, an intra-subject accuracy of 94.72% was obtained across the nine subjects. The results show that the proposed approach would be useful for classifying upper limb movements accurately.

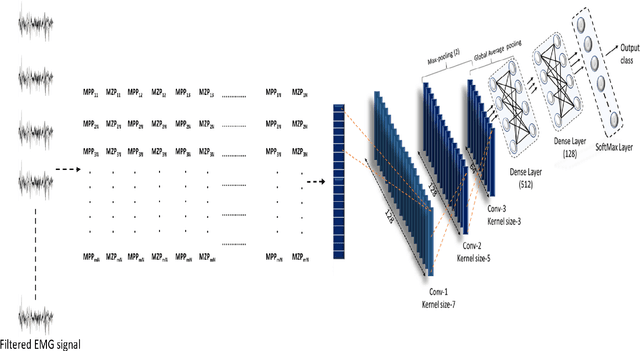

A Robust and Accurate Deep Learning based Pattern Recognition Framework for Upper Limb Prosthesis using sEMG

Jun 11, 2021

Abstract: In EMG based pattern recognition (EMG-PR), deep learning-based techniques have become more prominent for their self-regulating capability to extract discriminant features from large data-sets. Moreover, the performance of traditional machine learning-based methods is limited when categorizing more than a certain number of classes and degrades over time. In this paper, an accurate, robust, and fast convolutional neural network-based framework for EMG pattern identification is presented. To assess the performance of the proposed system, five publicly available benchmark data-sets of upper limb activities were used. These data-sets contain 49 to 52 upper limb motions (NinaPro DB1, NinaPro DB2, and NinaPro DB3), data with force variation, and data with arm position variation for intact and amputated subjects. Classification accuracies of 91.11% (53 classes), 89.45% (49 classes), 81.67% (49 classes of amputees), 95.67% (6 classes with force variation), and 99.11% (8 classes with arm position variation) were observed during testing and validation. The performance of the proposed system is compared with state-of-the-art techniques in the literature. The findings demonstrate that classification accuracy and time complexity have improved significantly. Keras, TensorFlow's high-level API for constructing deep learning models, was used for signal pre-processing and deep-learning-based algorithms. The suggested method was run on an Intel 3.5GHz Core i7, 7th Gen CPU with 8GB DDR4 RAM.

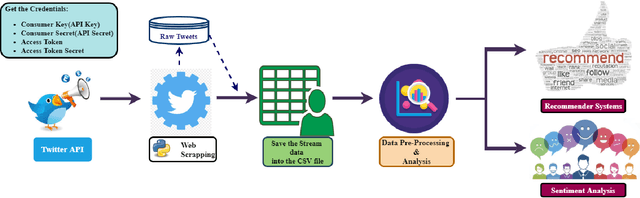

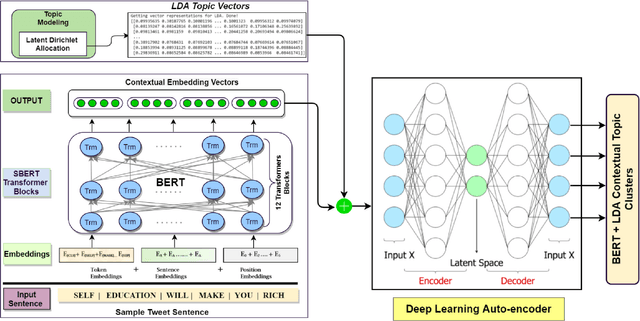

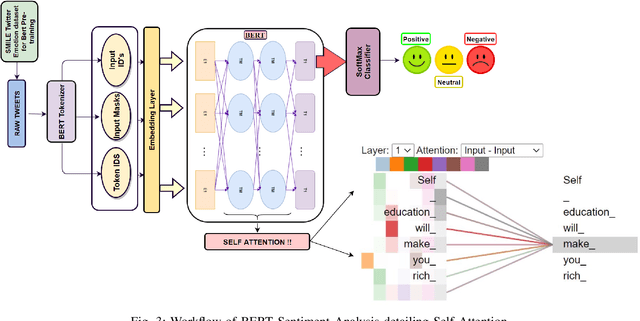

T-BERT -- Model for Sentiment Analysis of Micro-blogs Integrating Topic Model and BERT

Jun 02, 2021

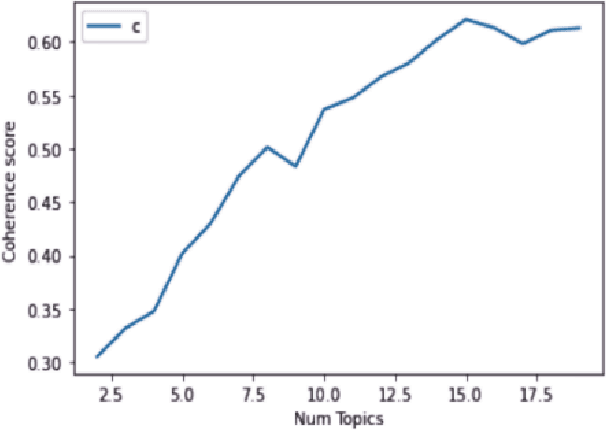

Abstract: Sentiment analysis (SA) has become an extensive research area in recent years impacting diverse fields including ecommerce, consumer business, and politics, driven by increasing adoption and usage of social media platforms. It is challenging to extract topics and sentiments from unsupervised short texts emerging in such contexts, as they may contain figurative words, strident data, and co-existence of many possible meanings for a single word or phrase, all contributing to obtaining incorrect topics. Most prior research is based on a specific theme/rhetoric/focused-content on a clean dataset. In the work reported here, the effectiveness of BERT (Bidirectional Encoder Representations from Transformers) in sentiment classification tasks from a raw live dataset taken from a popular microblogging platform is demonstrated. A novel T-BERT framework is proposed to show the enhanced performance obtainable by combining latent topics with contextual BERT embeddings. Numerical experiments were conducted on an ensemble with about 42000 datasets using the NimbleBox.ai platform with a hardware configuration consisting of an Nvidia Tesla K80 (CUDA), a 4-core CPU, and 15GB RAM running on an isolated Google Cloud Platform instance. The empirical results show that adding topics to BERT improves model performance, with the proposed approach reaching an accuracy of 90.81% on sentiment classification.

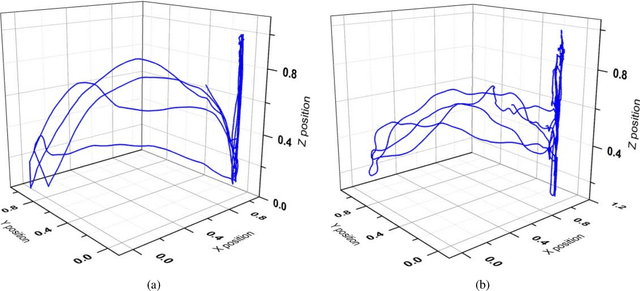

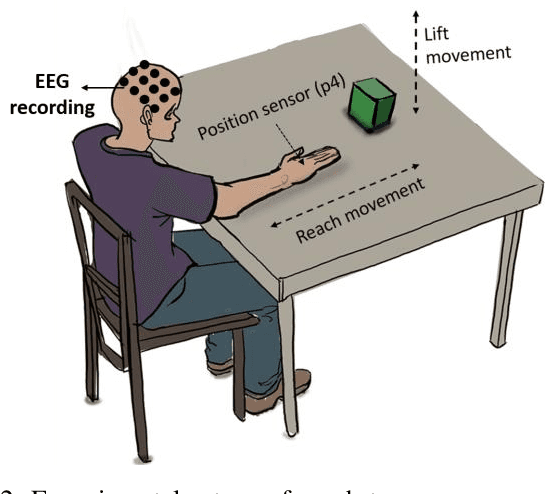

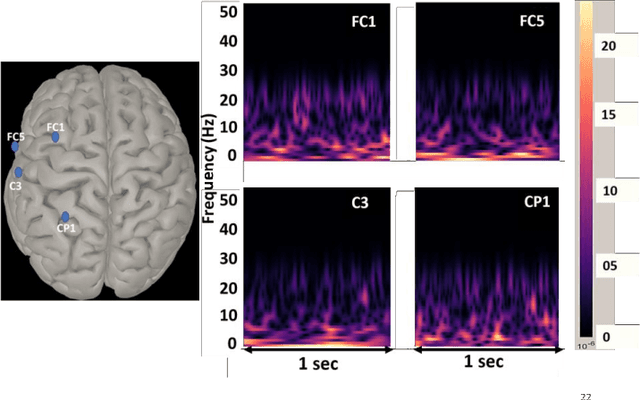

Source Aware Deep Learning Framework for Hand Kinematic Reconstruction using EEG Signal

Apr 05, 2021

Abstract: The ability to reconstruct the kinematic parameters of hand movement using non-invasive electroencephalography (EEG) is essential for strength and endurance augmentation using an exosuit/exoskeleton. For system development, the conventional classification-based brain computer interface (BCI) controls external devices by providing discrete control signals to the actuator. A continuous kinematic reconstruction from the EEG signal is better suited for practical BCI applications. The state-of-the-art multi-variable linear regression (mLR) method provides a continuous estimate of hand kinematics, achieving a maximum correlation of up to 0.67 between the measured and the estimated hand trajectory. In this work, three novel source aware deep learning models are proposed for motion trajectory prediction (MTP). In particular, multi-layer perceptron (MLP), convolutional neural network - long short term memory (CNN-LSTM), and wavelet packet decomposition (WPD) CNN-LSTM are presented. Additional novelty of the work includes the use of brain source localization (using sLORETA) for the reliable decoding of motor intention mapping (channel selection) and accurate EEG time segment selection. The performance of the proposed models is compared with the traditionally used mLR technique on the real grasp and lift (GAL) dataset. The effectiveness of the proposed framework is established using the Pearson correlation coefficient and trajectory analysis. A significant improvement in the correlation coefficient is observed when compared with the state-of-the-art mLR model. Our work bridges the gap between the control and the actuator block, enabling real-time BCI implementation.
