SiQi Zhou

University of Toronto Institute for Aerospace Studies, Vector Institute for Artificial Intelligence

Learning to Fly -- a Gym Environment with PyBullet Physics for Reinforcement Learning of Multi-agent Quadcopter Control

Mar 04, 2021

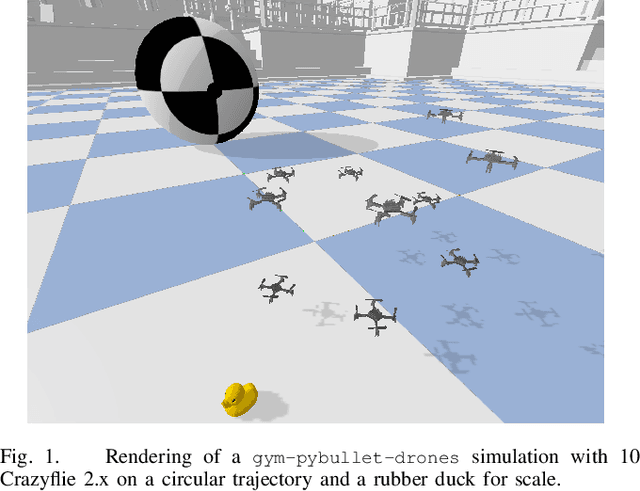

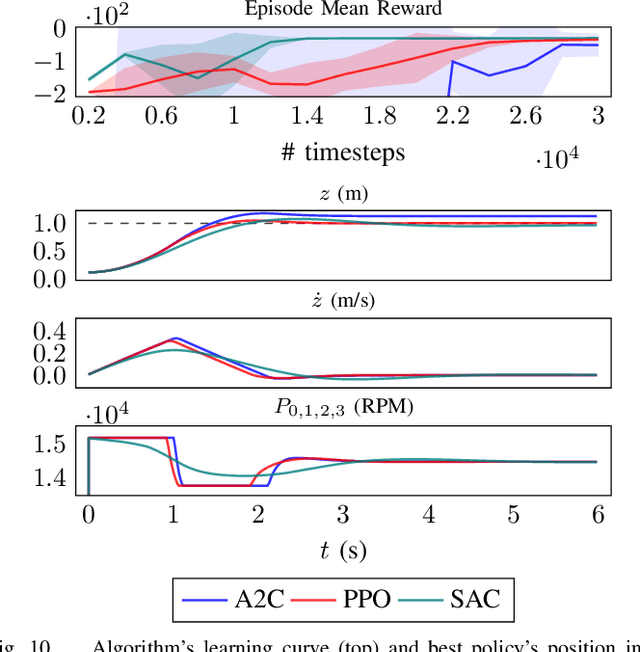

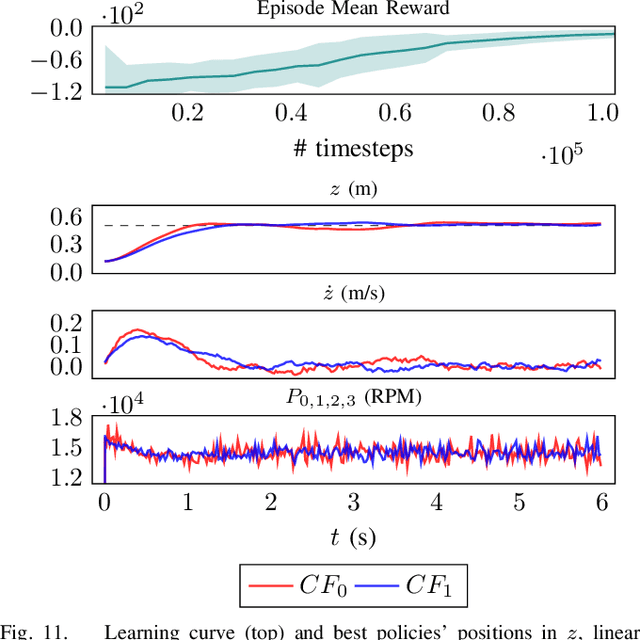

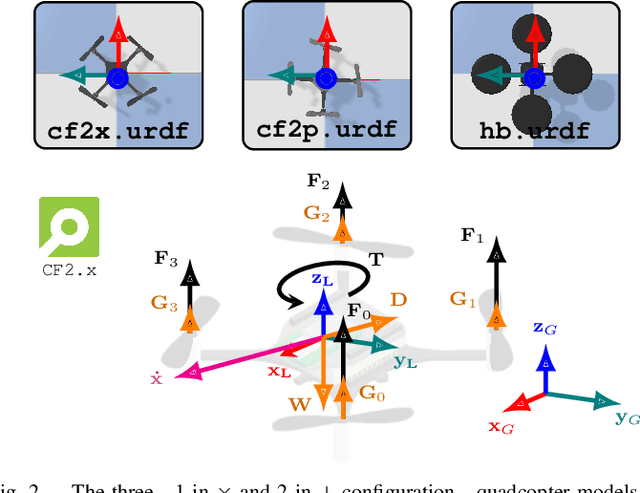

Abstract: Robotic simulators are crucial for academic research and education as well as the development of safety-critical applications. Reinforcement learning environments -- simple simulations coupled with a problem specification in the form of a reward function -- are also important to standardize the development (and benchmarking) of learning algorithms. Yet, full-scale simulators typically lack portability and parallelizability. Conversely, many reinforcement learning environments trade off realism for high sample throughput in toy-like problems. While public data sets have greatly benefited deep learning and computer vision, we still lack the software tools to simultaneously develop -- and fairly compare -- control theory and reinforcement learning approaches. In this paper, we propose an open-source OpenAI Gym-like environment for multiple quadcopters based on the Bullet physics engine. Its multi-agent and vision-based reinforcement learning interfaces, as well as its support for realistic collisions and aerodynamic effects, make it, to the best of our knowledge, the first of its kind. We demonstrate its use through several examples, either for control (trajectory tracking with PID control, multi-robot flight with downwash, etc.) or reinforcement learning (single and multi-agent stabilization tasks), hoping to inspire future research that combines control theory and machine learning.
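To make the phrase "simple simulations coupled with a problem specification in the form of a reward function" concrete, here is a minimal sketch of a one-dimensional altitude task written in the style of the classic Gym `reset`/`step` interface. It is not the environment proposed in the paper and does not use PyBullet or the Gym library: the class `ToyHoverEnv`, the point-mass dynamics, the gains, and all parameter values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ToyHoverEnv:
    """Hypothetical 1-D altitude task (not the paper's environment)."""
    target: float = 1.0  # desired altitude in metres (assumed)
    dt: float = 0.02     # integration step in seconds (assumed)
    mass: float = 0.03   # illustrative vehicle mass in kg (assumed)
    g: float = 9.81      # gravitational acceleration in m/s^2
    z: float = 0.0       # current altitude
    vz: float = 0.0      # current vertical velocity

    def reset(self):
        """Put the vehicle back on the ground and return the observation."""
        self.z, self.vz = 0.0, 0.0
        return (self.z, self.vz)

    def step(self, thrust):
        """Advance the point-mass dynamics by one time step."""
        thrust = max(0.0, thrust)  # rotors cannot pull downwards
        acc = thrust / self.mass - self.g
        # Semi-implicit Euler: update velocity first, then position.
        self.vz += acc * self.dt
        self.z += self.vz * self.dt
        if self.z < 0.0:  # crude ground contact
            self.z, self.vz = 0.0, 0.0
        # The reward function is the problem specification:
        # penalise squared distance from the target altitude.
        reward = -(self.z - self.target) ** 2
        return (self.z, self.vz), reward, False, {}


def pd_policy(obs, env, kp=4.0, kd=4.0):
    """PD controller with gravity compensation (illustrative gains)."""
    z, vz = obs
    return env.mass * (env.g + kp * (env.target - z) - kd * vz)


def rollout(steps=500):
    """Run the controller in the environment and sum the rewards."""
    env = ToyHoverEnv()
    obs = env.reset()
    total_reward = 0.0
    for _ in range(steps):
        obs, reward, done, info = env.step(pd_policy(obs, env))
        total_reward += reward
    return obs, total_reward


if __name__ == "__main__":
    final_obs, total = rollout()
    print("final altitude:", final_obs[0], "return:", total)
```

Because the controller and a learned policy would both interact with the task only through `reset` and `step`, the same loop could in principle be used to compare either kind of approach on an identical reward.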

An Analysis of the Expressiveness of Deep Neural Network Architectures Based on Their Lipschitz Constants

Dec 24, 2019

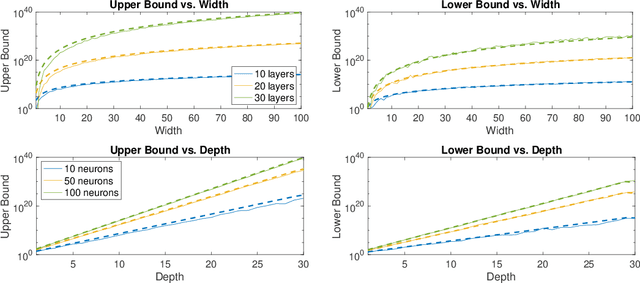

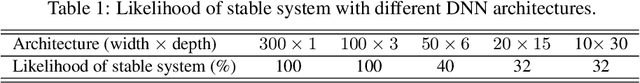

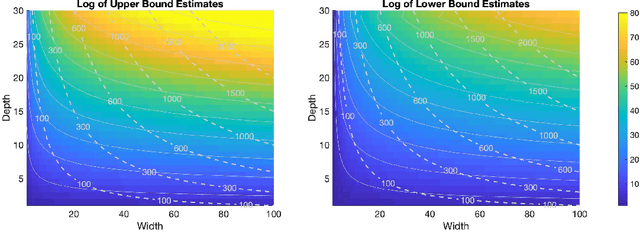

Abstract: Deep neural networks (DNNs) have emerged as a popular mathematical tool for function approximation due to their capability of modelling highly nonlinear functions. Their applications range from image classification and natural language processing to learning-based control. Despite their empirical successes, there is still a lack of theoretical understanding of the representational power of such deep architectures. In this work, we provide a theoretical analysis of the expressiveness of fully-connected, feedforward DNNs with 1-Lipschitz activation functions. In particular, we characterize the expressiveness of a DNN by its Lipschitz constant. By leveraging random matrix theory, we show that, given sufficiently large and randomly distributed weights, the expected upper and lower bounds on the Lipschitz constant of a DNN, and hence its expressiveness, increase exponentially with depth and polynomially with width, which gives rise to the benefit of the depth of DNN architectures for efficient function approximation. This observation is consistent with established results based on alternative expressiveness measures of DNNs. In contrast to most of the existing work, our analysis based on the Lipschitz properties of DNNs is applicable to a wider range of activation nonlinearities and potentially allows us to make sensible comparisons between the complexity of a DNN and the function to be approximated by the DNN. We consider this work to be a step towards understanding the expressive power of DNNs and towards designing appropriate deep architectures for practical applications such as system control.
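As a rough numerical illustration of how a Lipschitz bound can grow with depth, the sketch below computes the standard product-of-spectral-norms upper bound for a fully-connected network with a 1-Lipschitz activation (ReLU here), alongside an empirical lower bound obtained from finite differences. This is not the random-matrix-theory derivation from the paper; the width, the standard Gaussian weights, the seed, and all function names are assumptions made for illustration.

```python
import numpy as np


def relu_forward(weights, x):
    """Apply a bias-free fully-connected ReLU network to the vector x."""
    for W in weights:
        x = np.maximum(W @ x, 0.0)
    return x


def lipschitz_upper_bound(weights):
    """Product of layer spectral norms.

    Valid as an upper bound whenever every activation is 1-Lipschitz.
    """
    return float(np.prod([np.linalg.norm(W, 2) for W in weights]))


def empirical_lower_bound(weights, rng, trials=200, eps=1e-3):
    """Largest observed ratio ||f(x) - f(y)|| / ||x - y|| over random pairs.

    Any such ratio is a lower bound on the true Lipschitz constant.
    """
    width = weights[0].shape[1]
    best = 0.0
    for _ in range(trials):
        x = rng.standard_normal(width)
        y = x + eps * rng.standard_normal(width)
        num = np.linalg.norm(relu_forward(weights, x) - relu_forward(weights, y))
        best = max(best, float(num / np.linalg.norm(x - y)))
    return best


# Assumed setup: square layers of width 16 with standard Gaussian entries.
rng = np.random.default_rng(0)
width = 16
layers = [rng.standard_normal((width, width)) for _ in range(8)]

for depth in (2, 4, 8):
    ws = layers[:depth]
    print(depth, empirical_lower_bound(ws, rng), lipschitz_upper_bound(ws))
```

With weights of this scale each layer's spectral norm exceeds one, so the upper bound is multiplied by a factor greater than one with every added layer. The sampled lower bound is typically far below the upper bound, since random input pairs rarely align with the worst-case direction.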
