Shenghao Qin

SAT: Data-light Uncertainty Set Merging via Synthetics, Aggregation, and Test Inversion

Oct 16, 2024

Abstract: The integration of uncertainty sets has diverse applications but also presents challenges, particularly when only the initial sets and their control levels are available, possibly with dependencies among them. Examples include merging confidence sets from different distributed sites under communication constraints, and combining conformal prediction sets generated by different learning algorithms or data splits. In this article, we introduce an efficient and flexible Synthetics, Aggregation, and Test inversion (SAT) approach that merges multiple, potentially dependent uncertainty sets into a single set. The proposed method constructs a novel class of synthetic test statistics, aggregates them, and then derives the merged set through test inversion. Our approach leverages the duality between set estimation and hypothesis testing, ensuring reliable coverage in dependent scenarios. The procedure is data-light: it relies solely on the initial sets and their control levels, without requiring raw data, and it adapts to any user-specified initial uncertainty sets, including sets with different coverage levels. Theoretical analyses and numerical experiments confirm that SAT provides finite-sample coverage guarantees and achieves small set sizes.
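The abstract does not spell out SAT's synthetic statistics, so the following is only a minimal sketch of the general synthesize, aggregate, invert pattern, built on a swapped-in construction rather than the paper's. Each initial set C_k at miscoverage level alpha_k yields the statistic 1{theta not in C_k} / alpha_k, which has expectation at most 1 at the true value (an e-value). The statistics are averaged (an average of e-values is still an e-value under arbitrary dependence), and Markov's inequality supplies the level-alpha test that is inverted. Closed intervals, the evaluation grid, and the names `synthetic_e_value` and `merge_sets` are illustrative assumptions.

```python
from typing import List, Sequence, Tuple

# Closed interval [lo, hi]; an illustrative stand-in for a general uncertainty set.
Interval = Tuple[float, float]


def synthetic_e_value(theta: float, interval: Interval, alpha_k: float) -> float:
    """Assumed synthetic statistic: 1{theta not in C_k} / alpha_k.

    If C_k misses the true value with probability at most alpha_k, this
    statistic has expectation at most 1 at the true value (an e-value).
    """
    lo, hi = interval
    return 0.0 if lo <= theta <= hi else 1.0 / alpha_k


def merge_sets(grid: Sequence[float], intervals: Sequence[Interval],
               alphas: Sequence[float], alpha: float) -> List[float]:
    """Merge sets by averaging e-values and inverting a Markov-inequality test.

    The average of e-values is an e-value under any dependence, so
    P(mean >= 1/alpha) <= alpha at the true value. Keeping the points whose
    average stays below 1/alpha therefore gives coverage of at least 1 - alpha;
    the grid only discretizes the merged set for display.
    """
    kept = []
    for theta in grid:
        e_values = [synthetic_e_value(theta, iv, a) for iv, a in zip(intervals, alphas)]
        e_bar = sum(e_values) / len(e_values)   # aggregation step
        if e_bar < 1.0 / alpha:                 # test inversion: theta is not rejected
            kept.append(theta)
    return kept


if __name__ == "__main__":
    grid = [i / 2 for i in range(11)]           # 0.0, 0.5, ..., 5.0
    intervals = [(0.0, 2.0), (1.0, 3.0), (1.5, 4.0)]
    print(merge_sets(grid, intervals, [0.05, 0.05, 0.05], alpha=0.1))
    # -> [1.0, 1.5, 2.0, 2.5, 3.0]
```

With three 95% intervals and a 90% target, only points covered by at least two of the three intervals survive, which is the majority-vote rule. Because per-set levels enter through 1/alpha_k, sets with different coverage levels are handled without extra code. This rule can be conservative; it is shown only to make the three steps concrete.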

Alteration Detection of Tensor Dependence Structure via Sparsity-Exploited Reranking Algorithm

Oct 13, 2023

Abstract: Tensor-valued data arise frequently in a wide variety of scientific applications, and many of these can be translated into the problem of detecting alterations in tensor dependence structures. In this article, we formulate the problem under the widely adopted tensor-normal distribution and focus on two-sample comparisons of the correlations and partial correlations of tensor-valued observations. Through decorrelation and centralization, a separable covariance structure is employed to pool sample information across tensor modes and enhance the power of the test. Additionally, we propose a novel Sparsity-Exploited Reranking Algorithm (SERA) to further improve multiple testing efficiency. The algorithm reranks the p-values derived from the primary test statistics by incorporating a carefully constructed auxiliary tensor sequence. Beyond the tensor framework, SERA is generally applicable to a wide range of two-sample large-scale inference problems with sparsity structures and is of independent interest. The asymptotic properties of the proposed test are derived, and the algorithm is shown to control the false discovery rate at the pre-specified level. We demonstrate the efficacy of the proposed method through extensive simulations and two scientific applications.
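The abstract does not give SERA's reranking rule, so the sketch below is only a hedged point of reference, not the SERA algorithm. It implements the Benjamini-Hochberg (BH) step-up procedure together with its weighted variant, in which each p-value is divided by a weight (weights rescaled to average one) before ranking. This is a standard way to let auxiliary information reorder p-values ahead of an FDR-controlling cutoff. The `weights` argument is a hypothetical stand-in for whatever SERA derives from its auxiliary tensor sequence.

```python
from typing import List, Optional, Sequence


def weighted_bh(pvals: Sequence[float], alpha: float,
                weights: Optional[Sequence[float]] = None) -> List[int]:
    """Return indices rejected by the (weighted) Benjamini-Hochberg step-up rule.

    With weights=None this is plain BH. Otherwise the weights are rescaled to
    average one and each p-value is replaced by p_i / w_i before ranking, so
    hypotheses with larger auxiliary weight move up the ranking.
    """
    m = len(pvals)
    if weights is None:
        weights = [1.0] * m
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must have a positive sum")
    w = [wi * m / total for wi in weights]               # normalize to mean 1
    q = [p / wi if wi > 0 else float("inf") for p, wi in zip(pvals, w)]
    order = sorted(range(m), key=lambda i: q[i])         # rerank by weighted p-values
    k_max = 0
    for rank, i in enumerate(order, start=1):
        if q[i] <= alpha * rank / m:                     # step-up: keep largest passing rank
            k_max = rank
    return sorted(order[:k_max])


if __name__ == "__main__":
    pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
    print(weighted_bh(pvals, 0.05))                                  # -> [0, 1]
    print(weighted_bh(pvals, 0.05, [1, 1, 4, 4, 0, 0, 0, 0, 0, 0]))  # -> [0, 1, 2, 3]
```

Upweighting hypotheses 2 and 3 moves them ahead in the ranking, so they are rejected alongside 0 and 1. The usual FDR guarantee for weighted BH assumes independent p-values and weights fixed without looking at those p-values; how SERA builds its auxiliary sequence to satisfy comparable conditions is specific to the paper.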

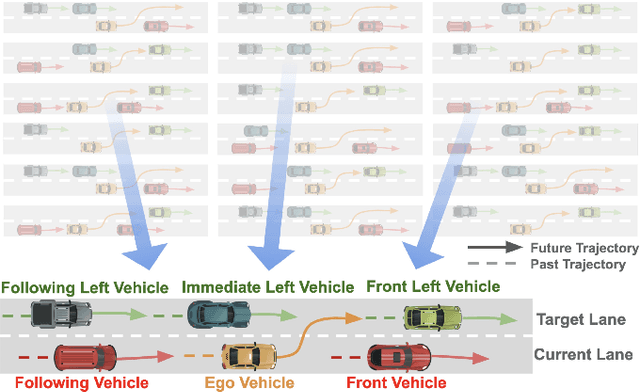

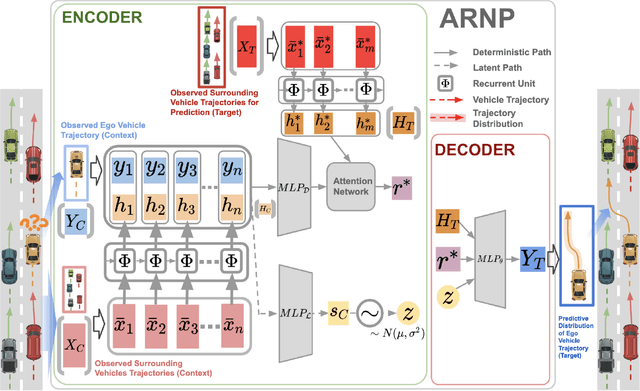

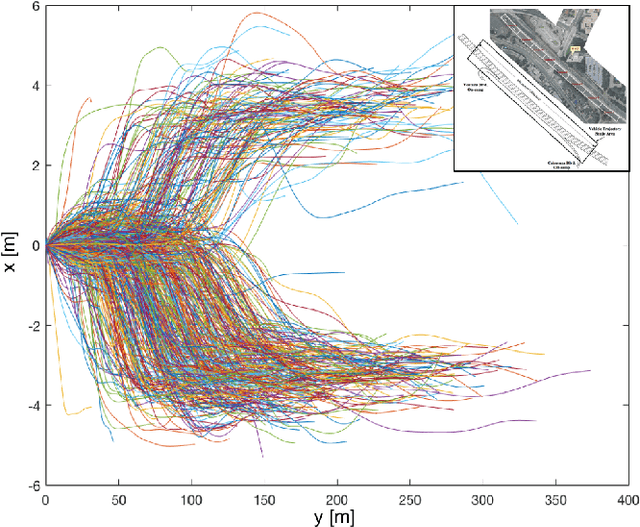

Probabilistic Trajectory Prediction for Autonomous Vehicles with Attentive Recurrent Neural Process

Oct 17, 2019

Abstract: Predicting the behaviors of surrounding vehicles is critical for autonomous vehicles negotiating multi-vehicle interaction scenarios. Most existing approaches require a tedious training process with large amounts of data and may fail to capture the propagating uncertainty in interaction behaviors. In this work, multi-vehicle behaviors are assumed to be generated by a stochastic process. This paper proposes an attentive recurrent neural process (ARNP) approach to overcome these limitations, which uses a neural process (NP) to learn a distribution over multi-vehicle interaction behaviors. The proposed model inherits the flexibility of neural networks while maintaining Bayesian probabilistic characteristics. Built by combining NPs with recurrent neural networks (RNNs), the ARNP model predicts the distribution of a target vehicle's trajectory conditioned on the observed long-term sequential data of all surrounding vehicles. The approach is verified by learning and predicting lane-changing trajectories in complex traffic scenarios. Experimental results demonstrate that the proposed method outperforms previous counterparts in terms of accuracy and uncertainty expressiveness. Moreover, the meta-learning nature of NPs enables the ARNP model to capture global information from all observations and thereby adapt to new targets efficiently.
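To make the idea of an NP conditioned on sequential context concrete, here is a deliberately tiny toy, not the ARNP architecture. Each surrounding vehicle's observed sequence is summarized by a scalar recurrent (Elman) cell, the summaries are mean-pooled into one representation as in a neural process, and a linear decoder outputs the mean and standard deviation of a Gaussian prediction for the target. All weights are hand-picked placeholders (no training, no attention, no latent path), and the names `rnn_encode`, `np_predict`, and `gaussian_nll` are hypothetical.

```python
import math
from typing import Sequence, Tuple


def rnn_encode(seq: Sequence[float], w_in: float = 0.8,
               w_rec: float = 0.5, b: float = 0.1) -> float:
    """Scalar Elman cell h_t = tanh(w_in * x_t + w_rec * h_{t-1} + b).

    The final state depends on the order of the inputs, so temporal
    structure within each observed sequence is preserved.
    """
    h = 0.0
    for x in seq:
        h = math.tanh(w_in * x + w_rec * h + b)
    return h


def np_predict(context: Sequence[Sequence[float]], target_x: float) -> Tuple[float, float]:
    """Gaussian predictive (mean, std) for a target input given context sequences.

    Mean-pooling with math.fsum (exactly rounded) makes the result independent
    of the order in which the context sequences, e.g. vehicles, are listed.
    """
    r = math.fsum(rnn_encode(s) for s in context) / len(context)
    mu = 1.0 * r + 0.5 * target_x                                   # placeholder decoder weights
    sigma = 0.01 + math.log1p(math.exp(0.3 * r + 0.2 * target_x))  # softplus keeps std > 0
    return mu, sigma


def gaussian_nll(y: float, mu: float, sigma: float) -> float:
    """Negative log-likelihood of y under N(mu, sigma^2), the kind of term NP objectives use."""
    return 0.5 * math.log(2 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2 * sigma ** 2)


if __name__ == "__main__":
    context = [[0.0, 0.2, 0.5], [1.0, 0.8, 0.4]]   # toy observed sequences
    mu, sigma = np_predict(context, target_x=0.3)
    print(mu, sigma, gaussian_nll(0.6, mu, sigma))
```

The split mirrors the abstract's description at a very small scale: the recurrent cell captures order within each sequence, while the pooled representation treats the set of context sequences as unordered, which is the property that lets an NP condition on a variable number of observations.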

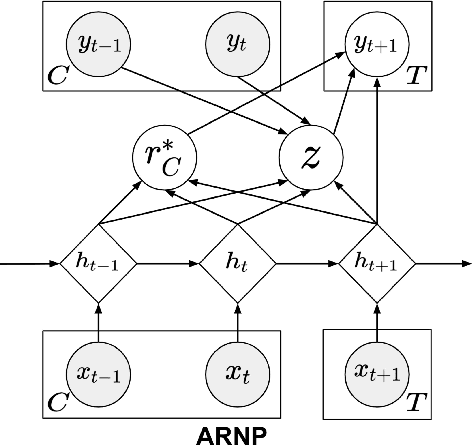

Recurrent Attentive Neural Process for Sequential Data

Oct 17, 2019

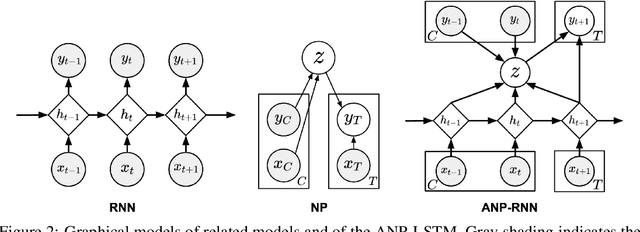

Abstract: Neural processes (NPs) learn stochastic processes and predict the distribution of target outputs adaptively, conditioned on a context set of observed input-output pairs. The Attentive Neural Process (ANP) further improved the prediction accuracy of NPs by incorporating an attention mechanism among contexts and targets. In a number of real-world applications, such as robotics, finance, speech, and biology, it is critical to learn the temporal order and recurrent structure of sequential data. However, the ability of NPs to capture these properties is limited by their inherent permutation invariance. In this paper, we propose the Recurrent Attentive Neural Process (RANP), or alternatively, Attentive Neural Process-Recurrent Neural Network (ANP-RNN), in which the ANP is incorporated into a recurrent neural network. The proposed model combines the inductive biases of recurrent neural networks with the strength of NPs in modelling uncertainty. We demonstrate that RANP can effectively model sequential data and substantially outperforms NPs and LSTMs on a 1D regression toy example as well as in autonomous-driving applications.
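The attention step that distinguishes the ANP from a plain NP can be illustrated with scaled dot-product cross-attention, in which each target query takes a softmax-weighted average of context values instead of the uniform mean a plain NP uses. The pure-Python sketch below shows only that operation, with hypothetical function names and toy vectors; it omits the learned projections, multi-head structure, and recurrent wrapper of RANP.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax (subtract the max before exponentiating)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries: Sequence[Vector], keys: Sequence[Vector],
                    values: Sequence[Vector]) -> List[List[float]]:
    """For each query q, return sum_j softmax_j(q . k_j / sqrt(d)) * v_j.

    queries: target representations; keys and values: context representations.
    """
    d = len(keys[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)                      # attention weights sum to 1
        out.append([sum(w * v[c] for w, v in zip(weights, values))
                    for c in range(len(values[0]))])
    return out


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0]]       # two context points
    values = [[10.0], [20.0]]
    # A query aligned with the first key puts most of its weight on the first value.
    print(cross_attention([[4.0, 0.0]], keys, values))
```

Because the weights depend on the query, different targets can draw on different context points, which is what the uniform mean of a plain NP cannot do. The result is still invariant to reordering the context points, which is why the abstract adds a recurrent structure to capture temporal order.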
