Shea Hagstrom

Methods for evaluating the resolution of 3D data derived from satellite images

Jun 13, 2025Abstract:3D data derived from satellite images is essential for scene modeling applications requiring large-scale coverage or involving locations not accessible by airborne lidar or cameras. Measuring the resolution of this data is important for determining mission utility and tracking improvements. In this work, we consider methods to evaluate the resolution of point clouds, digital surface models, and 3D mesh models. We describe 3D metric evaluation tools and workflows that enable automated evaluation based on high-resolution reference airborne lidar, and we present results of analyses with data of varying quality.

Cumulative Assessment for Urban 3D Modeling

Jul 09, 2021

Abstract:Urban 3D modeling from satellite images requires accurate semantic segmentation to delineate urban features, multiple view stereo for 3D reconstruction of surface heights, and 3D model fitting to produce compact models with accurate surface slopes. In this work, we present a cumulative assessment metric that succinctly captures error contributions from each of these components. We demonstrate our approach by providing challenging public datasets and extending two open source projects to provide an end-to-end 3D modeling baseline solution to stimulate further research and evaluation with a public leaderboard.

Single View Geocentric Pose in the Wild

May 18, 2021

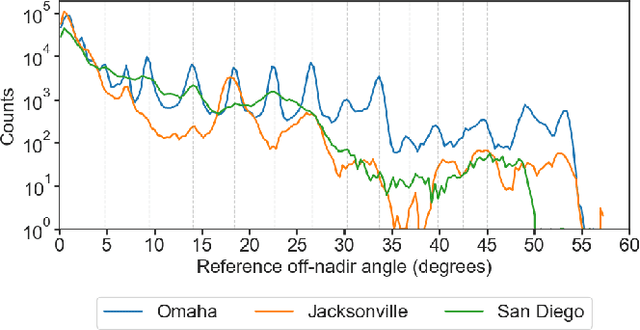

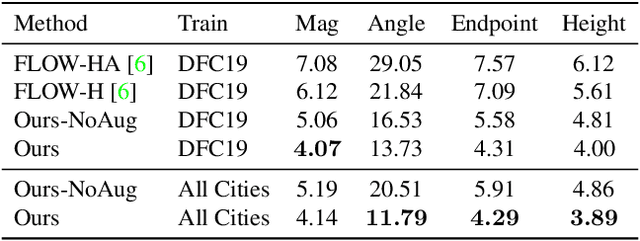

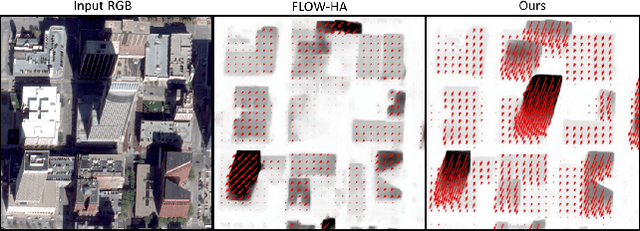

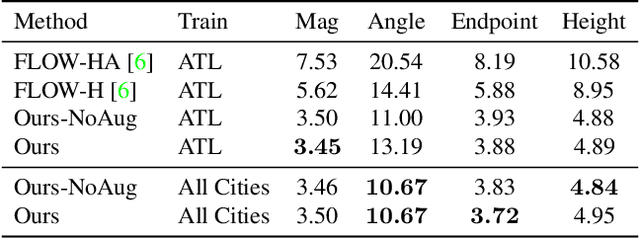

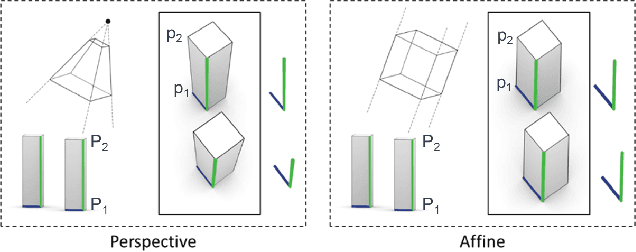

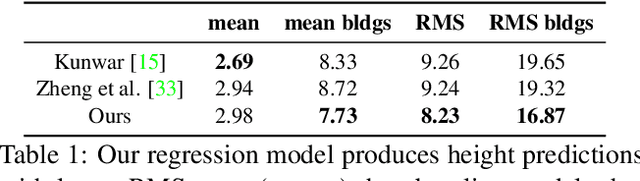

Abstract:Current methods for Earth observation tasks such as semantic mapping, map alignment, and change detection rely on near-nadir images; however, often the first available images in response to dynamic world events such as natural disasters are oblique. These tasks are much more difficult for oblique images due to observed object parallax. There has been recent success in learning to regress geocentric pose, defined as height above ground and orientation with respect to gravity, by training with airborne lidar registered to satellite images. We present a model for this novel task that exploits affine invariance properties to outperform state of the art performance by a wide margin. We also address practical issues required to deploy this method in the wild for real-world applications. Our data and code are publicly available.

Learning Geocentric Object Pose in Oblique Monocular Images

Jul 01, 2020

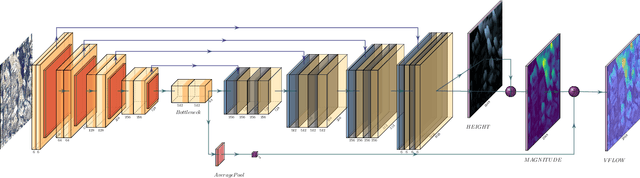

Abstract:An object's geocentric pose, defined as the height above ground and orientation with respect to gravity, is a powerful representation of real-world structure for object detection, segmentation, and localization tasks using RGBD images. For close-range vision tasks, height and orientation have been derived directly from stereo-computed depth and more recently from monocular depth predicted by deep networks. For long-range vision tasks such as Earth observation, depth cannot be reliably estimated with monocular images. Inspired by recent work in monocular height above ground prediction and optical flow prediction from static images, we develop an encoding of geocentric pose to address this challenge and train a deep network to compute the representation densely, supervised by publicly available airborne lidar. We exploit these attributes to rectify oblique images and remove observed object parallax to dramatically improve the accuracy of localization and to enable accurate alignment of multiple images taken from very different oblique viewpoints. We demonstrate the value of our approach by extending two large-scale public datasets for semantic segmentation in oblique satellite images. All of our data and code are publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge