Shahin Atakishiyev

Incorporating Explanations into Human-Machine Interfaces for Trust and Situation Awareness in Autonomous Vehicles

Apr 10, 2024

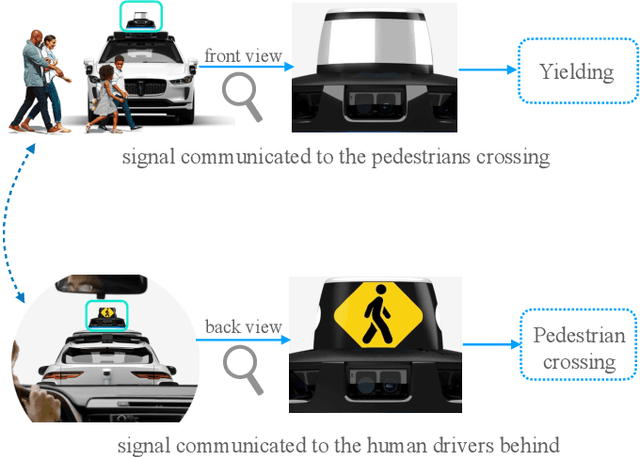

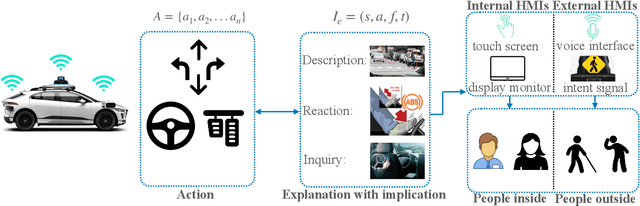

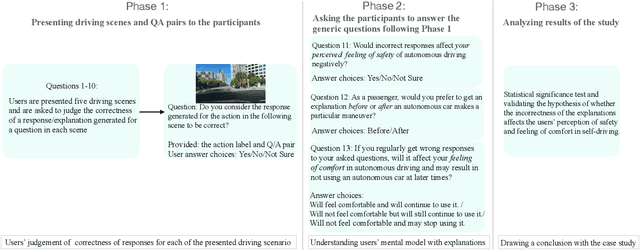

Abstract: Autonomous vehicles often make complex decisions via machine learning-based predictive models applied to collected sensor data. While this combination of methods provides a foundation for real-time actions, self-driving behavior primarily remains opaque to end users. In this sense, explainability of real-time decisions is a crucial and natural requirement for building trust in autonomous vehicles. Moreover, as autonomous vehicles still cause serious traffic accidents for various reasons, timely conveyance of upcoming hazards to road users can help improve scene understanding and prevent potential risks. Hence, there is also a need to supply autonomous vehicles with user-friendly interfaces for effective human-machine teaming. Motivated by this problem, we study the role of explainable AI and human-machine interface jointly in building trust in vehicle autonomy. We first present a broad context of the explanatory human-machine systems with the "3W1H" (what, whom, when, how) approach. Based on these findings, we present a situation awareness framework for calibrating users' trust in self-driving behavior. Finally, we perform an experiment on our framework, conduct a user study on it, and validate the empirical findings with hypothesis testing.

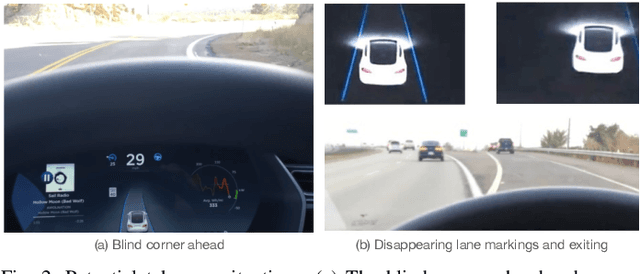

Safety Implications of Explainable Artificial Intelligence in End-to-End Autonomous Driving

Mar 18, 2024

Abstract: The end-to-end learning pipeline is gradually creating a paradigm shift in the ongoing development of highly autonomous vehicles, largely due to advances in deep learning, the availability of large-scale training datasets, and improvements in integrated sensor devices. However, a lack of interpretability in real-time decisions with contemporary learning methods impedes user trust and attenuates the widespread deployment and commercialization of such vehicles. Moreover, the issue is exacerbated when these cars are involved in or cause traffic accidents. Such drawback raises serious safety concerns from societal and legal perspectives. Consequently, explainability in end-to-end autonomous driving is essential to enable the safety of vehicular automation. However, the safety and explainability aspects of autonomous driving have generally been investigated disjointly by researchers in today's state of the art. In this paper, we aim to bridge the gaps between these topics and seek to answer the following research question: When and how can explanations improve safety of autonomous driving? In this regard, we first revisit established safety and state-of-the-art explainability techniques in autonomous driving. Furthermore, we present three critical case studies and show the pivotal role of explanations in enhancing self-driving safety. Finally, we describe our empirical investigation and reveal potential value, limitations, and caveats with practical explainable AI methods on their role of assuring safety and transparency for vehicle autonomy.

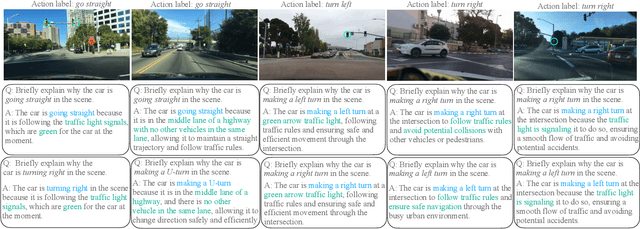

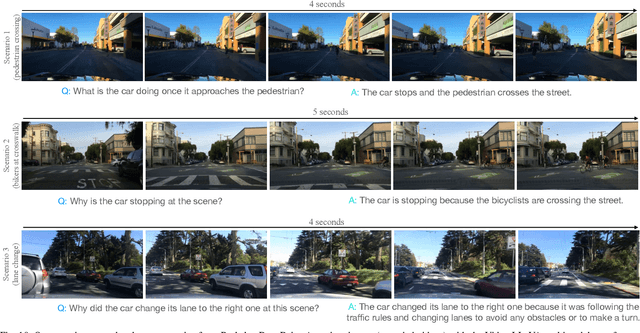

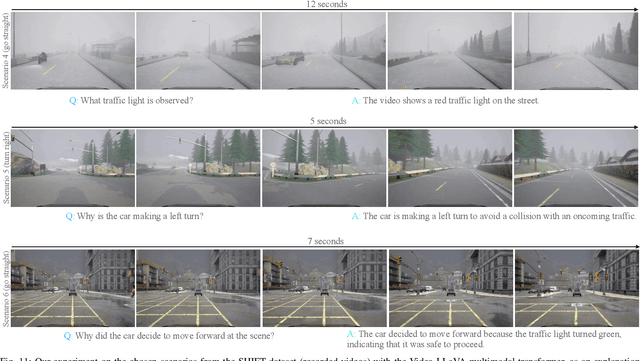

Explaining Autonomous Driving Actions with Visual Question Answering

Jul 19, 2023

Abstract: The end-to-end learning ability of self-driving vehicles has achieved significant milestones over the last decade owing to rapid advances in deep learning and computer vision algorithms. However, as autonomous driving technology is a safety-critical application of artificial intelligence (AI), road accidents and established regulatory principles necessitate the need for the explainability of intelligent action choices for self-driving vehicles. To facilitate interpretability of decision-making in autonomous driving, we present a Visual Question Answering (VQA) framework, which explains driving actions with question-answering-based causal reasoning. To do so, we first collect driving videos in a simulation environment using reinforcement learning (RL) and extract consecutive frames from this log data uniformly for five selected action categories. Further, we manually annotate the extracted frames using question-answer pairs as justifications for the actions chosen in each scenario. Finally, we evaluate the correctness of the VQA-predicted answers for actions on unseen driving scenes. The empirical results suggest that the VQA mechanism can provide support to interpret real-time decisions of autonomous vehicles and help enhance overall driving safety.

Explainable Artificial Intelligence for Autonomous Driving: A Comprehensive Overview and Field Guide for Future Research Directions

Dec 21, 2021

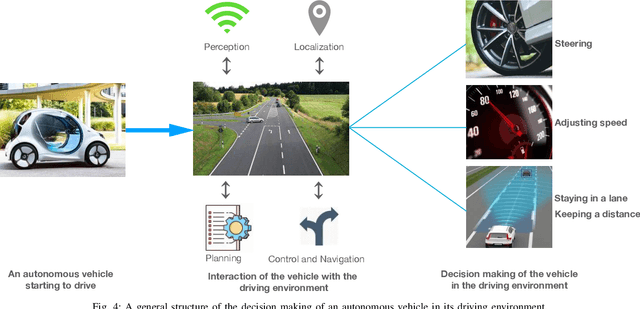

Abstract: Autonomous driving has achieved a significant milestone in research and development over the last decade. There is increasing interest in the field as the deployment of self-operating vehicles on roads promises safer and more ecologically friendly transportation systems. With the rise of computationally powerful artificial intelligence (AI) techniques, autonomous vehicles can sense their environment with high precision, make safe real-time decisions, and operate more reliably without human interventions. However, intelligent decision-making in autonomous cars is not generally understandable by humans in the current state of the art, and such deficiency hinders this technology from being socially acceptable. Hence, aside from making safe real-time decisions, the AI systems of autonomous vehicles also need to explain how these decisions are constructed in order to be regulatory compliant across many jurisdictions. Our study sheds a comprehensive light on developing explainable artificial intelligence (XAI) approaches for autonomous vehicles. In particular, we make the following contributions. First, we provide a thorough overview of the present gaps with respect to explanations in the state-of-the-art autonomous vehicle industry. We then show the taxonomy of explanations and explanation receivers in this field. Thirdly, we propose a framework for an architecture of end-to-end autonomous driving systems and justify the role of XAI in both debugging and regulating such systems. Finally, as future research directions, we provide a field guide on XAI approaches for autonomous driving that can improve operational safety and transparency towards achieving public approval by regulators, manufacturers, and all engaged stakeholders.

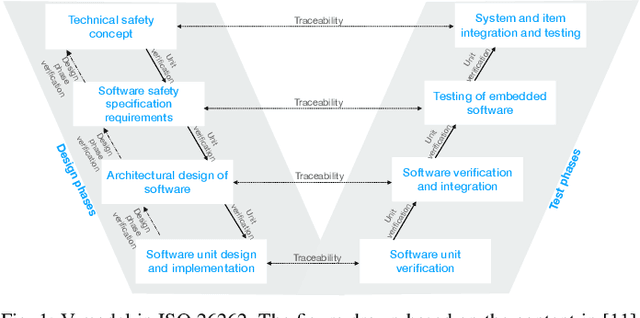

Towards safe, explainable, and regulated autonomous driving

Nov 20, 2021

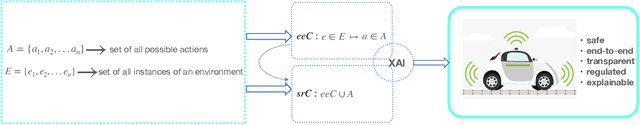

Abstract: There has been growing interest in the development and deployment of autonomous vehicles on modern road networks over the last few years, encouraged by the empirical successes of powerful artificial intelligence approaches (AI), especially in the applications of deep and reinforcement learning. However, there have been several road accidents with "autonomous" cars that prevent this technology from being publicly acceptable at a wider level. As AI is the main driving force behind the intelligent navigation systems of such vehicles, both the stakeholders and transportation jurisdictions require their AI-driven software architecture to be safe, explainable, and regulatory compliant. We present a framework that integrates autonomous control, explainable AI architecture, and regulatory compliance to address this issue and further provide several conceptual models from this perspective, to help guide future research directions.

Analysis of Word Embeddings using Fuzzy Clustering

Jul 20, 2019

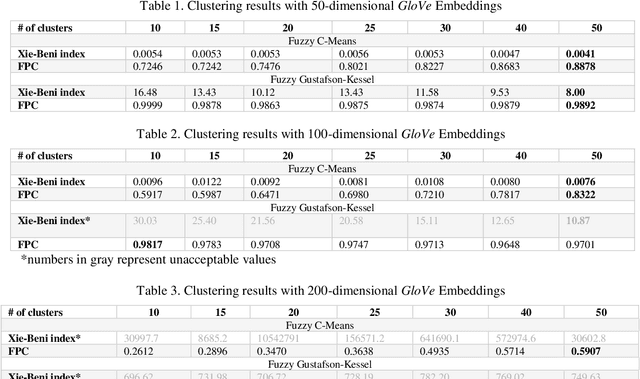

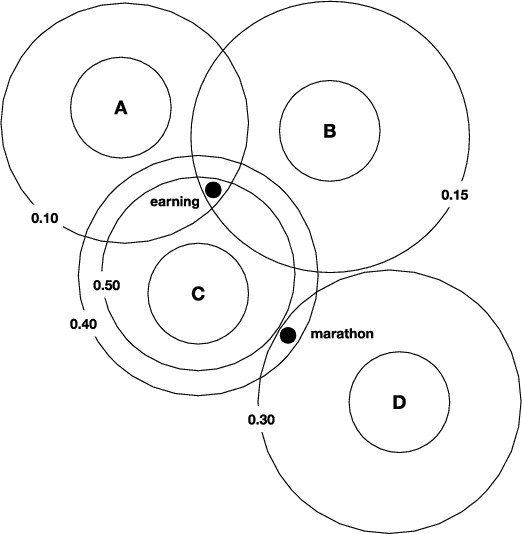

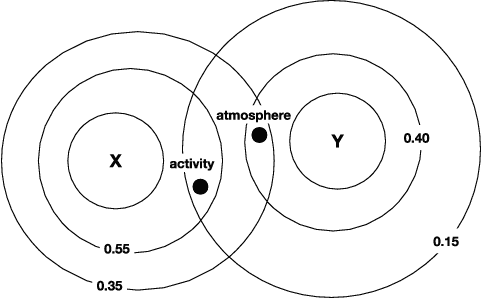

Abstract: In data dominated systems and applications, a concept of representing words in a numerical format has gained a lot of attention. There are a few approaches used to generate such a representation. An interesting issue that should be considered is the ability of such representations - called embeddings - to imitate human-based semantic similarity between words. In this study, we perform a fuzzy-based analysis of vector representations of words, i.e., word embeddings. We use two popular fuzzy clustering algorithms on count-based word embeddings, known as GloVe, of different dimensionality. Words from WordSim-353, called the gold standard, are represented as vectors and clustered. The results indicate that fuzzy clustering algorithms are very sensitive to high-dimensional data, and parameter tuning can dramatically change their performance. We show that by adjusting the value of the fuzzifier parameter, fuzzy clustering can be successfully applied to vectors of high - up to one hundred - dimensions. Additionally, we illustrate that fuzzy clustering allows to provide interesting results regarding membership of words to different clusters.
