Sean Xin Xu

Enhancing Investment Analysis: Optimizing AI-Agent Collaboration in Financial Research

Nov 07, 2024

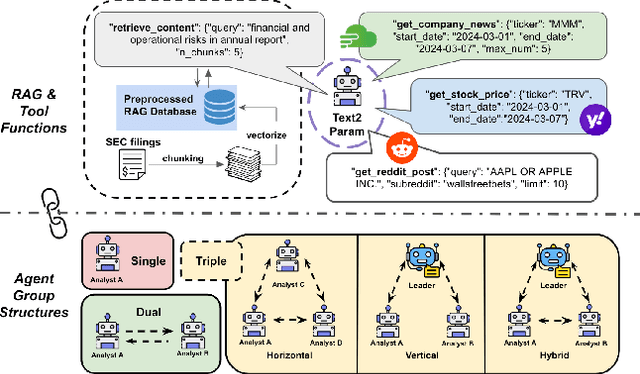

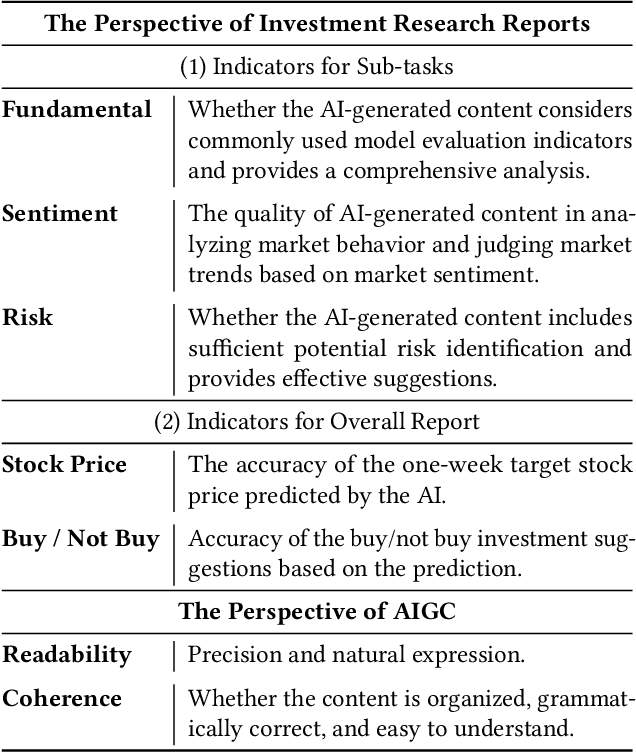

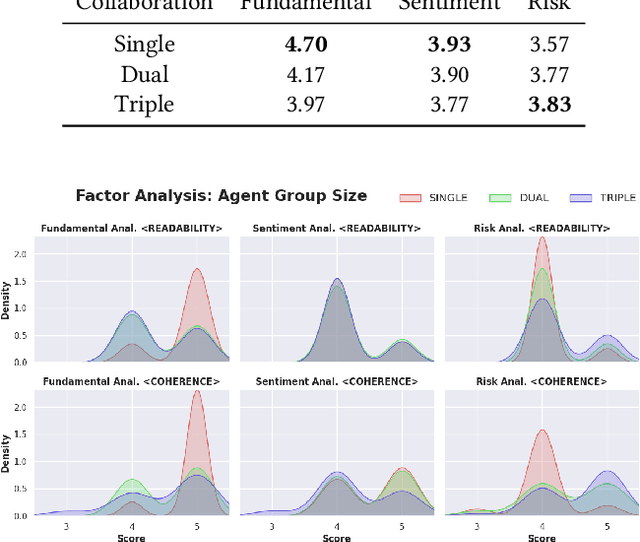

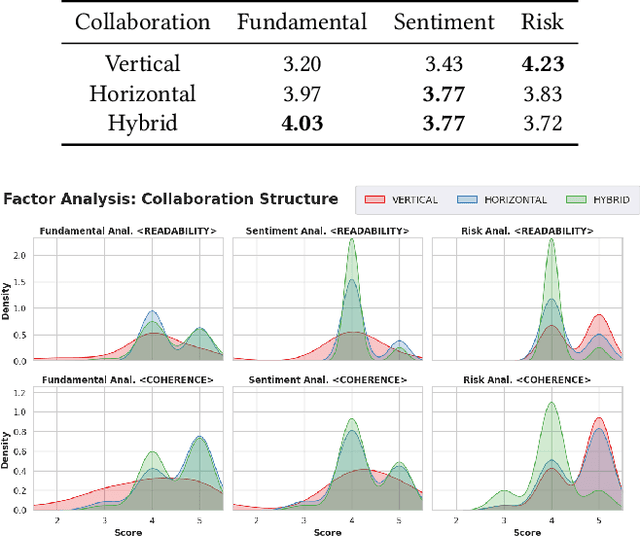

Abstract: In recent years, the application of generative artificial intelligence (GenAI) in financial analysis and investment decision-making has gained significant attention. However, most existing approaches rely on single-agent systems, which fail to fully utilize the collaborative potential of multiple AI agents. In this paper, we propose a novel multi-agent collaboration system designed to enhance decision-making in financial investment research. The system incorporates agent groups with both configurable group sizes and collaboration structures to leverage the strengths of each agent group type. By utilizing a sub-optimal combination strategy, the system dynamically adapts to varying market conditions and investment scenarios, optimizing performance across different tasks. We focus on three sub-tasks: fundamentals, market sentiment, and risk analysis, by analyzing the 2023 SEC 10-K forms of 30 companies listed on the Dow Jones Index. Our findings reveal significant performance variations based on the configurations of AI agents for different tasks. The results demonstrate that our multi-agent collaboration system outperforms traditional single-agent models, offering improved accuracy, efficiency, and adaptability in complex financial environments. This study highlights the potential of multi-agent systems in transforming financial analysis and investment decision-making by integrating diverse analytical perspectives.

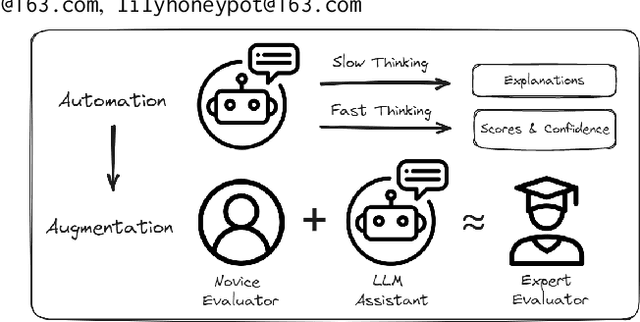

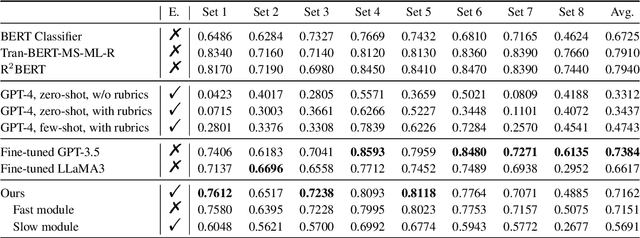

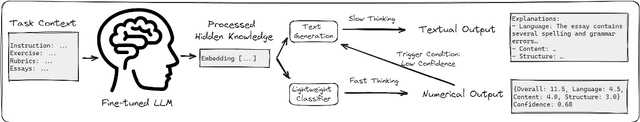

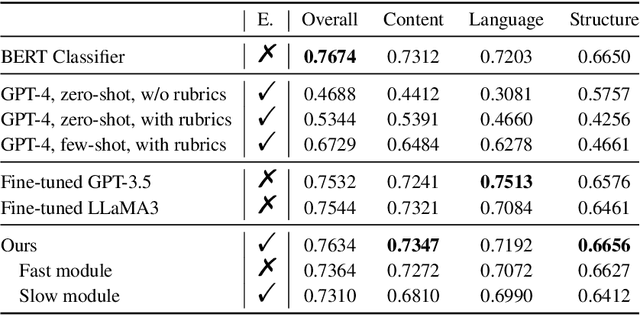

From Automation to Augmentation: Large Language Models Elevating Essay Scoring Landscape

Jan 12, 2024

Abstract: Receiving immediate and personalized feedback is crucial for second-language learners, and Automated Essay Scoring (AES) systems are a vital resource when human instructors are unavailable. This study investigates the effectiveness of Large Language Models (LLMs), specifically GPT-4 and fine-tuned GPT-3.5, as tools for AES. Our comprehensive set of experiments, conducted on both public and private datasets, highlights the remarkable advantages of LLM-based AES systems. They include superior accuracy, consistency, generalizability, and interpretability, with fine-tuned GPT-3.5 surpassing traditional grading models. Additionally, we undertake LLM-assisted human evaluation experiments involving both novice and expert graders. One pivotal discovery is that LLMs not only automate the grading process but also enhance the performance of human graders. Novice graders, when provided with feedback generated by LLMs, achieve a level of accuracy on par with experts, while experts become more efficient and maintain greater consistency in their assessments. These results underscore the potential of LLMs in educational technology, paving the way for effective collaboration between humans and AI, ultimately leading to transformative learning experiences through AI-generated feedback.

Multimodal Data Augmentation for Image Captioning using Diffusion Models

May 03, 2023

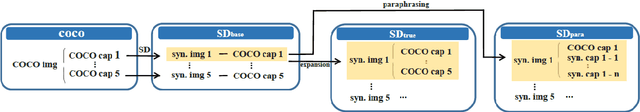

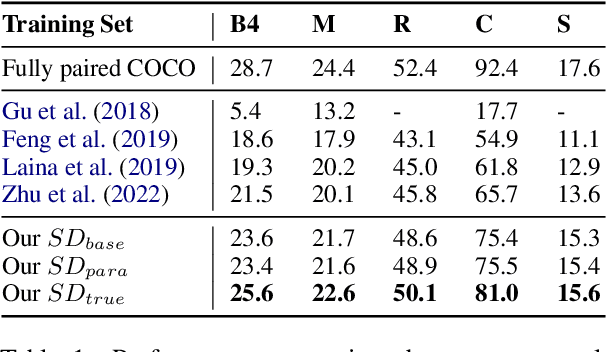

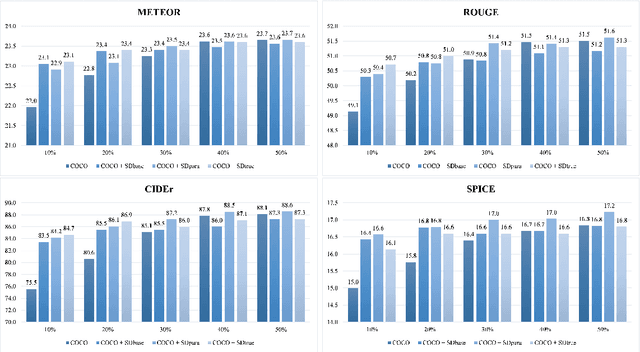

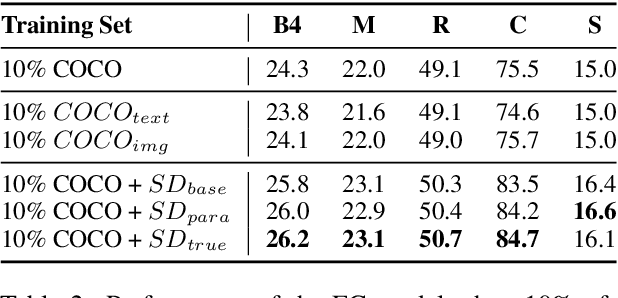

Abstract: Image captioning, an important vision-language task, often requires a tremendous number of finely labeled image-caption pairs for learning the underlying alignment between images and texts. In this paper, we propose a multimodal data augmentation method, leveraging a recent text-to-image model called Stable Diffusion, to expand the training set via high-quality generation of image-caption pairs. Extensive experiments on the MS COCO dataset demonstrate the advantages of our approach over several benchmark methods, with a particularly significant boost when fewer training instances are available. In addition, models trained on our augmented datasets also outperform prior unpaired image captioning methods by a large margin. Finally, training efficiency and effectiveness can be further improved by filtering the generated data based on quality assessment.
