Sandip Kundu

EZClone: Improving DNN Model Extraction Attack via Shape Distillation from GPU Execution Profiles

Apr 06, 2023

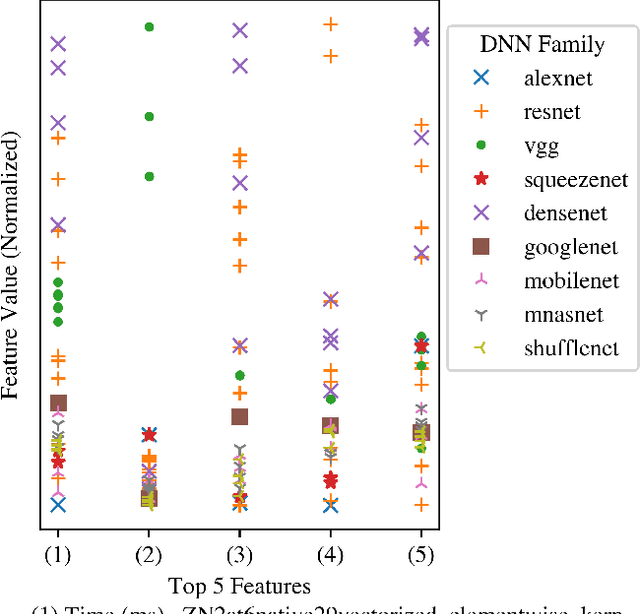

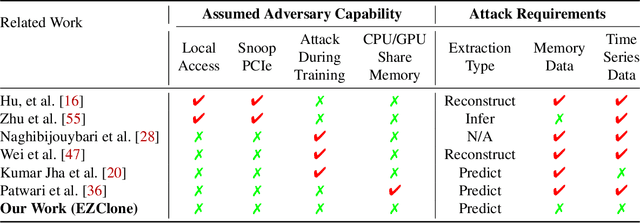

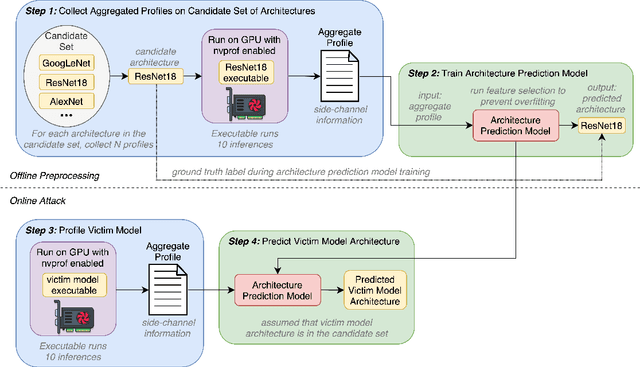

Abstract:Deep Neural Networks (DNNs) have become ubiquitous due to their performance on prediction and classification problems. However, they face a variety of threats as their usage spreads. Model extraction attacks, which steal DNNs, endanger intellectual property, data privacy, and security. Previous research has shown that system-level side-channels can be used to leak the architecture of a victim DNN, exacerbating these risks. We propose two DNN architecture extraction techniques catering to various threat models. The first technique uses a malicious, dynamically linked version of PyTorch to expose a victim DNN architecture through the PyTorch profiler. The second, called EZClone, exploits aggregate (rather than time-series) GPU profiles as a side-channel to predict DNN architecture, employing a simple approach and assuming little adversary capability as compared to previous work. We investigate the effectiveness of EZClone when minimizing the complexity of the attack, when applied to pruned models, and when applied across GPUs. We find that EZClone correctly predicts DNN architectures for the entire set of PyTorch vision architectures with 100% accuracy. No other work has shown this degree of architecture prediction accuracy with the same adversarial constraints or using aggregate side-channel information. Prior work has shown that, once a DNN has been successfully cloned, further attacks such as model evasion or model inversion can be accelerated significantly.

Hardening DNNs against Transfer Attacks during Network Compression using Greedy Adversarial Pruning

Jun 15, 2022

Abstract:The prevalence and success of Deep Neural Network (DNN) applications in recent years have motivated research on DNN compression, such as pruning and quantization. These techniques accelerate model inference, reduce power consumption, and reduce the size and complexity of the hardware necessary to run DNNs, all with little to no loss in accuracy. However, since DNNs are vulnerable to adversarial inputs, it is important to consider the relationship between compression and adversarial robustness. In this work, we investigate the adversarial robustness of models produced by several irregular pruning schemes and by 8-bit quantization. Additionally, while conventional pruning removes the least important parameters in a DNN, we investigate the effect of an unconventional pruning method: removing the most important model parameters based on the gradient on adversarial inputs. We call this method Greedy Adversarial Pruning (GAP) and we find that this pruning method results in models that are resistant to transfer attacks from their uncompressed counterparts.

MILR: Mathematically Induced Layer Recovery for Plaintext Space Error Correction of CNNs

Oct 28, 2020

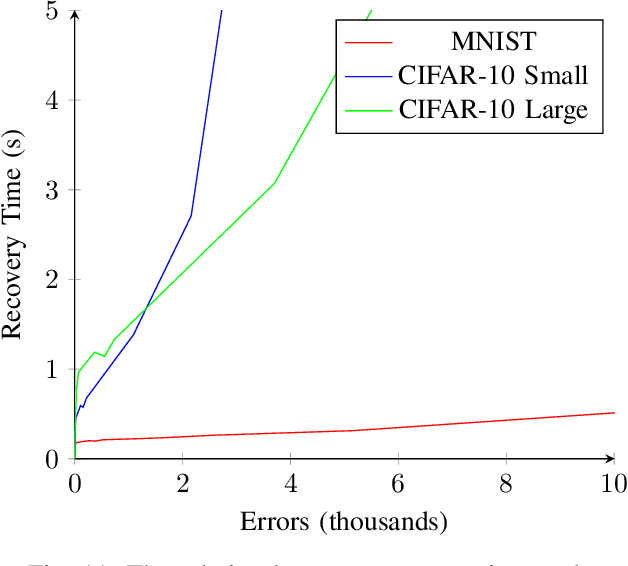

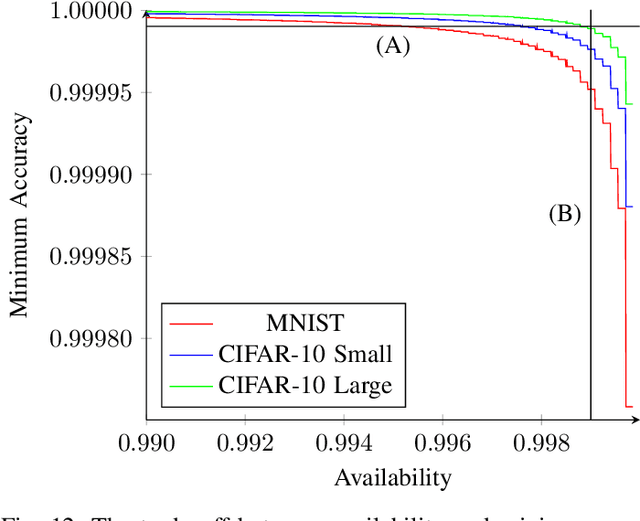

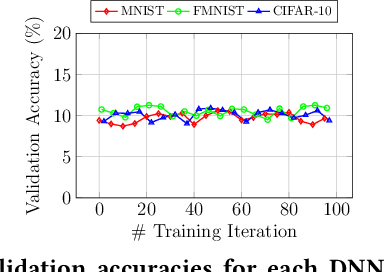

Abstract:The increased use of Convolutional Neural Networks (CNN) in mission critical systems has increased the need for robust and resilient networks in the face of both naturally occurring faults as well as security attacks. The lack of robustness and resiliency can lead to unreliable inference results. Current methods that address CNN robustness require hardware modification, network modification, or network duplication. This paper proposes MILR a software based CNN error detection and error correction system that enables self-healing of the network from single and multi bit errors. The self-healing capabilities are based on mathematical relationships between the inputs,outputs, and parameters(weights) of a layers, exploiting these relationships allow the recovery of erroneous parameters (weights) throughout a layer and the network. MILR is suitable for plaintext-space error correction (PSEC) given its ability to correct whole-weight and even whole-layer errors in CNNs.

Deep-Lock: Secure Authorization for Deep Neural Networks

Aug 13, 2020

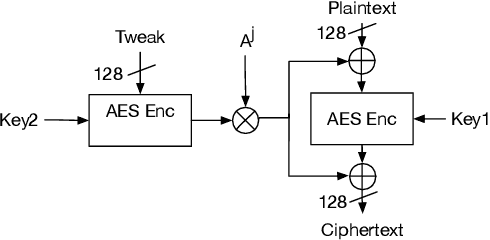

Abstract:Trained Deep Neural Network (DNN) models are considered valuable Intellectual Properties (IP) in several business models. Prevention of IP theft and unauthorized usage of such DNN models has been raised as of significant concern by industry. In this paper, we address the problem of preventing unauthorized usage of DNN models by proposing a generic and lightweight key-based model-locking scheme, which ensures that a locked model functions correctly only upon applying the correct secret key. The proposed scheme, known as Deep-Lock, utilizes S-Boxes with good security properties to encrypt each parameter of a trained DNN model with secret keys generated from a master key via a key scheduling algorithm. The resulting dense network of encrypted weights is found robust against model fine-tuning attacks. Finally, Deep-Lock does not require any intervention in the structure and training of the DNN models, making it applicable for all existing software and hardware implementations of DNN.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge