Ryusuke Sagawa

Self-supervised Extraction of Human Motion Structures via Frame-wise Discrete Features

Sep 12, 2023

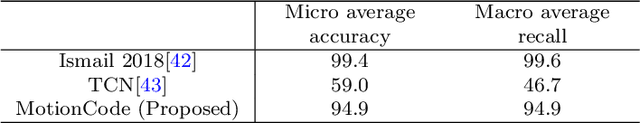

Abstract: The present paper proposes an encoder-decoder model for extracting, in a self-supervised manner, the structures of human motions represented by frame-wise discrete features. In the proposed method, features are extracted as codes in a motion codebook without the use of human knowledge, and the relationships between these codes can be visualized on a graph. Since the codes are expected to be temporally sparse compared to the captured frame rate and to be shared by multiple sequences, the proposed network model also addresses the need for training constraints. Specifically, the model consists of self-attention layers and a vector clustering block. The attention layers contribute to finding sparse keyframes and discrete features as motion codes, which are then extracted by vector clustering. The constraints are realized as training losses so that the same motion codes are as contiguous as possible and can be shared by multiple sequences. In addition, we propose the use of causal self-attention as a way to compute attention for long sequences consisting of many frames. In our experiments, the sparse structures of motion codes were used to compile a graph that facilitates visualization of the relationships between the codes and the differences between sequences. We then evaluated the effectiveness of the extracted motion codes by applying them to multiple recognition tasks and found that linear probing achieved performance comparable to that of task-optimized methods.
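
The abstract names two mechanisms: causal self-attention, in which each frame attends only to itself and earlier frames, and vector clustering, which maps frame features to discrete codes. The pure-Python sketch below illustrates both under simplifying assumptions (a single head with identity query, key, and value projections, and nearest-neighbour assignment to a fixed codebook). The function names are hypothetical, and the sketch omits the learned layers and training losses described in the paper. `count_segments` is an illustrative measure of how contiguous a code sequence is, the property the contiguity constraint targets.

```python
import math
from typing import List

Vector = List[float]


def causal_self_attention(x: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product self-attention with a causal mask.

    Frame t attends only to frames 0..t. Queries, keys, and values are the
    inputs themselves (identity projections), a simplification assumed for
    illustration; a real layer would use learned projection matrices.
    """
    d = len(x[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for t, q in enumerate(x):
        # Scores against the current and past frames only (the causal mask).
        scores = [scale * sum(a * b for a, b in zip(q, x[s])) for s in range(t + 1)]
        m = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - m) for s in scores]
        z = sum(weights)
        out.append([sum(w * x[s][k] for s, w in enumerate(weights)) / z for k in range(d)])
    return out


def assign_codes(features: List[Vector], codebook: List[Vector]) -> List[int]:
    """Map each frame feature to the index of its nearest codebook vector."""
    def sq_dist(a: Vector, b: Vector) -> float:
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return [min(range(len(codebook)), key=lambda k: sq_dist(f, codebook[k])) for f in features]


def count_segments(codes: List[int]) -> int:
    """Number of runs of identical consecutive codes (fewer means more contiguous)."""
    return sum(1 for i, c in enumerate(codes) if i == 0 or c != codes[i - 1])


frames = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
codebook = [[1.0, 0.0], [0.0, 1.0]]
codes = assign_codes(frames, codebook)
print(codes, count_segments(codes))  # [0, 0, 1, 1] 2
```

Because of the causal mask, changing a later frame never alters the outputs for earlier frames, so attention can be computed incrementally as frames arrive.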

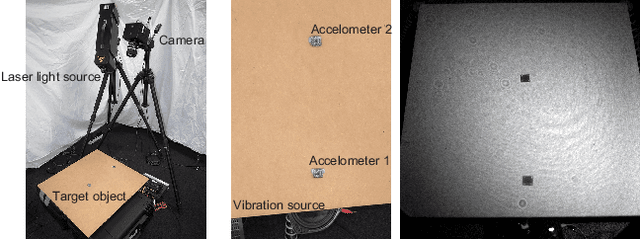

Dense Pixel-wise Micro-motion Estimation of Object Surface by using Low Dimensional Embedding of Laser Speckle Pattern

Oct 31, 2020

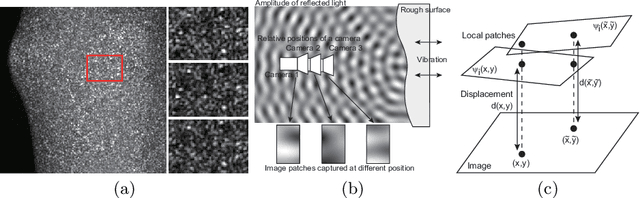

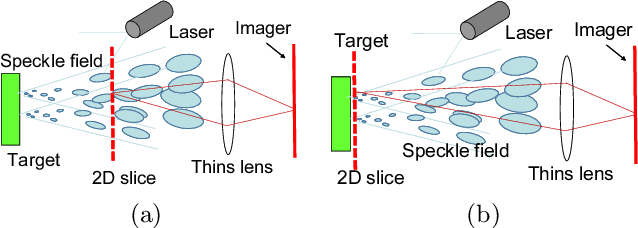

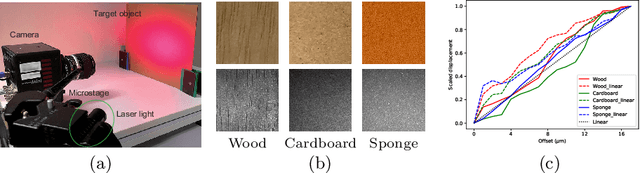

Abstract: This paper proposes a method for estimating, at each pixel, micro-motion of an object's surface that is too small to detect with a common camera and illumination setup. The method introduces an active-lighting approach to make the motion visually detectable. The approach is based on the speckle pattern, which is produced by the mutual interference of laser light on the object's surface and changes its appearance continuously according to the out-of-plane motion of the surface. However, the speckle pattern becomes uncorrelated under large motion. To handle both micro- and large motion, the method estimates the motion parameters up to scale at each pixel by nonlinearly embedding the speckle pattern into a low-dimensional space. The out-of-plane motion is then calculated by making the motion parameters spatially consistent across the image. In the experiments, the proposed method is compared with other measuring devices to demonstrate its effectiveness.
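
The abstract relies on the speckle pattern decorrelating under large motion. A common way to quantify this is zero-normalized cross-correlation (ZNCC) between intensity patches captured before and after the motion. The sketch below is a generic illustration of that measure, assumed here for clarity; it is not the paper's nonlinear low-dimensional embedding, and the random patch is only a stand-in for a real speckle image.

```python
import math
import random
from typing import Sequence


def zncc(a: Sequence[float], b: Sequence[float]) -> float:
    """Zero-normalized cross-correlation of two equal-length intensity patches.

    Returns a value in [-1, 1]: 1 means identical up to gain and offset,
    values near 0 mean the patches are uncorrelated. Returns 0.0 if either
    patch has zero variance.
    """
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    da = [v - ma for v in a]
    db = [v - mb for v in b]
    num = sum(x * y for x, y in zip(da, db))
    den = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    return num / den if den > 0 else 0.0


rng = random.Random(0)
patch = [rng.random() for _ in range(64)]  # stand-in for a speckle patch
print(round(zncc(patch, [2.0 * v + 5.0 for v in patch]), 6))  # 1.0: same pattern, new gain/offset
print(round(zncc(patch, [rng.random() for _ in range(64)]), 2))  # near 0: decorrelated pattern
```

The normalization makes the score insensitive to changes in laser power or exposure, so a drop in ZNCC reflects a change in the pattern itself rather than in overall brightness.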

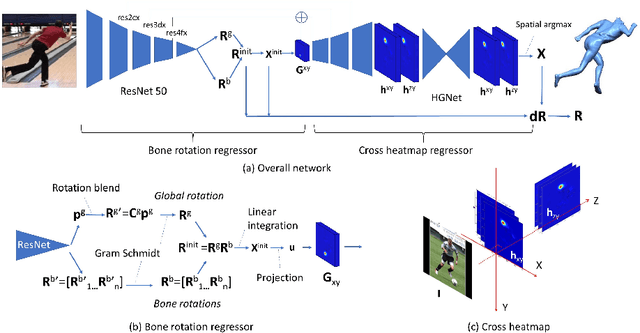

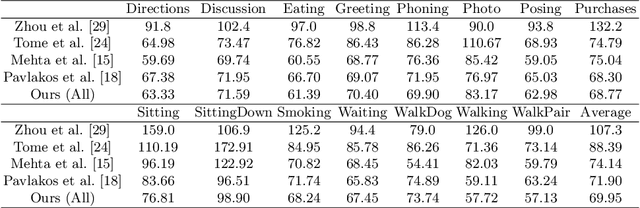

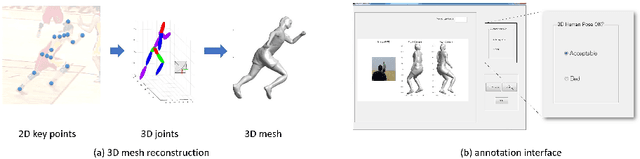

Skeleton Transformer Networks: 3D Human Pose and Skinned Mesh from Single RGB Image

Dec 29, 2018

Abstract: In this paper, we present Skeleton Transformer Networks (SkeletonNet), an end-to-end framework that can predict not only the 3D joint positions but also the 3D angular pose (bone rotations) of a human skeleton from a single color image. This in turn allows us to generate skinned mesh animations. Here, we propose a two-step regression approach. The first step regresses bone rotations to obtain an initial solution that takes the skeleton structure into account. The second step performs refinement with a heatmap regressor using a 3D pose representation called the cross heatmap, which stacks heatmaps of the xy and zy coordinates. By training the network on the proposed 3D human pose dataset, which is composed of images annotated with 3D skeletal angular poses, we show that SkeletonNet can predict a full 3D human pose (joint positions and bone rotations) from a single in-the-wild image.
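
The abstract describes the cross heatmap only as a stack of xy and zy heatmaps. The following is a minimal sketch of that representation for a single joint, assuming coordinates normalized to [0, 1], a square grid, Gaussian peaks, and plain argmax decoding. The grid size, sigma, helper names, and the averaging of the shared y coordinate are illustrative choices, not details from the paper.

```python
import math
from typing import List, Tuple

Grid = List[List[float]]


def gaussian_map(size: int, row: float, col: float, sigma: float) -> Grid:
    """size x size heatmap with a Gaussian peak at (row, col) in pixel units."""
    return [[math.exp(-((r - row) ** 2 + (c - col) ** 2) / (2 * sigma ** 2))
             for c in range(size)] for r in range(size)]


def encode_cross_heatmap(joint: Tuple[float, float, float], size: int = 16,
                         sigma: float = 1.0) -> List[Grid]:
    """Stack an xy heatmap and a zy heatmap for one joint.

    joint holds (x, y, z) normalized to [0, 1]. Both planes use y as rows,
    with x (first map) or z (second map) as columns.
    """
    x, y, z = (v * (size - 1) for v in joint)
    return [gaussian_map(size, y, x, sigma), gaussian_map(size, y, z, sigma)]


def argmax2d(grid: Grid) -> Tuple[int, int]:
    """(row, col) of the largest value in the grid."""
    return max(((r, c) for r in range(len(grid)) for c in range(len(grid[0]))),
               key=lambda rc: grid[rc[0]][rc[1]])


def decode_cross_heatmap(maps: List[Grid]) -> Tuple[float, float, float]:
    """Recover normalized (x, y, z); y is averaged over the two planes."""
    size = len(maps[0])
    (ry1, cx), (ry2, cz) = argmax2d(maps[0]), argmax2d(maps[1])
    return cx / (size - 1), (ry1 + ry2) / 2 / (size - 1), cz / (size - 1)


maps = encode_cross_heatmap((0.2, 0.6, 1.0))
print(decode_cross_heatmap(maps))  # (0.2, 0.6, 1.0)
```

Two 2D maps that share the y axis cost 2 x H x W values per joint, whereas a full volumetric heatmap would need H x W x D; that memory saving is one plausible motivation for such a representation, though the abstract does not state the authors' reasons.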

Depth estimation using structured light flow -- analysis of projected pattern flow on an object's surface --

Oct 02, 2017

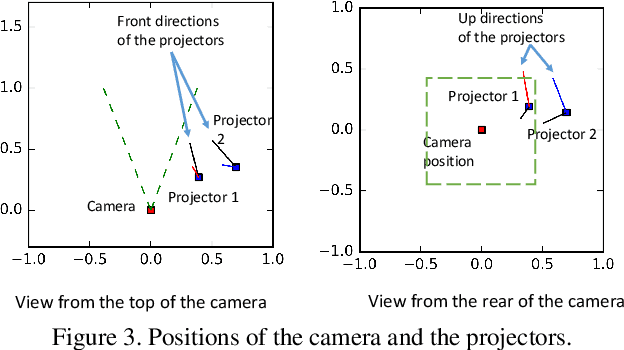

Abstract: Shape reconstruction techniques using structured light have been widely researched and developed because of their robustness, high precision, and density. Because these techniques are based on decoding a pattern to find correspondences, they implicitly require that the projected patterns be clearly captured by the image sensor, i.e., free of defocus and motion blur. Although intensive research has been conducted on defocus blur, little has addressed motion blur, and the only solution has been to capture images with an extremely fast shutter speed. In this paper, unlike previous approaches, we actively utilize motion blur, which we refer to as a light flow, to estimate depth. Our analysis reveals that at least two light flows, retrieved from two patterns projected onto the object, are required for depth estimation. To retrieve two light flows at the same time, two sets of parallel line patterns are projected from two video projectors, and the size of the motion blur of each line is precisely measured. By analyzing the light flows, i.e., the lengths of the blurs, scene depth is estimated. In the experiments, 3D shapes of fast-moving objects, which are inevitably captured with motion blur, are successfully reconstructed by our technique.
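
The abstract measures the size of the motion blur of each projected line. Assuming a dark background and a single line that translates uniformly during the exposure (a box blur), the blur length can be read from the extent of the smear, since the smear spans the line width plus the shift. The 1D sketch below illustrates only this measurement step at integer-pixel precision; the helper names are hypothetical, and the paper's conversion from two light flows to depth, which depends on the projector and camera geometry, is not reproduced here.

```python
from typing import List


def blurred_line_profile(length: int, start: int, width: int, shift: int) -> List[float]:
    """1D intensity profile of a bright line that moves during the exposure.

    The line occupies pixels [start, start + width) and translates uniformly
    by `shift` pixels, modeled as convolution with a normalized box of
    shift + 1 taps (a simple motion-blur model, assumed for illustration).
    """
    taps = shift + 1
    line = [1.0 if start <= i < start + width else 0.0 for i in range(length)]
    profile = [0.0] * length
    for i in range(length):
        for k in range(taps):
            if i - k >= 0:
                profile[i] += line[i - k] / taps
    return profile


def estimate_shift(profile: List[float], width: int, eps: float = 1e-9) -> int:
    """Blur length in pixels from the smear's support, which spans width + shift pixels."""
    support = sum(1 for v in profile if v > eps)
    return support - width


profile = blurred_line_profile(length=40, start=10, width=3, shift=7)
print(estimate_shift(profile, width=3))  # 7
```

A practical system would need subpixel edge localization and robustness to noise and ambient light to measure blur lengths precisely; this sketch only shows why the smear's extent encodes the motion.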