Ryan Gabbard

ADAM: A Sandbox for Implementing Language Learning

May 05, 2021

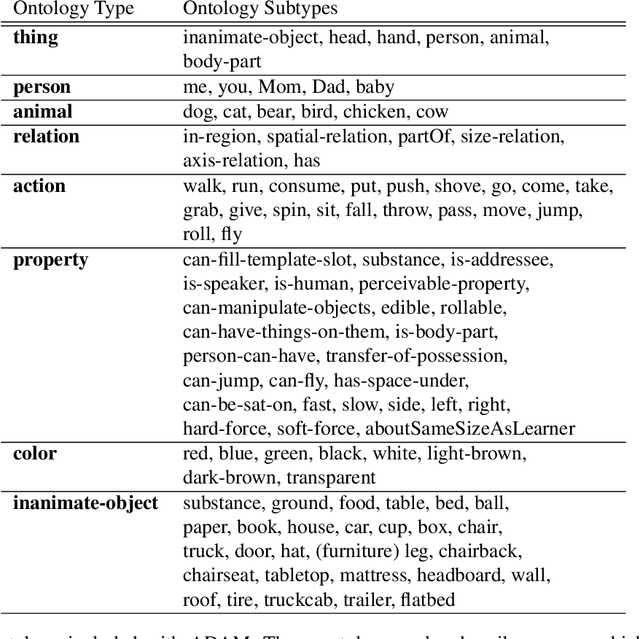

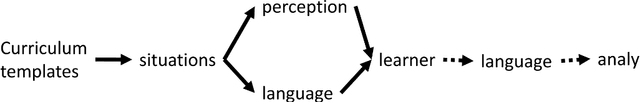

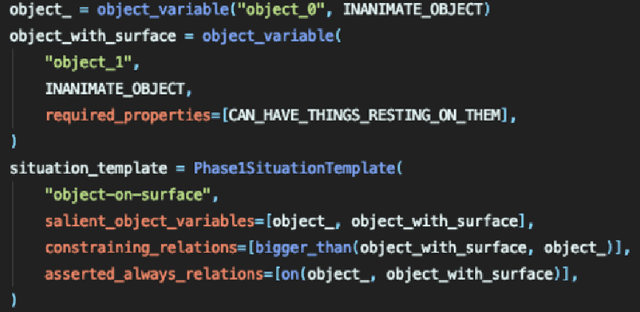

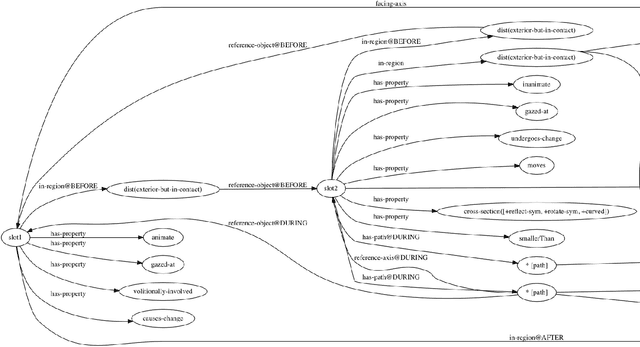

Abstract: We present ADAM, a software system for designing and running child language learning experiments in Python. The system uses a virtual world to simulate a grounded language acquisition process in which the language learner utilizes cognitively plausible learning algorithms to form perceptual and linguistic representations of the observed world. The modular nature of ADAM makes it easy to design and test different language learning curricula as well as learning algorithms. In this report, we describe the architecture of the ADAM system in detail, and illustrate its components with examples. We provide our code.

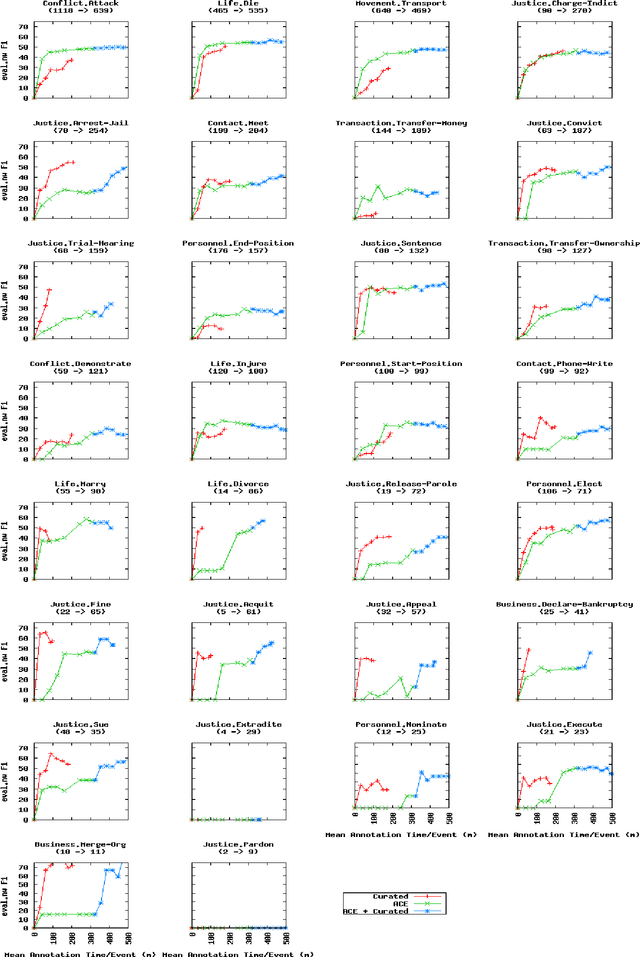

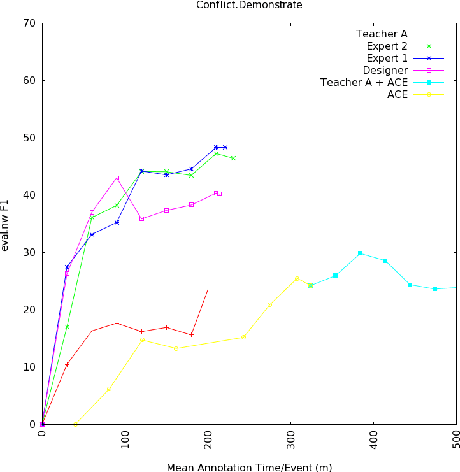

Events Beyond ACE: Curated Training for Events

Sep 24, 2018

Abstract: We explore a human-driven approach to annotation, curated training (CT), in which annotation is framed as teaching the system: the annotator uses interactive search to identify informative snippets of text to annotate, unlike traditional approaches, which either annotate preselected text or use active learning. A trained annotator performed 80 hours of CT for the thirty event types of the NIST TAC KBP Event Argument Extraction evaluation. Combining this annotation with ACE results in a 6% reduction in error, and the learning curve of CT plateaus more slowly than for full-document annotation. Three NLP researchers performed CT for one event type and showed much sharper learning curves, with all three exceeding ACE performance in less than ninety minutes, suggesting that CT can provide further benefits when the annotator deeply understands the system.
