Ruoxi Qin

Dynamic ensemble selection based on Deep Neural Network Uncertainty Estimation for Adversarial Robustness

Aug 01, 2023

Abstract:The deep neural network has attained significant efficiency in image recognition. However, it has vulnerable recognition robustness under extensive data uncertainty in practical applications. The uncertainty is attributed to the inevitable ambient noise and, more importantly, the possible adversarial attack. Dynamic methods can effectively improve the defense initiative in the arms race of attack and defense of adversarial examples. Different from the previous dynamic method depend on input or decision, this work explore the dynamic attributes in model level through dynamic ensemble selection technology to further protect the model from white-box attacks and improve the robustness. Specifically, in training phase the Dirichlet distribution is apply as prior of sub-models' predictive distribution, and the diversity constraint in parameter space is introduced under the lightweight sub-models to construct alternative ensembel model spaces. In test phase, the certain sub-models are dynamically selected based on their rank of uncertainty value for the final prediction to ensure the majority accurate principle in ensemble robustness and accuracy. Compared with the previous dynamic method and staic adversarial traning model, the presented approach can achieve significant robustness results without damaging accuracy by combining dynamics and diversity property.

Dynamic Stochastic Ensemble with Adversarial Robust Lottery Ticket Subnetworks

Oct 06, 2022

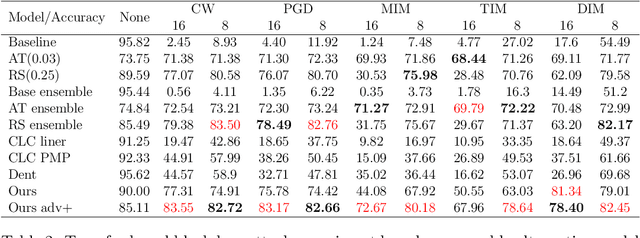

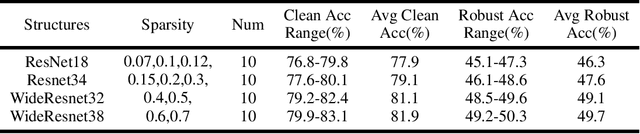

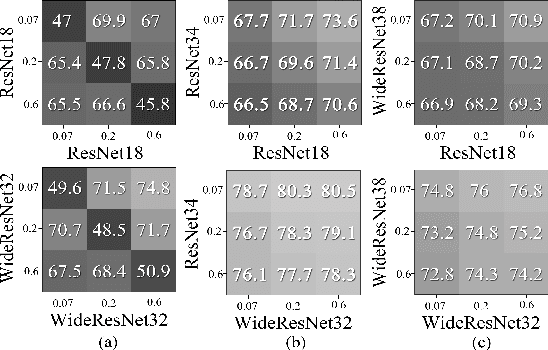

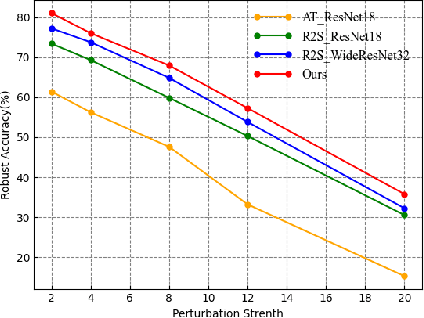

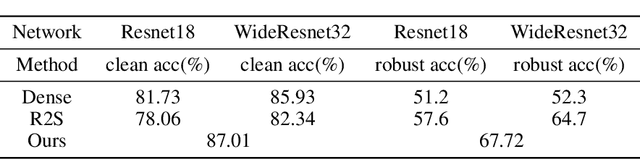

Abstract:Adversarial attacks are considered the intrinsic vulnerability of CNNs. Defense strategies designed for attacks have been stuck in the adversarial attack-defense arms race, reflecting the imbalance between attack and defense. Dynamic Defense Framework (DDF) recently changed the passive safety status quo based on the stochastic ensemble model. The diversity of subnetworks, an essential concern in the DDF, can be effectively evaluated by the adversarial transferability between different networks. Inspired by the poor adversarial transferability between subnetworks of scratch tickets with various remaining ratios, we propose a method to realize the dynamic stochastic ensemble defense strategy. We discover the adversarial transferable diversity between robust lottery ticket subnetworks drawn from different basic structures and sparsity. The experimental results suggest that our method achieves better robust and clean recognition accuracy by adversarial transferable diversity, which would decrease the reliability of attacks.

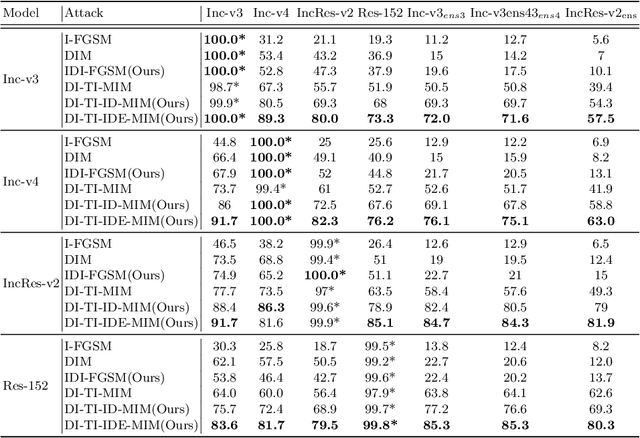

Improving the Transferability of Adversarial Examples with New Iteration Framework and Input Dropout

Jun 22, 2021

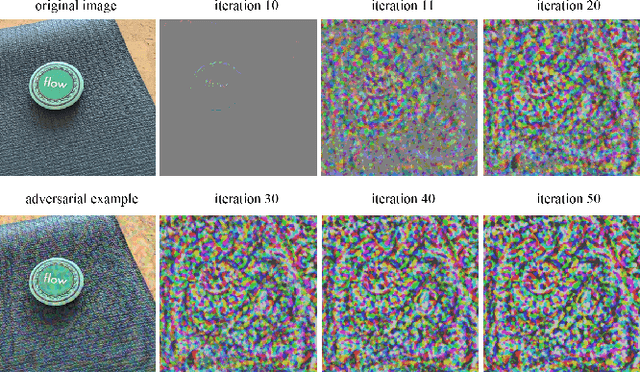

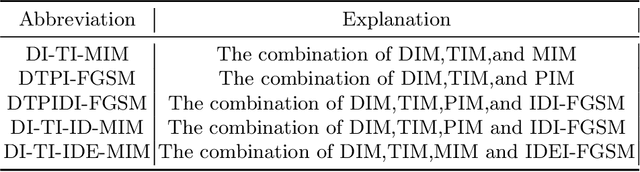

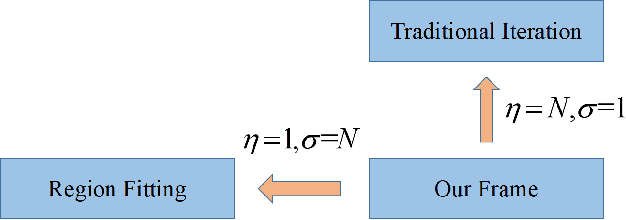

Abstract:Deep neural networks(DNNs) is vulnerable to be attacked by adversarial examples. Black-box attack is the most threatening attack. At present, black-box attack methods mainly adopt gradient-based iterative attack methods, which usually limit the relationship between the iteration step size, the number of iterations, and the maximum perturbation. In this paper, we propose a new gradient iteration framework, which redefines the relationship between the above three. Under this framework, we easily improve the attack success rate of DI-TI-MIM. In addition, we propose a gradient iterative attack method based on input dropout, which can be well combined with our framework. We further propose a multi dropout rate version of this method. Experimental results show that our best method can achieve attack success rate of 96.2\% for defense model on average, which is higher than the state-of-the-art gradient-based attacks.

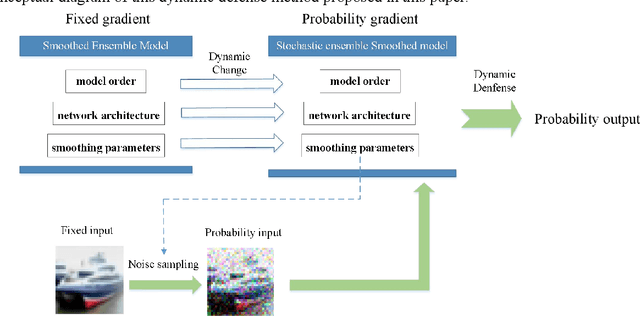

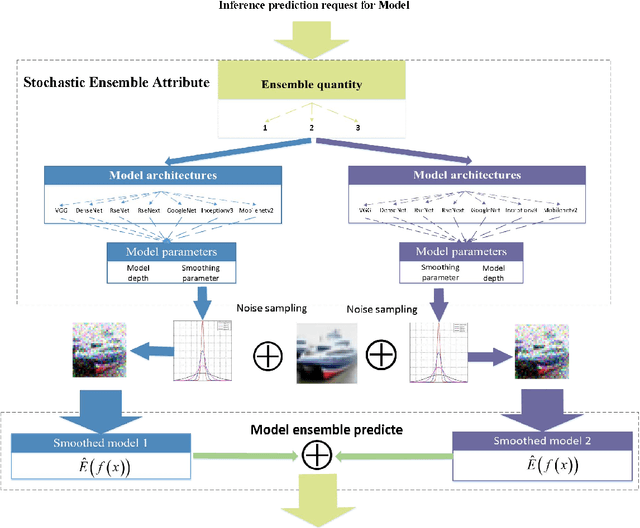

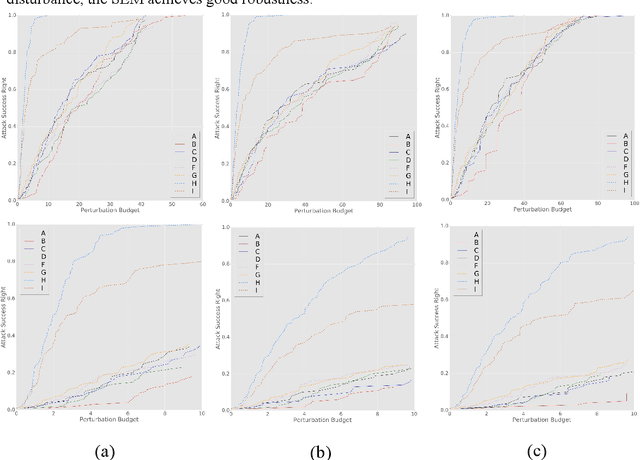

Dynamic Defense Approach for Adversarial Robustness in Deep Neural Networks via Stochastic Ensemble Smoothed Model

May 06, 2021

Abstract:Deep neural networks have been shown to suffer from critical vulnerabilities under adversarial attacks. This phenomenon stimulated the creation of different attack and defense strategies similar to those adopted in cyberspace security. The dependence of such strategies on attack and defense mechanisms makes the associated algorithms on both sides appear as closely reciprocating processes. The defense strategies are particularly passive in these processes, and enhancing initiative of such strategies can be an effective way to get out of this arms race. Inspired by the dynamic defense approach in cyberspace, this paper builds upon stochastic ensemble smoothing based on defense method of random smoothing and model ensemble. Proposed method employs network architecture and smoothing parameters as ensemble attributes, and dynamically change attribute-based ensemble model before every inference prediction request. The proposed method handles the extreme transferability and vulnerability of ensemble models under white-box attacks. Experimental comparison of ASR-vs-distortion curves with different attack scenarios shows that even the attacker with the highest attack capability cannot easily exceed the attack success rate associated with the ensemble smoothed model, especially under untargeted attacks.

Cycle-Consistent Adversarial GAN: the integration of adversarial attack and defense

Apr 12, 2019

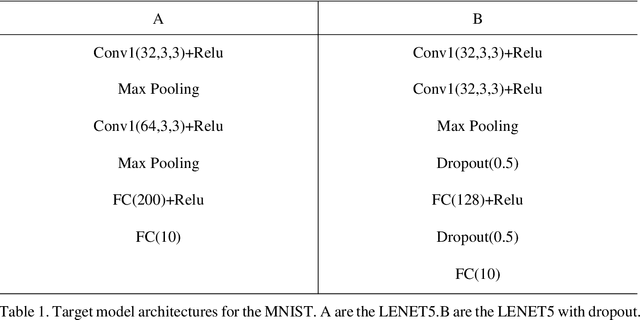

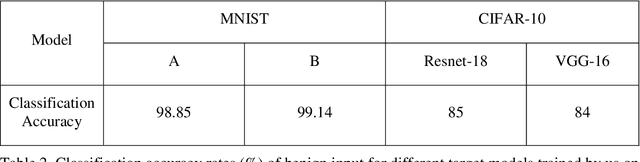

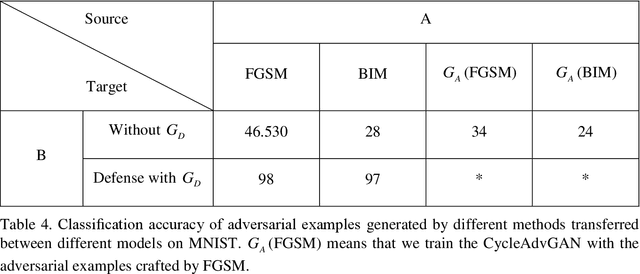

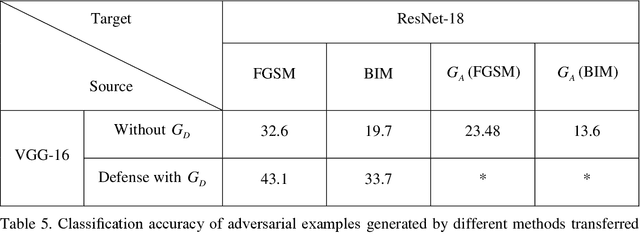

Abstract:In image classification of deep learning, adversarial examples where inputs intended to add small magnitude perturbations may mislead deep neural networks (DNNs) to incorrect results, which means DNNs are vulnerable to them. Different attack and defense strategies have been proposed to better research the mechanism of deep learning. However, those research in these networks are only for one aspect, either an attack or a defense, not considering that attacks and defenses should be interdependent and mutually reinforcing, just like the relationship between spears and shields. In this paper, we propose Cycle-Consistent Adversarial GAN (CycleAdvGAN) to generate adversarial examples, which can learn and approximate the distribution of original instances and adversarial examples. For CycleAdvGAN, once the Generator and are trained, can generate adversarial perturbations efficiently for any instance, so as to make DNNs predict wrong, and recovery adversarial examples to clean instances, so as to make DNNs predict correct. We apply CycleAdvGAN under semi-white box and black-box settings on two public datasets MNIST and CIFAR10. Using the extensive experiments, we show that our method has achieved the state-of-the-art adversarial attack method and also efficiently improve the defense ability, which make the integration of adversarial attack and defense come true. In additional, it has improved attack effect only trained on the adversarial dataset generated by any kind of adversarial attack.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge