Rundong Ge

Vision-based Relative Detection and Tracking for Teams of Micro Aerial Vehicles

Jul 17, 2022

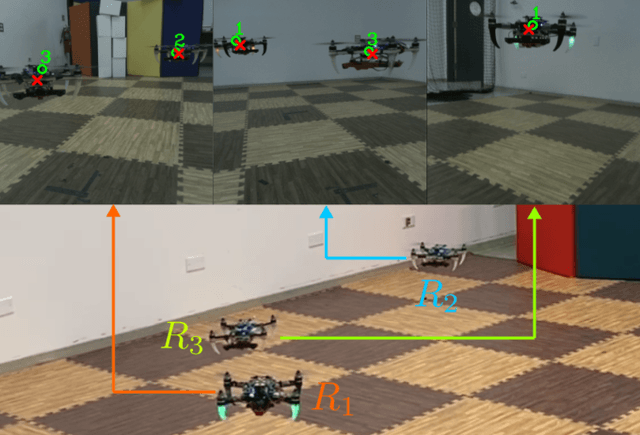

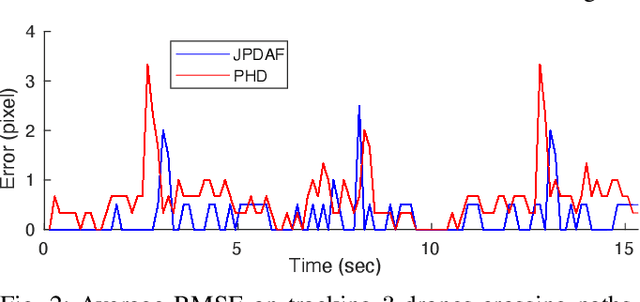

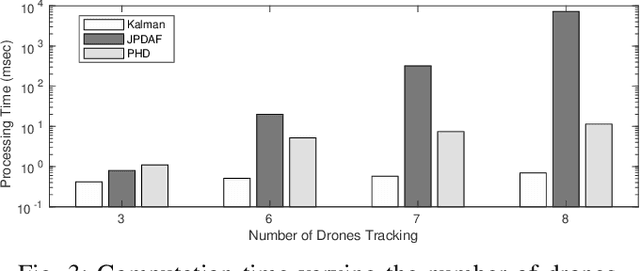

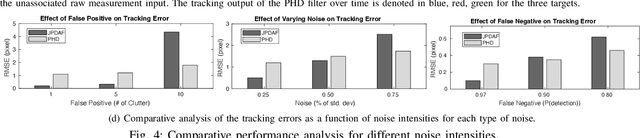

Abstract: In this paper, we address the vision-based detection and tracking of multiple aerial vehicles using a single camera and an Inertial Measurement Unit (IMU), as well as the corresponding perception consensus problem (i.e., unique and identical IDs across all observing agents). We design several vision-based decentralized Bayesian multi-tracking filtering strategies to resolve the association between the incoming unsorted measurements obtained by a visual detector algorithm and the tracked agents. We compare their accuracy in different operating conditions as well as their scalability with the number of agents in the team. This analysis provides useful insights into the most appropriate design choice for the given task. We further show that the proposed perception and inference pipeline, which includes a Deep Neural Network (DNN) as the visual target detector, is lightweight and can run on board Size, Weight, and Power (SWaP) constrained robots concurrently with control and planning. Experimental results show effective tracking of multiple drones in various challenging scenarios, such as heavy occlusions.
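To make the association problem in the abstract above concrete, the following is a minimal sketch of matching unsorted detections to tracked agents. It is not the paper's method: the abstract describes Bayesian multi-tracking filters, whereas this sketch swaps in a much simpler greedy nearest-neighbor rule with a distance gate. The function name `associate`, the pixel-coordinate inputs, the agent IDs, and the `gate` value are all hypothetical and chosen only for illustration.

```python
import math

def associate(tracks, detections, gate=50.0):
    """Greedy nearest-neighbor association (illustrative stand-in only).

    tracks: dict mapping a track ID to its predicted (x, y) image position.
    detections: unsorted list of (x, y) detections from a visual detector.
    gate: hypothetical maximum pixel distance for a valid match.
    Returns a dict mapping track ID -> index of the matched detection.
    """
    # Enumerate every track/detection pair, closest pairs first.
    pairs = sorted(
        (math.dist(position, detection), track_id, det_idx)
        for track_id, position in tracks.items()
        for det_idx, detection in enumerate(detections)
    )
    assignment, used = {}, set()
    for distance, track_id, det_idx in pairs:
        if distance > gate:
            break  # all remaining pairs are farther away than the gate
        if track_id in assignment or det_idx in used:
            continue  # each track and each detection is matched at most once
        assignment[track_id] = det_idx
        used.add(det_idx)
    return assignment

# Two tracked agents and three unsorted detections (made-up numbers).
tracks = {"mav_a": (100.0, 120.0), "mav_b": (300.0, 80.0)}
detections = [(305.0, 78.0), (98.0, 125.0), (600.0, 400.0)]
matches = associate(tracks, detections)
```

The third detection lies outside the gate of both tracks and stays unmatched. A probabilistic filter would instead weigh association hypotheses rather than commit to the single nearest detection.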

VIPose: Real-time Visual-Inertial 6D Object Pose Tracking

Aug 01, 2021

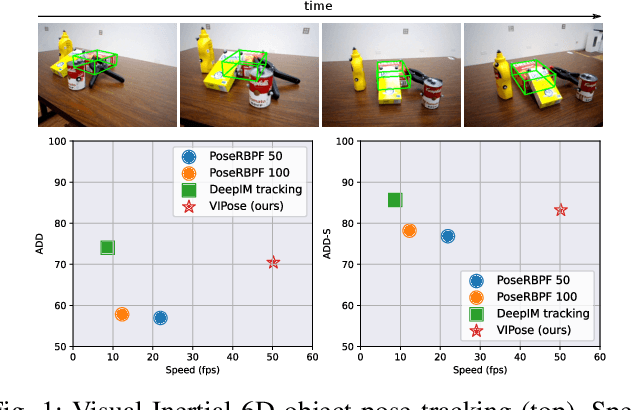

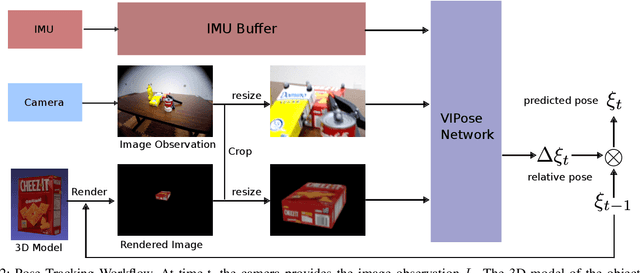

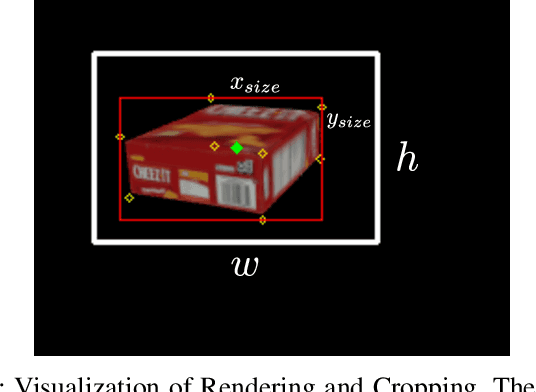

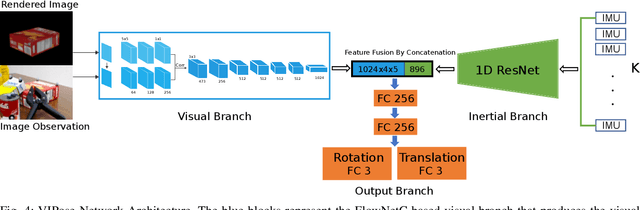

Abstract: Estimating the 6D pose of objects is beneficial for robotics tasks such as transportation, autonomous navigation, and manipulation, as well as in scenarios beyond robotics such as virtual and augmented reality. Compared with single-image pose estimation, pose tracking takes into account the temporal information across multiple frames to overcome possible detection inconsistencies and to improve pose estimation efficiency. In this work, we introduce a novel Deep Neural Network (DNN) called VIPose that combines inertial and camera data to address the object pose tracking problem in real time. The key contribution is the design of a novel DNN architecture that fuses visual and inertial features to predict the objects' relative 6D pose between consecutive image frames. The overall 6D pose is then estimated by consecutively combining relative poses. Our approach shows remarkable pose estimation results for heavily occluded objects, which are well known to be very challenging for existing state-of-the-art solutions. The effectiveness of the proposed approach is validated on a new dataset called VIYCB, which contains RGB images, IMU data, and accurate 6D pose annotations created with an automated labeling technique. The approach achieves accuracy comparable to state-of-the-art techniques, with the additional benefit of running in real time.
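The step of "consecutively combining relative poses" can be illustrated with a short sketch. It assumes poses are represented as 4x4 homogeneous transforms and that each relative pose left-multiplies the previous absolute pose; the abstract does not state the representation or the multiplication convention, so both are assumptions. The helpers `make_pose` and `accumulate_poses` are hypothetical names, and the relative poses here are hand-written values standing in for network predictions.

```python
import numpy as np

def make_pose(rotation_z_rad, translation):
    """Build a 4x4 homogeneous transform: rotation about z plus a translation."""
    c, s = np.cos(rotation_z_rad), np.sin(rotation_z_rad)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = translation
    return T

def accumulate_poses(initial_pose, relative_poses):
    """Chain frame-to-frame relative poses into absolute poses.

    Assumed convention: T_k = delta_k @ T_{k-1}.
    """
    poses = [initial_pose]
    for delta in relative_poses:
        poses.append(delta @ poses[-1])
    return poses

# Object starts 1 m in front of the camera; two identical relative motions
# (45 degrees about z and 0.1 m along x) stand in for predicted relative poses.
initial = make_pose(0.0, [0.0, 0.0, 1.0])
deltas = [make_pose(np.pi / 4, [0.1, 0.0, 0.0])] * 2
trajectory = accumulate_poses(initial, deltas)
final_pose = trajectory[-1]
```

Because each absolute pose is built from the previous one, any error in a single relative pose carries forward into every later pose.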
