Rui Fukushima

Comparing Generalization in Learning with Limited Numbers of Exemplars: Transformer vs. RNN in Attractor Dynamics

Nov 15, 2023

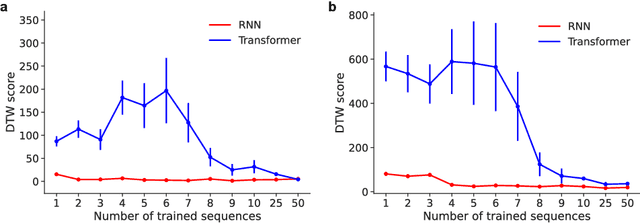

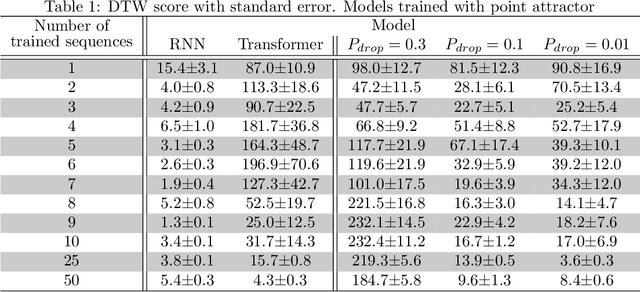

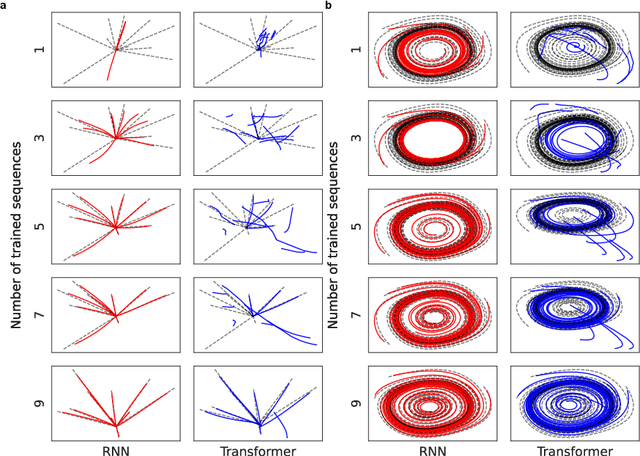

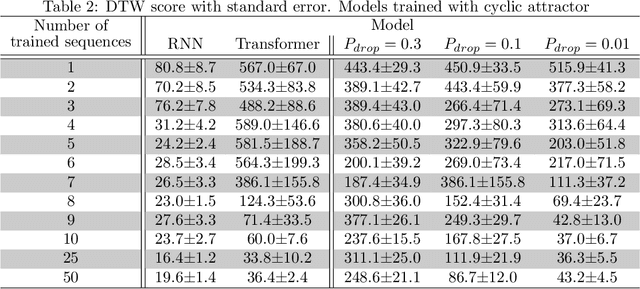

Abstract:ChatGPT, a widely-recognized large language model (LLM), has recently gained substantial attention for its performance scaling, attributed to the billions of web-sourced natural language sentences used for training. Its underlying architecture, Transformer, has found applications across diverse fields, including video, audio signals, and robotic movement. %The crucial question this raises concerns the Transformer's generalization-in-learning (GIL) capacity. However, this raises a crucial question about Transformer's generalization in learning (GIL) capacity. Is ChatGPT's success chiefly due to the vast dataset used for training, or is there more to the story? To investigate this, we compared Transformer's GIL capabilities with those of a traditional Recurrent Neural Network (RNN) in tasks involving attractor dynamics learning. For performance evaluation, the Dynamic Time Warping (DTW) method has been employed. Our simulation results suggest that under conditions of limited data availability, Transformer's GIL abilities are markedly inferior to those of RNN.

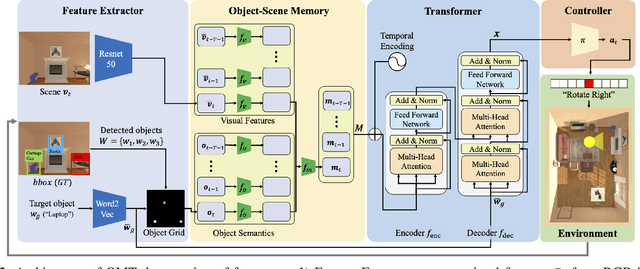

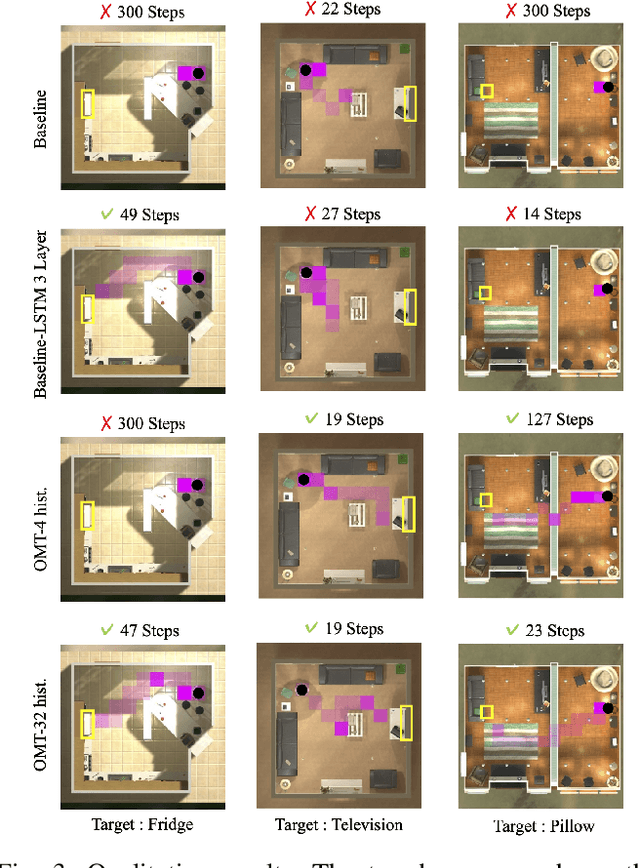

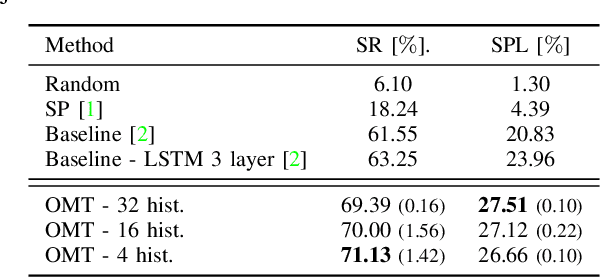

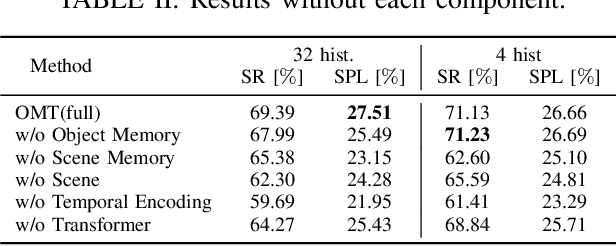

Object Memory Transformer for Object Goal Navigation

Mar 24, 2022

Abstract:This paper presents a reinforcement learning method for object goal navigation (ObjNav) where an agent navigates in 3D indoor environments to reach a target object based on long-term observations of objects and scenes. To this end, we propose Object Memory Transformer (OMT) that consists of two key ideas: 1) Object-Scene Memory (OSM) that enables to store long-term scenes and object semantics, and 2) Transformer that attends to salient objects in the sequence of previously observed scenes and objects stored in OSM. This mechanism allows the agent to efficiently navigate in the indoor environment without prior knowledge about the environments, such as topological maps or 3D meshes. To the best of our knowledge, this is the first work that uses a long-term memory of object semantics in a goal-oriented navigation task. Experimental results conducted on the AI2-THOR dataset show that OMT outperforms previous approaches in navigating in unknown environments. In particular, we show that utilizing the long-term object semantics information improves the efficiency of navigation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge